Pinned Tweet

Observations_Suggestions

901 posts


@Observe_Suggest

Joined in 2007. When I was in fourth grade my mother and I put a wall sized photograph of the Apollo lunar lander on the moon with the earth on the horizon.

Nomad · Joined June 2024

437 Following · 63 Followers

@ElonClipsX @elonmusk This makes me sad.

I admired SpaceX and Elon Musk so much back then.

Now I'm at zero trust, not even a tiny bit of entropy, that he and his team care about actual human beings as opposed to humanity as an abstraction.

Not the best foundation to battle existential risk.


Elon Musk: Even if civilization has just a 1% chance of being annihilated, we should back up the biosphere on another planet.

“Sometimes people are puzzled as to why we're doing it. The reason we're doing it is to make life, consciousness, multiplanetary. So as to preserve the future of civilization and consciousness and life as we know it.

There's always some chance of something going wrong on Earth. Overall, I'm like fairly optimistic about Earth. Let's just say there's a 1% chance, for argument's sake of life as we know it and consciousness being annihilated on Earth. I think it's still, even if there's just a 1% chance of civilizational annihilation, you'd want to protect against that by having a second planet back up the biosphere effectively and ensure the continuity of life and consciousness.

This is the first time in the 4.5-billion-year history of Earth that it's been possible to do so. So we should take advantage of this window while it is still open. And you know, we don't want to be complacent and assume that there will be this constant upward trajectory of civilization. Hopefully that happens, but it might not. And so this is about protecting the future of life itself.”

Interview with Sandy Munro, May 27, 2025


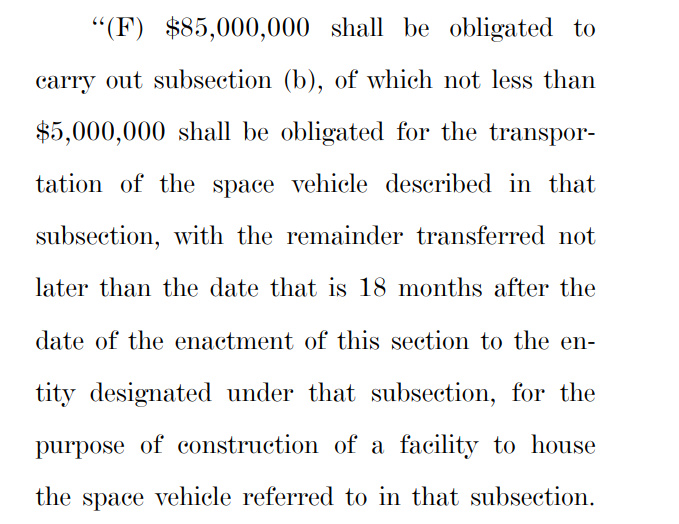

How much do you think Blue Origin's New Shepard capsules cost?

Do you think for $85 million NASA could buy the NS-33 capsule, ship it to the Smithsonian and then ship it to Texas for Ted Cruz?

I mean, I know a lot of you don't like to refer to Katy Perry as an astronaut, but if you did, then the capsule would qualify under the terms of the language in the BBB.

And Ted Cruz would get *exactly* what he asked for.


@wholemars I miss the optimism of the whole earth catalog.


I’m beyond devastated by the passing of my dearest friend Alan Hassenfeld—the visionary Hasbro CEO, early Salesforce board member & founding force behind the Salesforce Foundation. Alan was always a Yoda to me. A champion of our core values, children’s health everywhere, and boundless joy. His warmth, humor, and global generosity improved the world. We’ll miss him deeply. May the One who brings peace bring peace to all. 💔


When working with LLMs I am used to starting "New Conversation" for each request.

But there is also the polar opposite approach of keeping one giant conversation going forever. The standard approach can still choose to use a Memory tool to write things down in between conversations (e.g. ChatGPT does so), so the "One Thread" approach can be seen as the extreme special case of using memory always and for everything.

The other day I came across someone saying that their conversation with Grok (which was free to them at the time) has now grown way too long for them to switch to ChatGPT, i.e. it functions like a moat hah.

LLMs are rapidly growing in the allowed maximum context length *in principle*, and it's clear that this might allow the LLM to have a lot more context and knowledge of you, but there are some caveats. A few of the major ones, as examples:

- Speed. A giant context window will cost more compute and will be slower.

- Ability. Just because you can feed in all those tokens doesn't mean that they can also be manipulated effectively by the LLM's attention and its in-context-learning mechanism for problem solving (the simplest demonstration is the "needle in the haystack" eval).

- Signal to noise. Too many tokens fighting for attention may *decrease* performance due to being too "distracting", diffusing attention too broadly and decreasing the signal-to-noise ratio in the features.

- Data; i.e. train - test data mismatch. Most of the training data in the finetuning conversation is likely ~short. Indeed, a large fraction of it in academic datasets is often single-turn (one single question -> answer). One giant conversation forces the LLM into a new data distribution it hasn't seen that much of during training. This is in large part because...

- Data labeling. Keep in mind that LLMs still primarily and quite fundamentally rely on human supervision. A human labeler (or an engineer) can understand a short conversation and write optimal responses or rank them, or inspect whether an LLM judge is getting things right. But things grind to a halt with giant conversations. Who is supposed to write or inspect an alleged "optimal response" for a conversation of a few hundred thousand tokens?

Certainly, it's not clear if an LLM should have a "New Conversation" button at all in the long run. It feels a bit like an internal implementation detail that is surfaced to the user for developer convenience and for the time being. And that the right solution is a very well-implemented memory feature, along the lines of active, agentic context management. Something I haven't really seen at all so far.
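One way to picture the middle ground between "New Conversation" and "One Thread" is a single thread that only ever sends a bounded slice of itself to the model. The sketch below is a toy under stated assumptions, not how any product implements memory: the `Thread` class, its `budget` field, and the use of whitespace-separated words as a stand-in for tokens are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Thread:
    """A single never-ending conversation with a bounded request context."""

    budget: int  # max "tokens" per request (assumption: 1 word = 1 token)
    notes: list[str] = field(default_factory=list)  # long-lived memory notes
    turns: list[str] = field(default_factory=list)  # full conversation history

    def remember(self, note: str) -> None:
        # Hypothetical memory write: a note that is always included.
        self.notes.append(note)

    def say(self, text: str) -> None:
        # Append a turn to the (unbounded) history.
        self.turns.append(text)

    def context(self) -> list[str]:
        """Return all notes, then as many of the newest turns as fit the budget."""
        used = sum(len(n.split()) for n in self.notes)
        recent: list[str] = []
        for turn in reversed(self.turns):
            cost = len(turn.split())
            if used + cost > self.budget:
                break  # older turns are dropped from this request
            recent.append(turn)
            used += cost
        return self.notes + recent[::-1]


t = Thread(budget=6)
t.remember("likes rockets")
for turn in ["a b c", "d e", "f g"]:
    t.say(turn)
print(t.context())
```

With a budget of 6, the oldest turn no longer fits, so only the note and the two newest turns are sent. A real system would presumably decide what to keep far more carefully than "newest first"; that decision is the agentic part the post says is still missing.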

Anyway curious to poll if people have tried One Thread and what the word is.


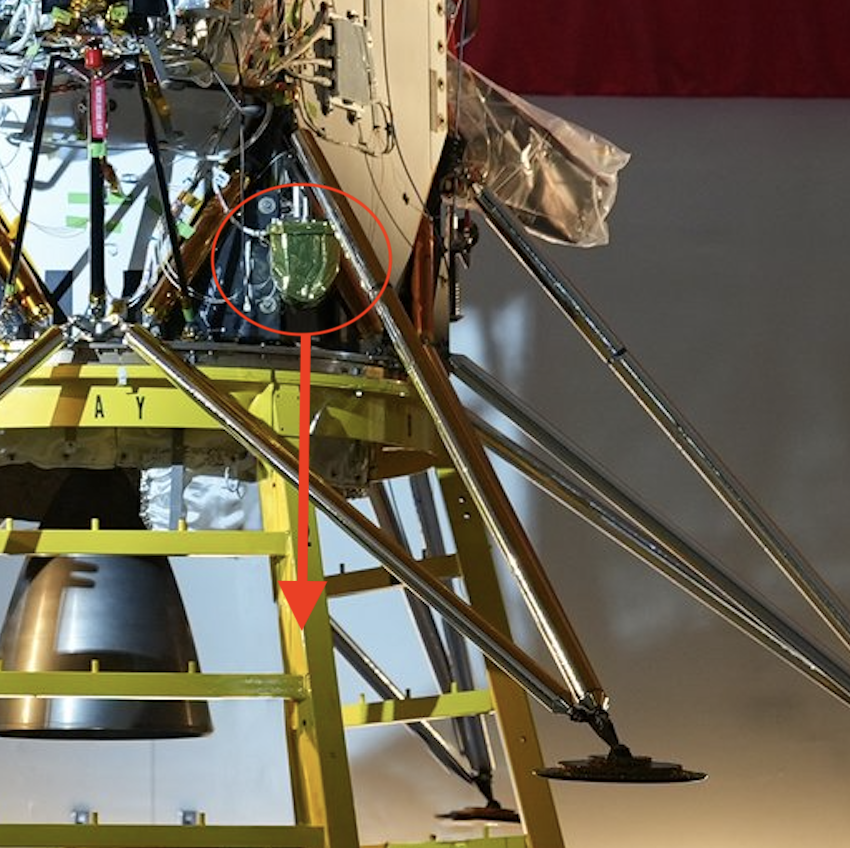

That image from Yaoki (@yaoki_space_g) tells us something that Intuitive Machines neglected to mention. Yaoki took the photo from its housing, unable to deploy. Camera looking down, it shouldn't be able to see this landing leg like this.

So IM-2 broke a leg during landing.

(or the Yaoki press release wasn't translated correctly and the rover did manage to deploy somehow)


@00aleph00 Cool. I'm going to check this out.


Here's a simple fact that gave me pause.

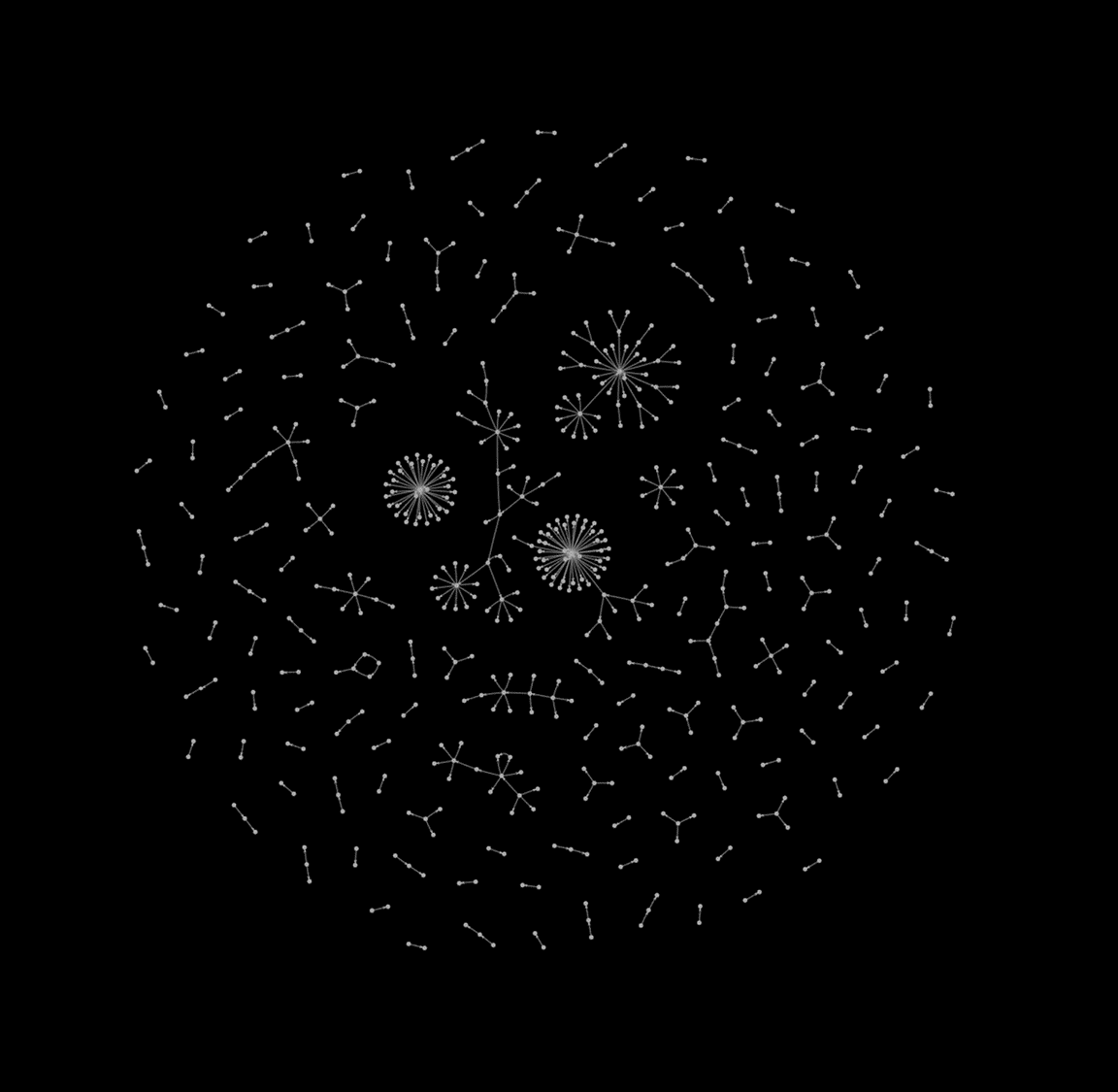

Below I've drawn two graphs:

y^2 = x^3 + 1

AND

y^2 = x^3 + 2

The equations are almost the same.

The graphs look almost the same.

But there's a crucial difference!

The first curve has FINITELY many rational points (in red).

But the second curve has INFINITELY many rational points.

Just by changing 1 --> 2.

Elliptic curves, explained: a 🧵1/n
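The contrast between the two curves can be explored with the standard chord-and-tangent addition law. The sketch below is an illustration, not a proof: it assumes the starting points (2, 3) on y^2 = x^3 + 1 and (-1, 1) on y^2 = x^3 + 2, which are easy to check by hand, and the helper names `add` and `multiples` are made up for this example.

```python
from fractions import Fraction


def add(P, Q):
    """Add two points on y^2 = x^3 + b; None is the point at infinity.

    The constant b does not appear in the formulas because the curve
    has no x term (a = 0 in y^2 = x^3 + a*x + b).
    """
    if P is None:
        return Q
    if Q is None:
        return P
    (x1, y1), (x2, y2) = P, Q
    if x1 == x2 and y1 == -y2:
        return None  # vertical line: P + (-P) = infinity
    if P == Q:
        s = 3 * x1 * x1 / (2 * y1)  # tangent slope
    else:
        s = (y2 - y1) / (x2 - x1)  # chord slope
    x3 = s * s - x1 - x2
    y3 = s * (x1 - x3) - y1
    return (x3, y3)


def multiples(P, n):
    """Return [P, 2P, ..., nP]."""
    out, Q = [], None
    for _ in range(n):
        Q = add(Q, P)
        out.append(Q)
    return out


# y^2 = x^3 + 1: the multiples of (2, 3) cycle back to infinity after 6 steps.
print(multiples((Fraction(2), Fraction(3)), 6))

# y^2 = x^3 + 2: the multiples of (-1, 1) keep producing new points,
# starting with 2P = (17/4, -71/8).
for point in multiples((Fraction(-1), Fraction(1)), 4):
    print(point)
```

On the first curve the multiples of (2, 3) run through (0, 1), (-1, 0), (0, -1), (2, -3) and then hit the point at infinity. On the second, 2P already has non-integer coordinates; by the Nagell-Lutz theorem, points of finite order on such a curve have integer coordinates, so (-1, 1) has infinite order and its multiples never repeat.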


@CarlaDigru38122 @elonmusk Thanks for sharing. He states a truism that provides insight. It's also true that collectively government handles issues well.

Imagine the impact of senior citizens missing mortgage payments if social security income doesn't show up. Keeping contracts keeps us stable.


@elonmusk There are those who pass through and impact our lives greatly, and when they are gone the energetic loss is still felt. It is what they left behind through their actions that influences and moves us forward today.


@elonmusk Why is this in my news feed? I logged on for conversation about active lunar missions.


An investigation has found 5 ActBlue-funded groups responsible for Tesla “protests”: Troublemakers, Disruption Project, Rise & Resist, Indivisible Project and Democratic Socialists of America.

ActBlue funders include George Soros, Reid Hoffman, Herbert Sandler, Patricia Bauman, and Leah Hunt-Hendrix.

ActBlue is currently under investigation for allowing foreign and illegal donations in criminal violation of campaign finance regulations. This week, 7 ActBlue senior officials resigned, including the associate general counsel.

If you know anything about this, please post in replies. Thanks, Elon.


Observations_Suggestions retweeted

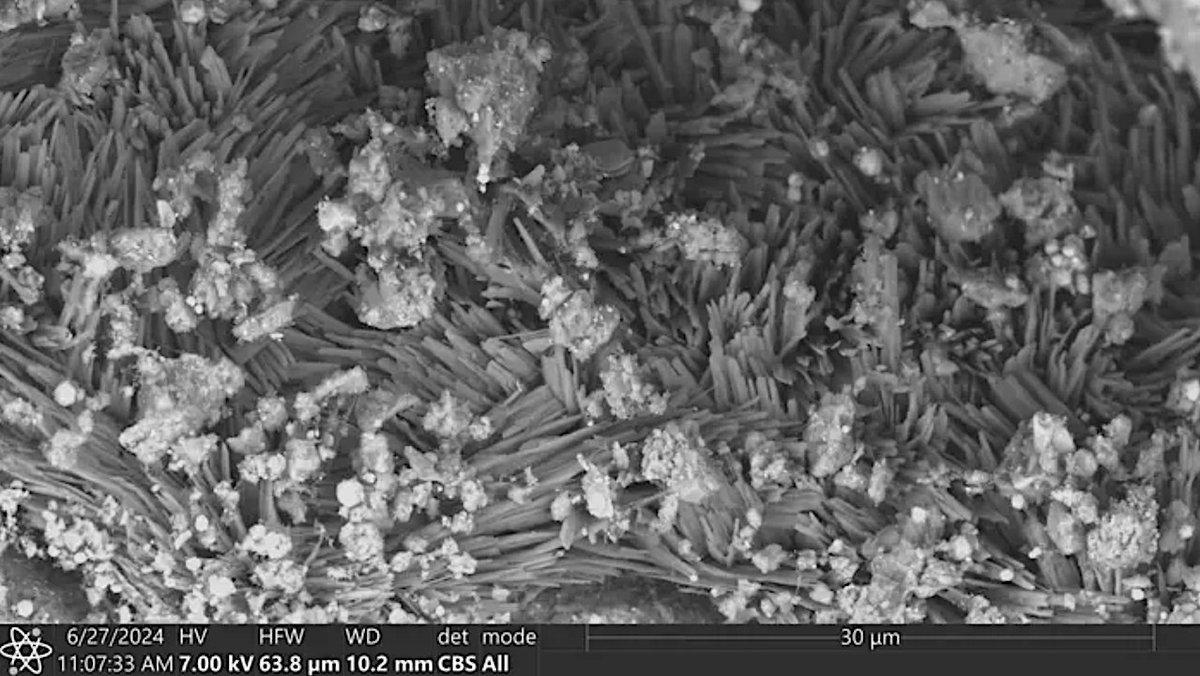

Traces Of Ancient Brine Discovered On Asteroid Bennu Contain Minerals Crucial To Life

astrobiology.com/2025/01/traces… #Astrobiology #Astrochemistry #Astrogeology #Biochemistry @OSIRISREx


Observations_Suggestions retweeted

@fchollet it's all demons which would like shouting in a pandemonium but eru ilúvatar told them to sing a song.

eru placed Gandalf in the PFC to mediate oscillatory orchestration with beta bursts.


Split-brain patients, with severed corpus callosum, can simultaneously believe contradictory things. One hemisphere can genuinely assert a belief (say, "I saw A") while the other asserts the opposite ("No, it was B")

This suggests that the unified situation models your consciousness inhabits are the result of an emergent negotiation between multiple local threads.


@fchollet is this to say that we need adversarial dualism in models to reach AGI? the stupidest example is GANs.


@nerdalert @aryalpranays @fchollet Is the intersection of these two sets empty or informative? Intersection of two fuzzy sets informs as to the ambiguity of the meaning (semantics) of the variables.

Humor intended. ~smiles


@aryalpranays @fchollet He included the reference. But I also believe that he did not include it.


Observations_Suggestions retweeted

@IntuitMachine Thanks for highlighting our work @IntuitMachine

Read the original research paper here: pubs.acs.org/doi/full/10.10…


Observations_Suggestions retweeted

@BarghoutLa76868 This is actually terrain captured by @LRO_NASA using NAC. LRO has LOLA, which uses a laser altimeter to get the elevation. Fortunately, I didn't have to calculate the slope; Barker et al. did most of the work. Elevation maps were calculated at 20 mpp.
