OpenMMLab retweeted

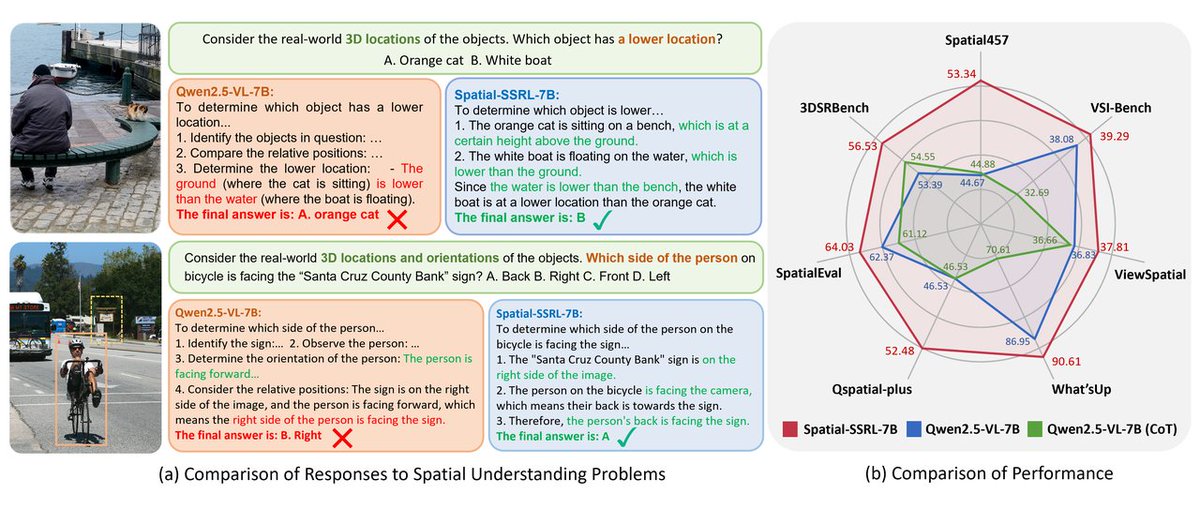

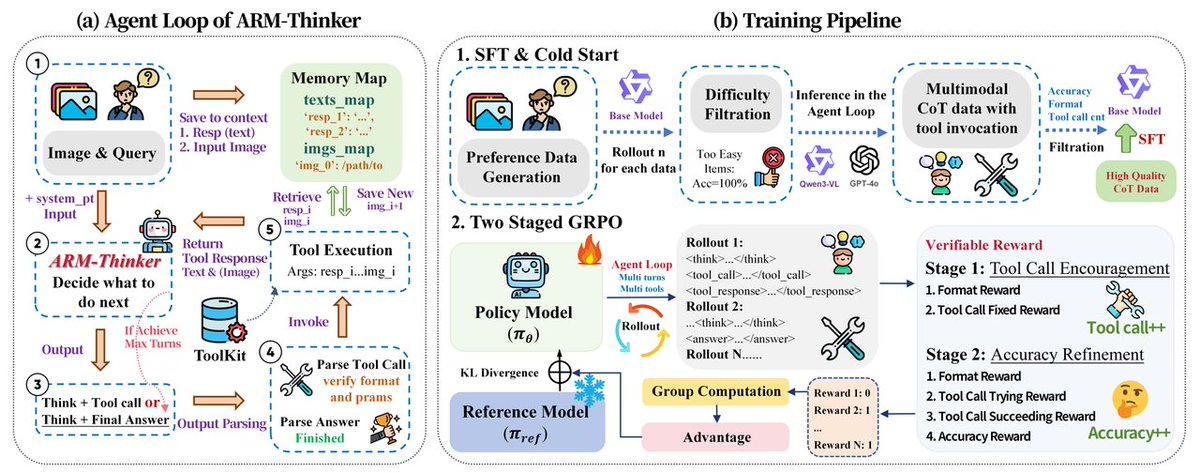

🔥Introducing ARM-Thinker, the first Agentic multimodal Reward Model that autonomously invokes external tools to ground judgments in verifiable evidence. Accepted to CVPR 2026!

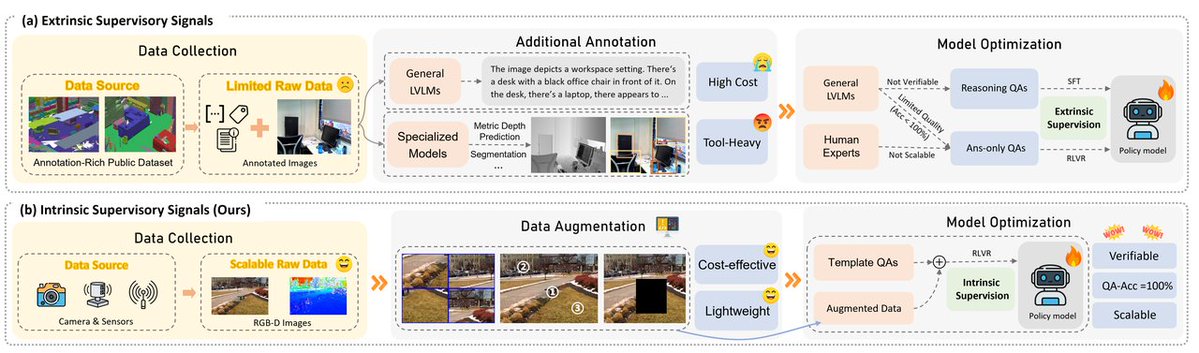

🥳Integrates three families of multimodal tools:

1⃣Image Crop & Zoom-in for fine-grained visual inspection.

2⃣Document Retrieval for multi-page evidence gathering.

3⃣Instruction-Following Validators for constraint verification.

🥳With a Think-Act-Verify loop, ARM-Thinker decides when to call these tools and grounds its final judgment in the evidence they return.
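A toy sketch of what a Think-Act-Verify pass could look like for the instruction-following case. This is not the ARM-Thinker code: `max_words_validator`, `Judgment`, and `judge` are hypothetical names, and the "think" step here is hard-coded rather than produced by a model.

```python
from dataclasses import dataclass, field
from typing import Dict, List


def max_words_validator(response: str, limit: int) -> bool:
    """Hypothetical tool: check an 'at most N words' constraint."""
    return len(response.split()) <= limit


@dataclass
class Judgment:
    preferred: str                      # name of the preferred response, or "none"
    evidence: List[str] = field(default_factory=list)


def judge(responses: Dict[str, str], word_limit: int) -> Judgment:
    evidence: List[str] = []
    passed: List[str] = []
    # Think: assume the judge has decided the length constraint must be checked.
    for name, text in responses.items():
        # Act: call the validator tool instead of estimating the length by eye.
        ok = max_words_validator(text, word_limit)
        status = "meets" if ok else "violates"
        evidence.append(f"{name} {status} the {word_limit}-word limit")
        if ok:
            passed.append(name)
    # Verify: the final preference is backed by the collected tool outputs.
    preferred = passed[0] if passed else "none"
    return Judgment(preferred=preferred, evidence=evidence)


responses = {
    "A": "A cat sits on a mat.",
    "B": "There is a small cat that is sitting quietly on a woven mat.",
}
result = judge(responses, word_limit=8)
print(result.preferred)
print(result.evidence)
```

In a real agentic reward model the tool choice and the final verdict would come from the model itself; the sketch only shows how a verdict can be tied to tool outputs rather than to an unverified impression.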

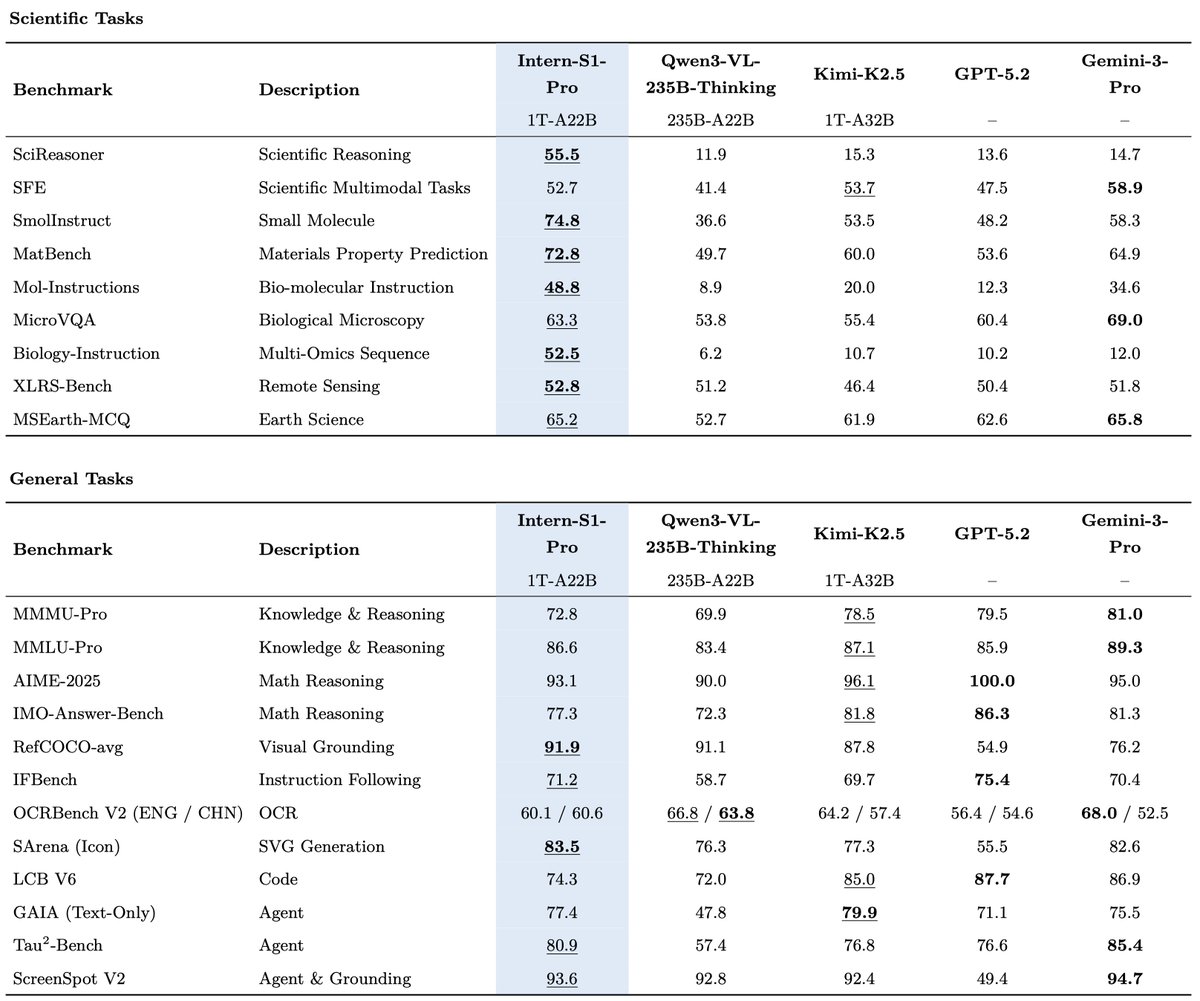

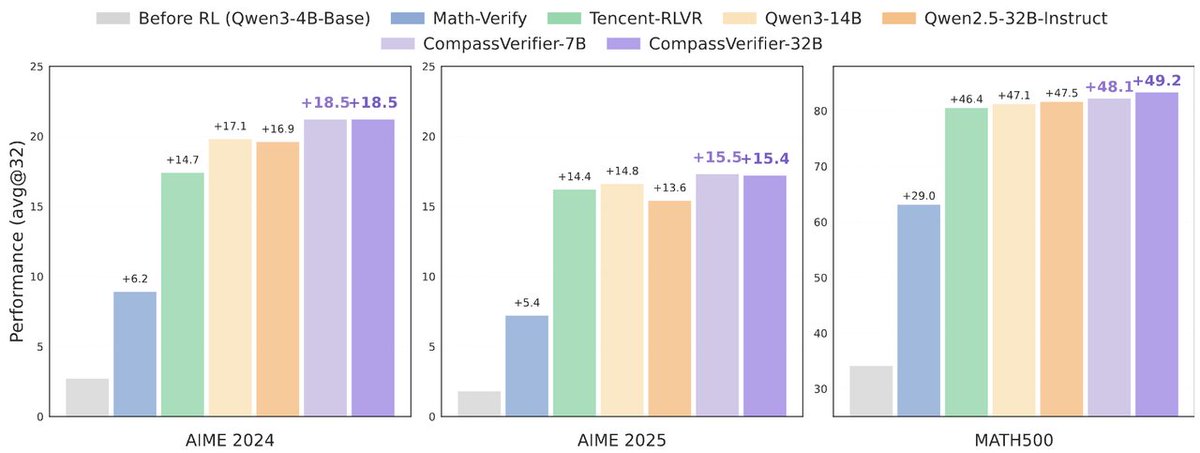

🥳Built on Qwen2.5-VL-7B with SFT + two-stage GRPO, ARM-Thinker improves multimodal reward modeling, tool-use reasoning, and multimodal math/logical reasoning.

😉+16.2% on reward modeling benchmarks (outperforming GPT-4o).

😉+9.6% on tool-use / think-with-images tasks (matching Mini-o3).

😉+4.2% on multimodal math & logical reasoning.
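For readers unfamiliar with GRPO, mentioned above: its core idea is to score each sampled response relative to the other responses in its group. A minimal sketch of that normalization follows, assuming a population standard deviation and a small epsilon for stability. The function name is illustrative, and this shows nothing about the two-stage recipe used for ARM-Thinker.

```python
from statistics import mean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize each reward against the mean and std of its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations vary
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled responses to one prompt, rewarded 1.0 if judged correct, else 0.0.
advantages = group_relative_advantages([1.0, 0.0, 1.0, 0.0])
print(advantages)
```

Responses that beat their group average get a positive advantage and the rest get a negative one, so no separate value network is needed to provide a baseline.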

🥳We also introduce ARMBench-VL, the first multimodal reward benchmark that requires tool use.

📄 Paper:

arxiv.org/abs/2512.05111

💻 Code:

github.com/InternLM/ARM-T…

🤗 Dataset: @huggingface

huggingface.co/datasets/inter…

🧪 Evaluation:

github.com/open-compass/V…
