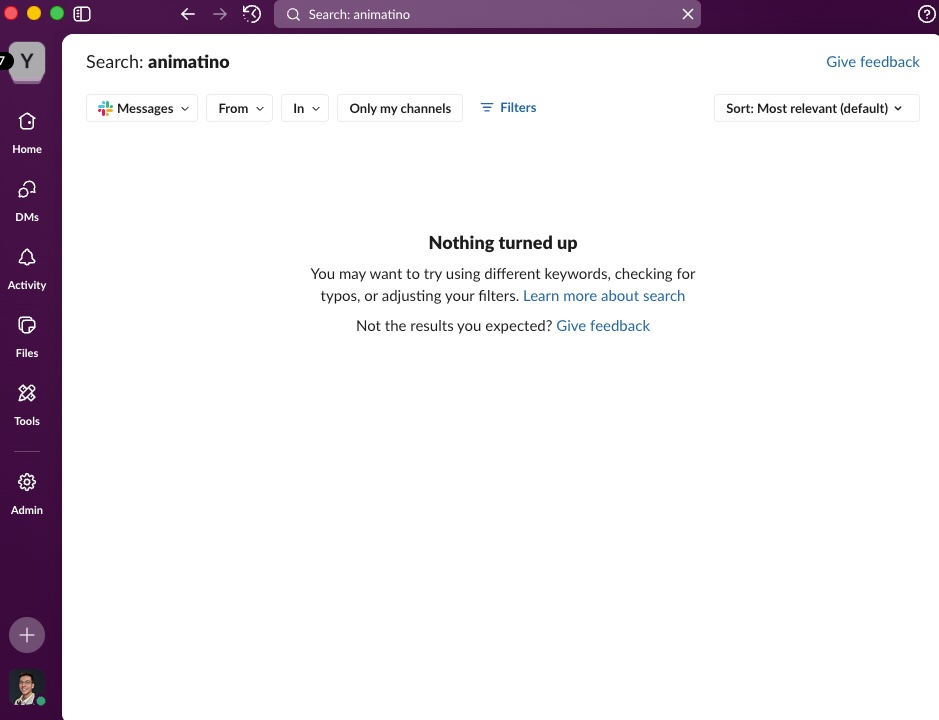

Slack search is somehow still stuck in 2010. No semantic search, no vector search, no personalization. Zero awareness of who you are, what team you're on, or what you're working on.

Just good old, raw full-text search. Type one wrong letter and you may as well have hallucinated that conversation with your PM from last Tuesday.

I know the message exists. Slack knows it exists. But we both have to pretend it's lost because I can't remember the exact phrase someone used.

They did add an AI feature that gives you some sort of summarized response… but it feels completely bolted on.

We have AI that can summarize entire codebases and write production code, but Slack still can't find a conversation from yesterday. The gap between AI capabilities and what Slack actually does is wild.
