0range Crush, CMT

@0rangeCru5h

Perception deviating from reality creates opportunity. Bull or bear, timeframes & risk mgmt matter most. Looking for delta. Not advice; it may be sarcasm.

When I built menugen ~1 year ago, I observed that the hardest part by far was not the code itself, it was the plethora of services you have to assemble like IKEA furniture to make it real, the DevOps: services, payments, auth, database, security, domain names, etc... I am really looking forward to a day where I could simply tell my agent: "build menugen" (referencing the post) and it would just work. The whole thing up to the deployed web page. The agent would have to browse a number of services, read the docs, get all the api keys, make everything work, debug it in dev, and deploy to prod. This is the actually hard part, not the code itself. Or rather, the better way to think about it is that the entire DevOps lifecycle has to become code, in addition to the necessary sensors/actuators of the CLIs/APIs with agent-native ergonomics. And there should be no need to visit web pages, click buttons, or anything like that for the human. It's easy to state, it's now just barely technically possible and expected to work maybe, but it definitely requires from-scratch re-design, work and thought. Very exciting direction!

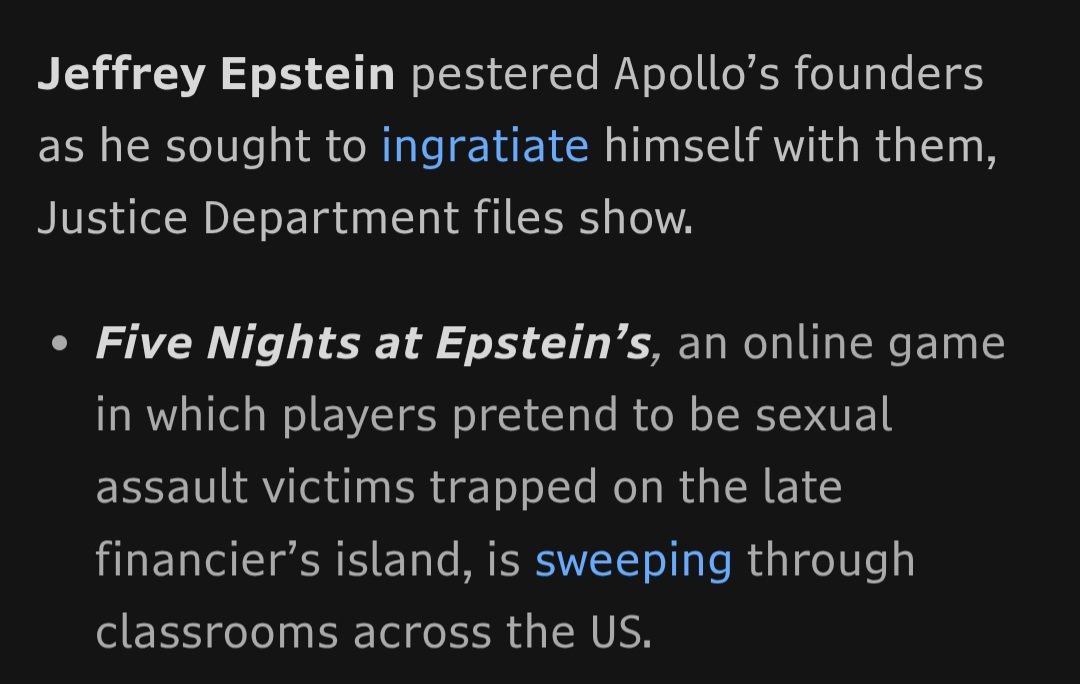

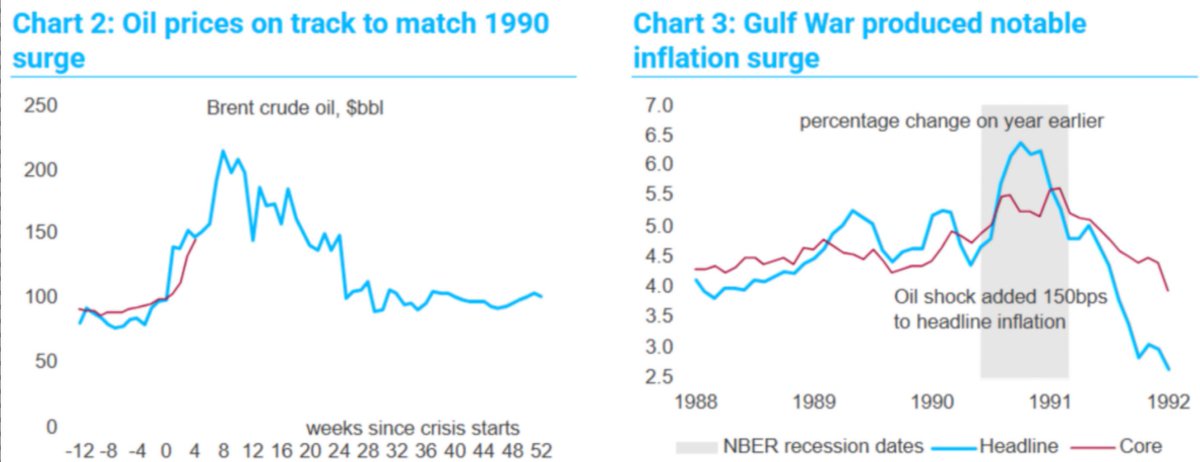

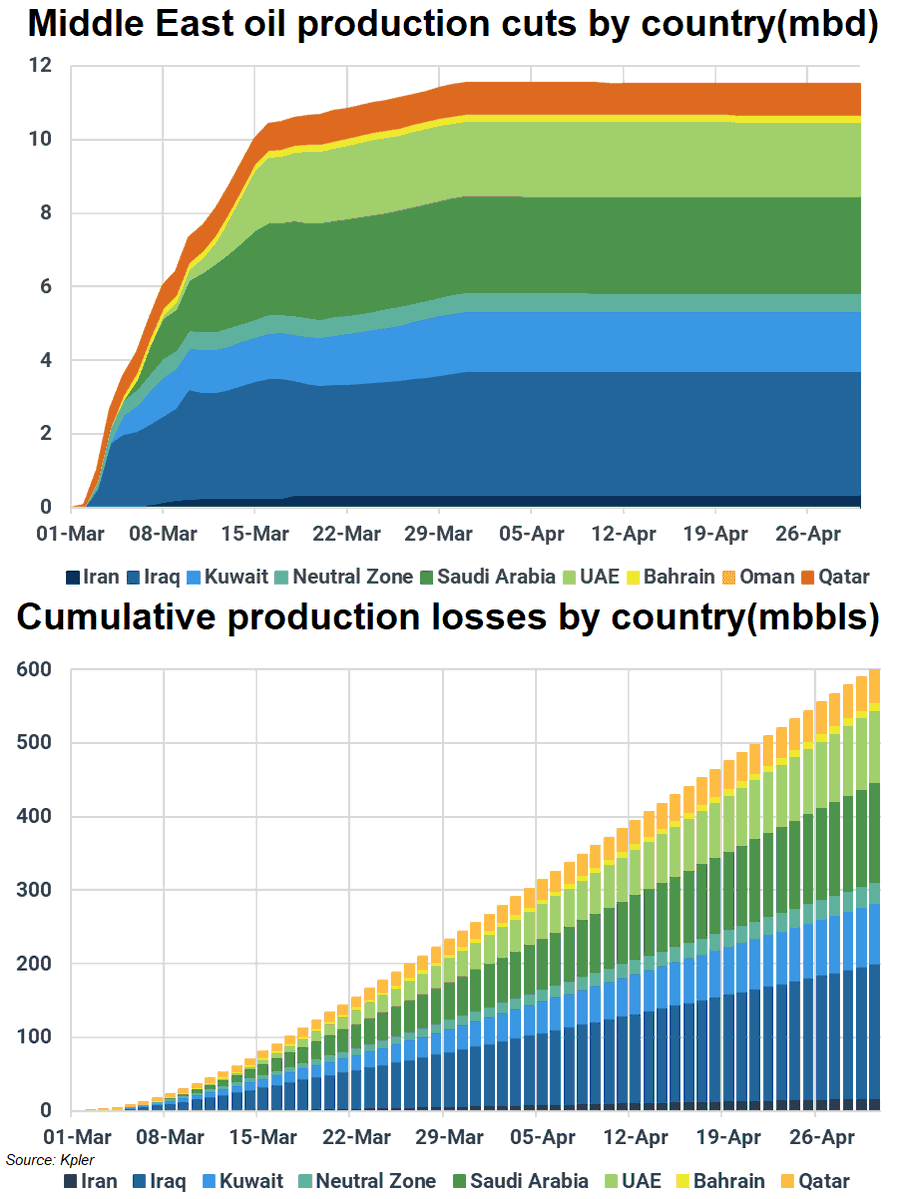

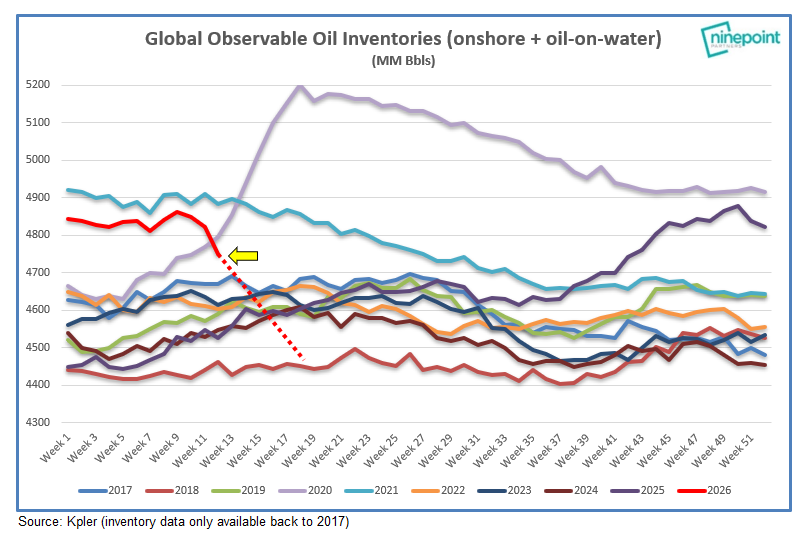

In February, I liked hearing "Nothing will happen," but now, I love hearing "Everything is fine." Even while everyone is optimistic, actual supply losses will continue to accumulate. The more ppl choose to see only what they want to see, the more delayed the recognition of the crisis becomes, pushing any fundamental solution further away. So oil longs, stop being so angry. Don't you realize yet all of this is for your own good? Time is on your side this time. #oott #iran

One common issue with personalization in all LLMs is how distracting memory seems to be for the models. A single question from 2 months ago about some topic can keep coming up as some kind of a deep interest of mine with undue mentions in perpetuity. Some kind of trying too hard.

I've just read about "tokenmaxing," which uses the number of LLM tokens you blow through in a month as a sort of sick productivity metric. This is insane. One of the posts I read inadvertently characterized it perfectly: "Engineers are starting to compare token spending the way they used to compare GitHub commits." GitHub commits are and always have been a crappy metric. They measure your ability to game the system, not your productivity, and they measure the wrong thing: time coding (or asking an LLM to code) as compared to time thinking. Both metrics reward output volume without ever considering if you're building the right thing or providing actual value to your customers. A great engineer thinks (and talks to customers and assesses value and other things) for at least an hour before writing 10 lines of code. A crappy engineer writes 500 lines of crappy code in that hour, all of which will have to be thrown out, and some of which will actively damage a formerly good system. Both tokenmaxing and commits reward the latter behavior. Sure, you can vibe up a sh*t load of code in no time at all, and spend a fortune in tokens doing it, but do your customers want or need the result? In the AI world, I should also add that tokenmaxing is incentivizing people to waste vast amounts of money by ignoring context engineering and using the largest contexts possible. Need to fix a minor bug? Let's throw 100,000 lines of code into the context! Also, the natural compression that happens when you bang up against context limits degrades quality, increases bugs, and generally makes the LLM less effective. Sure, I can waste lots of your money on unnecessary tokens if it makes my bonus bigger. If that's the game, I'll play it.

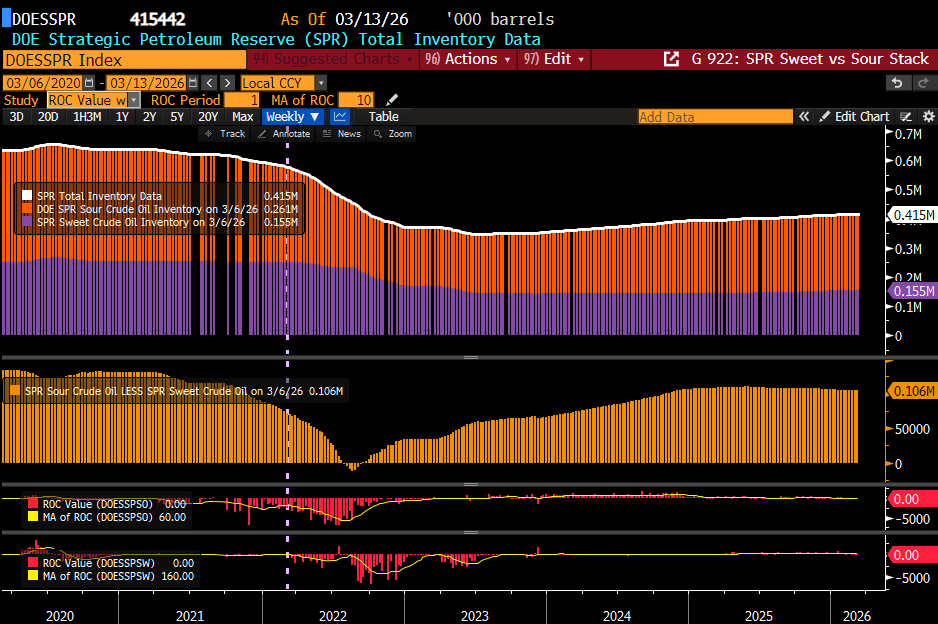

🌮 Trump will have to TACO. The 10Y Note Yield is now up ~45 basis points since the war began on February 28th. The 10Y Note Yield is now at 4.40%, and the US economy cannot handle a 5% 10Y Note Yield. He has no choice but to crash oil and bond yields by announcing a deal.

BREAKING: Trump says 'we are getting very close to meeting our objectives as we consider winding down our great Military efforts in the Middle East with respect to the Terrorist Regime of Iran'