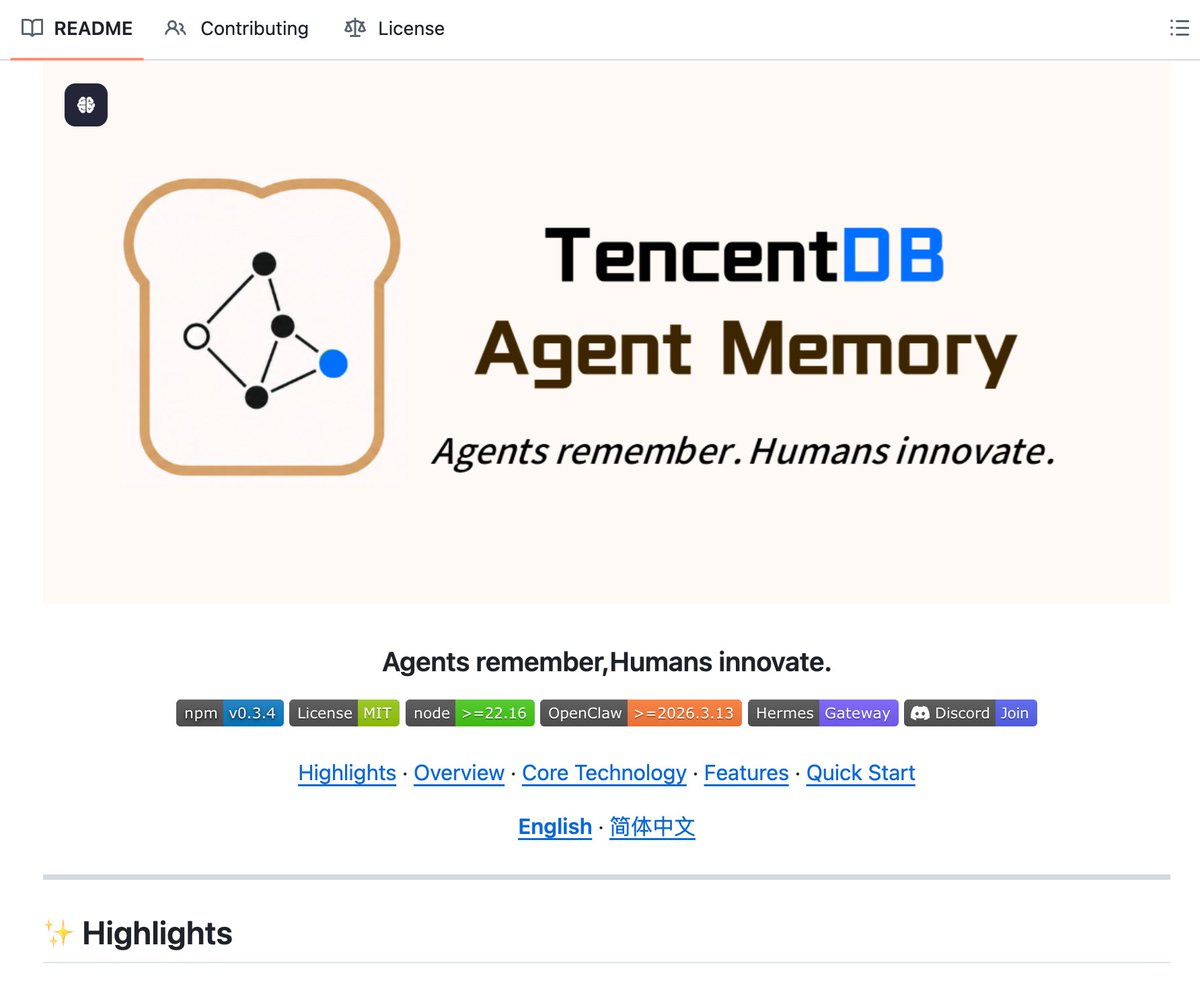

We spent 6 months on one problem: agents losing context in long sessions. Ended up building and open-sourcing an agent memory system. A few things we learned: 🪄compressing stale context mid-session cut token usage by 61% 🪄giving agents a structured task map (mermaid-based) made them way less likely to lose track in 30+ step workflows 🪄persona coherence jumped from 48% to 76% once we added dedicated persona memory repo 👉 github.com/Tencent/Tencen… Agent memory is genuinely hard and we don't have all the answers. Happy to dig into architecture, benchmarks, tradeoffs, whatever. AMA👇 @TencentDBAbxo2 team is here to talk about it.