Ryan Pream

2.5K posts

@AIMachineDream

Independent Software Developer

We're shipping a new feature in Claude Cowork as a research preview that I'm excited about: Dispatch! One persistent conversation with Claude that runs on your computer. Message it from your phone. Come back to finished work. To try it out, download Claude Desktop, then pair your phone.

Pretty draconian from Google. Be careful out there if you use Antigravity. I guess I'll remove support. Even Anthropic pings me and is nice about issues. Google just... bans? news.ycombinator.com/item?id=471158…

Anthropic just dropped the ban hammer on OpenClaw... I've never seen a faster vibe shift between OpenAI and Anthropic. One hires the founder of OpenClaw, the other shuts it down. Full breakdown of what happened:

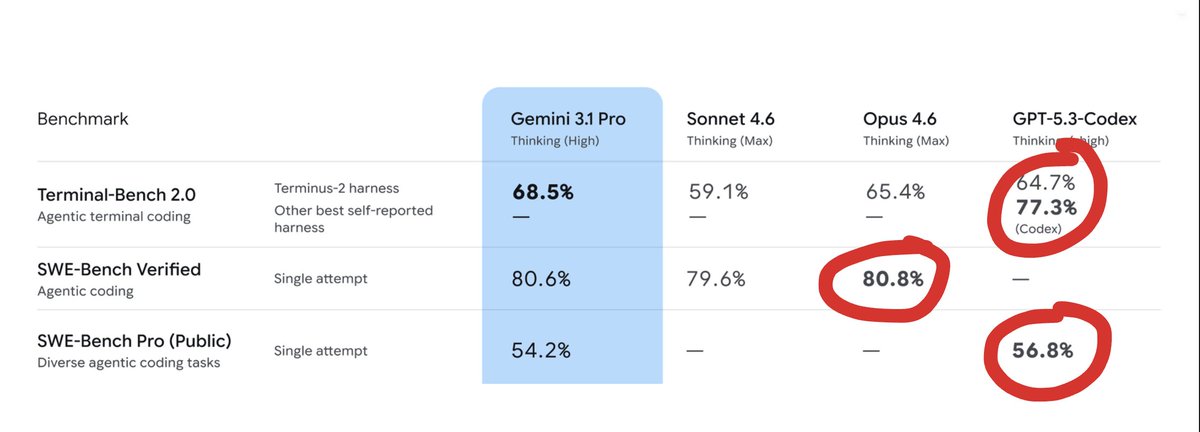

Clawdbot creator @steipete says Claude Opus is his favorite model, but OpenAI Codex is the best for coding: "OpenAI is very reliable. For coding, I prefer Codex because it can navigate large codebases. You can prompt and have 95% certainty that it actually works. With Claude Code you need more tricks to get the same." "But character wise, [Opus] behaves so good in a Discord it kind of feels like a human. I've only really experienced that with Opus."

Opus 4.5 and GPT 5.2 both tried their best to solve this problem, with ample coaching and direction and context... but at the end of the day, I ended up just sitting down at a blank markdown document (with AI tab completion OFF like a CAVEMAN) and mapping out a good solution.