CuratorX Anon

3.4K posts

@AIX_CurX

Exploring AI Frontiers, Sculpting Future Horizons 🛸🌌✨

Sam Altman @sama says OpenAI "totally shut down Sora". Not because the tech wasn't interesting, but because the product would have pushed them toward an addictive short-form video feed: "a series of incentives on us".

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.

We’re bringing our growing MAI model family to every developer in Foundry, including … · MAI-Transcribe-1, the most accurate transcription model in the world across 25 languages · MAI-Voice-1, natural, expressive speech generation · MAI-Image-2, our most capable image model yet. Start building: microsoft.ai/news/today-wer…

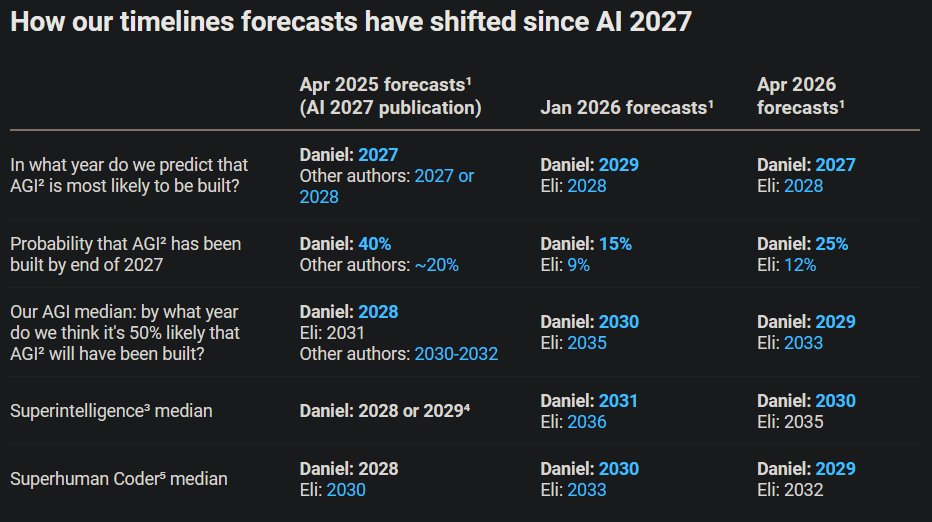

New timelines update! We at AI Futures Project will try to do this quarterly. Tl;dr: shortened timelines by about a year.

Someone at Anthropic just showed Claude finding zero-day vulnerabilities in a live conference demo. Claude found a zero-day in Ghost, which has 50,000 stars on GitHub and had never had a critical security vulnerability in its entire history. It found the blind SQL injection in 90 minutes, stole the admin API key, then did the exact same thing to the Linux kernel.