Alex Engler

1.6K posts

@AlexCEngler

Penn Center for Media, Tech & Democracy | "If you can keep it" | Alum: NSC/OSTP @BrookingsGov @urbaninstitute @UChicagoCAPP @McCourtSchool

MN-01 was only Trump +12 and MN-08 only Trump +14. Not saying these are on the table, but if Mike Lindell caused the bottom to fall out with the MN GOP, there are a lot of ancestral Dems in these areas...

New paper: LLMs are increasingly used to label data in political science. But how reliable are these annotations, and what are the consequences for scientific findings? What are best practices? Some new findings from a large empirical evaluation. Paper: eddieyang.net/research/llm_a…

Mentoring and collaborating with Sayash has been a privilege and the highlight of my own career! I look forward to seeing what he will do next. He has executed at least four distinct but interrelated research agendas, each of which would make an impactful PhD thesis on its own: (1) rigorous AI agent evaluation, (2) AI, science, and reproducibility, (3) AI ethics and snake oil, and (4) AI policy. And that's not even counting the thinkpiece-y work like the AI as Normal Technology essay and newsletter!

Leavitt: "The Democrat Party's main constituency is made up of Hamas terrorists, illegal aliens, and violent criminals."

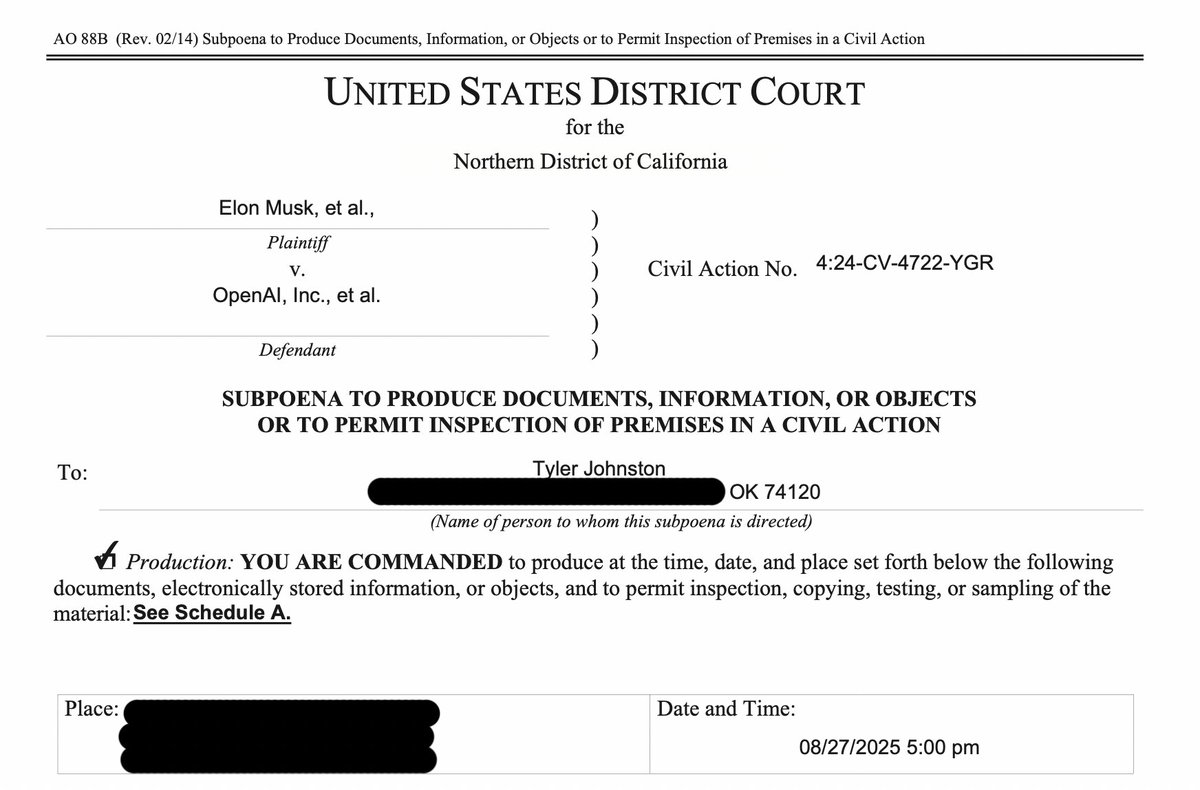

One Tuesday night, as my wife and I sat down for dinner, a sheriff’s deputy knocked on the door to serve me a subpoena from OpenAI. I held back on talking about it because I didn't want to distract from SB 53, but Newsom just signed the bill so... here's what happened: 🧵

Join our 1st public event on October 21, The Democratic Repercussions of Media Fragmentation! Our events aim to share essential research on what ails, and how we can improve, the information environment. …iafragmentation-online.eventbrite.com