Bill

12.4K posts

@PopVerseYT @BillQueens I think r/LocalLLaMA on Reddit and @0xSero or @sudoingX have an X community. If you've got a 5090, get 27b running on there and install Hermes agent. (It’s not SOTA but it’s pretty good)

Hermes Agent + qwen 27b

This project is to see if I can get a fully functioning and safe SaaS/business set up w/ Hermes and 27b just off Telegram.

Site is pretty much done, auth is done, just hooking up Stripe payments and manually auditing. Will probably do a SOTA audit with a couple of different models to see if I missed anything.

@LottoLabs Ya I was so sick of vLLM horseshit lol. I just built my own frontend today to control everything.

@LottoLabs I’ve just been ripping llama.cpp so GGUFs have been solid for me. Had so many issues with vLLM nightlies, said fuck it and haven’t looked back.

@BillQueens I think I ran that quant on an RTX 6000 Pro and it obviously ripped. I like the 27b overall though, mainly used the Unsloth quant. Might as well try NVFP if you have Blackwell chips

@LottoLabs GGUF. Will look at trying this tomorrow - what are your thoughts so far?

@BillQueens Have you been running the NVFP model? huggingface.co/Kbenkhaled/Qwe…

Do you agree? Where do you do most of your dev work?

I personally have a Linux server machine in my house that I run hermes-agent on and do my dev work from.

How bout you?

Ryan Carson@ryancarson

100% of dev is going to be done in sandboxes in the cloud, controlled by kanban boards. Trust me, I love my local machine and gorgeous mac apps, but all of it is just a terrible form factor for running a team of agents effectively.

Bill retweeted

@selfhostedmind @maria_rcks Likely the quickest best option available in her country.

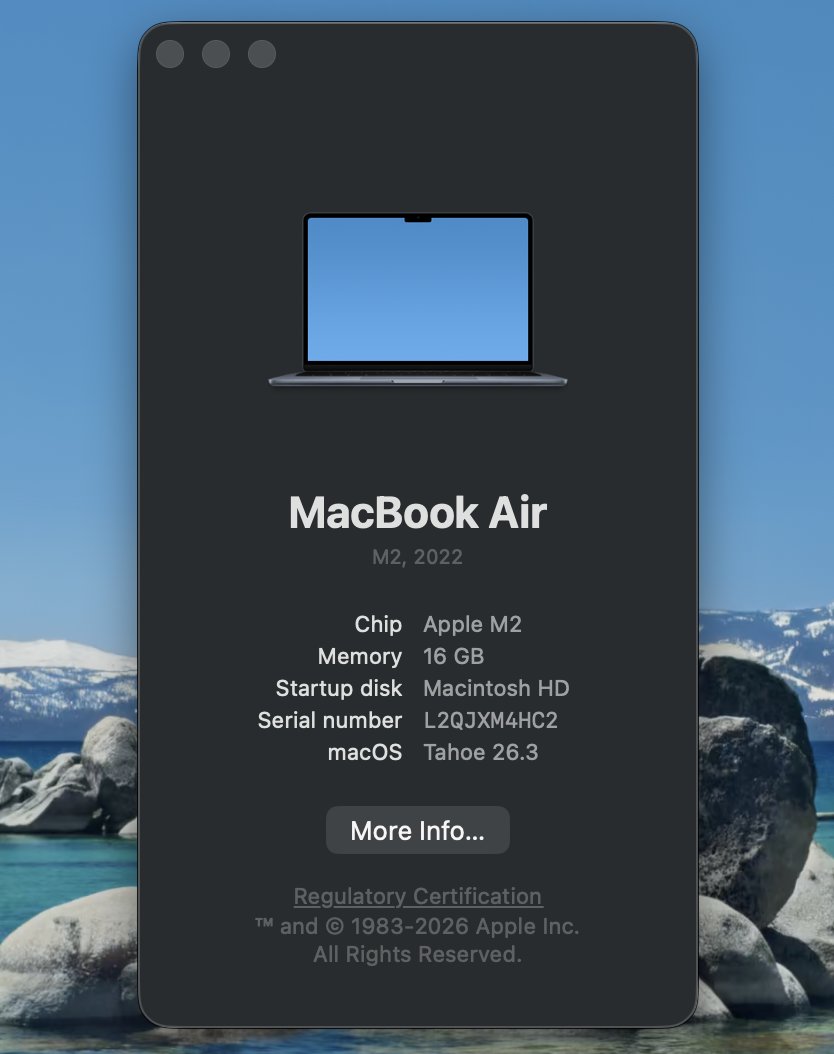

@maria_rcks did Theo really cheap out on a refurbished 2022 M2 Air?? wow so generous

I can't believe I'm typing this on a MacBook, everything feels really smooth and weird (macOS) but really really thank you so much Theo, this is amazing, you really didn't have to, but thank you so much ❤️

Theo - t3.gg@theo

Don’t worry, I just bought her a MacBook. That said - if she ships this much on a computer less powerful than a Raspberry Pi, what’s your excuse?

@__tinygrad__ Imagine not building shipping container token machines in the year of our lord

People are too focused on trying to build God and not thinking about the unit economics 🤑

Milind@milindS_

Every day that I use Kimi and GLM, I realize that @__tinygrad__ is going to mint money in a couple of years' time. The big 'labs' don't have any way to compete with cheap inference of ridiculously good models

NVIDIA's biggest GTC announcement was a $20 billion bet on the same problem we solved 6 years ago.

Their next-gen inference chip - not available yet - has 140x less memory bandwidth than @cerebras.

To run a single 2 trillion parameter model, you need 2,000+ Groq chips.

On Cerebras, that's just over 20 wafers.

Even paired with GPUs, Groq maxes out at ~1,000 tokens per second.

We run at thousands of tokens per second today.

And every day. In production now.

Why?

When you connect 2,000 chips together, every interconnect has latency. Every cable has overhead.

It doesn't matter what your memory bandwidth is on paper if you're bottlenecked by the wiring between thousands of tiny chips.

We solved this with wafer scale.

One integrated system. Little interconnect tax.

Jensen told the world that fast inference is where the value is.

He’s right - it’s why the world’s leading AI companies and hyperscalers are choosing Cerebras.
