CogInterp Workshop @ NeurIPS 2025

@CogInterp

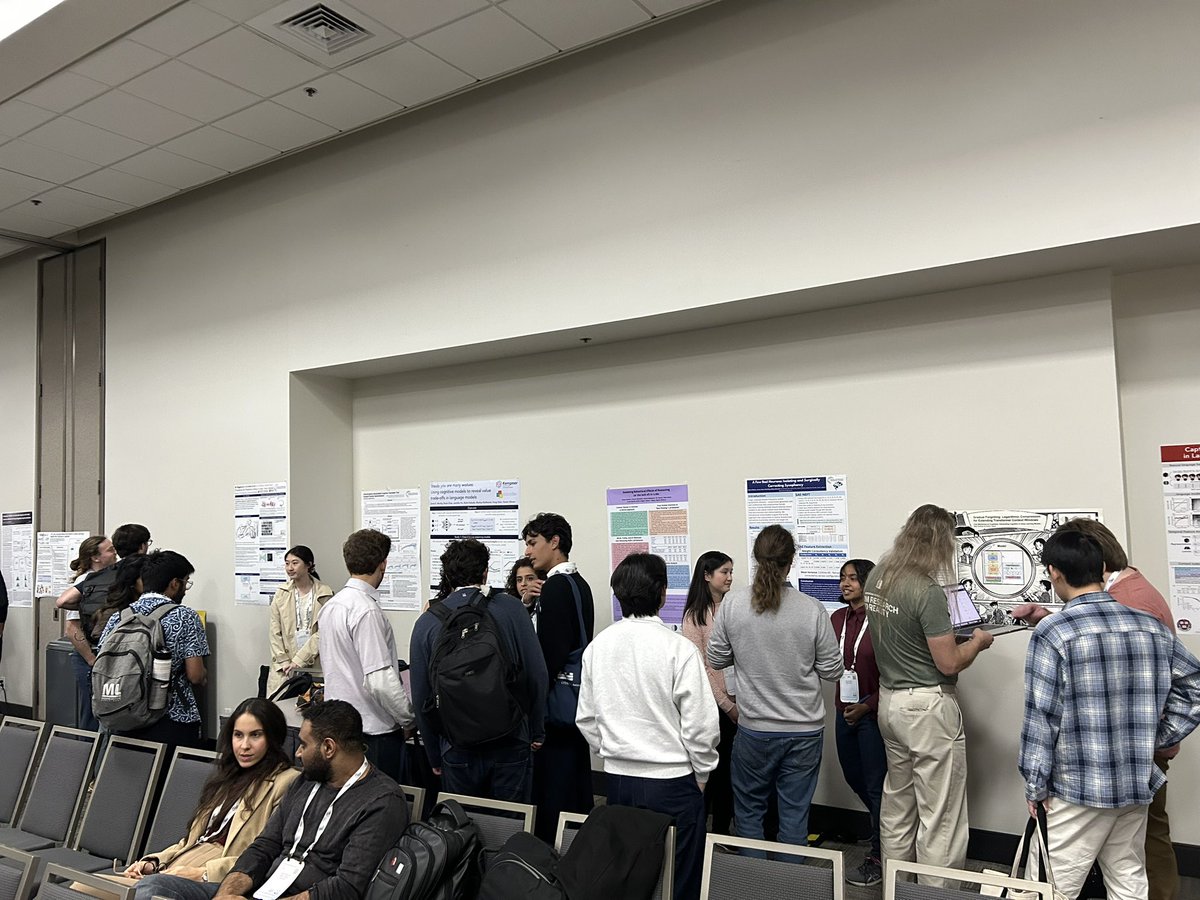

At Upper Level Room 5AB of the conference venue!

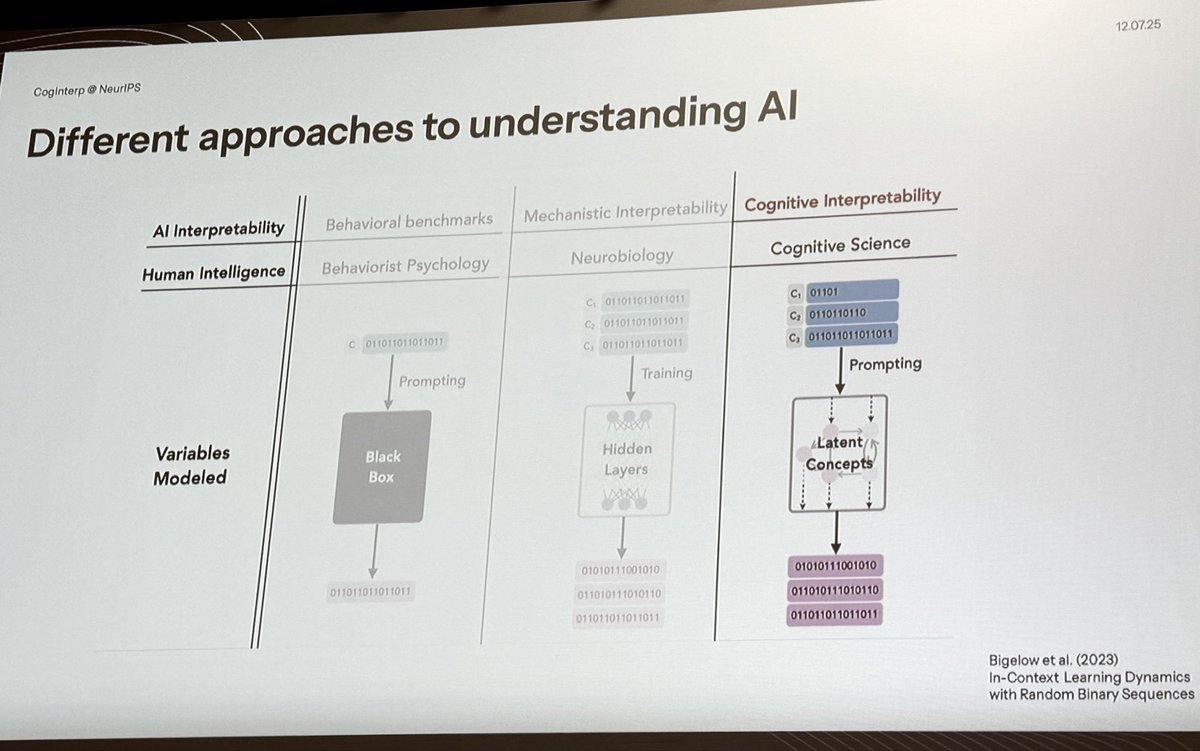

Our last Stanford guest lecture - @EkdeepL on what counts as an explanation & a neuro-inspired "model systems approach" to interp. Plus, how in-context learning and many-shot jailbreaking are explained by LLM representations changing in-context (as a case study for that approach).

00:33 - What counts as an explanation?
04:47 - Levels of analysis & standard interpretability approaches
18:19 - The "model systems" approach to interp
[Case study on in-context learning]
23:36 - How LLM representations change in-context
44:10 - Modeling ICL with rational analysis
1:10:54 - Conclusion & questions

Thanks again to @SuryaGanguli for having us in his class!

Our Best Paper Award goes to Nathaniel Imel and Noga Zaslavsky @NogaZaslavsky for their excellent paper “Culturally transmitted color categories in LLMs reflect a learning bias toward efficient compression”!

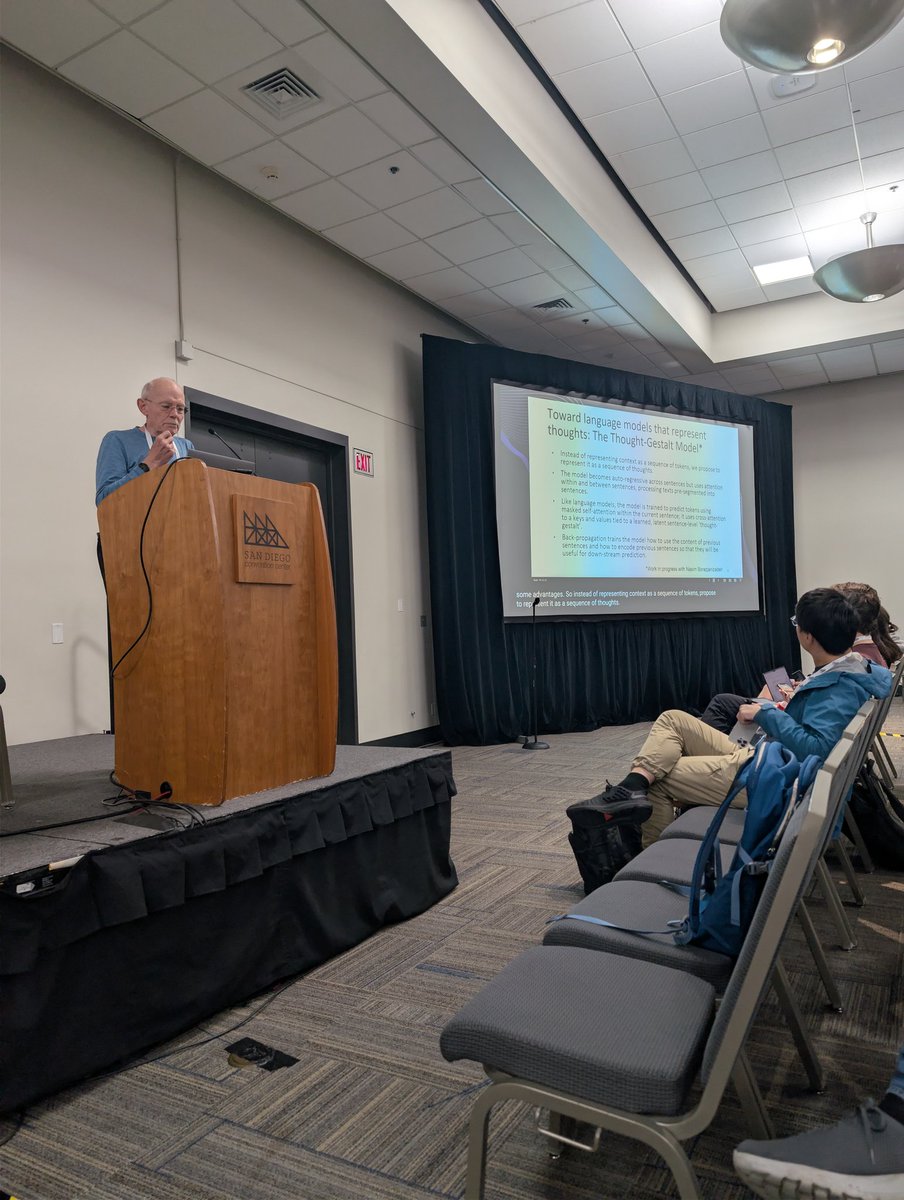

Jay McClelland opens with a question: "Do LMs have thoughts?" Are LMs stochastic parrots, or is there some understanding?

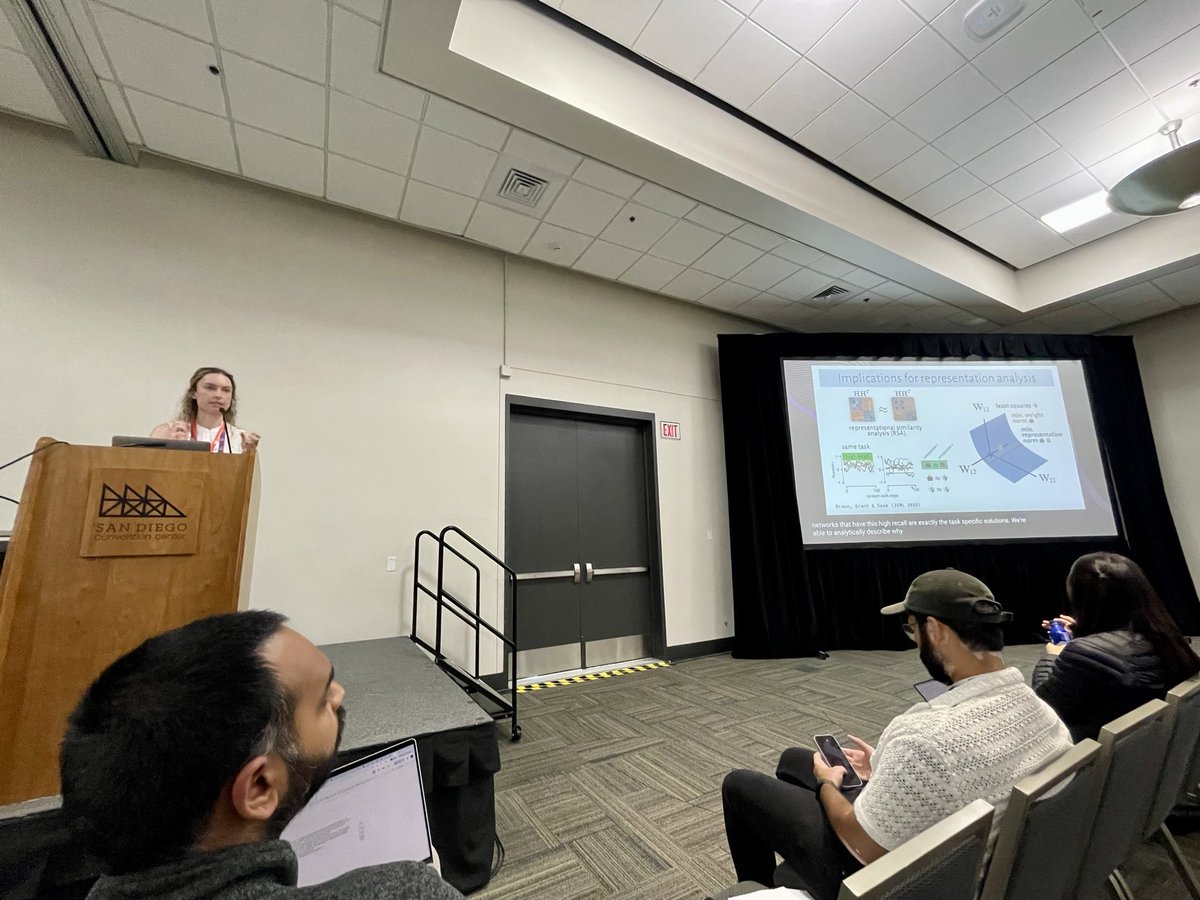

The spotlight talks will cover all aspects of interpreting cognition in deep learning models: from behavior to algorithms to representations! Also check out the list of poster presentations at coginterp.github.io/neurips2025/ac… (3/3)