Compusemble

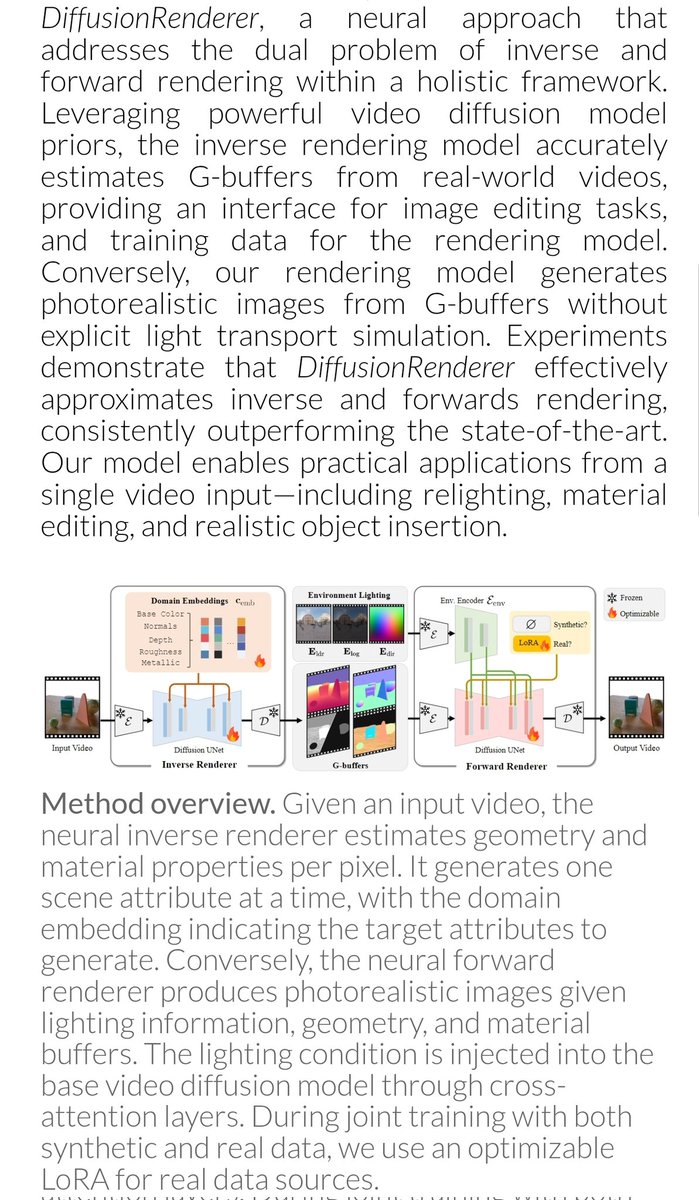

Meta also worked on DLSS 5-like tech, which aims to turn rendered video into photorealistic video while anchoring it to the input's geometric structure and motion. The thread below has some examples from GTA V. Very cool tech. Full paper here: arxiv.org/pdf/2603.23462

DLSS 5 is all over the timeline, and for good reason. In my internship at @AIatMeta we had the same idea: use a video model as a learned second-stage renderer on top of game engines. In our paper RealMaster, we make synthetic video look real while preserving scene fidelity 👇

I spent some time looking into DLSS 5 rendering from the pure tech side. Ignoring the artistic debate for a second, I think it may already be showing early signs of something much more interesting: implicit inverse rendering.

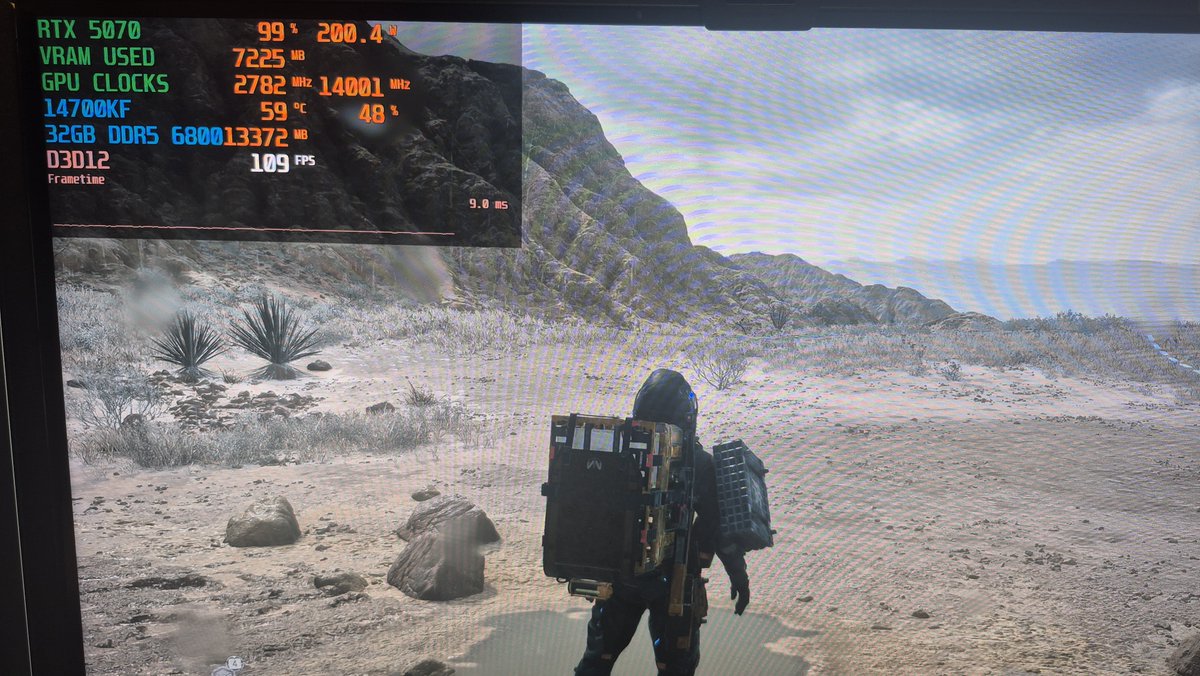

Saw an interesting Steam forum post from Nixxes about possible performance issues in DEATH STRANDING 2: ON THE BEACH due to PCIe bandwidth, so I decided to measure it on my system using @CapFrameX, and I did see quite a bit of PCIe traffic when moving around in the game.

Daniel got important clarifications from NVIDIA. TLDR: the "DLSS 5 skeptics" were right about *everything*. 1️⃣ It's a 2D AI filter. The input is only the color buffer and motion vectors. The model doesn't see geometry, lights, PBR properties, normals, or anything else 🧵 youtu.be/D0EM1vKt36s

Just tried FSR Upscaling 4.1 in a few PC games. It’s based on the same neural network as the upgraded PSSR we released for PS5 Pro… and it looks stunning! Wonderful working with @jackhuynh and the @AMD team as we collaborate on AI graphics tech. Big win for Project Amethyst :-)