Glimor

@CryptoGlimor

Content Creator | Collab Manager, Moderator - @QwertiAI | @Fuglysart | @AG_Protocol | Building Trust Through Clarity and Consistency

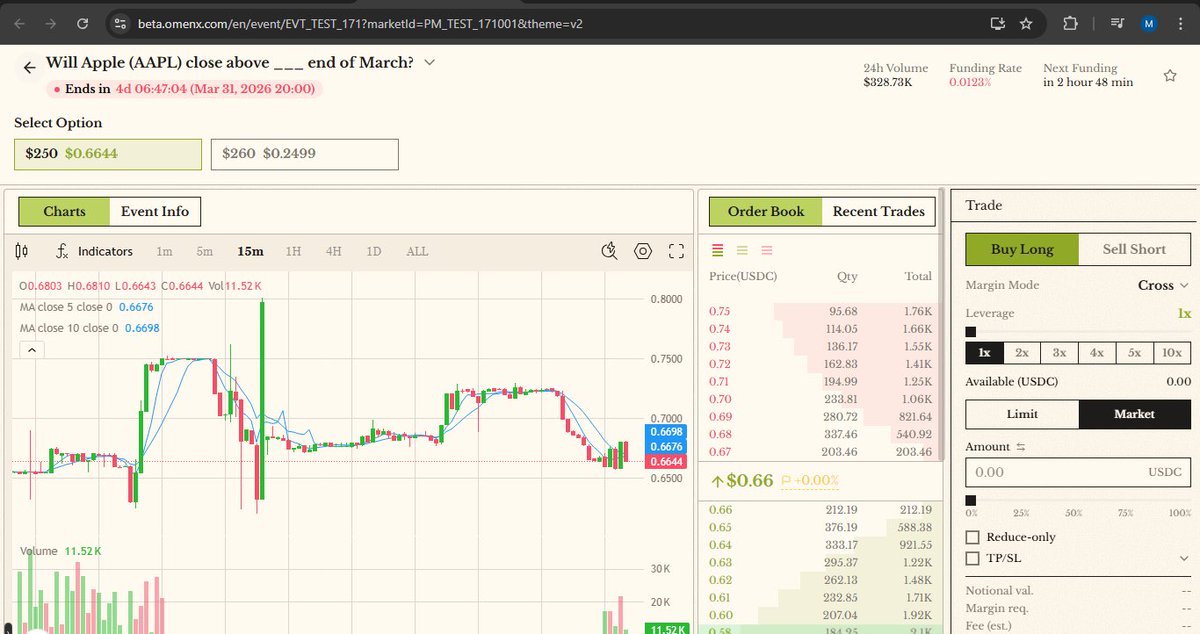

🚀 Volume exploding on @OmenX_Official: trade the event, not the hype! Finland crushing Eurovision 2026 at $0.3546, Apple (AAPL) end-of-March calls running hot. 10x leverage, set stops, exit anytime: perpetual markets, no expiry! Who’s loading up? 🔥

A few days ago, I was still unranked on Nasun, and I thought my posts weren’t being counted.
But then the founder explained how the leaderboard actually works: it’s not automated. Every participant is reviewed manually. Posts are collected, evaluated, and scored one by one.
That also means there’s a delay. What you post today might take a few days to show up.
That changed how I looked at it.
Checked today: now I’m ranked #451 with a 9.4 score 📈
So it wasn’t about being ignored. It was just part of the process.
And more importantly, this system rewards:
• clarity
• originality
• real reach
Not just volume.
It’s slower, but it makes the leaderboard feel more meaningful.
Still early, but now I understand how to approach it better 👀
@Nasun_io #Nasun

@RialoHQ Authentication Flow, Explained

Seed-phrase anxiety has killed more crypto onboarding than any gas fee ever did. @RialoHQ is fixing that at the protocol level, not as an afterthought.

The authentication flow works exactly like your banking app: you sign in with email, phone, or a social account, confirm a short code or push notification, and you’re in.
▪️ No key management.
▪️ No clipboard risks.
▪️ No 3 AM panic about a lost hardware wallet.

Under the hood, account abstraction turns your identity into a policy rather than a single private key, so losing a device does not mean losing your funds. You revoke the old device in settings and recover through the same email or phone route.

Native 2FA is baked directly into the protocol, not bolted on by the frontend. For developers, this means compliance and identity logic live on-chain from day one instead of being scattered across brittle off-chain services.

This is what Web3 login should always have looked like.
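
The post describes this design only at a high level and does not show Rialo's interfaces. As a rough illustration of "identity as a policy rather than a single private key", here is a minimal Python sketch under loudly stated assumptions: `PolicyAccount`, `hash_channel`, and every method name are hypothetical, HMAC tags stand in for real device signatures, and one-time codes are returned directly instead of being sent by email or SMS. It shows a new device being admitted only after a short-code confirmation, a lost device being revoked, and the account surviving that loss.

```python
# Hypothetical sketch, not Rialo's API: an account whose identity is a policy
# (a set of authorized devices plus a recovery channel) instead of one private key.
import hashlib
import hmac
import secrets
import time
from dataclasses import dataclass, field


def hash_channel(channel: str) -> str:
    # Keep only a hash of the recovery email/phone, normalized for case and spaces.
    return hashlib.sha256(channel.strip().lower().encode()).hexdigest()


@dataclass
class PolicyAccount:
    recovery_channel_hash: str
    devices: dict = field(default_factory=dict)        # device_id -> device secret
    pending_codes: dict = field(default_factory=dict)  # channel hash -> (code, expiry)

    def issue_code(self, channel: str, ttl: float = 300.0) -> str:
        # Six-digit one-time code; a real system would send it by email/SMS.
        code = f"{secrets.randbelow(10**6):06d}"
        self.pending_codes[hash_channel(channel)] = (code, time.time() + ttl)
        return code

    def _consume_code(self, channel: str, code: str) -> bool:
        # Codes are single-use: the pending entry is removed on any attempt.
        entry = self.pending_codes.pop(hash_channel(channel), None)
        if entry is None:
            return False
        expected, expiry = entry
        return time.time() <= expiry and hmac.compare_digest(expected, code)

    def register_device(self, channel: str, code: str) -> str:
        # Admit a new device only if the recovery channel confirms the code.
        if hash_channel(channel) != self.recovery_channel_hash:
            raise PermissionError("unknown recovery channel")
        if not self._consume_code(channel, code):
            raise PermissionError("invalid or expired code")
        device_id = secrets.token_hex(8)
        self.devices[device_id] = secrets.token_bytes(32)
        return device_id

    def revoke_device(self, device_id: str) -> None:
        # Dropping a lost device changes the policy; the account itself persists.
        self.devices.pop(device_id, None)

    def sign(self, device_id: str, message: bytes) -> bytes:
        # Stand-in for a device signature (HMAC-SHA256, not a public-key scheme).
        return hmac.new(self.devices[device_id], message, hashlib.sha256).digest()

    def authorize(self, device_id: str, message: bytes, tag: bytes) -> bool:
        # Policy check: accept only requests tagged by a currently registered device.
        secret = self.devices.get(device_id)
        if secret is None:
            return False
        expected = hmac.new(secret, message, hashlib.sha256).digest()
        return hmac.compare_digest(expected, tag)


account = PolicyAccount(recovery_channel_hash=hash_channel("alice@example.com"))
phone = account.register_device("alice@example.com", account.issue_code("alice@example.com"))
request = b"transfer 10 tokens"
tag = account.sign(phone, request)
print(account.authorize(phone, request, tag))  # True
account.revoke_device(phone)                   # phone lost: remove it from the policy
print(account.authorize(phone, request, tag))  # False
laptop = account.register_device("alice@example.com", account.issue_code("alice@example.com"))
print(len(account.devices))                    # 1
```

The design choice being illustrated is that funds are bound to the account's policy, not to any one device key, so recovery is a policy update (revoke one device, admit another through the verified channel) rather than a key-restoration problem.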

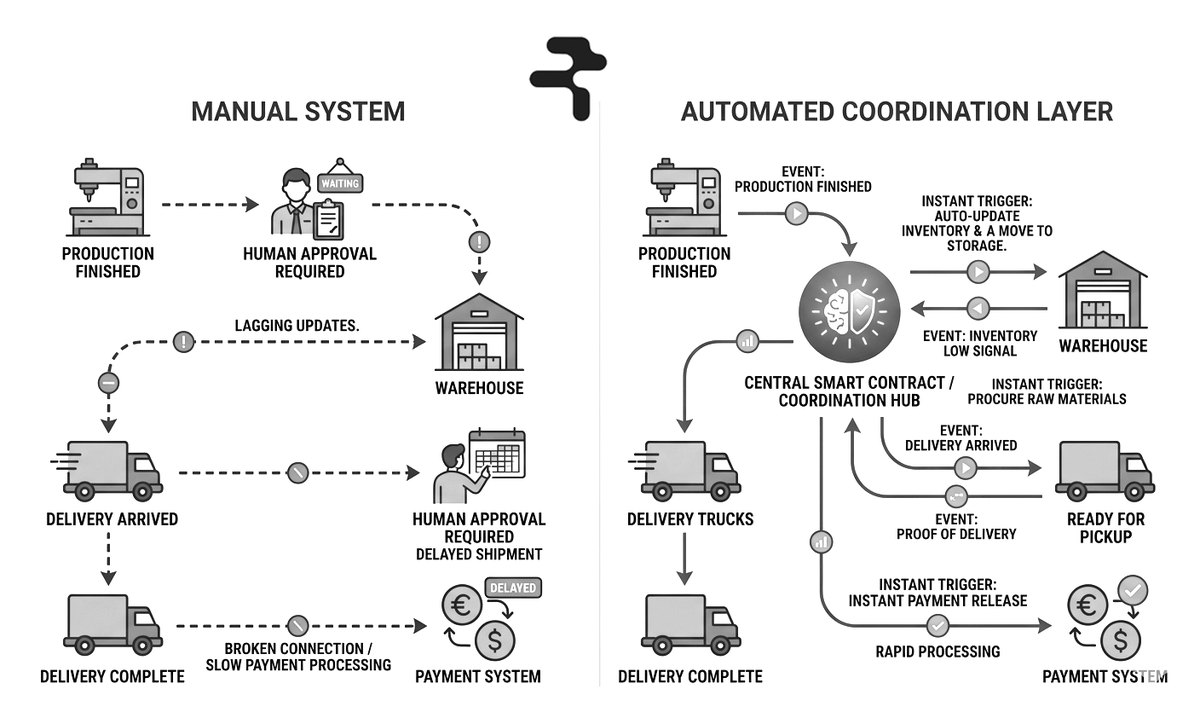

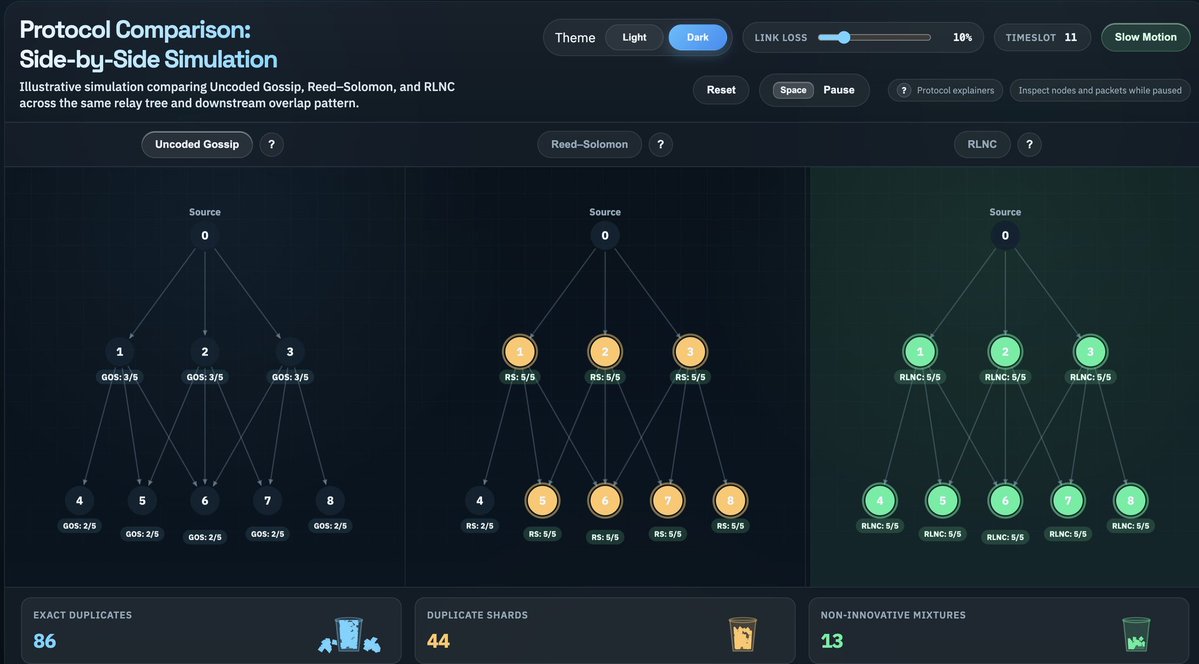

ʙʟᴏᴄᴋᴄʜᴀɪɴ ꜱᴄᴀʟɪɴɢ: ɪꜱ ᴄʜᴀɴɢɪɴɢ ᴛʜᴇ ᴄᴏᴅᴇ ᴛʜᴇ ᴏɴʟʏ ᴡᴀʏ?

1. Conventional Ideas and Alternatives
When we talk about blockchain scaling, we tend to circle around a few specific ideas: new consensus mechanisms, radical changes to the layer-one architecture, or more complex smart contract structures. The prevailing assumption is that scaling means rebuilding the inside of the chain. @get_optimum has recently challenged this line of thinking. Their question is simple: do we really need to change the core of the blockchain to scale? Their answer is no. Instead of touching the chain’s core system, consensus, or smart contracts, they are building an off-chain layer: DeRAM (Decentralized RAM Layer).

2. The Problem: Data Handling, Not TPS
The difficulty of scaling is not really measured in transactions per second (TPS). The real hurdles are the speed of data movement, the efficiency of data handling, and the risk of system upgrades. While most projects are racing to increase transaction counts, Optimum's analysis points elsewhere: the bottleneck lies in data coordination and data flow. In their view, making the data layer smart and reliable is more effective than changing the entire architecture.

3. DeRAM: An Infrastructure Built on Three Pillars
Optimum's solution, DeRAM, is an infrastructure layer whose job is to make data handling reliable. It rests on three principles:
• Atomicity: no operation can be left half-finished; it either completes fully or does not happen at all.
• Consistency: every part of the network sees the same state of the data.
• Durability: data, once stored, is never lost.

4. Technical Structure: Three Tiers
DeRAM's design is organized in layers.
RLNC (Random Linear Network Coding) handles data distribution: it splits data into small pieces and sends out coded fragments, each a random linear combination of those pieces, so the original can be rebuilt from any sufficiently large set of independent fragments. A Flexnode network holds this data; its elastic node structure lets the number of nodes grow as needed. Decentralized storage is added to ensure data availability. Together, these three elements create an infrastructure in which data is stable, consistent, and reliable.

5. Conclusion: Optimization, Not Rebuild
The main point of @get_optimum is that tearing down the old system and building a new one is not the only way to scale. Their approach departs from the traditional narrative: it is possible to significantly increase performance by optimizing data flow and coordination at the infrastructure level while leaving the main chain intact. Their work aims to show that the right optimization in the right place can often be more effective than a complete rebuild.
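
The post names RLNC without saying how Optimum parameterizes it. To show what splitting data into pieces and distributing coded fragments means in network coding, here is a generic, minimal Python sketch, not Optimum's implementation. It assumes a prime field GF(257) for readability (deployed RLNC codes commonly work over GF(2^8)), and `split`, `encode`, and `decode` are hypothetical names. Each fragment carries its random coefficient vector, and a receiver can rebuild the data from any k fragments whose coefficient vectors are linearly independent, regardless of which particular fragments arrived.

```python
# Generic random linear network coding (RLNC) sketch. The field choice and all
# names (split, encode, decode) are illustrative, not Optimum's implementation.
import random

P = 257  # prime field GF(257): every byte value 0..255 is a field element


def split(data: bytes, k: int) -> list[list[int]]:
    """Split data into k equal-length pieces, zero-padding the last one."""
    size = -(-len(data) // k)  # ceiling division
    padded = data + bytes(size * k - len(data))
    return [list(padded[i * size:(i + 1) * size]) for i in range(k)]


def encode(pieces: list[list[int]], rng: random.Random) -> tuple[list[int], list[int]]:
    """One coded fragment: random coefficients plus the matching linear combination."""
    coeffs = [rng.randrange(P) for _ in pieces]
    payload = [sum(c * piece[j] for c, piece in zip(coeffs, pieces)) % P
               for j in range(len(pieces[0]))]
    return coeffs, payload


def decode(fragments, k: int):
    """Recover the k pieces by Gauss-Jordan elimination mod P.

    Returns None while the received coefficient vectors span fewer than k dimensions.
    """
    rows = [list(c) + list(p) for c, p in fragments]
    rank = 0
    for col in range(k):
        pivot = next((r for r in range(rank, len(rows)) if rows[r][col]), None)
        if pivot is None:
            return None  # not enough independent fragments yet
        rows[rank], rows[pivot] = rows[pivot], rows[rank]
        inv = pow(rows[rank][col], P - 2, P)  # multiplicative inverse via Fermat
        rows[rank] = [x * inv % P for x in rows[rank]]
        for r in range(len(rows)):
            if r != rank and rows[r][col]:
                f = rows[r][col]
                rows[r] = [(a - f * b) % P for a, b in zip(rows[r], rows[rank])]
        rank += 1
    return [row[k:] for row in rows[:k]]


# A receiver keeps collecting fragments (from any senders) until it can decode.
rng = random.Random(7)
data = b"DeRAM shard demo"
pieces = split(data, 4)
fragments, recovered = [], None
while recovered is None:
    fragments.append(encode(pieces, rng))
    recovered = decode(fragments, 4)
print(bytes(b for piece in recovered for b in piece)[:len(data)])  # b'DeRAM shard demo'
```

The point of the technique is that no single fragment is special: a node that misses some fragments simply waits for any others, which is one reason RLNC is attractive for loosely coordinated, elastic node sets. The cost is the coefficient vector carried with each fragment, and this sketch omits the recoding step that intermediate nodes can perform in full RLNC without decoding first.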