Dan Crewger

448 posts

@thsottiaux Not being able to scope chats to projects/workspaces. See github.com/openai/codex/i…


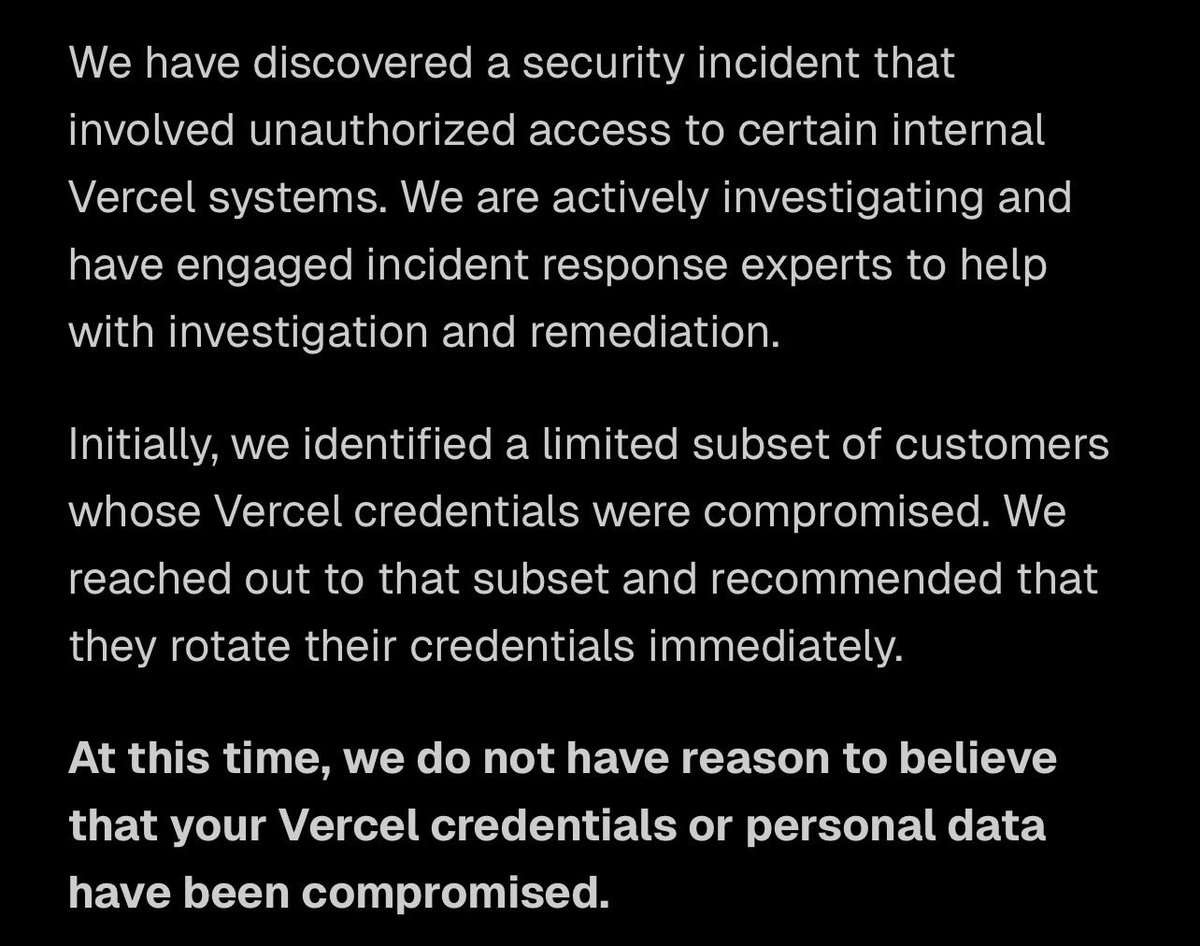

Dear @vercel,

Do hackers have ALL MY SHIT and do I have to spend all night fixing it or not?

I did not receive an email.

Can we please have some TRANSPARENCY here?


@trq212 Great idea! Will be an amazing resource for many! I'd love you to do some with people who have high level tech understanding but no coding skills (typical PM profile)


@CRSolomonWrites @OpenAI A lot of people asked for this. The fact that you can't imagine something not important to you being important to someone else fits the keep4o theme very well.


We’re updating our ChatGPT Pro and Plus subscriptions to better support the growing use of Codex.

We’re introducing a new $100/month Pro tier. This new tier offers 5x more Codex usage than Plus and is best for longer, high-effort Codex sessions.

In ChatGPT, this new Pro tier still offers access to all Pro features, including the exclusive Pro model and unlimited access to Instant and Thinking models.

To celebrate the launch, we’re increasing Codex usage for a limited time through May 31st so that Pro $100 subscribers get up to 10x usage of ChatGPT Plus on Codex to build your most ambitious ideas.


@lydiahallie Why don't YOU ship a fix that sets the compact window to 200k? You must be losing subscribers left and right. This is in your hands. A large portion of users won't read this message.


Digging into reports, most of the fastest burn came down to a few token-heavy patterns. Some tips:

• Sonnet 4.6 is the better default on Pro. Opus burns roughly twice as fast. Switch at session start.

• Lower the effort level or turn off extended thinking when you don't need deep reasoning. Switch at session start.

• Start fresh instead of resuming large sessions that have been idle ~1h

• Cap your context window; long sessions cost more. Set CLAUDE_CODE_AUTO_COMPACT_WINDOW=200000.

We're rolling out more efficiency improvements, make sure you're on the latest version.

If a small session is still eating a huge chunk of your limit in a way that seems unreasonable, run /feedback and we'll investigate


Thank you to everyone who spent time sending us feedback and reports. We've investigated and we're sorry this has been a bad experience.

Here's what we found:

Lydia Hallie ✨@lydiahallie

We're aware people are hitting usage limits in Claude Code way faster than expected. Actively investigating, will share more when we have an update!


@westerbamos @corbucorbu @lydiahallie Lol well he doesn't clear context windows because he doesn't have to pay...


@UzoPamela1 @lydiahallie Have the previous one produce a summary, feed that in. No other way. If Claude needs to know about something, that something consumes tokens.


@lydiahallie If I start a new session, then it's a whole new conversation.

Is it possible to do that while maintaining the flow of our previous conversation but in a new chat?


@lydiahallie Seems I have been doing a lot of this right, which is probably why I never hit the limits in unreasonable ways. However, on Reddit, users report that a fresh chat with "Hi" ate something like 11% with hardly any skills or MCPs. That can't really be explained by anything you shared, can it?


@trq212 What I would LOVE is a combination of this and Codex Web. Having to run a mini pc at home feels very outdated (at least for coding).


@trq212 I went through the setup this weekend, let Claude guide me through it. Took forever, it felt like Claude wasn't fully aware of the guide and kept bumping into walls. Great feature though now that I got it to run. Already looking for mini PCs.


@nlw Love the show, but I disagree on something from the recent AI and jobs episode: efficiency and profitability are not a means to an end (fulfilling human desires) in shareholder capitalism. On an idealistic level, yes, but that's not how the system is actually set up.


@donaldtusk It’s getting ridiculous at this point. We’re sitting at the table trying to protect European interests while someone is literally live-texting the Kremlin from under the desk. If you’re that cozy with Moscow, why even stay in the EU? It’s pure sabotage


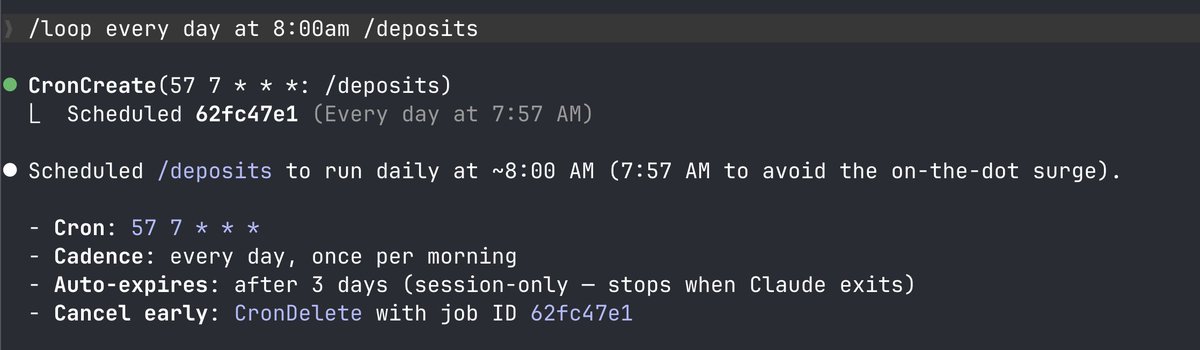

@svpino @dave_alive Does /loop work locally? I would have assumed it runs in the cloud so it doesn't matter if your computer is running or not.


@levelsio Honestly I'm perplexed by the webmcp hype. I'm a non-dev so maybe I just don't get it, but wouldn't it be much leaner to just embed proper API documentation for the APIs the website is already using, rather than implementing a second way to achieve essentially the same thing?


Thank god MCP is dead

Just as useless of an idea as LLMs.txt was

It's all dumb abstractions that AI doesn't need, because AIs are as smart as humans, so they can just use what was already there, which is APIs

Morgan@morganlinton

The cofounder and CTO of Perplexity, @denisyarats just said internally at Perplexity they’re moving away from MCPs and instead using APIs and CLIs 👀


@steipete Meanwhile, all my codex conversations are stuck in thinking mode for the past hour.


Oh right i realize hell freezes over, we reached the point where app > cli

That, combined with the speed increase, also means fewer windows necessary, codex goes brrrr now! developers.openai.com/codex/app/


@peterthiel wrote this 12 years ago. Shows how crazy fast the world is changing, if even he couldn't fathom us being where we are now, a mere decade ago.
