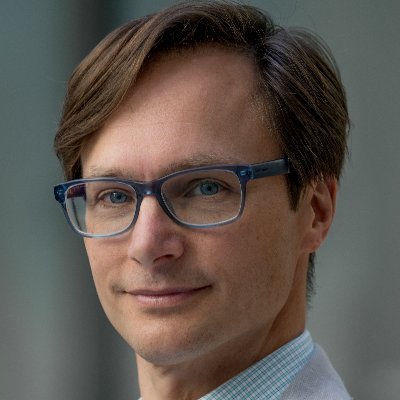

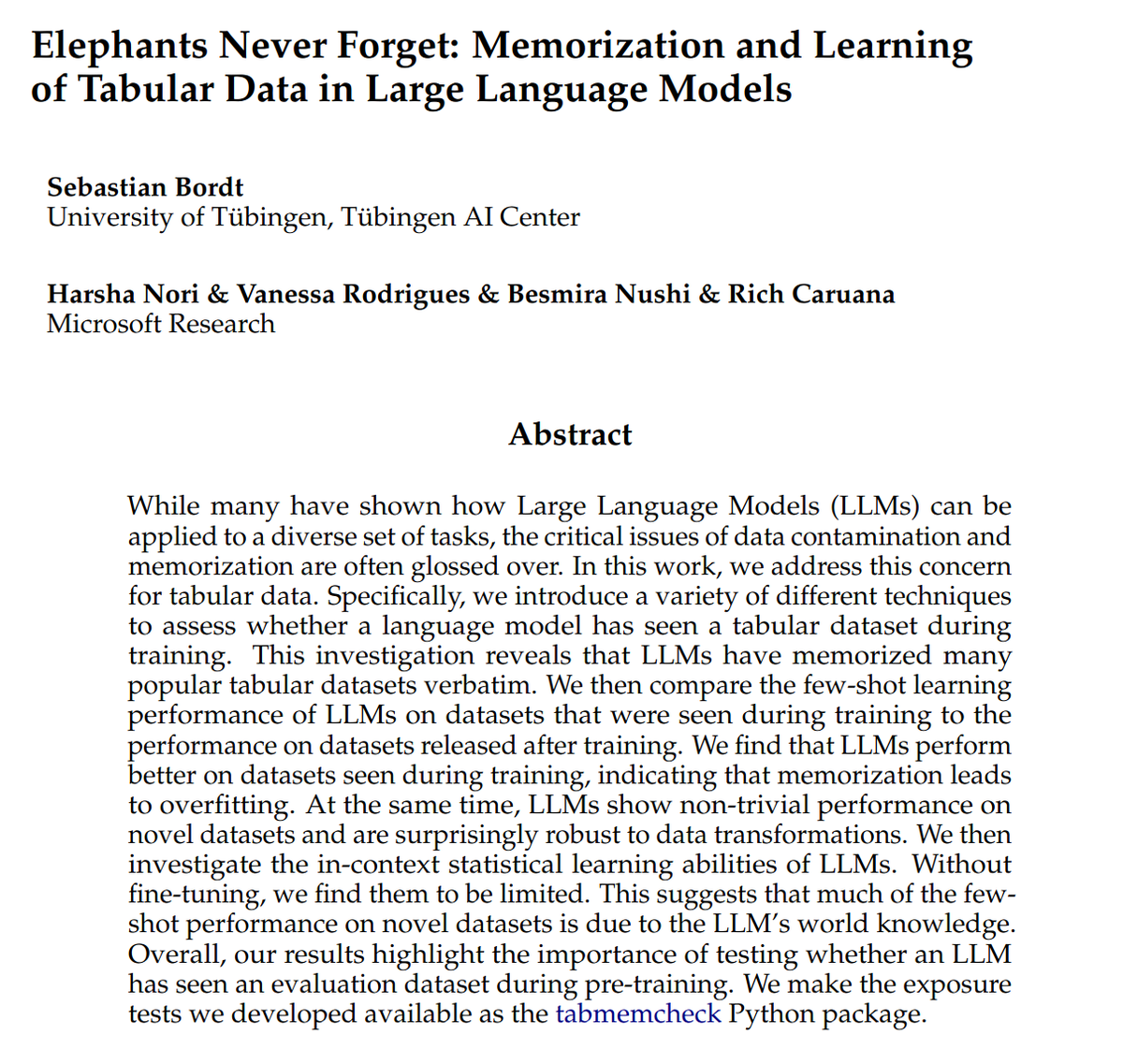

Dean Carignan

397 posts

@DeanCarignan

Chief of Staff for @Microsoft's Chief Scientific Officer; exploring responsible practices in AI, Data Science, ML Ops. Ex: @MSFTResearch @Mckinsey, @Worldbank

👀 New Magentic-UI tutorial by @MayaMurad0. Learn how to make the most of MAGUI's features and automate complex tasks.

Internships, Office of the Chief Scientific Officer, Microsoft jobs.careers.microsoft.com/global/en/job/…

Analysis of o1-preview on medical benchmarks, comparing o1's native inference-time capabilities to prior work on Medprompt, a different approach to inference-time analysis. Assessment: o1 reaches new heights and shows great promise for medical applications. tinyurl.com/9yspcpm6

Remember Golden Gate Claude? @etowah0 and I have been working on applying the same mechanistic interpretability techniques to protein language models. We found lots of features and they’re... pretty weird? 🧵

why do language models think 9.11 > 9.9? at @transluceAI we stumbled upon a surprisingly simple explanation - and a bugfix that doesn't use any re-training or prompting. turns out, it's about months, dates, September 11th, and... the Bible?

This is a great piece. There are so many examples of companies that "saw" things coming at them but seemed paralyzed. Useful ideas.

Open LLMs for the win! The internals of LLMs are a secret treasure hidden in closed models. A lot has happened before the model arrives at the final output token. Here we show that a simple strategy exploiting the attention weights yields a highly effective and efficient reranker. It can be used for IR, RAG, etc. Nice work led by @ShijieChen98 from @osunlp!

One of the biggest open questions is what the limits of synthetic data are. Does training on synthetic data lead to mode collapse? Or is there a path forward that could outperform current models?