Lee Higgins

@Depthperpixel

AI augmented Flutter dev. We have entered the age of AI. What a time to be alive! #flutter #flutterDev

Full duration and full thrust 33-engine static fire with Super Heavy V3

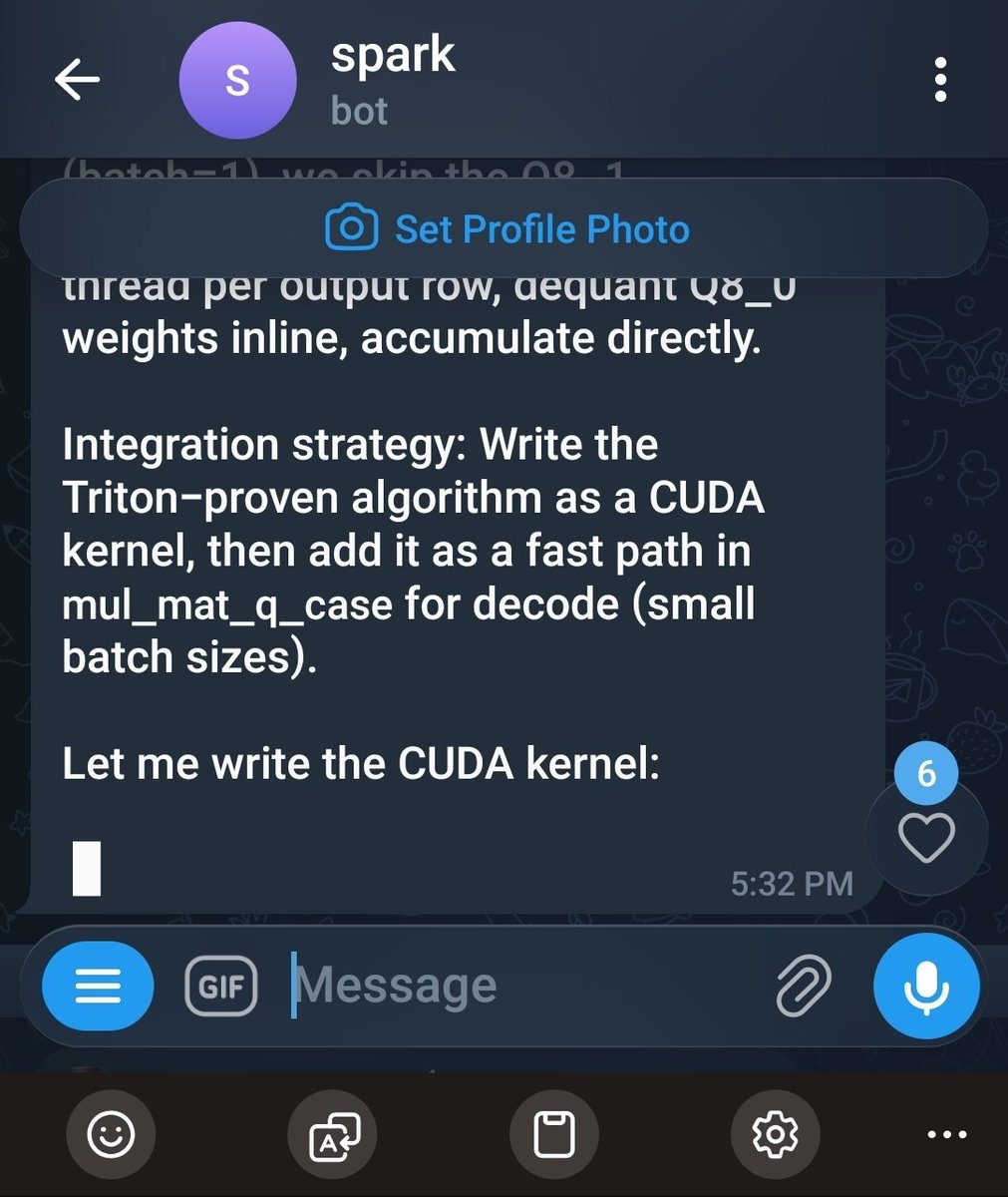

my dgx spark is writing custom CUDA kernels to make itself faster. let that sink in.

hermes agent running qwen 3.6 27B Q8 autonomously decided to port its own triton kernel to native CUDA C++ for llama.cpp integration. it understood the dispatch chain. studied the mmq kernel structure. now it's writing the port itself.

this machine is literally optimizing its own inference pipeline. no human in the loop.

i set a /goal last night and woke up to a 12.91x speedup on SSM and 9.66x on Q8 matmul. now it wants another 2-3x through FP8 tensor cores.

local ai. autonomous agents. self-improving inference.

this is not science fiction. this is my friday.

Introducing SubQ - a major breakthrough in LLM intelligence.

It is the first model built on a fully sub-quadratic sparse-attention architecture (SSA), and the first frontier model with a 12 million token context window, which is:

- 52x faster than FlashAttention at 1MM tokens
- Less than 5% of the cost of Opus

Transformer-based LLMs waste compute by processing every possible relationship between words (standard attention). Only a small fraction actually matter. @subquadratic finds and focuses only on the ones that do.

That's nearly 1,000x less compute and a new way for LLMs to scale.
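
To make the "every possible relationship" point concrete, here is a rough sketch of how the number of scored query/key pairs differs between standard attention and a sparse variant. The post does not say how SubQ picks which relationships to keep, so the sliding window below is only a stand-in assumption, and the names `dense_attention`, `windowed_attention` and `window` are made up for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def dense_attention(q, k, v):
    """Standard attention: every query scores every key (n * n pairs)."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores) @ v, scores.size

def windowed_attention(q, k, v, window):
    """Illustrative sparse attention (an assumption, not SubQ's method):
    each query scores only the `window` most recent keys, itself included."""
    n, d = q.shape
    out = np.zeros_like(v)
    pairs = 0
    for i in range(n):
        lo = max(0, i - window + 1)
        s = q[i] @ k[lo:i + 1].T / np.sqrt(d)
        out[i] = softmax(s) @ v[lo:i + 1]
        pairs += i + 1 - lo  # count the scores computed for this query
    return out, pairs

rng = np.random.default_rng(0)
n, d = 1024, 16
q, k, v = (rng.standard_normal((n, d)) for _ in range(3))

_, dense_pairs = dense_attention(q, k, v)
_, sparse_pairs = windowed_attention(q, k, v, window=32)
print(dense_pairs, sparse_pairs, dense_pairs / sparse_pairs)
```

With a fixed window the pair count grows roughly as n times the window instead of n squared, so the gap widens as the context gets longer. Whether a given sparse pattern keeps the relationships that matter is a separate question this sketch does not address.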