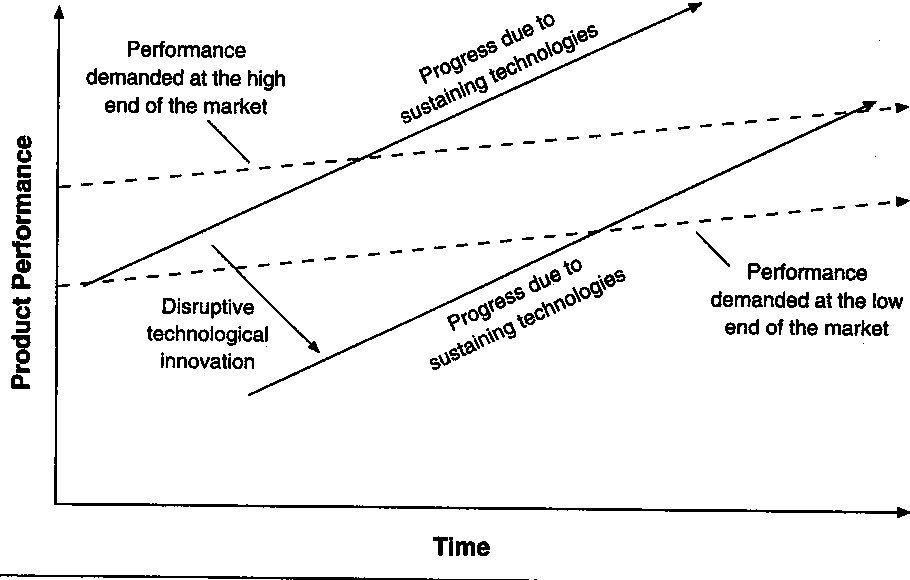

For anyone in AI, it can be very useful to track AI progress through the lens of disruptive innovation, the theory popularized by Clayton Christensen.

As a reminder, in disruptive innovation a new technology or product initially seems inferior for most of the market's needs, but it is cheap and simple enough to serve the lowest-end use cases.

At first it is perceived as not good enough for the more advanced use cases, and the most important customers reject or ignore it. Then, over time, the technology improves and incrementally solves more and more problems for customers. Eventually, after enough cycles of improvement, the new innovation in most cases exceeds all of the customers' needs, often at a lower price point or with better service. By then, of course, it's far too late for incumbents that didn't respond.

In the case of AI, it's useful to look at the rate of improvement in both the underlying models *and* the applications that use these models. The two improve at slightly different rates, since there's almost always a lag between an AI model breakthrough and an applied use case (e.g. an LLM may be good at generating code, but an AI code editor still has to integrate that model and build features that make it useful).

On the model front, the rate of improvement has clearly been incredible. In just the past few years, we've gone from LLMs that were only useful for basic text generation, with a high rate of made-up facts, to advanced reasoning models that are good at math and logic and can complete entire tasks using agentic workflows. We've seen similarly rapid improvement in audio, video, and images, all of which have changed profoundly in the past few years. These models now perform at or above the level of most humans on a number of task types, and major improvements are still arriving regularly.

Thus, we can expect the same kind of improvement to eventually happen at the applied AI layer, usually with a bit of lag behind the models. Take, for instance, the early AI breakthroughs that led to autonomous cars. At first the goal seemed implausible, but these cars are now ubiquitous in SF and will probably be everywhere within the next decade. We're seeing, or will see, similar curves in the applied AI layer with AI coding software, customer service, legal work, healthcare services, new search products, robotics, and more.

The biggest mistake when watching a disruptive innovation cycle play out is to assume that because something can't be done with the technology today, it will remain that way in the future. In fact, some of the biggest opportunities will be in areas that specifically *can't* be fully solved by AI today, giving disruptors a chance to make early inroads in the parts of the market whose use cases are less complex. Then, over time, the disruptor simply keeps improving, riding the rate of model improvement to take over more of the market.

Ultimately, the key is to not mistake what is possible today with what is possible tomorrow, and plan accordingly.