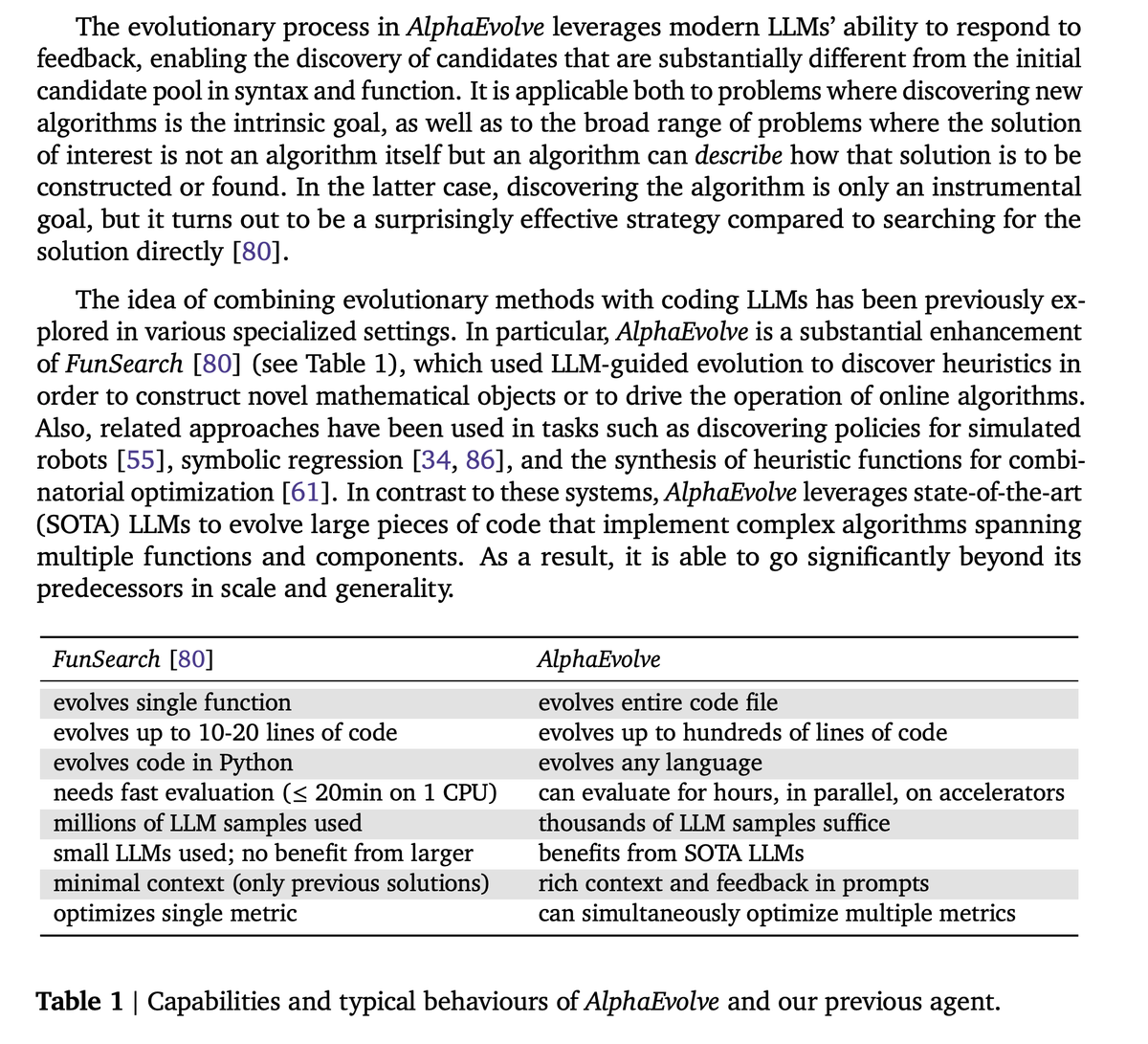

Hsing-Huan Chung

@HsingHuan

PhD Student @ UT Austin

We raised a $28M seed round from Threshold Ventures, AIX Ventures, and NVentures (Nvidia's venture capital arm), alongside 10+ unicorn founders and top AI researchers, to build reasoning models that generate real-time simulations and games. Models are bottlenecked by practical simulations that can act as Reinforcement Learning environments. Human self-expression is bounded by tools that let us create alternate realities. At Moonlake, we are building a future where anyone can create interactive worlds, bring their child-like wonder to life, learn within them, and most importantly, share experiences with people we care about. More in 🧵

Exploring task vectors: Not just for text LLMs learning new languages (arxiv.org/abs/2310.04799), but also helpful for speech models. Train with domain-specific synthetic data, then adapt using a task vector for real speech (arxiv.org/abs/2406.02925).
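
A rough sketch of the task-vector idea in the post above, not the papers' actual code. It assumes a task vector is the element-wise difference between two sets of weights, and that the synthetic-to-real vector comes from a source domain where both synthetic and real speech exist. The toy weight dicts, the parameter names, and the `scale` factor are all hypothetical.

```python
from typing import Dict, List

# Hypothetical stand-in for a model checkpoint: parameter name -> flat weights.
Weights = Dict[str, List[float]]


def task_vector(finetuned: Weights, base: Weights) -> Weights:
    """Element-wise difference (finetuned - base) for every parameter."""
    return {
        name: [f - b for f, b in zip(finetuned[name], base[name])]
        for name in base
    }


def apply_task_vector(weights: Weights, vector: Weights, scale: float = 1.0) -> Weights:
    """Add a scaled task vector to a set of weights."""
    return {
        name: [w + scale * v for w, v in zip(weights[name], vector[name])]
        for name in weights
    }


# Toy numbers only (assumed for illustration).
source_synthetic = {"layer.w": [1.0, 2.0], "layer.b": [0.5]}  # source domain, synthetic speech
source_real = {"layer.w": [1.5, 1.0], "layer.b": [1.0]}       # source domain, real speech
target_synthetic = {"layer.w": [2.0, 4.0], "layer.b": [0.0]}  # target domain, synthetic only

# Direction in weight space that moves a model from synthetic toward real speech.
syn2real = task_vector(source_real, source_synthetic)

# Shift the target-domain model along that direction.
target_adapted = apply_task_vector(target_synthetic, syn2real, scale=0.5)
print(target_adapted)
```

With real checkpoints, the same arithmetic would run over each tensor in the model's parameter dictionary, and `scale` would typically be tuned on held-out data.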