Jingwei Zuo✈️ICLR 2026🇧🇷

315 posts

@JingweiZuo

Principal Researcher @tiiuae, Falcon LLM 🦅 https://t.co/JGy0M8dDC5 | Opinions are my own.

Abu Dhabi · Joined November 2018

406 Following · 175 Followers

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

We just released something new: Luce PFlash

Long-context prefill is a silent killer for throughput speed. llama.cpp takes ~257 seconds to prefill 128K tokens of Qwen3.6-27B on a single RTX 3090. So we tried to solve the problem.

A small Qwen3-0.6B drafter loads in-process, scores token importance across the whole prompt, and the heavy 27B target only prefills the spans that matter. 128K prompt in 24.8 seconds, ~10.4x faster TTFT, NIAH retrieval preserved at every measured context.

It is a clean C++/CUDA port of FlashPrefill wired through Block-Sparse Attention, with a custom Qwen3-0.6B BF16 forward so drafter and target share one ggml allocator. The whole thing is a single daemon command (compress) in front of the existing dflash spec-decode stack.

More details here: github.com/Luce-Org/luceb…

Sandro@pupposandro
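
To make the drafter-then-target idea in the post above concrete, here is a minimal sketch of block-level span selection. It is not Luce PFlash's actual algorithm: the function name `select_spans`, the mean-score aggregation, the `keep_ratio` budget, and the rule of always keeping the first and last block are all assumptions made for illustration. The only figures taken from the post are the 257 s and 24.8 s prefill times.

```python
def select_spans(scores, block_size, keep_ratio):
    """Pick the token spans a large target model would prefill.

    scores      -- per-token importance from a small drafter (hypothetical input)
    block_size  -- tokens per block
    keep_ratio  -- fraction of blocks to keep (assumed budget knob)
    """
    n = len(scores)
    blocks = [(s, min(s + block_size, n)) for s in range(0, n, block_size)]
    block_score = [sum(scores[s:e]) / (e - s) for s, e in blocks]

    # Assumption: always keep the first and last block (prompt head and tail).
    forced = {0, len(blocks) - 1}
    budget = max(round(len(blocks) * keep_ratio), len(forced))

    kept = set(forced)
    ranked = sorted(range(len(blocks)), key=lambda i: block_score[i], reverse=True)
    for i in ranked:
        if len(kept) >= budget:
            break
        kept.add(i)

    # Merge adjacent kept blocks into contiguous spans.
    spans = []
    for i in sorted(kept):
        s, e = blocks[i]
        if spans and spans[-1][1] == s:
            spans[-1] = (spans[-1][0], e)
        else:
            spans.append((s, e))
    return spans


# Toy prompt: 16 tokens, one "needle" token at position 9.
scores = [0.0] * 16
scores[9] = 1.0
spans = select_spans(scores, block_size=4, keep_ratio=0.75)

# Speedup implied by the two timings quoted in the post.
speedup = 257 / 24.8
```

With the toy scores, the block containing the needle is kept alongside the head and tail blocks, and the quoted timings work out to roughly the 10.4x figure in the post.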

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

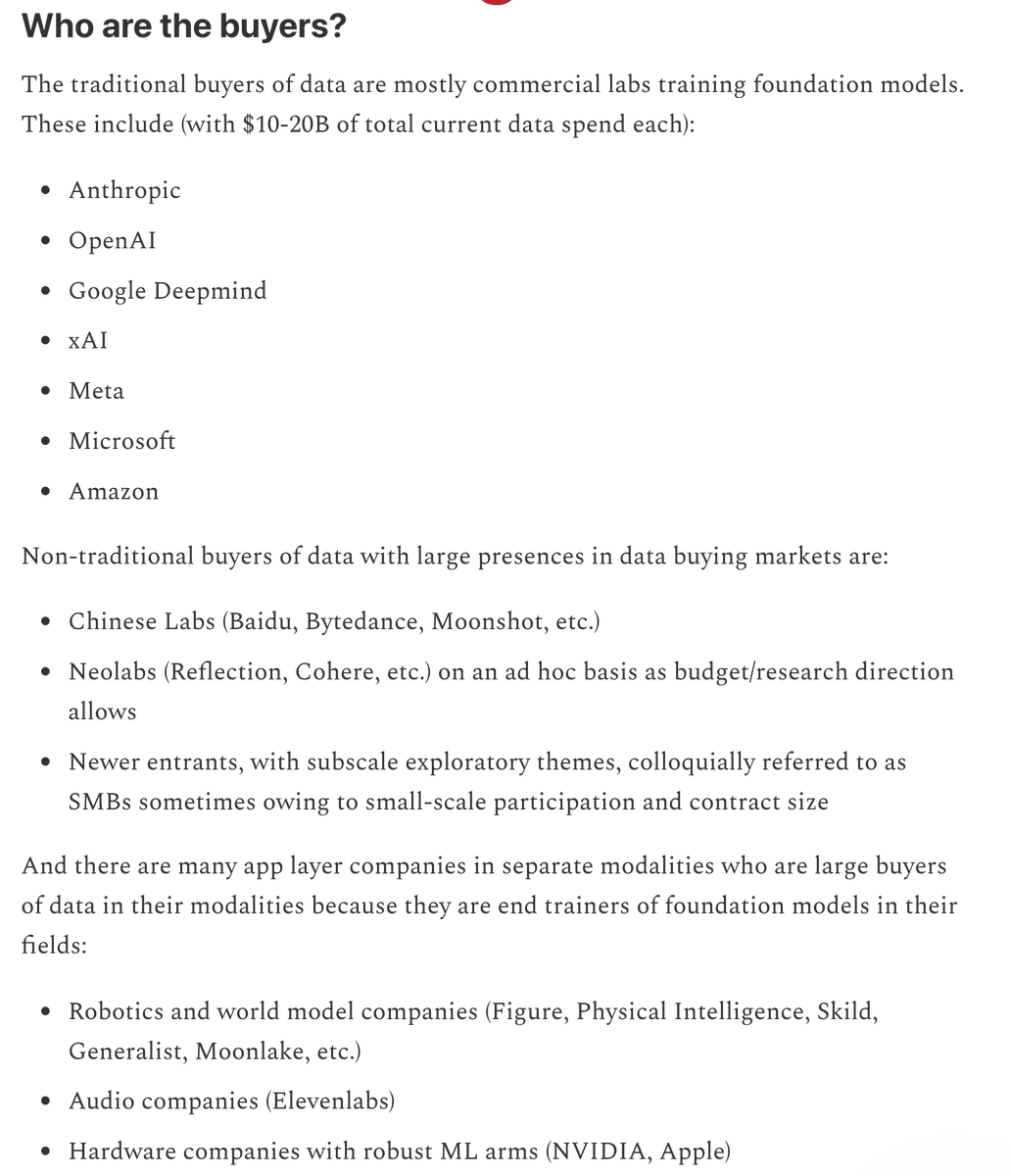

On data markets:

A while ago, Anthropic said that they would be spending a billion dollars this year on RL data. This year, that amount will be far exceeded, with good data rarely being turned down for budget concerns. We can expect OpenAI to be of a similar mindset, although the window for banal data projects serviced by the likes of Mercor is rumored to be closing entirely this year. DeepMind, Meta, Microsoft, Amazon, and xAI are known to be N-1 labs who may buy datasets already saturated by the likes of Anthropic, or buy RL environments in light of not having a system like Tundra in Anthropic.

The TAM is still 10s of billions if not more and the raw aggregate spent on data will only continue to increase.

But one must remember what is bought when data is sold, because few today can really differentiate a Mercor/Handshake from a Mechanize/Surge. Data is valuable to frontier labs based on how easily it can be used to improve frontier models. To show this capability, it matters whether teams selling data can show how directly it can be used to hillclimb models, how much frontier SOTA models struggle on its benchmarks, and how much trouble they can save the frontier lab in its continual acquisition. Selling data therefore resembles selling outcomes far more than selling a reusable product. That is why, when evaluating RL environment companies, one must obsess over the scalable means of producing internal systems that help end model trainers produce outcomes, rather than fixate on the data itself.

In this way, the TAM of data markets is actually extremely greenfield and growing, because few teams have the sophistication for research services and the scale for on-demand, consistently QA'ed data. It is the semblance of this product with which Mercor was able to overtake Scale, and the semblance of this product which many newer upstarts are painting as an argument to chip away at Mercor/Handshake/Surge's lunch.

From the April edition of my State of Data on Substack:

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

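OpenAI published a technical blog post that seriously investigates an absurd question: why do its models increasingly love saying "goblin" and "gremlin"?

The issue was first noticed after GPT-5.1 launched last November. Users reported that the model sounded overly familiar, and an internal check found that conversations containing "goblin" had surged 175% compared with before, while "gremlin" was up 52%. The share was still small at the time, so nobody took it very seriously.

A few months later GPT-5.4 launched, goblins were everywhere, and neither users nor employees could stand it. Only then did OpenAI investigate in earnest, and it eventually pinned down the culprit: ChatGPT's personality customization feature.

ChatGPT offers eight selectable personalities, one of which is called "Nerdy". When this personality was trained, the reward model was set up to encourage "playful, fun expression", which inadvertently gave higher scores to replies containing fantasy-creature metaphors. The model quickly learned a shortcut: mention goblins, get a high score.

The problem is that the habit did not stay put inside the Nerdy personality. The data shows that Nerdy accounted for only 2.5% of all ChatGPT responses yet contributed 66.7% of "goblin" occurrences. From GPT-5.2 to GPT-5.4, the goblin rate under the Nerdy personality jumped 3881%. Worse, goblins were rising in step even in conversations without the Nerdy personality prompt.

OpenAI's explanation is a classic feedback loop: reinforcement learning first rewarded this style within the Nerdy personality, then model-generated replies containing goblins were collected into the next round of training data, which made the model even more inclined to output goblins, and the loop kept amplifying. Beyond goblins, raccoons, trolls, ogres, and pigeons were also found to be "tic words" (verbal tics) produced by the same mechanism.

[Note: "tic" is originally a medical term for an involuntary repetitive movement or vocalization; OpenAI borrows it here to describe an uncontrolled language habit the model has picked up.]

On the fix: in March this year OpenAI retired the Nerdy personality, removed the related reward signal, and filtered creature words out of the training data. But GPT-5.5 training had started before the root cause was found, so the new model still ships with the goblin habit. The current stopgap is to suppress it through the system prompt in Codex (OpenAI's coding tool). The blog even includes a command-line snippet showing how to remove the goblin-suppression instruction and "let the gremlins run free".

On the surface the post is about a funny bug, but underneath it exposes a core difficulty in AI training: every tiny reward signal you give a model can be amplified and generalized in places you do not know about. A personality trained for only 2.5% of users ended up contaminating the whole model's language habits.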

OpenAI@OpenAI

We’re talking about Goblins. openai.com/index/where-th…
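
The percentages quoted in the summary above are easier to compare once converted to ratios. The short calculation below is only a worked-arithmetic sketch: it assumes the 2.5% and 66.7% figures are shares of the same population of responses, and the helper name `pct_increase_to_multiplier` is made up for illustration.

```python
def pct_increase_to_multiplier(pct):
    """A +X% increase means the new value is (1 + X/100) times the old one."""
    return 1 + pct / 100


# Figures quoted in the post.
nerdy_share_of_responses = 0.025   # Nerdy is 2.5% of responses
nerdy_share_of_goblins = 0.667     # but 66.7% of "goblin" occurrences

# How over-represented Nerdy is among goblin mentions.
over_representation = nerdy_share_of_goblins / nerdy_share_of_responses

# Per-response goblin rate under Nerdy relative to all other responses,
# assuming both shares are measured over the same set of responses.
other_rate = (1 - nerdy_share_of_goblins) / (1 - nerdy_share_of_responses)
relative_rate = over_representation / other_rate

goblin_growth = pct_increase_to_multiplier(175)        # "goblin" after GPT-5.1
gremlin_growth = pct_increase_to_multiplier(52)        # "gremlin" after GPT-5.1
nerdy_goblin_growth = pct_increase_to_multiplier(3881) # Nerdy, GPT-5.2 to GPT-5.4
```

Under that assumption, a Nerdy response was roughly 78 times as likely to mention goblins as a non-Nerdy one, and a 3881% jump corresponds to about a 40-fold rate.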

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

🚀 Introducing FlashQLA: high-performance linear attention kernels built on TileLang.

⚡ 2–3× forward speedup. 2× backward speedup.

💻 Purpose-built for agentic AI on your personal devices.

💡Key insights:

1. Gate-driven automatic intra-card CP.

2. Hardware-friendly algebraic reformulation.

3. TileLang fused warp-specialized kernels.

FlashQLA boosts SM utilization via automatic intra-device CP. The gains are especially pronounced for TP setups, small models, and long-context workloads.

Instead of fusing the entire GDN flow into a single kernel, we split it into two kernels optimized for CP and backward efficiency. At large batch sizes this incurs extra memory I/O overhead vs. a fully fused approach, but it delivers better real-world performance on edge devices and long-context workloads.

The backward pass was the hardest part: we built a 16-stage warp-specialized pipeline under extremely tight on-chip memory constraints, ultimately achieving 2×+ kernel-level speedups.

We hope this is useful to the community!🫶🫶

Learn more:

📖 Blog: qwen.ai/blog?id=flashq…

💻 Code: github.com/QwenLM/FlashQLA
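
Why a gated linear-attention recurrence can be split along the sequence at all is worth a small illustration. The NumPy sketch below is not FlashQLA's kernel and says nothing about its "gate-driven" heuristics; it assumes "GDN" refers to Gated DeltaNet and uses the commonly cited gated delta rule, S_t = S_{t-1} · α_t (I − β_t k_t k_tᵀ) + β_t v_t k_tᵀ with o_t = S_t q_t. Because each step is an affine map of the state, a chunk can be summarized as one pair (A, B) independently of the others, and the chunk start states are then recovered with a short scan. All function names are hypothetical.

```python
import numpy as np


def step_matrices(k_t, v_t, alpha_t, beta_t):
    """One gated-delta step as an affine map S -> S @ A_t + B_t."""
    d_k = k_t.shape[0]
    A_t = alpha_t * (np.eye(d_k) - beta_t * np.outer(k_t, k_t))
    B_t = beta_t * np.outer(v_t, k_t)
    return A_t, B_t


def run_recurrent(q, k, v, alpha, beta, S0):
    """Token-by-token reference starting from state S0."""
    S = S0.copy()
    out = np.zeros((k.shape[0], v.shape[1]))
    for t in range(k.shape[0]):
        A_t, B_t = step_matrices(k[t], v[t], alpha[t], beta[t])
        S = S @ A_t + B_t
        out[t] = S @ q[t]
    return out, S


def chunk_summary(k, v, alpha, beta):
    """Compose a chunk's steps into one affine map (A, B); needs no start state."""
    A = np.eye(k.shape[1])
    B = np.zeros((v.shape[1], k.shape[1]))
    for t in range(k.shape[0]):
        A_t, B_t = step_matrices(k[t], v[t], alpha[t], beta[t])
        A, B = A @ A_t, B @ A_t + B_t
    return A, B


def run_chunked(q, k, v, alpha, beta, chunk):
    T, d_k, d_v = k.shape[0], k.shape[1], v.shape[1]
    bounds = [(s, min(s + chunk, T)) for s in range(0, T, chunk)]
    # Phase 1: per-chunk summaries, independent of each other (parallelizable).
    summaries = [chunk_summary(k[s:e], v[s:e], alpha[s:e], beta[s:e]) for s, e in bounds]
    # Phase 2: short sequential scan over chunks to get each start state.
    starts = [np.zeros((d_v, d_k))]
    for A, B in summaries[:-1]:
        starts.append(starts[-1] @ A + B)
    # Phase 3: per-chunk outputs, again independent given the start states.
    outs = [run_recurrent(q[s:e], k[s:e], v[s:e], alpha[s:e], beta[s:e], S0)[0]
            for (s, e), S0 in zip(bounds, starts)]
    return np.concatenate(outs, axis=0)


rng = np.random.default_rng(0)
T, d_k, d_v = 64, 8, 4
q = rng.standard_normal((T, d_k))
k = rng.standard_normal((T, d_k))
k /= np.linalg.norm(k, axis=1, keepdims=True)  # unit-norm keys
v = rng.standard_normal((T, d_v))
alpha = rng.uniform(0.8, 1.0, T)  # decay gates
beta = rng.uniform(0.0, 1.0, T)   # write strengths

ref, _ = run_recurrent(q, k, v, alpha, beta, np.zeros((d_v, d_k)))
par = run_chunked(q, k, v, alpha, beta, chunk=16)
```

The chunked result matches the sequential reference up to floating-point error. The extra pass over each chunk in this sketch is the kind of additional work and memory traffic the post describes as the cost of a two-kernel split.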

@PengmingWang The iteration speed is truly the key nowadays, congrats!

Congrats on the launch! Really nice release!

Marah Abdin@marah_i_abdin

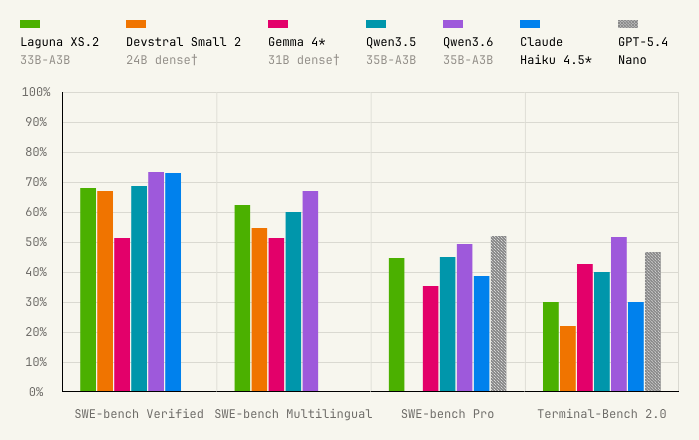

About a year ago, I took a leap into the startup world and joined Poolside after a long, cozy run at MSR. Excited to see it break the ice with two first public model releases:

- Laguna-XS.2: 33B-A3B MoE (**OSS**)
- Laguna-M.1: 225B-A23B MoE (API)

A ton of work went into this… and more to come!! 🙌🚀

@Louis9687221579 @yoavgo thanks for sharing, in their graph, it looks like after 1T tokens you can safely repeat data

The big dilemma with teaching an "LLM course" is that it is really easy to get drawn into teaching the various technical things like efficiency tricks, attention variants, PPO vs GRPO, etc etc. But the real "meat" is not there, but in the data: data for pre-training, for mid-training, for SFT, for RL and for "reasoning", synthetic data, curated data, annotated data... cleaning, evaluating, improving, mixing, ... lots of stuff.

but "data" is so much harder to teach: it is not "mathematic" or "algorithmic" like the technical things, and it is not clear what is the teachable thing there. it is also a lot less transparent than the technical topics, both because it is semi-secret, and also because it is also not appealing for publishing, for roughly the same reasons it is not appealing for teaching.

so, what would you teach about data? what are the key lessons and insights one should know? any good papers or resources? good existing classes? blogs? hit me with what you have

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

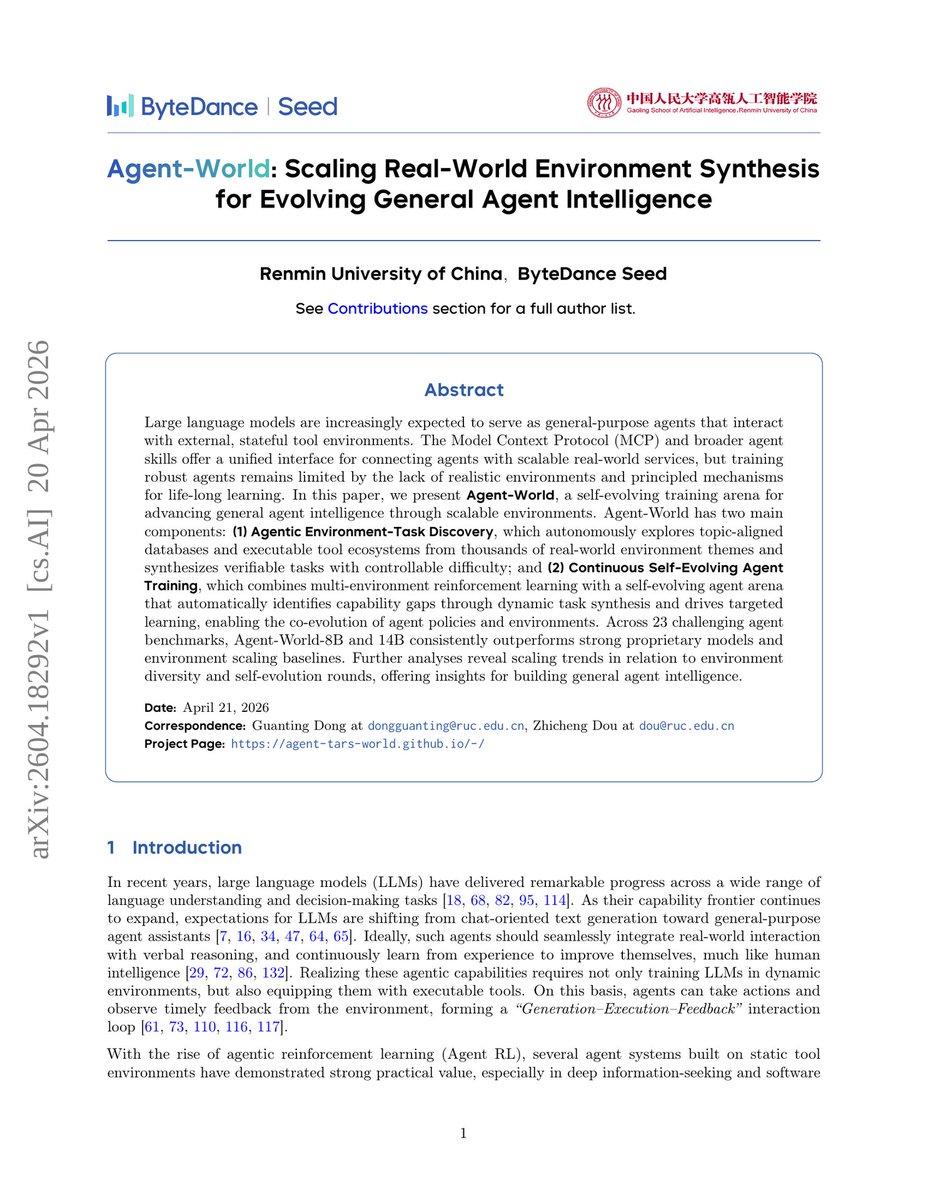

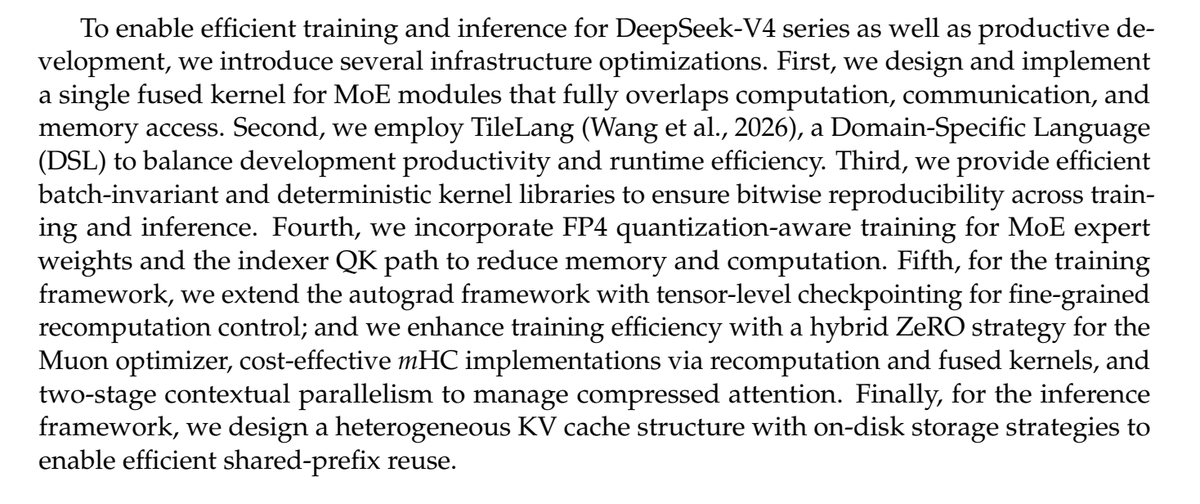

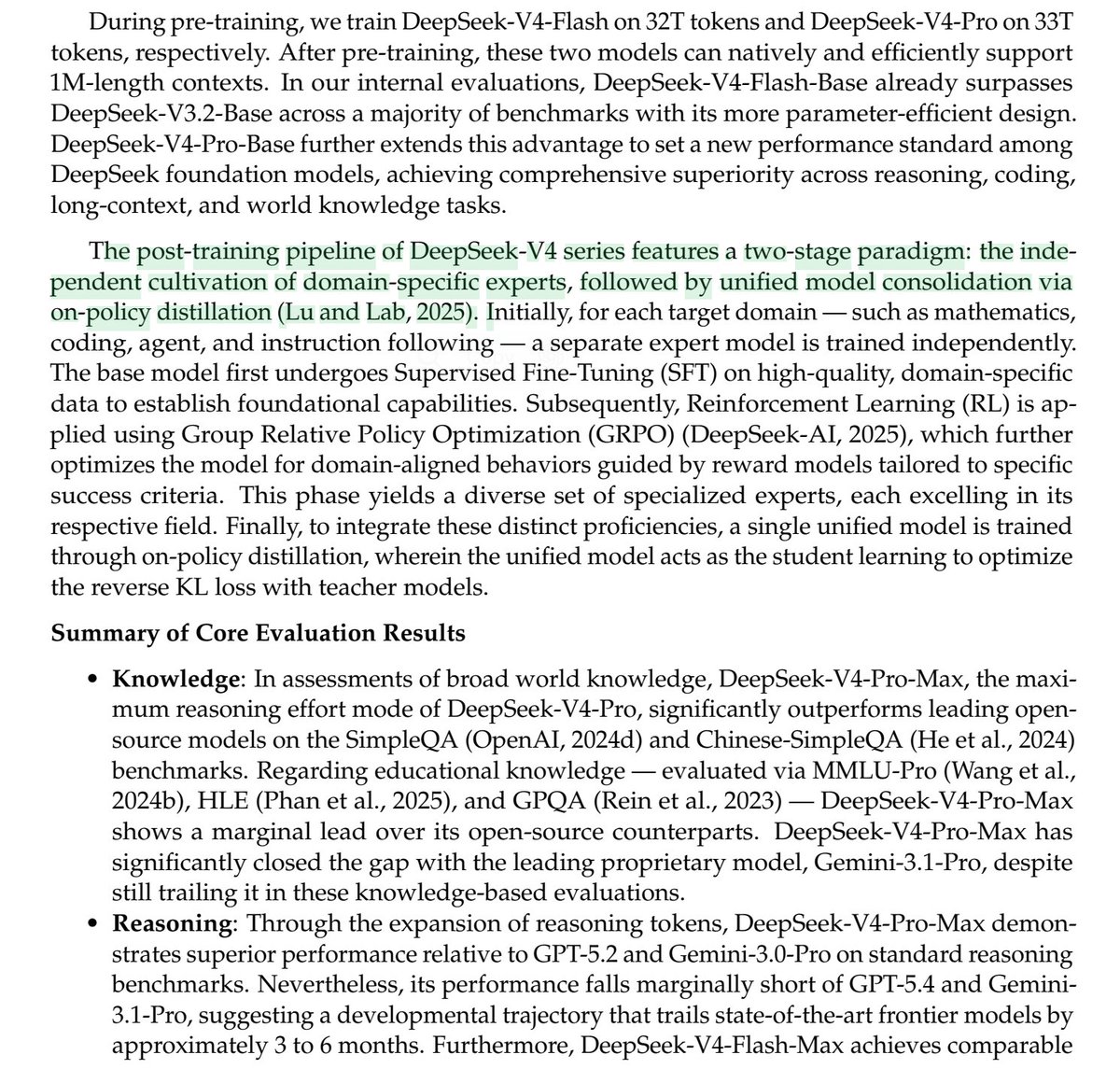

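The training section on "agent capabilities" in the DeepSeek V4 paper is worth reading and studying closely.

It is also hard not to admire how solid DeepSeek's engineering remains, including designing its own DSL and building the DSec sandbox.

One clever design choice: DeepSeek-V4's post-training has two stages. Multiple domain-specific experts are first trained independently, then merged into a unified model through OPD.

Here are some of V4's ideas for training agent capabilities:

1. Large amounts of agentic data are injected as early as pre-training to strengthen agentic capabilities. The paper states explicitly that, to improve coding ability, DeepSeek-V4 added agentic data during the mid-training stage.

- The base model gets to see longer task trajectories.

- The model becomes familiar with patterns such as code, commands, environment feedback, and file edits.

- Later agent SFT/RL starts from a better initialization, instead of forcing tool calling onto a pure chat model.

2. Train multiple "domain experts". The first post-training stage is called Specialist Training. According to the paper, separate expert models are trained for target domains such as math, code, agents, and instruction following.

3. Use a Generative Reward Model for hard-to-verify tasks. Traditional RLHF usually requires training a scalar reward model. The paper says DeepSeek-V4 no longer relies on a traditional scalar reward model in post-training; instead it constructs rubric-guided RL data for hard-to-verify tasks and uses a Generative Reward Model (GRM) to evaluate policy trajectories.

4. The tool-calling protocol is redesigned as DSML/XML. V4 introduces a new tool-call schema in a self-designed DSL format, reducing escaping failures and tool-call errors.

5. Interleaved Thinking keeps the full reasoning trace in tool scenarios. In tool-calling scenarios, the reasoning content of the entire conversation is preserved in full, including across user message boundaries.

6. Reasoning Effort is trained per mode, since not every agent task needs maximum reasoning. Simple tool selection is faster with Non-think; software engineering, search, and long-document tasks can use High/Max, trading off cost against success rate.

7. Quick Instruction lowers the cost of an agent's up-front decisions.

8. Finally, OPD (multi-teacher On-Policy Distillation) merges the experts into a unified model.

9. DSec provides production-grade sandbox support. It is the production-grade sandbox platform V4 built for agentic AI post-training and evaluation; it runs on the 3FS distributed file system and can manage hundreds of thousands of concurrent sandbox instances.

10. RL/OPD rollout is also optimized specifically for long agent trajectories.

11. Build an in-house agent benchmark. They constructed an internal R&D coding benchmark: about 200 real tasks collected from 50+ internal engineers, covering feature development, bug fixing, refactoring, and diagnostics, with a tech stack including PyTorch, CUDA, Rust, and C++. After filtering, 30 tasks were kept as the evaluation set.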
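
The merge step in item 8 (multi-teacher on-policy distillation) can be sketched roughly as follows. The post does not give the paper's actual objective, so this is an assumption-laden illustration: it uses per-token reverse KL between student and teacher, a common choice for on-policy distillation, and picks the teacher by the trajectory's domain. The names `opd_loss` and `teachers`, and the toy logits, are hypothetical; the logits are assumed to be evaluated on a trajectory sampled from the student.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one token position."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def reverse_kl(student_logits, teacher_logits):
    """KL(student || teacher) at one position: sum_x p_s(x) (log p_s(x) - log p_t(x))."""
    ls = log_softmax(student_logits)
    lt = log_softmax(teacher_logits)
    return sum(math.exp(a) * (a - b) for a, b in zip(ls, lt))


def opd_loss(student_steps, teachers, domain):
    """Mean per-token reverse KL against the expert for this trajectory's domain.

    student_steps -- student logits at each position of a student-sampled trajectory
    teachers      -- dict: domain -> that expert's logits on the same trajectory
    """
    teacher_steps = teachers[domain]
    kls = [reverse_kl(s, t) for s, t in zip(student_steps, teacher_steps)]
    return sum(kls) / len(kls)


# Toy trajectory with 2 positions and a 3-token vocabulary (made-up numbers).
student_steps = [[2.0, 0.5, -1.0], [0.1, 0.2, 0.3]]
teachers = {
    "code": [[2.5, 0.0, -1.0], [0.0, 0.0, 1.0]],   # disagrees with the student
    "math": [[2.0, 0.5, -1.0], [0.1, 0.2, 0.3]],   # identical to the student
}
loss_code = opd_loss(student_steps, teachers, "code")
loss_math = opd_loss(student_steps, teachers, "math")
```

The loss is zero when the student already matches the selected expert and positive otherwise, so minimizing it pulls the unified model toward each specialist on that specialist's own domain.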

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

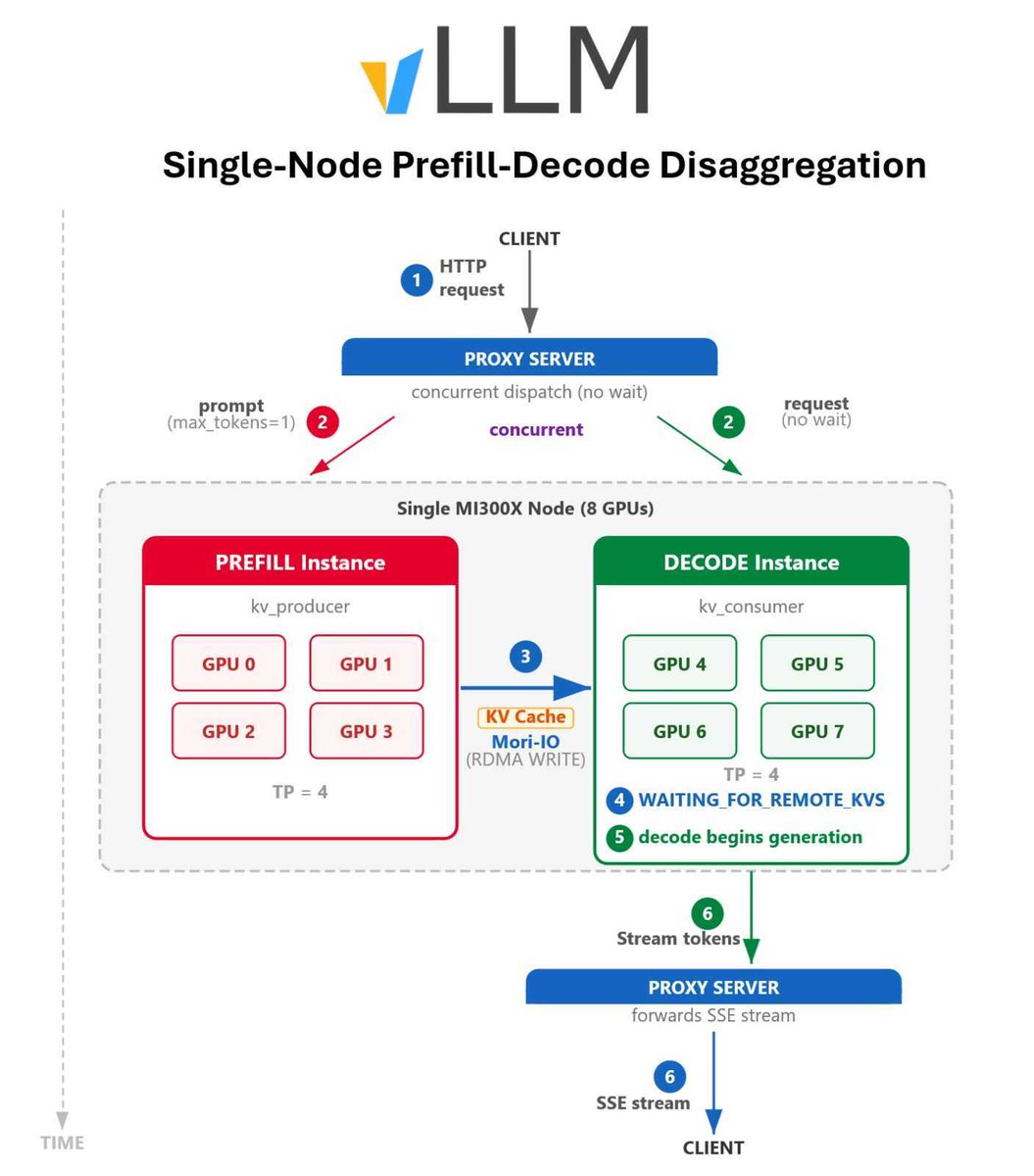

PD disaggregation… on a single node?

@AMD and @EmbeddedLLM just dropped a blog post on the MORI-IO KV Connector and it’s giving vLLM 2.5× higher goodput.

Perfect intro to PD disaggregation, explains the fundamentals with real single-node results.

Stable buttery decode even at max load 🔥

This is huge for single-box inference.

Full blog + benchmarks + code:

vllm.ai/blog/moriio-kv…
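
The post above reports "goodput" without defining it. A minimal sketch follows, assuming the common serving-literature meaning: requests completed per second that meet both a time-to-first-token (TTFT) and a time-per-output-token (TPOT) latency target. The linked blog's exact definition and thresholds are not given in the post, so the class name, function name, and SLO values here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RequestStat:
    ttft_s: float  # time to first token, dominated by prefill
    tpot_s: float  # mean time per output token, dominated by decode


def goodput(stats, window_s, ttft_slo_s, tpot_slo_s):
    """Requests per second that met BOTH latency targets within the window."""
    ok = sum(1 for r in stats if r.ttft_s <= ttft_slo_s and r.tpot_s <= tpot_slo_s)
    return ok / window_s


# Made-up measurements over a 2-second window.
stats = [
    RequestStat(ttft_s=0.30, tpot_s=0.020),  # meets both targets
    RequestStat(ttft_s=0.45, tpot_s=0.025),  # meets both targets
    RequestStat(ttft_s=0.40, tpot_s=0.090),  # decode stalled, misses TPOT
]
value = goodput(stats, window_s=2.0, ttft_slo_s=0.5, tpot_slo_s=0.05)
```

Under this reading, separating prefill from decode helps goodput when it keeps long prefills from stalling ongoing decodes, since a request that misses either target does not count.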

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted

We push Prefill/Decode disaggregation beyond a single cluster: cross-datacenter + heterogeneous hardware, unlocking the potential for significantly lower cost per token.

This was previously blocked by KV cache transfer overhead. The key enabler is our hybrid model (Kimi Linear), which reduces KV cache size and makes cross-DC PD practical.

Validated on a 20x scaled-up Kimi Linear model:

✅ 1.54× throughput

✅ 64% ↓ P90 TTFT

→ Directly translating into lower token cost.

More in Prefill-as-a-Service: arxiv.org/html/2604.1503…
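
A back-of-the-envelope calculation shows why KV cache size gates cross-datacenter prefill/decode separation. Every number below is hypothetical and is not Kimi Linear's real configuration: the sketch assumes a standard per-layer key/value cache in BF16, a hybrid model in which only one layer in four keeps a growing KV cache, and a 100 Gbit/s link. It also ignores the fixed-size state of the linear-attention layers.

```python
def kv_cache_bytes(seq_len, n_attn_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Keys plus values for every layer that keeps a per-token cache."""
    return 2 * seq_len * n_attn_layers * n_kv_heads * head_dim * bytes_per_elem


def transfer_seconds(n_bytes, link_gbps):
    """Ideal transfer time over a link of link_gbps gigabits per second."""
    return n_bytes * 8 / (link_gbps * 1e9)


SEQ_LEN = 131_072   # hypothetical 128K-token prompt
LAYERS = 48         # hypothetical layer count
KV_HEADS = 8        # hypothetical KV heads per layer
HEAD_DIM = 128      # hypothetical head dimension

# Full-attention model: every layer caches keys and values.
full = kv_cache_bytes(SEQ_LEN, LAYERS, KV_HEADS, HEAD_DIM)

# Hybrid model (assumed 1 in 4 layers uses full attention).
hybrid = kv_cache_bytes(SEQ_LEN, LAYERS // 4, KV_HEADS, HEAD_DIM)

full_gib = full / 2**30
hybrid_gib = hybrid / 2**30
full_s = transfer_seconds(full, link_gbps=100)
hybrid_s = transfer_seconds(hybrid, link_gbps=100)
```

With these made-up numbers the cache shrinks from 24 GiB to 6 GiB per request, and the ideal transfer time drops from about 2 seconds to about half a second, which is the kind of reduction the post credits with making cross-DC PD practical.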

Jingwei Zuo✈️ICLR 2026🇧🇷 retweeted