Joe Garde

@JoeGarde

“The single biggest problem in communication is the illusion that it has taken place.” I'm a cross-pollinator of ideas and technologies.

ANTHROPIC JUST EXPOSED HOW FAR BEHIND MOST FOUNDERS ARE IN BUILDING COMPANIES WITH AI AGENTS.

Not a chatbot guide. Not a prompt tutorial. A full workshop on how to architect, build, and deploy AI agents that run your business operations autonomously. From the team that built Claude. For free.

Here is what most people are missing about why this is different from every other AI workshop. Most workshops teach you how to use AI tools. This teaches you how to REPLACE business functions with them. Not replace individual tasks. Entire functions. Research. Content. Customer communication. Operations. Analytics. All running on agent systems that trigger autonomously, hand off between each other, and compound their output without a human initiating anything.

The founders who attended Anthropic's enterprise briefings on this material are already building companies with 3 to 5 person teams that operate at the output level of 50-person organizations. Now the same workshop is public. Free.

The gap between companies that understand how to architect multi-agent systems and companies that are still using AI as a chat tool is not closing. It is widening every single month. This workshop is the fastest path from the wrong side of that gap to the right one.

Bookmark this and watch it this weekend. Follow @cyrilXBT for every Anthropic release that changes how companies are built.

Square just announced ManagerBot this week. What does it actually feel like to manage restaurants with an AI agent? I wrote about it. The morning routine. The out-of-stocks. The espresso machine. Talking to your data instead of hunting for it. tableandledger.com/blog/managerbo…

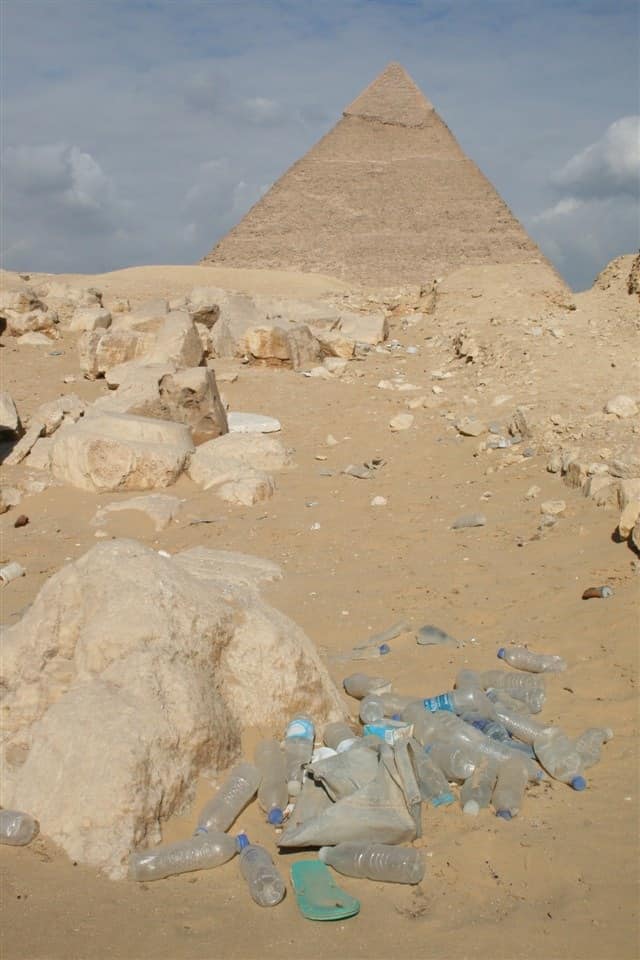

This is Gujjar Nullah in Karachi, Pakistan. A major storm water drain running through neighbourhoods, it carries sewage, runoff and heaps of garbage before joining the Lyari River and the Arabian Sea. Trash and encroachments choke the channel, making flooding and pollution worse, and clearing it remains a constant struggle.