John Baima

@JohnBaima

Dad, granddad, cyclist, software dude

Melania: The future of AI is personified. It will be formed in the shape of humans. Very soon, artificial intelligence will move from our mobile phones to humanoids that deliver utility. They fit well. Imagine a humanoid educator named Plato.

Private equity owns almost 500 hospitals across the U.S. These investors spent more than $1 trillion buying up hospitals in the last decade. Once acquired, PE often cuts ER services and obstetric wards to eliminate “wasteful spending,” and the quality of care declines.

Every dollar earned below $184,500 a year has a Social Security tax of 12.4%. Everything after that cap is exempt. If we lift this cap on the wealthiest earners, Social Security would be fully funded till 2070. The cap should not exist.
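The cap arithmetic can be checked with a short Python sketch. It assumes the post's figures: a combined 12.4% rate (6.2% paid by the employee plus 6.2% by the employer) and a $184,500 annual wage cap. It ignores self-employment rules and any other adjustments. The function names are illustrative only.

```python
from decimal import Decimal
from typing import Optional

# Figures from the post: 12.4% combined rate (6.2% employee + 6.2% employer),
# applied only to wages up to the annual cap.
SS_RATE = Decimal("0.124")
WAGE_CAP = Decimal("184500")


def social_security_tax(wages: Decimal, cap: Optional[Decimal] = WAGE_CAP) -> Decimal:
    """Combined Social Security tax on annual wages; cap=None models lifting the cap."""
    taxable = wages if cap is None else min(wages, cap)
    return (taxable * SS_RATE).quantize(Decimal("0.01"))


def effective_rate(wages: Decimal, cap: Optional[Decimal] = WAGE_CAP) -> Decimal:
    """Tax paid as a fraction of total wages."""
    return social_security_tax(wages, cap) / wages


if __name__ == "__main__":
    for wages in (Decimal("60000"), Decimal("184500"), Decimal("1000000")):
        capped = social_security_tax(wages)
        uncapped = social_security_tax(wages, cap=None)
        print(f"${wages:,}: ${capped:,} with cap ({effective_rate(wages):.2%}), "
              f"${uncapped:,} without cap")
```

Under these assumptions, $60,000 in wages is taxed at the full 12.4% ($7,440.00). $1,000,000 in wages owes $22,878.00 with the cap, an effective rate of about 2.29%, versus $124,000.00 if the cap were lifted.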

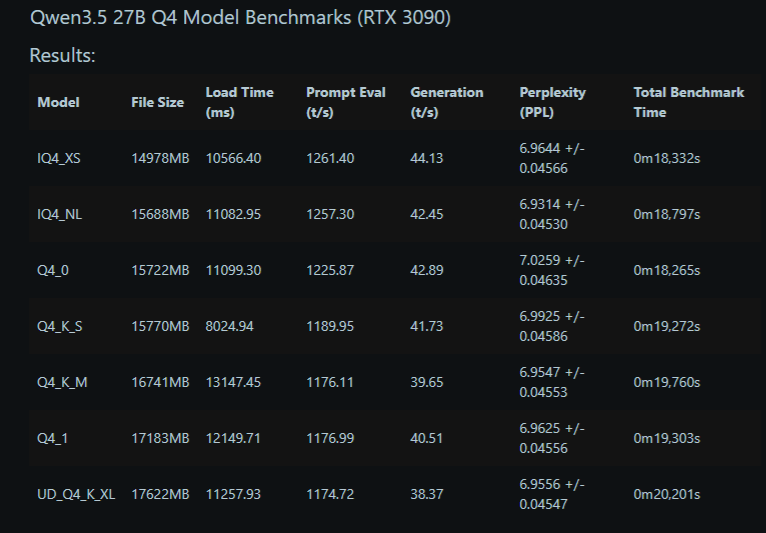

Prediction: We will have Claude Code + Opus 4.5-quality (not nerfed) models running locally at home on a single RTX PRO 6000 before the end of the year.

I don’t think people realize how much healthcare costs are driving big companies to fire and not hire. It costs them $30k per family per year for premiums and care. Most of that goes to the massive, vertically integrated insurance companies that send weekly bills that no one reviews in detail. And that doesn’t include the company overhead to deal with it all. It’s usually the 2nd largest expense after payroll, which is insane. It’s far easier to blame AI than it is to blame healthcare costs. Want to increase jobs and wages and improve affordability for every American? Break up the biggest insurance companies. Make them divest their non-insurance companies. They don’t need thousands of subsidiaries. That’s how they game and abuse the system and increase costs for all of us. Call your senator and tell them to support the BreakUp Big Medicine Bill by @HawleyMO and @SenWarren.

It's time for a moratorium on the construction of new AI data centers. My press conference with @RepAOC. twitter.com/i/broadcasts/1…

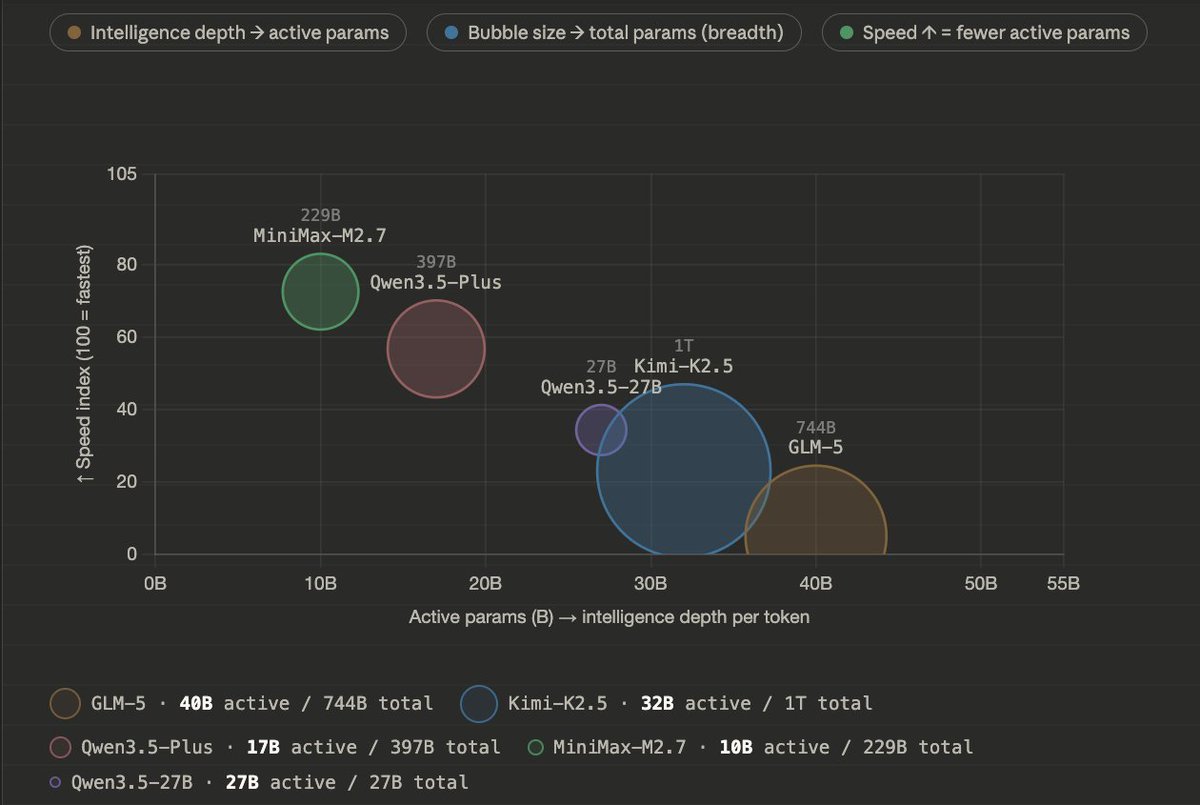

People are not lying when they say Qwen3.5-27B is incredibly capable.

1. Bubble size = total params (world knowledge, languages, skills)
2. X axis = active params (raw intelligence per token)
3. Y axis = tokens/s (speed of prefill and generation, i.e. decode)

- GLM-5 | 744B params | 40B active
- Kimi-K2.5 | 1T params | 32B active
- Qwen3.5-27B | 27B active params
- Qwen3.5-Plus | 397B params | 17B active
- MiniMax-M2.7 | 229B params | 10B active

MoEs can store much more world knowledge and a broader range of information. With a Mixture-of-Experts model you can scale up to 1T params, so you can train it on 20 trillion tokens or more and it learns more. But at runtime, only a small portion of those params is activated. Taking MiniMax-M2.5 as an example: only 10B params are active at a time, so while you use it you get the speed, and intelligence closer to that of nemotron-8B; it's just that MiniMax-M2.5 can know much more, and thus perform better.
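The total-versus-active distinction can be illustrated with a toy Python sketch. The parameter counts come from the list above. The router is one common top-k gating variant (softmax over the k highest router scores) applied to hypothetical scalar "experts". It is not the actual routing code of any of these models. Real MoE layers route each token separately at every layer, and shared weights such as attention and embeddings are always active, so the active count is not simply k/N of the total.

```python
import math
import random

# (total params, active params) in billions, as listed in the post.
MODELS = {
    "GLM-5": (744, 40),
    "Kimi-K2.5": (1000, 32),
    "Qwen3.5-Plus": (397, 17),
    "MiniMax-M2.7": (229, 10),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's weights that run for each token."""
    return active_b / total_b


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def top_k_route(router_logits, k):
    """Toy top-k gating: keep the k highest-scoring experts and renormalize
    their scores with a softmax. Only these k experts run for this token."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the outputs of the selected experts; the others stay idle."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


if __name__ == "__main__":
    for name, (total, active) in MODELS.items():
        print(f"{name}: {active}B of {total}B params active per token "
              f"({active_fraction(total, active):.1%})")

    # Hypothetical experts: expert a just multiplies its input by a.
    random.seed(0)
    experts = [lambda x, a=a: a * x for a in range(8)]
    logits = [random.gauss(0, 1) for _ in experts]
    print("routed to:", top_k_route(logits, k=2))
    print("output:", moe_forward(1.0, experts, logits, k=2))
```

Running it prints the per-token active share for each listed model, for example about 5.4% for GLM-5 (40B of 744B) and 4.4% for MiniMax-M2.7 (10B of 229B), then shows a single toy token being sent to just 2 of 8 experts.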

@mcuban @IngGuthrie #MedicareForAll would resolve that issue. Healthcare should not be connected to employment.