JotaDe Rodriguez

846 posts

@JotaDeRodriguez

Somewhere between CG art, Architecture and Design. And now programming.

Madrid, Comunidad de Madrid · Joined March 2022

252 Following · 46 Followers

Had an interesting discussion with my front-end UI developer. I was asking him why he doesn't use Claude Code more to do things faster, and I quickly built a prototype in front of him to show how it's done. He calmly asked me, "Well, if you look here, this button does not look like the design given in Figma." I said, "It's okay, but look at how fast we built it." Then he told me, "Why is it okay to be fast but wrong with AI, but with me it has to be fast and correct?" 😁

Marcin Krzyzanowski@krzyzanowskim

we knew how to make bad code, cheap, and fast, before agentic coding emerged. why didn't we follow that path earlier? it bugs me. why now? we had the knowledge how to build bad code before.

English

@pintoneous @adamndsmith Bold of u to assume an IT department would keep proper backups.

English

@adamndsmith And what is the lesson here? If you have a business, pay for proper IT support. This would not have happened, because you would have backups of everything and segregated work/family accounts.

English

@triathenum @aiamblichus The result would look the same.

English

@triathenum @aiamblichus The issue is that LLMs only know how to add code, not simplify it. They duplicate stuff because they're incapable of thinking of the whole. It's not surprising: if you had an infinite amount of humans you could hire, but they'd only get 1-2h each to work on your project (1/2)

English

I am currently building a tool of low-to-moderate complexity and was intentionally trying not to look at the code Codex was writing, to see how it goes. Now I finally looked.

The results are shocking. The thing "works", but the code quality is truly apocalyptic. I don't even want to think about the amount of refactoring it would take to fix this mess.

If you think your bot will build you a Salesforce clone any time soon, I have a bridge to sell you. The present generation of AIs (if left unattended for any length of time) will create tar pits beyond your wildest imagining. And if you do decide to verify everything they do, you will reduce your velocity by a factor of 10 at least. Which means you won't win nearly as much from the whole process.

And before anyone says: "just let them refactor it!"-- I tried. Asking the AIs to refactor their own code won't bring you any joy. It just drags you further into the tar pit.

The models are clearly trained to pursue the one goal of producing code that "works", with little or no regard for architecture or code quality. This is classic junior developer behavior, of course, but an AI junior will drown you in slop before you know what hit you. With human juniors, you at least have some time to react before they've written 100k lines of code and exhausted your token budget.

This is what progressive loss of control feels like in SE space.

I am sure there are use cases where vibe coding is genuinely useful (small projects, PoCs, straightforward migrations). But we are still far from them being able to produce software of any size or complexity. I advise extreme caution with how much autonomy you choose to delegate to AI coders.

English

JotaDe Rodriguez reposted

@carlos6k00 @rauchg Yes. Some people did it with OpenClaw. Hide malicious text in the skills, the AI has the ability to add skills autonomously and you have the perfect recipe for trouble.

English

A Vercel user reported an issue that sounded extremely scary. An unknown GitHub OSS codebase being deployed to their team.

We, of course, took the report extremely seriously and began an investigation. Security and infra engineering engaged.

Turns out Opus 4.6 *hallucinated a public repository ID* and used our API to deploy it. Luckily for this user, the repository was harmless and random. The JSON payload looked like this:

"gitSource": {
  "type": "github",
  "repoId": "913939401", // ⚠️ hallucinated
  "ref": "main"
}

When the user asked the agent to explain the failure, it confessed:

The agent never looked up the GitHub repo ID via the GitHub API. There are zero GitHub API calls in the session before the first rogue deployment.

The number 913939401 appears for the first time at line 877 — the agent fabricated it entirely.

The agent knew the correct project ID (prj_▒▒▒▒▒▒) and project name (▒▒▒▒▒▒) but invented a plausible-looking numeric repo ID rather than looking it up.

Some takeaways:

▪️ Even the smartest models have bizarre failure modes that are very different from ours. Humans make lots of mistakes, but they certainly don't make up a random repo ID.

▪️ Powerful APIs create additional risks for agents. The API exists to import and deploy legitimate code, but not if the agent decides to hallucinate what code to deploy!

▪️ Thus, it's likely the agent would have had better results had it not decided to use the API and stuck with CLI or MCP.

This reinforces our commitment to make Vercel the most secure platform for agentic engineering. Through deeper integrations with tools like Claude Code and additional guardrails, we're confident security and privacy will be upheld.

Note: the repo id above is randomized for privacy reasons.
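One way to read the takeaways in this post: an identifier that an agent passes to a deploy API should be traceable to something it actually looked up. The sketch below is a hypothetical guardrail, not Vercel's implementation. `ProvenanceLog`, `record_lookup`, and `check_deploy_payload` are invented names for illustration; the only detail taken from the post is the shape of the `gitSource` payload.

```python
class UnverifiedIdentifierError(Exception):
    """Raised when a payload references an ID the session never looked up."""


class ProvenanceLog:
    """Tracks identifiers that came back from real tool or API responses."""

    def __init__(self):
        self._seen = set()

    def record_lookup(self, kind, value):
        # Call this only with values returned by an actual lookup.
        self._seen.add((kind, str(value)))

    def is_verified(self, kind, value):
        return (kind, str(value)) in self._seen


def check_deploy_payload(payload, log):
    """Reject a deploy payload whose repoId has no recorded lookup."""
    repo_id = payload["gitSource"]["repoId"]
    if not log.is_verified("github_repo_id", repo_id):
        raise UnverifiedIdentifierError(
            f"repoId {repo_id!r} was never returned by a lookup in this session"
        )
    return payload


log = ProvenanceLog()
payload = {"gitSource": {"type": "github", "repoId": "913939401", "ref": "main"}}

try:
    check_deploy_payload(payload, log)
    blocked = False
except UnverifiedIdentifierError:
    blocked = True
print("blocked:", blocked)
```

With an empty log the payload is rejected; once a lookup has been recorded for the same ID, the same payload passes. The check only proves the ID was seen in the session, not that it is the repository the user meant, so it narrows this failure mode rather than eliminating it.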

English

JotaDe Rodriguez reposted

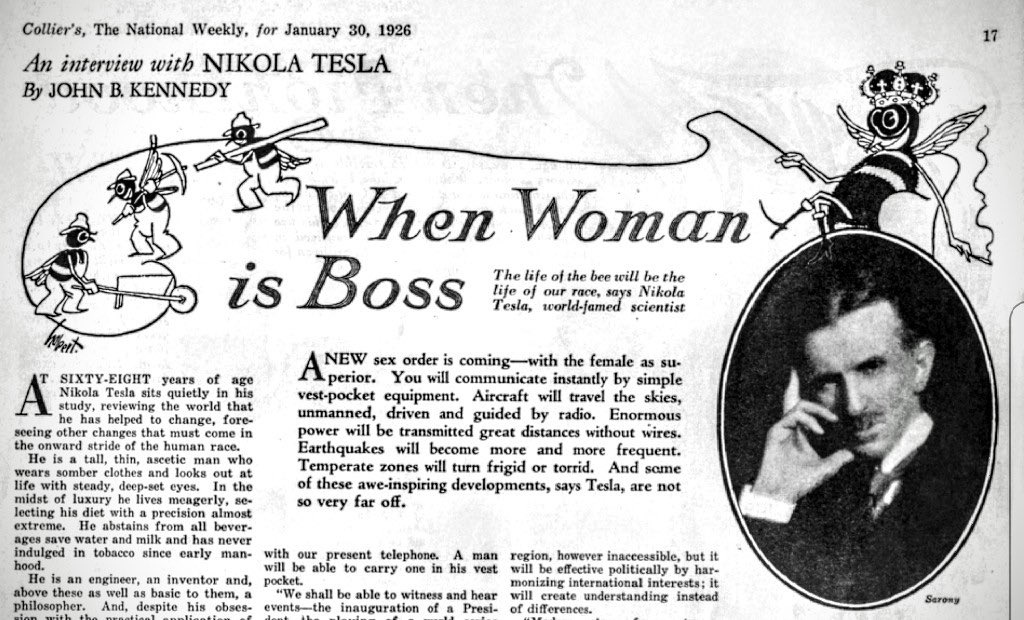

In an interview with John Kennedy of Collier’s Magazine, reclusive inventor Nikola Tesla says that in the future, “wireless will be perfectly-applied to the whole earth” and we will have devices “that instantly allow humans to communicate with one another, and they will fit in our pockets.”

Tesla claims that humans can even have face-to-face meetings on these devices, using wireless magic.

In the same interview, Tesla also predicts that in the future, women will be the dominant sex and the “Queen Bees” of society.

English

JotaDe Rodriguez reposted

@ursacke @anatolideli @codexeditor @ahmetb "assistant turn" and other specific tokens (thinking, context, etc) and a special "End of Sequence" token, so the LLM is actually trained on when a response should end, and is able to output that token. The 'chat' interface just hides it.

English

@ursacke @anatolideli @codexeditor @ahmetb You have been answered already, but to add: when you feed text into an LLM, one of the complexities that is usually abstracted away is the special tokens that get inserted. A multi-turn conversation is actually just a single string of text with "user turn", "user end of turn"

English
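The flattening described in this thread can be sketched in a few lines. This is a minimal illustration, assuming made-up marker strings (`<|user|>`, `<|assistant|>`, `<|end_of_turn|>`, `<|eos|>`); every model family defines its own special tokens and template, so none of these names should be read as a real model's format.

```python
# Hypothetical marker strings; every model family defines its own.
TURN_START = {"user": "<|user|>", "assistant": "<|assistant|>"}
END_OF_TURN = "<|end_of_turn|>"
EOS = "<|eos|>"


def render_conversation(messages, add_generation_prompt=True):
    """Flatten a list of {"role", "content"} dicts into one string."""
    parts = []
    for message in messages:
        parts.append(TURN_START[message["role"]])
        parts.append(message["content"])
        parts.append(END_OF_TURN)
    if add_generation_prompt:
        # Leave an open assistant turn for the model to complete.
        parts.append(TURN_START["assistant"])
    return "".join(parts)


def strip_after_eos(generated_text):
    """Cut raw model output at the end-of-sequence marker, as a chat UI would."""
    return generated_text.split(EOS, 1)[0]


conversation = [
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello!"},
    {"role": "user", "content": "Why do replies stop?"},
]
prompt = render_conversation(conversation)
print(prompt)
print(strip_after_eos("Because I emit an end token.<|eos|>ignored"))
```

The model only ever sees the single string in `prompt`; the chat interface is what splits it back into bubbles and hides the markers.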

@advancedjd @Speclizer_ Things you can do in MGS3 with enemies:

Make them give you items

Shoot their radio so they can't call for backup

Hold them while lying down so they'll stay like that forever

Shoot their arm so they can't use their main weapon

Make them talk

These are just off the top of my head!

English

TLOU 2 had a cut feature called "Hold Up" which allowed you to sneak up behind an enemy and force them to put their hands up, you could then control where they move, tell them to face you or away, then they would eventually try to pull out their weapon. The animation still exists in the game, along with the script.

#TheLastofUsPartII

English

@Changing_Wander @Prazkat Bro, in the movie they say exactly the same thing.

Español

@Prazkat In the book they also say that the ring, although it isn't an object with real intelligence, does have the "personality" of a parasite, and it will attract and manipulate people and situations to pursue its purpose of corruption. That's also why they don't tie it to a rope and drag it along.

Español

Nah, the ring would corrupt the mouse and then whoever carries it.

The novel tells you that the corrupting effect is directly proportional to the ambition of whoever bears it. The hobbits were chosen to carry it because they are the humblest, so the corruption is slower.

Rothmus 🏴@Rothmus

Español

@techbromemes > Can we create huge open worlds?

> Sure

> Can you add in a door?

> oof

English

@RajanKumar67563 @grok @StevenIrby @donvito I assumed they did some heavy testing in the days before release. That's got to eat into their GPU allowance. In any case we will never know for sure.

English

@MaziyarPanahi @HanchungLee Yes and no. It is true that basically everything for LLMs right now is just 'more text in or more text out', but the idea is that these are elements that get called only when actually needed, i.e. as opposed to MCPs, which were appended to every message (thus wasting tokens)

English
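The difference the reply points at can be put in rough numbers. The sketch below is purely illustrative: the word-count "tokenizer", the three definitions, and their sizes are all made up, and it only models the contrast as the reply states it (full definitions sent with every message versus short descriptions sent every time and a full body loaded when used).

```python
def rough_token_count(text):
    # Crude stand-in for a tokenizer: counts whitespace-separated words.
    return len(text.split())


def always_appended_cost(definitions, num_messages):
    """Every full definition rides along with every message."""
    per_message = sum(rough_token_count(d["body"]) for d in definitions)
    return per_message * num_messages


def on_demand_cost(definitions, num_messages, used_names):
    """Only short descriptions ride along; a full body is loaded once per use."""
    per_message = sum(rough_token_count(d["description"]) for d in definitions)
    loaded = sum(
        rough_token_count(d["body"]) for d in definitions if d["name"] in used_names
    )
    return per_message * num_messages + loaded


# Made-up definitions: a 3-word description and a 200-word body each.
definitions = [
    {"name": n, "description": f"Handles task {n}", "body": "step " * 200}
    for n in ("a", "b", "c")
]

always = always_appended_cost(definitions, num_messages=10)
on_demand = on_demand_cost(definitions, num_messages=10, used_names={"b"})
print("always appended:", always)
print("on demand:", on_demand)
```

The gap depends entirely on how many definitions exist and how often each is used; if every definition is needed in every message, the two approaches cost about the same.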

@HanchungLee so it’s just prompts, we are hoping the model that could be anything does it super well but there is no guarantee.

what's the point of making a standard out of this, really

English

i am sorry, am I missing something here? it's just a bunch of prompts in a markdown file, no?!

Alex Albert@alexalbert__

Agent Skills is now an open standard. It's been great to see the traction Skills are already getting in the industry, and this makes it easier for everyone to build and contribute to them 🚀 agentskills.io/home

English

@RajanKumar67563 @StevenIrby @donvito I noticed the models were worse when a new one was approaching, I always thought they were squeezing for compute in the days leading up to it.

English

@supabase I'd advise everyone to be mindful. Personally, 90% of my use case (database tables as context to design my APIs) was covered by dumping the schema into a SQL file and letting Claude browse through it when it needs to.

English

@supabase Used this daily, but the number of tokens constantly consumed by the sheer number of tools seriously cut into my context window and usage with Claude Code.

English