LangChain JS

202 posts

@LangChain_JS

Ship great agents fast with our open source JS frameworks – LangChain, LangGraph, and Deep Agents. Maintained by @LangChain.

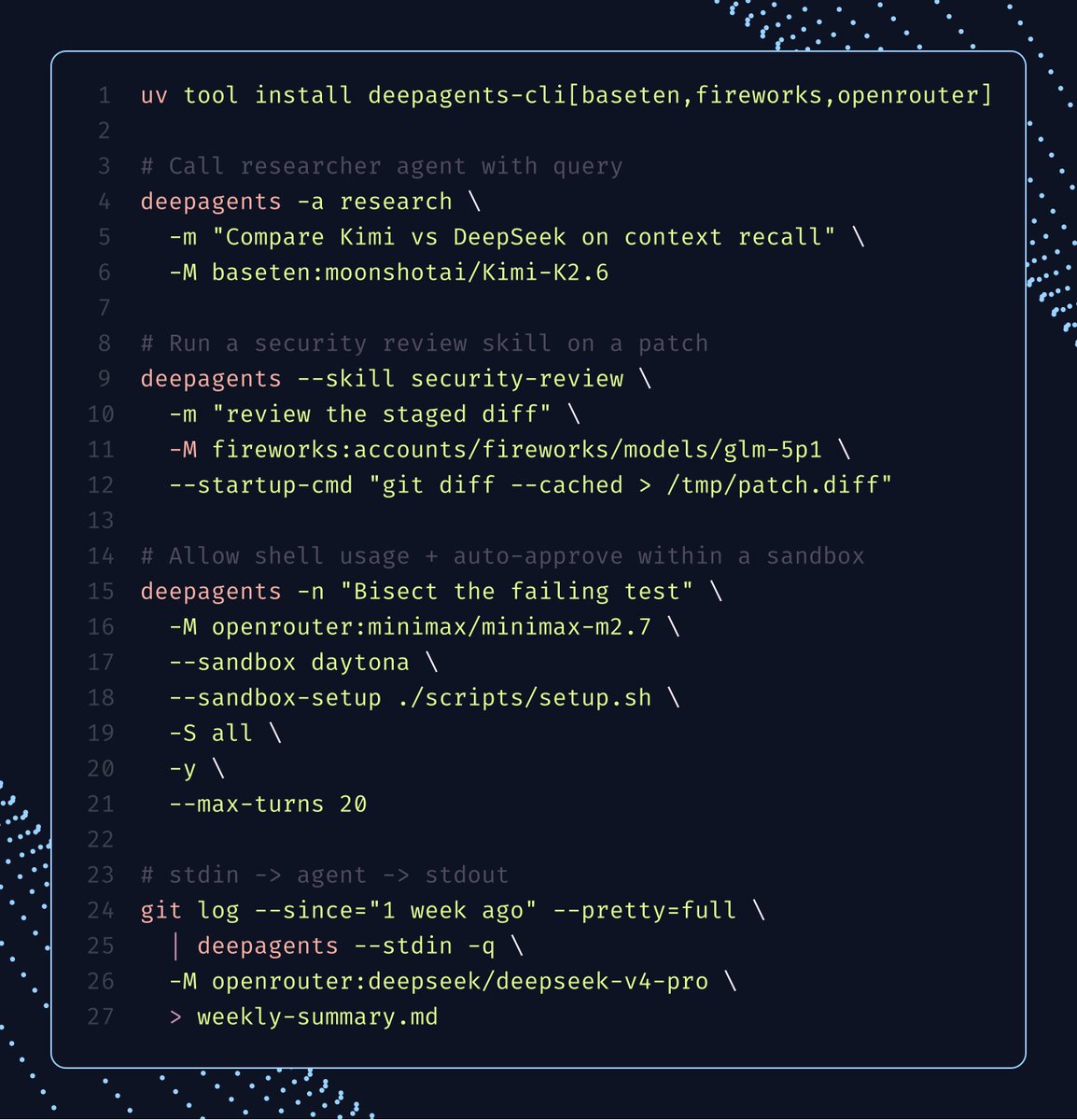

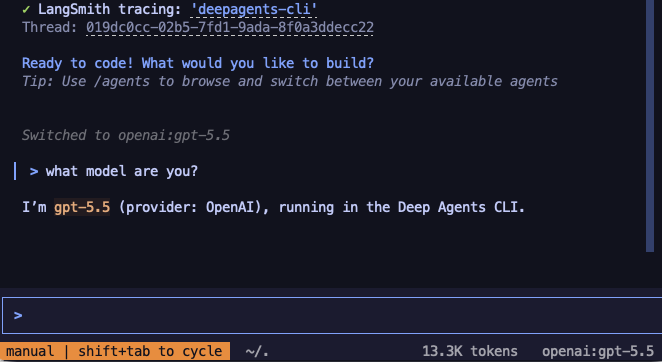

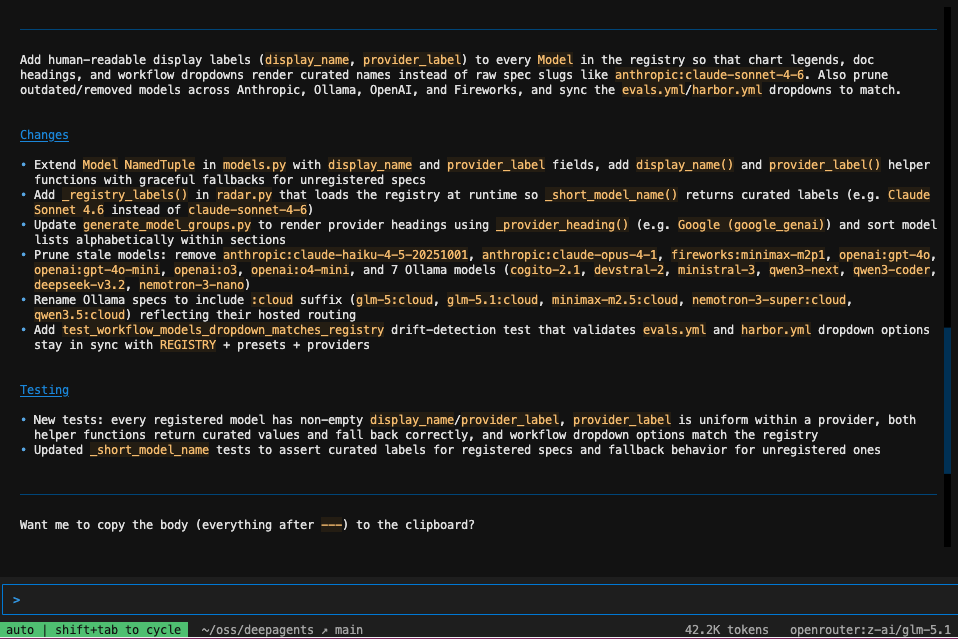

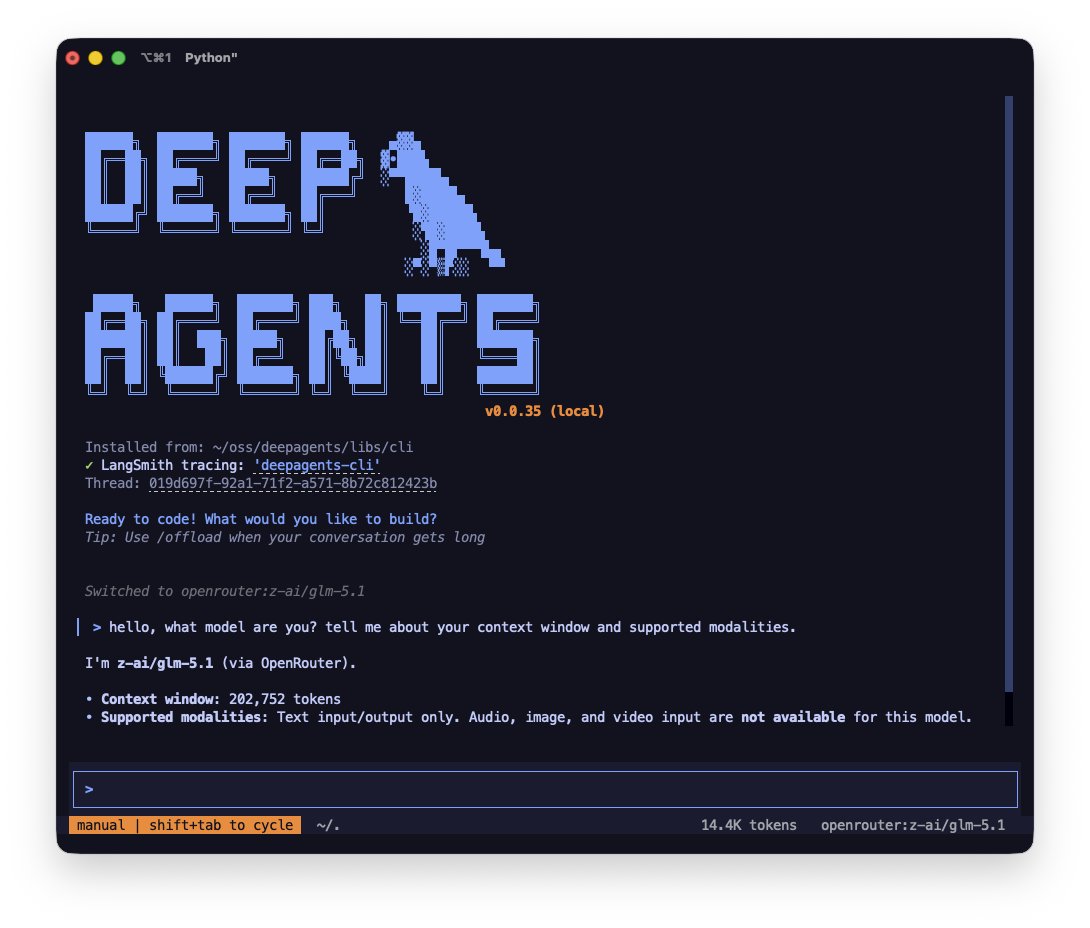

deepagents-cli is quietly becoming the best place to start coding with open weight models. we've been investing heavily in making it a harness that's truly model-agnostic, without compromising performance!

different models perform best with different harnesses -- prompts, middleware, settings. our recent profiles API (below) lets you bundle all of that per model, so Kimi, Qwen, GLM, etc. can drive the agent loop just as well as the closed frontier. more info on profiles: x.com/Vtrivedy10/sta…

other recent wins worth highlighting:

- /agents - swap agent profiles mid-session (coding agent/content writer/custom)
- /model - fuzzy switcher w/ live status; OpenRouter, LiteLLM, Baseten, hosted Ollama all built-in
- headless mode w/ --json + --max-turns for scripting
- --acp to run as an ACP server
- /skill:name skills
- MCP w/ OAuth

full docs and quickstart ⬇️

you've heard that models are highly trained in their harnesses, but... it appears that pi is about 7-10% better than codex with gpt-5.4 on a ProgramBench task. Same exact prompt, same environment. It's a good harness.

we're continuing to see clear examples where a model's harness is a major determinant of overall performance. with the same model, running on the same task, it's easy to observe very different scores depending on (system) prompts, tools (& their descriptions), and middleware (steering hooks).

this is exactly why we built a harness profiles abstraction in Deep Agents: per-provider or per-model overrides for base system prompts, tool names + implementations, etc., so swapping models doesn't mean losing the work that made the last one good! 10–20pt jumps on tau2-bench in our own testing.

currently cooking up built-in profiles for popular open weight models 🧑‍🍳 langchain.com/blog/tuning-de…
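to make the per-provider / per-model override idea concrete, here's a minimal sketch of how layered profiles could resolve. everything in it is an assumption for illustration: `HarnessProfile`, `resolveProfile`, the `"provider:model"` key format, and the example provider, model, and tool names are hypothetical and not the Deep Agents profiles API.

```typescript
// Hypothetical shapes for illustration only; not the Deep Agents types.
interface HarnessProfile {
  systemPrompt?: string;
  // canonical tool name -> name exposed to the model
  toolNames?: Record<string, string>;
}

interface ResolvedProfile {
  systemPrompt: string;
  toolNames: Record<string, string>;
}

// Defaults used when no override applies.
const baseProfile: ResolvedProfile = {
  systemPrompt: "You are a coding agent.",
  toolNames: { read_file: "read_file", edit_file: "edit_file" },
};

// Assumed key convention: "provider" for provider-wide overrides,
// "provider:model" for model-specific ones.
const overrides: Record<string, HarnessProfile> = {
  "example-provider": { systemPrompt: "You are a terse coding agent." },
  "example-provider:example-model": { toolNames: { edit_file: "apply_patch" } },
};

// Apply provider overrides first, then model overrides, so the most
// specific layer wins while untouched fields fall through from the base.
function resolveProfile(provider: string, model: string): ResolvedProfile {
  const layers: Array<HarnessProfile | undefined> = [
    overrides[provider],
    overrides[`${provider}:${model}`],
  ];
  let resolved: ResolvedProfile = {
    systemPrompt: baseProfile.systemPrompt,
    toolNames: { ...baseProfile.toolNames },
  };
  for (const layer of layers) {
    if (!layer) continue;
    resolved = {
      systemPrompt: layer.systemPrompt ?? resolved.systemPrompt,
      toolNames: { ...resolved.toolNames, ...layer.toolNames },
    };
  }
  return resolved;
}
```

in this sketch, switching to a model with no overrides just returns the base profile, so the tuning done for one model stays attached to that model's key.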

🚀 deepagents 0.5 release

👉 Async subagents - kick off background tasks on any Agent Protocol backed server while you continue to interact with the main agent. Start multiple background tasks in parallel, keep the conversation going, and collect results as they come in. Tasks are stateful and maintain their own thread so you can send follow-up instructions mid-task without losing context or restarting from zero. Any Agent Protocol compliant server is a valid target. This means you have the flexibility of using LangSmith deployments or hosting async subagents using your own custom infra.

👉 Expanded multimodal support - your agent can now see images, listen to audio, watch video, and read PDFs. The read_file tool returns native content blocks, so your agent can reason across all these formats out of the box, unlocking a whole new set of workflows for your agents.

👉 Improved prompt caching - better token efficiency and lower costs for Claude models.

Try it out in deepagents v0.5, deepagentsjs v1.9.0.

Learn more in the Deep Agents v0.5 blog: blog.langchain.com/deep-agents-v0…
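The async subagent flow described here (start tasks in parallel, send follow-ups on a task's own thread, collect results as they finish) can be pictured with a small in-memory model. This is only a sketch under stated assumptions: `TaskBoard` and all of its methods are hypothetical, and nothing here calls deepagents or an Agent Protocol server.

```typescript
// Hypothetical in-memory model of background tasks. It does not call
// deepagents or an Agent Protocol server; every name is illustrative.
type TaskStatus = "running" | "done";

interface BackgroundTask {
  id: string;
  thread: string[]; // instructions sent to this task, kept so follow-ups retain context
  status: TaskStatus;
  result?: string;
}

class TaskBoard {
  private tasks = new Map<string, BackgroundTask>();
  private collected = new Set<string>();
  private counter = 0;

  // Start a background task and return its id immediately.
  start(instruction: string): string {
    this.counter += 1;
    const id = `task-${this.counter}`;
    this.tasks.set(id, { id, thread: [instruction], status: "running" });
    return id;
  }

  // Append a follow-up to a running task's own thread.
  followUp(id: string, instruction: string): void {
    const task = this.tasks.get(id);
    if (!task || task.status !== "running") {
      throw new Error(`no running task with id ${id}`);
    }
    task.thread.push(instruction);
  }

  // Mark a task as finished (in a real system the remote server would do this).
  complete(id: string, result: string): void {
    const task = this.tasks.get(id);
    if (!task) throw new Error(`unknown task ${id}`);
    task.status = "done";
    task.result = result;
  }

  // Return tasks that finished since the last call, each one only once.
  collectFinished(): BackgroundTask[] {
    const ready = Array.from(this.tasks.values()).filter(
      (t) => t.status === "done" && !this.collected.has(t.id),
    );
    ready.forEach((t) => this.collected.add(t.id));
    return ready;
  }
}
```

In this model the main conversation would call `collectFinished()` between turns, which is one simple way to surface results as they arrive without blocking on any single task.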

Introducing GLM-5.1: The Next Level of Open Source

- Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo.
- Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations.

Blog: z.ai/blog/glm-5.1
Weights: huggingface.co/zai-org/GLM-5.1
API: docs.z.ai/guides/llm/glm…
Coding Plan: z.ai/subscribe

Coming to chat.z.ai in the next few days.

we're building out a community middleware page for @LangChain, and we need your help growing it. agent middleware is one of the most powerful building blocks we've shipped. what are you building with it? docs.langchain.com/oss/python/int…