Lester Kim reposted

Lester Kim

326 posts

@LesterCKim

AI Engineer | Helping B2B teams ship fast w/ AI | ex Head of Eng @balconytech | ex-SWE @MagicEden @krakenfx | AB Physics @Harvard

Astoria, NY | Joined September 2013

330 Following | 178 Followers

@claudeai When will this be available for members of the Team plan?

In our era, where AI agents can build for us, there is ONE AI skill that is the highest leverage:

Human alignment

How effectively can you understand the system?

How do you ensure and verify it does what the human wants?

How do you scale human judgment and taste in a way that methodically measures the success of the system?

This is the core, most important skill of AI engineers, designers, and product managers.

How do you attract the best engineering talent when you're on a tight budget and growing fast?

Hire at least one strong senior engineer and more high-potential junior engineers.

The senior will cost you, but she can be incredibly high-leverage if she can spot and attract great talent and create an environment that allows the juniors to onboard quickly and build on strong foundations.

To attract the outstanding senior engineer, give her the opportunity to really grow, to take on a lot of ownership in how she works, and to contribute to company strategy.

The very best senior engineers are technical business partners, so if you're really tight on salary budget, be extra generous with equity.

@JiujitsuOtter It’s misleading and idk why he’s doing that but belt colors are ultimately not that important. I’ve seen white belts with grappling experience give brown belts a tough time.

If you have felt your engineering velocity fall over time, almost without fail, the primary culprit is:

Tests

That's more important than clean implementation code or clean architecture diagrams.

If you have brittle tests, little to no branch coverage, or hard-to-read tests, you're going to pay for it with reduced velocity that compounds like debt (hence "technical debt").

Instead of hiring more engineers who will add more tech debt, up-skill the existing engineers on better testing. That may mean bringing on someone to shift the culture of the engineering team.

The first stage will be how to stop adding tech debt. Then, it will be about paying it off.

AI is insufficient for this because you will still need a skilled engineer's judgment to evaluate the SKILL.md files.

If tests and evals are holding your engineering team back from getting the most out of AI coding agents, please feel free to reach out.

When I was at @yext, many of us read Zero To One by @peterthiel. There were many gems from that book. One that stood out to me was an interview question Thiel used to ask:

What important truth do very few people agree with you on?

This is surprisingly difficult to answer because you need to have deep domain expertise to ground your truth and also be intellectually courageous and confident to believe and act on that truth.

I have come up with my own answers to that question after many years. Here are a few:

1. There is a tremendous arbitrage opportunity in the junior-level labor market. Most tech companies should be primarily hiring highly intelligent, ambitious, proactive fresh grads. @joinHandshake has the right idea, although the UX can be improved.

2. The overwhelming majority of engineers, PMs, and designers will fill completely different roles in their next jobs. Those who remain will drastically increase their productivity as a result of this.

3. Almost everyone in big tech (e.g. @Google) needs to leave for an early-stage startup right now. The upside in terms of learning and impact is so much higher. This was true before AI, but it's even more true now. The golden handcuffs are an illusion. You need to be investing in your skills more than anything else right now if you want to maximize your lifetime impact and unlock your full career potential.

What are your answers?

@zuess05 If you go deep enough, you will find limitations in LLMs, so deep specialists will still be around. This is true in engineering, real estate, government, and more.

Serious question.

For decades, the standard advice was to "pick a niche" and become a highly paid specialist.

Now Claude has the combined knowledge of every specialist on earth, instantly available for $20 a month.

What exactly are we supposed to tell kids to major in when every technical skill is just a prompt away?

Lester Kim reposted

AI-native software engineering teams operate very differently than traditional teams. The obvious difference is that AI-native teams use coding agents to build products much faster, but this leads to many other changes in how we operate. For example, some great engineers now play broader roles than just writing code. They are partly product managers, designers, sometimes marketers. Further, small teams who work in the same office, where they can communicate face-to-face, can move incredibly quickly.

Because we can now build fast, a greater fraction of time must be spent deciding what to build. To deal with this project-management bottleneck, some teams are pushing engineer:product manager (PM) ratios downward from, say, 8:1 to as low as 1:1. But we can do even better: If we have one PM who decides what to build and one engineer who builds it, the communication between them becomes a bottleneck. This is why the fastest-moving teams I see tend to have engineers who know how to do some product work (and, optionally, some PMs who know how to do some engineering work). When an engineer understands users and can make decisions on what to build and build it directly, they can execute incredibly quickly.

I’ve seen engineers successfully expand their roles to include making product decisions, and PMs expand their roles to building software. The tech industry has more engineers than PMs, but both are promising paths. If you are an engineer, you’ll find it useful to learn some product management skills, and if you’re a PM, please learn to build!

Looking beyond the product-management bottleneck, I also see bottlenecks in design, marketing, legal compliance, and much more. When we speed up coding 10x or 100x, everything else becomes slow in comparison. For example, some of my teams have built great features so quickly that the marketing organization was left scrambling to figure out how to communicate them to users — a marketing bottleneck. Or when a team can build software in a day that the legal department needs a week to review, that’s a legal compliance bottleneck. In this way, agentic coding isn’t just changing the workflow of software engineering, it’s also changing all the teams around it.

When smaller, AI-enabled teams can get more done, generalists excel. Traditional companies need to pull together people from many specialties (engineering, product management, design, marketing, legal, etc.) to execute projects and create value. This has resulted in large teams of specialists who work together. But if a team of 2 people is to get work done that requires 5 different specialties, then some of those individuals must play roles outside a single specialty. In some small teams, individuals do have deep specializations. For example, one might be a great engineer and another a great PM. But they also understand the other key functions needed to move a project forward, and can jump into thinking through other kinds of problems as needed. Of course, proficiency with AI tools is a big help, since it helps us to think through problems that involve different roles.

Even in a two-person team, to move fast, communication bottlenecks also must be minimized. This is why I value teams that work in the same location. Remote teams can perform well too, but the highest speed is achieved by having everyone in the room, able to communicate instantaneously to solve problems.

This post focuses on AI-native teams with around 2-10 persons, but not everything can be done by a small team. I'll address the coordination of larger teams in the future.

I realize these shifts to job roles are tough to navigate for many people. At the same time, I am encouraged that individuals and small teams who are willing to learn the relevant skills are now able to get far more done than was possible before. This is the golden age of learning and building!

[Original text: deeplearning.ai/the-batch/issu… ]

The programming language matters.

@unclebobmartin and @JMMind asked AI to create an app in four different languages, and one was clearly the winner in terms of speed and correctness.

Same prompt. Same harnesses. Same executable specifications (i.e. features). Same model. Same tool. Same computer.

You can check it out here: cleancoders.com/episode/agenti…

For most web apps, I recommend going with TypeScript, not because it's pretty (it isn't), but because LLMs are much better at producing TypeScript than most other languages (e.g. Rust) due to the massive amounts of training data.

@pavelhegler You should charge when you can. Not necessarily either or.

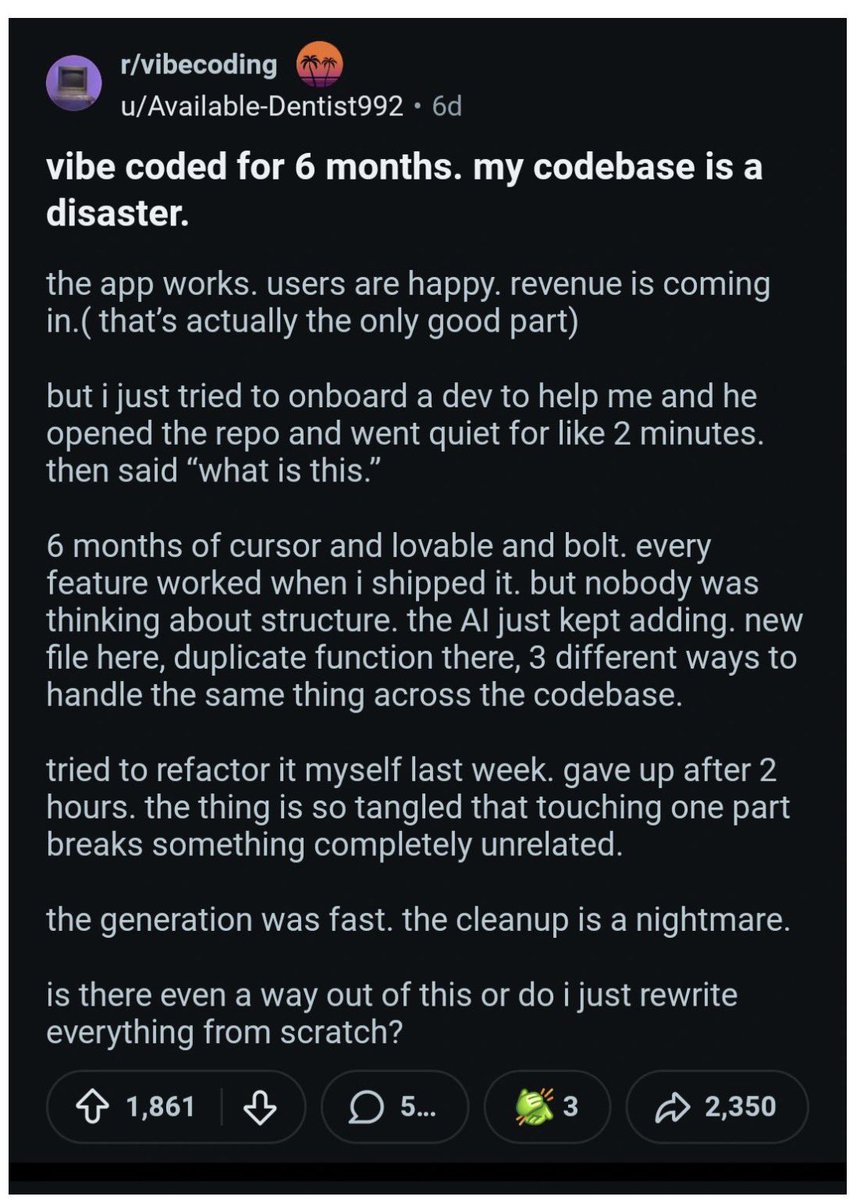

Vibe-coding personal tools or non-commercial, fun projects is one thing, but building production-grade, commercial software for businesses is a totally different matter. To do the latter, your engineers should be:

- Writing comprehensive, readable tests as specifications

- Architecting appropriate service boundaries and interfaces

- For AI applications and workflows, setting up and updating evaluation metrics in CI and also monitoring production data samples
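As a rough illustration of the last bullet, here is a minimal sketch of an evaluation gate that a CI job could run. Everything in it is an assumption made for illustration: the labeled cases, the `classify` stand-in for the AI component, and the 0.9 threshold are hypothetical and not taken from any real project.

```python
from typing import Callable, Sequence

# Hypothetical labeled examples; a real suite would load versioned data files.
EVAL_CASES: Sequence[tuple[str, str]] = (
    ("refund my order", "billing"),
    ("app crashes on login", "bug"),
    ("how do I export data", "how_to"),
)


def classify(text: str) -> str:
    """Stand-in for the AI component under evaluation (not a real model)."""
    lowered = text.lower()
    if "refund" in lowered:
        return "billing"
    if "crash" in lowered:
        return "bug"
    return "how_to"


def accuracy(predict: Callable[[str], str],
             cases: Sequence[tuple[str, str]]) -> float:
    """Fraction of cases where the prediction matches the expected label."""
    if not cases:
        raise ValueError("evaluation set is empty")
    correct = sum(1 for text, expected in cases if predict(text) == expected)
    return correct / len(cases)


def ci_exit_code(score: float, threshold: float = 0.9) -> int:
    """Return 0 (pass) when the score meets the threshold, else 1 (fail)."""
    return 0 if score >= threshold else 1
```

A CI step could call `ci_exit_code(accuracy(classify, EVAL_CASES))` and exit with the result, so a drop in the metric fails the build. Monitoring production samples would reuse `accuracy` on freshly labeled data.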

AI makes software engineering even more important than before. There's a difference between hacking on one-off, throwaway prototypes and building scalable, malleable software systems to serve real customers in a competitive domain.

@SirReggieNoble @ComedianMark @ted_ryce @AJA_Cortes With just a bit of BJJ, I bet you give most people a tough time, certainly enough to not want to mess with you again or even risk starting a fight.

@ComedianMark @ted_ryce @AJA_Cortes I wrestled for 12 years and I do BJJ (not poorly) and I can confidently say you are wrong. A wrestler with no BJJ experience is getting beat badly. Wrestling teaches a bunch of habits that are a death sentence in an actual fight that one has to unlearn.

The C-suite at every company is thinking about how to get all employees to use AI more. Many are tracking token burn to see who is AI-engaged, and those who have low token counts are on the chopping block.

So what do you do with stragglers? Terminate them and undermine trust within your company? Keep them and get left behind?

Ideally, you can keep your people, and they dive into AI by up-skilling. Eventually, everyone will use AI constantly, so I would start by positively inspiring them to see how AI will benefit them.

However, you need to have tough conversations with folks about the standards and expectations at the company, which can only be met by combining their skills with AI.

For example, if your software engineers are not using AI coding agents like Claude Code, Codex, or Cursor to generate 98+% of their code, they are underperforming and need to address that NOW.

Not only are laggards underperforming, but they create an environment where others think that's acceptable.

At the very least, it's important that every NEW hire is extremely AI-forward and creates an AI-native culture. That is an effective way (especially in leadership roles) to create change.

If you want to kick off a complex task for Claude Code, Cowork, or Codex, don't treat it like your typical throw-away prompt. Instead,

ITERATE ON IT.

That first prompt really matters, so make sure that you reference all relevant files.

Create a project that improves a prompt to make it superhuman and throw your initial prompt in there.

ALWAYS read the revised prompt. You will want to make modifications and likely even iterate on it as you learn more and come across more reference material.

Even then, for long tasks, the final output won't be perfect, but you can at least learn so that you can continue refining your prompts based on the performance of the agents.

Adopting AI does not mean that everything will improve. AI AMPLIFIES. The people with the most leverage are:

Those who know how to use AI effectively PLUS are exceptional in their domain.

The engineer who never writes tests will create a ton of legacy code that will burden the team and AI agents.

The engineer who ensures the AI agent writes tests for all business logic is able to scale herself and compound the productivity of the company.

Takeaway: Continue to upskill in your domain as well as learn deeply about AI to supercharge yourself.

@ivars_auzins @unclebobmartin @jtregunna A key part of improving will be defining what “better” entails in clear, measurable terms.

@unclebobmartin @jtregunna Let's not forget we're still in the early years of AI agents - they'll become better.

He's not wrong, but it's also not as clear cut.

Assemblers are deterministic automations.

Compilers are deterministic automations.

LLMs are non-deterministic automations.

So sure, an LLM can write code faster than you, but also, you must make sure it's correct. You can use deterministic tooling for things like syntax, some semantics, but it's tricky to automate review, so don't.

Uncle Bob Martin @unclebobmartin

Assemblers were faster at writing binary than humans were. Compilers were faster at writing assembly than humans were. AIs are faster at writing compiled languages than humans are. Deal with it. There's still plenty left for you to do.

For teams working on legacy code that want to get the benefits of AI coding agents like Claude Code and Codex, there is one thing that you MUST do:

Automated testing

That will unlock your ability to pay off technical debt in the long term (i.e. via migrations) and have AI agents work safely on your codebase. The boost in productivity will not match that of greenfield projects, but you will still get some boost, even 10%, in engineering productivity.

For post-product-market-fit teams, the key is verification. Tests allow you to scale the verification as AI agents allow you to produce more code.

AI is compressing implementation. So product teams are now bottlenecked at the edges.

Pre-product-market-fit: the bottleneck is figuring out what to build.

Post-product-market-fit: the bottleneck is proving the software actually works.

That means the highest-leverage engineers are no longer just coders.

They are people who can:

1. define behavior clearly

2. encode it in tests

3. build harnesses around AI-generated code
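The three steps above can be sketched as follows. This is only an illustration under stated assumptions: the slug behavior, the `Case` type, `run_spec`, and both candidate functions are hypothetical names invented here, with the candidates standing in for code an agent might generate.

```python
import re
from typing import Callable, NamedTuple


class Case(NamedTuple):
    name: str
    given: str
    expected: str


# Steps 1 and 2: behavior stated clearly and encoded as executable cases.
SLUG_SPEC = [
    Case("lowercases", "Hello", "hello"),
    Case("spaces become hyphens", "Hello World", "hello-world"),
    Case("punctuation is dropped", "Ship it, now!", "ship-it-now"),
    Case("no leading or trailing hyphen", "  padded  ", "padded"),
]


def run_spec(candidate: Callable[[str], str], spec: list[Case]) -> list[str]:
    """Step 3: a harness that runs any candidate against the spec.

    Returns a list of failure messages; an empty list means the spec passed.
    """
    failures = []
    for case in spec:
        try:
            actual = candidate(case.given)
        except Exception as exc:  # a crash counts as a failed case
            failures.append(f"{case.name}: raised {type(exc).__name__}")
            continue
        if actual != case.expected:
            failures.append(
                f"{case.name}: expected {case.expected!r}, got {actual!r}"
            )
    return failures


def slugify_good(text: str) -> str:
    """Hypothetical candidate that satisfies the spec."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def slugify_naive(text: str) -> str:
    """Hypothetical candidate that only handles the easy cases."""
    return text.lower().replace(" ", "-")
```

Running `run_spec(slugify_good, SLUG_SPEC)` returns an empty list, while `run_spec(slugify_naive, SLUG_SPEC)` reports the punctuation and padding cases. The harness does not care who wrote the candidate, which is what lets verification scale with generated code.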

A 4-person team like this will outship a traditional 12-person product org relying on product → design → engineering → QA handoffs.

The leverage is now at the edges: specification at the start, validation at the end.

Which of your handoffs still add value, and which ones are just legacy org design?
