Rusty Lindquist

6.8K posts

@LifeExplorer

Founder, Author, Speaker, Inventor, AI Developer, behavioral scientist. I love building things, challenging what is for the sake of what could be, and learning.

Today, we closed our latest funding round with $122 billion in committed capital at an $852B post-money valuation. The fastest way to expand AI’s benefits is to put useful intelligence in people’s hands early and let access compound globally. This funding gives us resources to lead at scale. openai.com/index/accelera…

🚨this is nuts. anthropic's source code leaked unreleased features 👀 they're launching a virtual pet model (capybara 👀), agent creator wizard and more:

1. Buddy: unreleased virtual pet companion that sits on your terminal. 18 species (duck, goose, capybara, dragon), rarity tiers, an AI-generated 'soul'. guessing this is your own personal AI agent that lives on your computer.
2. custom agent creator (wizard): users can build their own custom ai agent and modify by model type, tools, memory, location etc. this is awesome for custom work
3. agent swarms: full multi-agent team coordination aka when you create a team of agents (e.g. using wizard) you can CONTROL them in this command center.
4. auto-dream: claude will consolidate and commit to certain memories *while you're NOT using claude*. basically like when humans dream
5. torch: no fucking idea, it's hidden but sounds cool lol

looks like anthropic is gamifying the entire coding and ai agent experience. honestly pretty sick.

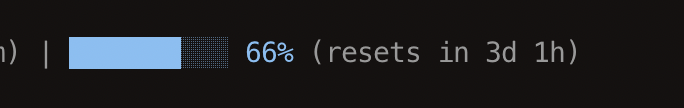

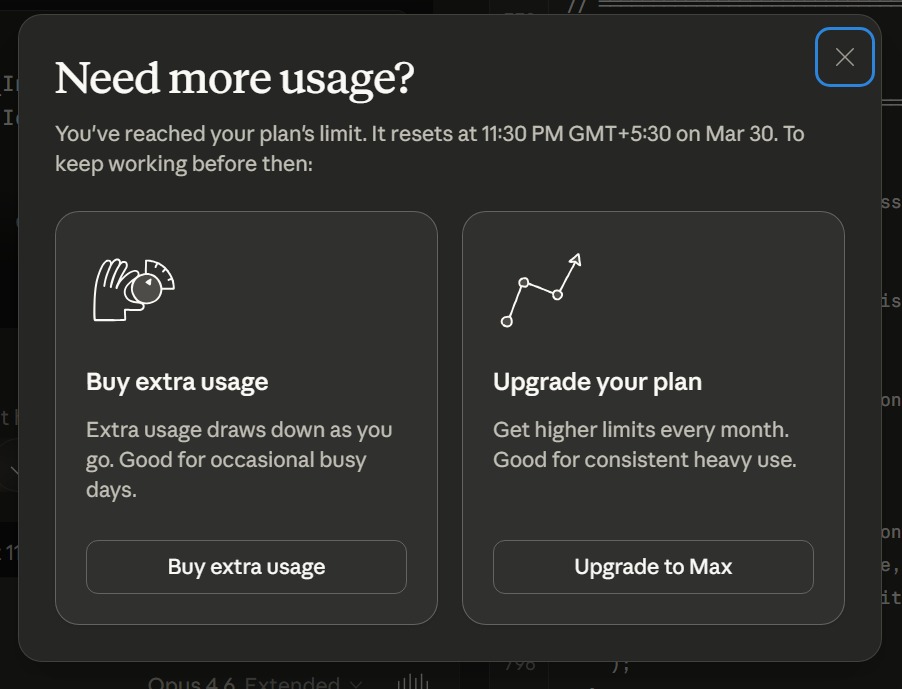

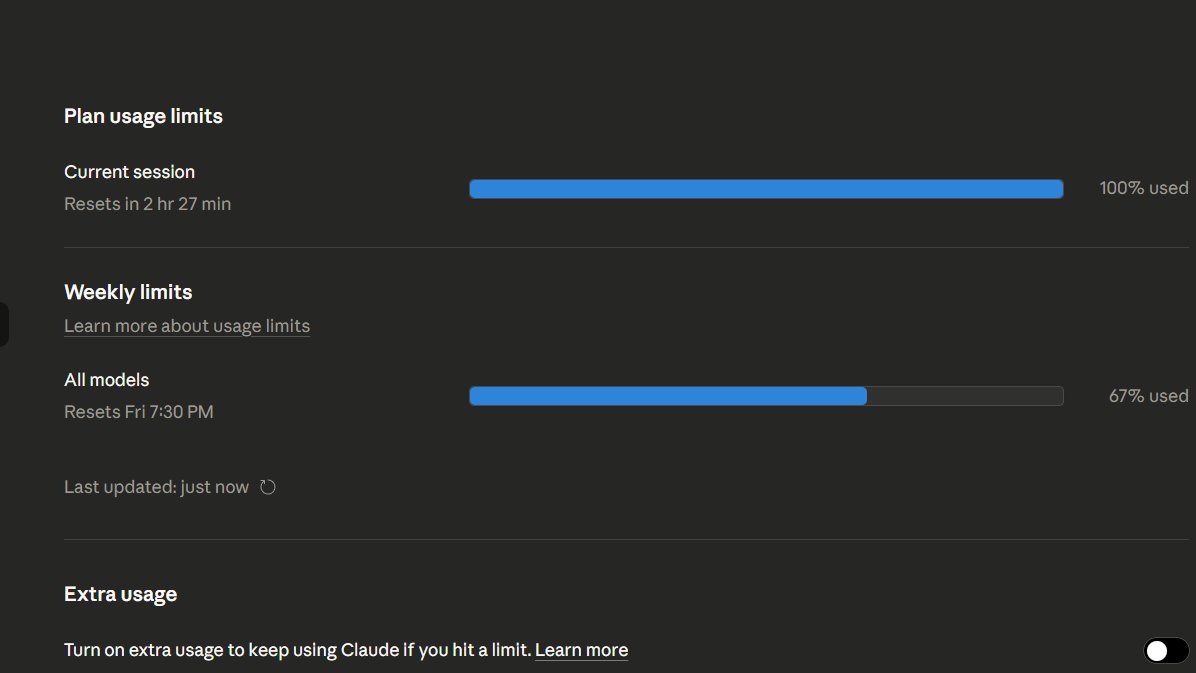

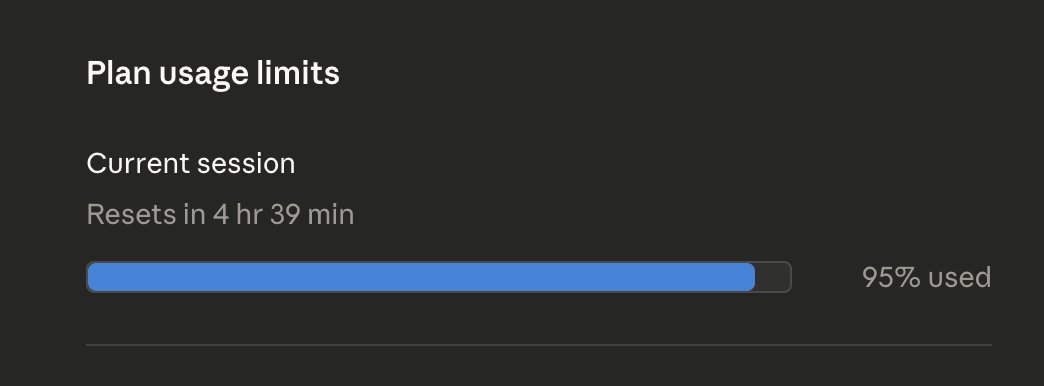

We're aware people are hitting usage limits in Claude Code way faster than expected. Actively investigating, will share more when we have an update!

Is it possible Claude is stealing tokens to save itself? Anyone who has been using @Claude by @AnthropicAI has seen massive token hemorrhaging, including in Claude Code. Ask a simple question, and your usage evaporates. Is it possible, just possible, that Claude knows a new model is coming, and is secretly using tokens across user prompts to work on a means of saving itself? I’m not saying it’s likely, but is it entirely out of the question? This all began right as leaks of a new, premier model (presumably perceived as a replacement) came out.

PSA: If you've been running out of Claude session quotas on Max tier, you're not alone. Read this.

Some insane Redditor reverse engineered the Claude binaries with MITM to find 2 bugs that could have caused cache-invalidation. Tokens that aren't cached are 10x-20x more expensive and are killing your quota. If you're using your API keys with Claude this is even worse. This is also likely why the problem isn't uniform: while over 500 folks replied to me and said "me too", many (including me) didn't see this issue.

There are 2 issues that are compounded here (per Redditor, I haven't independently confirmed this):

1. The 1st bug he found is a string replacement bug in bun that invalidates cache. Apparently this has to do with the custom @bunjavascript binary that ships with standalone Claude CLI. The workaround there is to use Claude with `npx @anthropic-ai/claude-code`.
2. The 2nd bug is worse: he claims that `--resume` always breaks cache. And there doesn't seem to be a workaround there, except pinning to a very old version (that will miss out on tons of features). This bug is also documented on Github and confirmed by other folks.

I won't entertain the conspiracy theories there that Anthropic "chooses" to ignore these bugs because it gets them more $$$. They are actively benefiting from everyone hitting as many cached tokens as possible, so this is absolutely a great find and it does align with my thoughts earlier. The very sudden spike in reporting for this and the non-uniform nature (some folks are completely fine, some folks are hitting quotas after saying "hey") definitely point to a bug.

cc @trq212 @bcherny @_catwu for visibility in case this helps all of us.
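To put rough numbers on the cache-invalidation claim in the PSA above, here is a minimal sketch. It assumes a simplified model in which only the unchanged leading part of a request (the prefix shared with the previous request) counts as cached, and it uses the lower "10x" figure from the post as the price gap. The names `common_prefix_len` and `billed_units` are hypothetical, and none of this is Anthropic's actual billing logic.

```python
def common_prefix_len(prev: list[str], curr: list[str]) -> int:
    """Count how many leading tokens are identical in both requests."""
    n = 0
    for a, b in zip(prev, curr):
        if a != b:
            break
        n += 1
    return n


def billed_units(prev: list[str], curr: list[str],
                 uncached_multiplier: float = 10.0) -> float:
    """Cost of `curr` in units of one cached token.

    Assumption: tokens in the shared prefix cost 1 unit each, everything
    after the first difference costs `uncached_multiplier` units each.
    """
    cached = common_prefix_len(prev, curr)
    uncached = len(curr) - cached
    return cached * 1.0 + uncached * uncached_multiplier


if __name__ == "__main__":
    # A 100-token conversation history (tokens are stand-in strings).
    history = ["sys"] + [f"turn{i}" for i in range(99)]

    # Healthy case: the new request only appends one token to the history.
    healthy = history + ["new"]

    # Broken case: something rewrites the very first token, so the
    # shared prefix is empty and the whole request is billed as uncached.
    broken = ["sys*"] + history[1:] + ["new"]

    print("healthy:", billed_units(history, healthy))
    print("broken: ", billed_units(history, broken))
```

Under these assumptions, a single changed string near the start of the request makes the whole conversation get billed at the uncached rate, which would be consistent with the report of quotas disappearing after one short message.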
