Lin Chen

@Lin_Chen_98

PhD at USTC | Large multimodal models | Research intern at Shanghai AI Lab

Welcome to the party of Qwen2.5 foundation models! This time, we have the biggest release in the history of Qwen. In brief, we have:

Blog: qwenlm.github.io/blog/qwen2.5/
Blog (LLM): qwenlm.github.io/blog/qwen2.5-l…
Blog (Coder): qwenlm.github.io/blog/qwen2.5-c…
Blog (Math): qwenlm.github.io/blog/qwen2.5-m…
HF Collection: huggingface.co/collections/Qw…
ModelScope: modelscope.cn/organization/q…
HF Demo: huggingface.co/spaces/Qwen/Qw…

* Qwen2.5: 0.5B, 1.5B, 3B, 7B, 14B, 32B, and 72B
* Qwen2.5-Coder: 1.5B and 7B, with 32B on the way
* Qwen2.5-Math: 1.5B, 7B, and 72B

All our open-source models, except for the 3B and 72B variants, are licensed under Apache 2.0. You can find the license files in the respective Hugging Face repositories. Furthermore, we have also open-sourced **Qwen2-VL-72B**, which features performance enhancements compared to last month's release.

As usual, we open-source not only the bf16 checkpoints but also quantized model checkpoints, e.g., GPTQ, AWQ, and GGUF, so this time we have a total of over 100 model variants!

Notably, our flagship open-source LLM, Qwen2.5-72B-Instruct, achieves competitive performance against proprietary models and outcompetes most open-source models in a number of benchmark evaluations!

We heard your requests for 14B and 32B models, so we are bringing them to you. These two models even demonstrate competitive or superior performance against their predecessor, Qwen2-72B-Instruct!

We care about SLMs as well! The compact 3B model has grasped a wide range of knowledge and is now able to achieve 68 on MMLU, beating Qwen1.5-14B!

Besides the general language models, we still focus on upgrading our expert models. Do you still remember CodeQwen1.5 and find yourself waiting for CodeQwen2? This time we have new models called Qwen2.5-Coder, with two variants of 1.5B and 7B parameters. Both demonstrate very competitive performance against much larger code LLMs and general LLMs!

Last month we released our first math model, Qwen2-Math, and this time we have built Qwen2.5-Math on the base language models of Qwen2.5 and continued our research in reasoning, including CoT and Tool Integrated Reasoning. What's more, this model now supports both English and Chinese! Qwen2.5-Math is much better than Qwen2-Math, and it might be your best choice of math LLM!

Lastly, if you are satisfied with our Qwen2-VL-72B but find it hard to use, you have no worries now! It is OPEN-SOURCED!

Prepare to start a journey of innovation with our lineup of models! We hope you enjoy them!

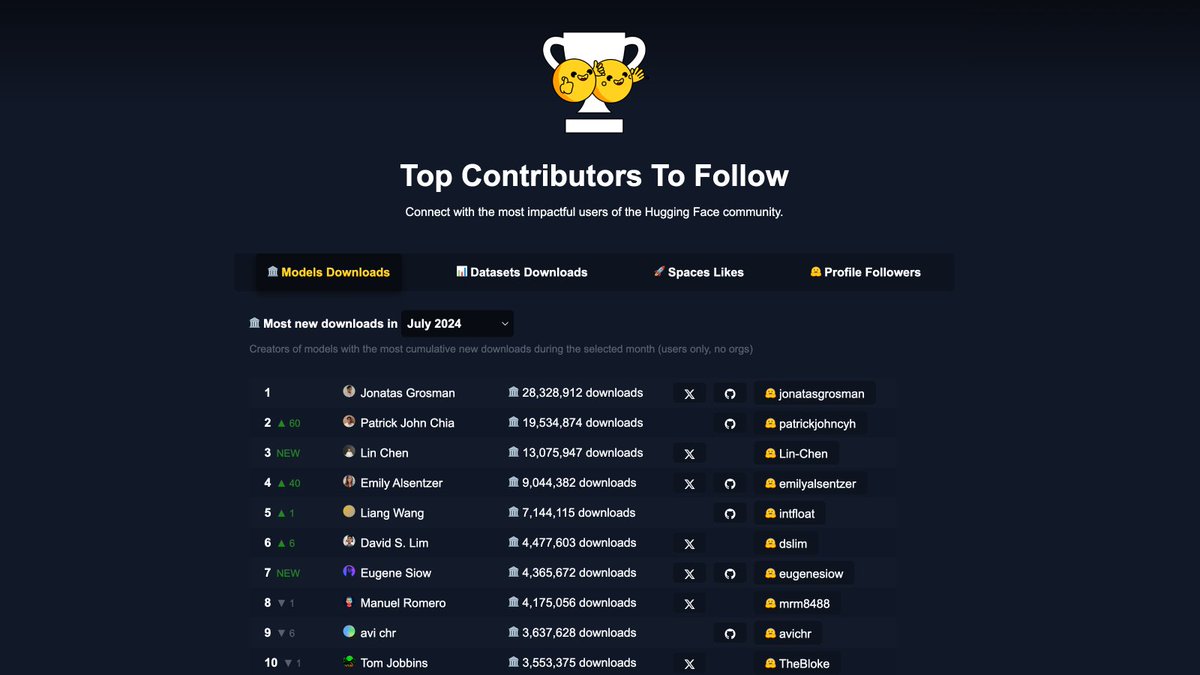

🤗 Here are the top 100 of @HuggingFace’s most impactful users of July 2024 (models, datasets, spaces, followers):

🏆 Top Contributors To Follow: huggingface.co/spaces/mvaloat…

🏛️ Top 10 Model Downloads: 👏 @jonatasgrosman, #PatrickJohnChia, @Lin_Chen_98, @Emily_Alsentzer, #LiangWang, @limsanity23, @eugene_siow, @mrm8488, #avichr, @TheBlokeAI

📊 Top 10 Dataset Downloads: 👏 @haileysch__, @nathanhabib1011, @lukaemon, #HaonanLi, @algo_diver, #YebHavinga, @princeton_nlp, @jonbtow, @moonares, #DanHendrycks

🚀 Top 10 Space Likes: 👏 @fffiloni, @flngr, @multimodalart, @_akhaliq, @yvrjsharma, @angrypenguinpng, @hysts12321, #NishithJain, @xenovacom, @radamar

🤗 Top 10 Profile Followers: 👏 @TheBlokeAI, @mervenoyann, @fffiloni, @_akhaliq, #LvminZhang, @teknium, @xenovacom, #Undi, @erhartford, #WizardLM

ShareGPT4Video: Improving Video Understanding and Generation with Better Captions. We present the ShareGPT4Video series, aiming to facilitate the video understanding of large video-language models (LVLMs) and the video generation of text-to-video models (T2VMs)

📣📣📣 We are excited to announce the release of Open-Sora Plan v1.1.0. 🙌 Thanks to ShareGPT4Video's capability to annotate long videos, we can generate higher-quality and longer videos. 🔥🔥🔥 We continue to open-source all data, code, and models! github.com/PKU-YuanGroup/…