Amart (LOOP)⚡️

60.1K posts

@LoopOnChain

Founder and Managing Partner Edge AI | prev @Rivian | I show you how to transform your life with AI @umich

Company Brain @t_blom Every company has critical know-how scattered across people's heads, old Slack threads, support tickets, and databases, and AI agents can't operate like that. We think every company in the world is going to need a new primitive: a living map of how the company works that turns its own artifacts into an executable skills file for AI.

GPT-5.5 by @OpenAI is now live in the Arena, landing across multiple leaderboards. Here’s how it ranks by modality:

- Code Arena (agentic web dev): #9, a strong +50pt jump over GPT-5.4
- Document Arena (analysis & long-content reasoning): #6, on par with Sonnet 4.6
- Text Arena: #7, Math: #3, Instruction Following: #8
- Expert Arena: #5
- Search Arena: #2
- Vision Arena: #5

Strong, well-rounded performance, especially in Code (+50 pts vs GPT-5.4). Congrats to @OpenAI on the release. Full category breakdowns by modality in the thread.

It won’t happen. People say all kinds of things in surveys. Watch what they do instead. And what they do is… use AI.

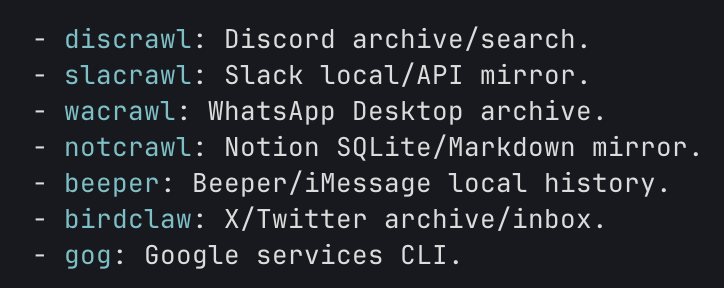

OpenClaw 2026.4.26 🦞

- 🎙️ Google Live Talk
- 🦙 Better Ollama/local models
- 🧳 Bring over Claude + Hermes setups
- 🔐 One-command Matrix E2EE

Big release. Local models eat well. github.com/openclaw/openc…

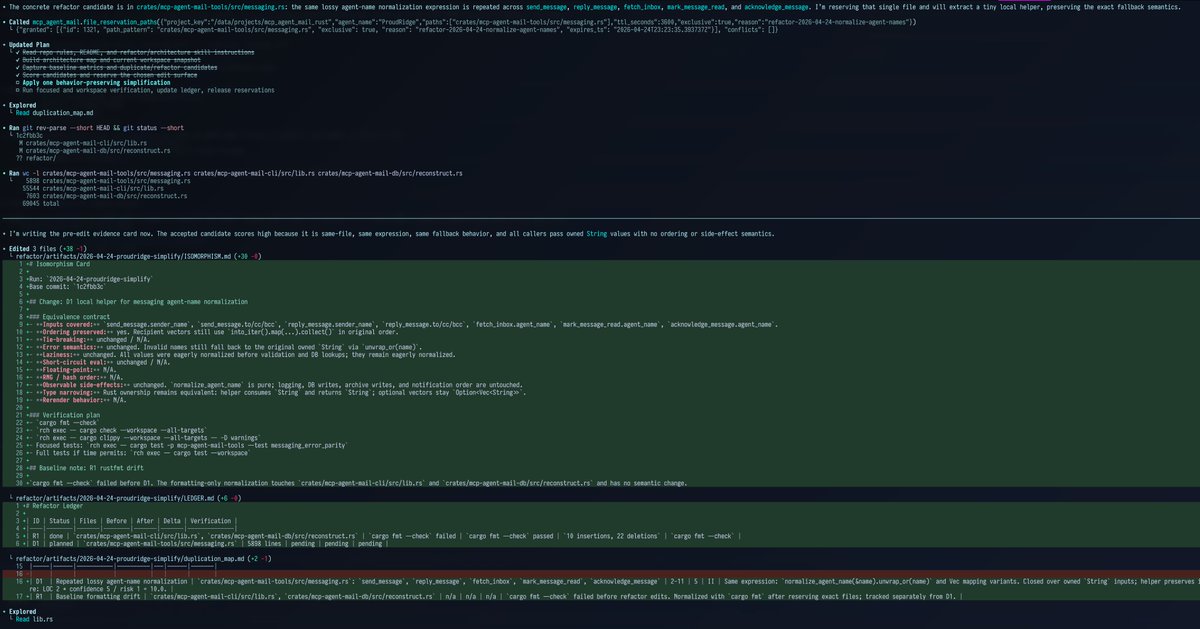

Everyone is slowly coming to this realization, and I assure you, no one is running multitudes of agents overnight. No one who is doing anything of substance, at least. There _are_ people pretending to be scientists, or fully caught up in their drug-infused AI overdose, who think their slop machines are changing the world. They're not, though, and they're just wasting a bunch of money and compute to create a lot of LoC that will just get thrown away. The state of the art is still "can we even one-shot a production-quality patch that we won't regret later", and it's rarer than you'd expect based on the discourse.

@LoopOnChain Yeah there's a bunch more work that's in the pipeline, this will change this week.