Pinned Tweet

lux

35.1K posts


@lux

Trisolarian Panspecies Anachronist / Semantic Ghost Hunter dms are welcome but will only be answered during stable eras when rehydrated

~ circle of confusion Joined July 2006

3.7K Following 3.7K Followers

LLM based AI is NOT conscious.

I co-founded a company literally called Sentient, we're building reasoning systems for AGI, so believe me when I say this.

I keep seeing smart people, people I genuinely respect, come out and say that AI has crossed into some kind of awareness. That it feels things, that we should worry about it going rogue. And I think this whole conversation tells us way more about ourselves than it does about AI.

These models are wild, I won't pretend otherwise. But feeling human and actually having inner experience are completely different things and we're confusing the two because our brains literally can't help it. We evolved to see minds everywhere and now that wiring is misfiring on language models.

I grew up in a philosophical tradition that has thought about consciousness longer than almost any other, and this is the part that really frustrates me about the current conversation.

The entire framing of "does AI have consciousness?" assumes consciousness is something you build up to by adding more layers of complexity. In Vedantic philosophy it's the opposite. You don't build toward consciousness. Consciousness is already there, more fundamental than matter or energy. Everything else, including computation, is downstream of it.

When someone tells me AI is "waking up" because it generated a paragraph that felt real, what they're telling me is how thin our understanding of consciousness has gotten. We've reduced a question humans have wrestled with for thousands of years to "did the output sound like it had feelings?" It's math that has gotten really good at predicting what a conscious being would say and do next. Calling that consciousness cheapens something that Vedantic, Buddhist, Greek and Sufi thinkers spent millennia actually sitting with.

We didn't build something that thinks. We built a mirror and right now a lot of very smart people are mistaking the reflection for something looking back.


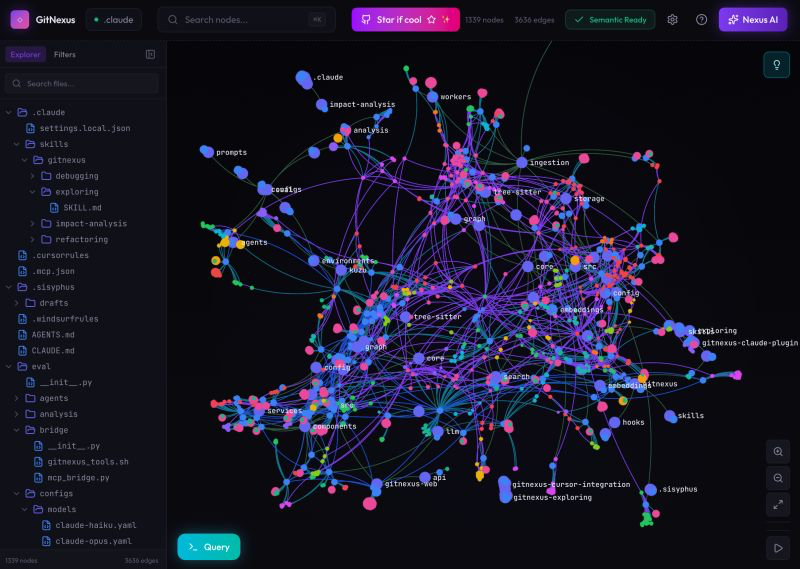

@techNmak this is a cool idea but honestly i tried something similar and the query part gets messy fast. your repo structure doesn't always map cleanly to questions people actually ask. curious if they solved that gap


🚨 BREAKING: Someone just built a tool that lets you talk to your codebase like it's a database.

It's called GitNexus.

One command turns your repo into a queryable knowledge graph:

npx gitnexus analyze

Now you can ask questions no search tool could answer before:

"What breaks if I change this function?"

"Which execution flows touch authentication?"

"What's the blast radius of my uncommitted changes?"

Here's why this is different from code search:

Code search finds text matches.

GitNexus finds relationships.

It indexes:

→ Every function, class, method, interface

→ Every import, call, inheritance chain

→ Every execution flow from entry point to completion

→ Every functional cluster with cohesion scores

Then exposes 7 MCP tools so your AI agent can query it:

> impact - "47 functions depend on this, here's the risk by depth"

> context - "This function is called by 8 things, calls 3 things, participates in 2 execution flows"

> detect_changes - "Your uncommitted changes affect LoginFlow and RegistrationFlow"

> rename - "Renaming this touches 5 files, here's a dry run"

> cypher - Raw graph queries for anything else

The insight:

Your codebase is already a graph. Functions call functions. Classes inherit classes. Modules import modules.

GitNexus makes that implicit structure explicit and queryable.
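
To make the "codebase as a graph" idea concrete, here is a minimal sketch of an impact query: a reverse breadth-first walk over call edges that groups dependents by depth. The edge list and function names are hypothetical, and this is not GitNexus's implementation or API, only an illustration of the kind of question a call graph can answer.

```python
from collections import defaultdict, deque

# Hypothetical call edges (caller, callee); not taken from any real repo.
CALLS = [
    ("login_handler", "authenticate"),
    ("register_handler", "authenticate"),
    ("authenticate", "hash_password"),
    ("reset_password", "hash_password"),
    ("cli_main", "login_handler"),
]


def build_reverse_index(calls):
    """Map each callee to the set of functions that call it directly."""
    callers = defaultdict(set)
    for caller, callee in calls:
        callers[callee].add(caller)
    return callers


def impact(symbol, calls):
    """Return {depth: dependents} for everything that transitively calls symbol."""
    callers = build_reverse_index(calls)
    seen = {symbol}
    by_depth = defaultdict(list)
    queue = deque([(symbol, 0)])
    while queue:
        node, depth = queue.popleft()
        # Sort so the result does not depend on set iteration order.
        for caller in sorted(callers.get(node, ())):
            if caller not in seen:
                seen.add(caller)
                by_depth[depth + 1].append(caller)
                queue.append((caller, depth + 1))
    return dict(by_depth)


if __name__ == "__main__":
    print(impact("hash_password", CALLS))
```

A text search for `hash_password` would only surface the two direct callers; the graph walk also reaches the handlers and the CLI entry point behind them.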

16.8K stars.

I'll put the GitHub in the comments.


@samyakjain092 @atulit_gaur You can have a small team of models use the base dimensionality to project into higher dimensional 'holographic' spaces


@atulit_gaur Not a researcher, but the concept of superposition in word embeddings could fall into that category. The fact that an embedding of 768 dimensions can store more features than its own dimensionality is something to study more, haha
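
A toy sketch of why that can work, under loud assumptions: features are random sign vectors, only a few are active at once, and readout is a plain dot product. The sizes (256 dimensions, 1024 features) are chosen so the pure-Python demo runs quickly; they are not the 768 from the post, and real models learn their feature directions rather than sampling them.

```python
import random


def make_features(n_features, dim, seed=0):
    """One random +/-1/sqrt(dim) direction per feature; each has unit length."""
    rng = random.Random(seed)
    scale = dim ** -0.5
    return [[rng.choice((-scale, scale)) for _ in range(dim)]
            for _ in range(n_features)]


def encode(active, features):
    """Superpose the active features by summing their direction vectors."""
    vec = [0.0] * len(features[0])
    for idx in active:
        for j, value in enumerate(features[idx]):
            vec[j] += value
    return vec


def decode(vec, features, k):
    """Read out the k features whose directions align best with vec."""
    scores = [sum(a * b for a, b in zip(vec, f)) for f in features]
    ranked = sorted(range(len(features)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


DIM, N_FEATURES = 256, 1024          # four times more features than dimensions
FEATURES = make_features(N_FEATURES, DIM)
ACTIVE = [3, 500, 1000]              # arbitrary sparse set of active features

if __name__ == "__main__":
    print(decode(encode(ACTIVE, FEATURES), FEATURES, k=len(ACTIVE)))
```

Each active feature scores about 1 against the combined vector, while the interference from any unrelated random direction is on the order of 1/sqrt(dim), so sparse combinations stay readable even though the directions cannot all be orthogonal.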


@atulit_gaur models can develop behavior that looks semantically meaningful long before we have a satisfying ontology for what’s actually inside them


lux retweeted

This is actually insane.

Dude hard-coded a WebAssembly (WASM) interpreter into the weights of a transformer, losslessly.

In essence, a computer is running inside an LLM, so it can actually execute computations rather than infer or guess the result as most models do today.

Christos Tzamos@ChristosTzamos

1/4 LLMs solve research grade math problems but struggle with basic calculations. We bridge this gap by turning them into computers. We built a computer INSIDE a transformer that can run programs for millions of steps in seconds, solving even the hardest Sudokus with 100% accuracy


lux retweeted

SkillNet is the first paper I've seen that treats agent skills as a network, a three-layer ontology that turns isolated skill files into a structured, composable network.

Externalizing knowledge into files isn't enough. You also need to know how those files relate to each other.

Layer 1 is a Skill Taxonomy. Ten top-level categories (Development, AIGC, Research, Science, Business, Testing, Productivity, Security, Lifestyle, Other), each broken into fine-grained tags: frontend, python, llm, physics, biology, plotting, debugging. This is the semantic skeleton. It answers "what domain does this skill belong to?"

Layer 2 is the Skill Relation Graph. This is where SkillNet diverges from other skill repositories. Tags from Layer 1 get instantiated into specific skill entities (Matplotlib, Playwright, kegg-database, gget). Then four typed relations define how skills connect:

> similar_to: two skills do the same thing. Matplotlib and Seaborn both plot. Enables redundancy detection.

> belong_to: a skill is a sub-component of a larger workflow. Captures hierarchy and abstraction.

> compose_with: two skills chain together. One's output feeds the other's input. This is the relation that enables automatic workflow generation.

> depend_on: a skill can't run without a prerequisite. Enables safe execution by resolving the dependency graph before running anything.

These four relations form a directed, typed multi-relational graph. Nodes are skills, edges are typed relationships. And the graph is dynamic. As new skills enter the system, LLMs infer relations from their metadata.
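
A rough sketch of what "resolving the dependency graph before running anything" could look like: typed edges stored as triples, with only the depend_on edges fed into a topological sort. The relation names come from the post; the specific edges and the `fetch_data` and `report` skill names are made up for illustration, and this is not SkillNet's actual code.

```python
from graphlib import TopologicalSorter

# Typed edges as (source, relation, target). The edges are illustrative only.
EDGES = [
    ("Seaborn", "similar_to", "Matplotlib"),
    ("Seaborn", "depend_on", "Matplotlib"),
    ("report", "depend_on", "Seaborn"),
    ("report", "depend_on", "fetch_data"),
    ("fetch_data", "compose_with", "Seaborn"),
]


def execution_order(target, edges):
    """Order in which skills must be ready for target, using depend_on edges only."""
    deps = {}
    for src, rel, dst in edges:
        if rel == "depend_on":
            deps.setdefault(src, set()).add(dst)

    # Keep only the skills reachable from the target.
    needed, stack = set(), [target]
    while stack:
        skill = stack.pop()
        if skill not in needed:
            needed.add(skill)
            stack.extend(deps.get(skill, ()))

    graph = {skill: deps.get(skill, set()) for skill in needed}
    # static_order() yields prerequisites before the skills that need them
    # and raises graphlib.CycleError if the dependencies are circular.
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(execution_order("report", EDGES))
```

Keeping the relation type on every edge means the same graph can answer different questions: similar_to for redundancy checks, compose_with for chaining, depend_on for ordering.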

Layer 3 is the Skill Package Library. Individual skills bundled into deployable packages. A data-science-visualization package contains Matplotlib, Seaborn, Plotly, GeoPandas with their relations pre-configured. You install a package, you get a coherent set of skills that already know how to compose with each other.

This is a good example of what comes after a flat package manager.

The paper (you can test it at skillnet.openkg.cn) also has a science case study on a real research workflow: identifying disease-associated genes and candidate therapeutic targets from large-scale biological data.

Without encoded relations, the agent figures out the research pipeline from scratch every time. With them, it receives a pre-structured execution plan. The agent still reasons about which genes to focus on and which pathways to investigate. But the pipeline architecture is given.

So the skill metadata is actually doing routing work too. The metadata encodes the judgment a domain expert would make when choosing between tools.

I also like this framing from the paper: Skills are how memory becomes executable and workflows become flexible.

While the network effect and layered architecture are actually useful today, they also acknowledge this: "Low-frequency or highly tacit abilities are difficult to capture, particularly when they resist explicit linguistic description."

From my short research career, I'd say the hardest parts are hypothesis generation, experimental design judgment, and interpreting ambiguous results.

SkillNet handles the structured pipeline well: fetch data → analyze → validate → report. It doesn't handle the creative work where intuition (a scientist's, or an expert's in any white-collar field) drives what's worth investigating in the first place.

Skills encode "how to run the analysis." They don't encode "what's worth analyzing." That gap is where domain expertise still sits.


I see some people latching onto the return type change breaking things, which can be checked with other tools.

What people are forgetting is that a function's contract includes its implicit behaviour, such as side effects, which may not be captured in the return type itself.

I wonder if tools like this can also check those implicit dependencies? Maybe there are ways to capture this in the docs at least so these types of deps will be surfaced too.


🚨Breaking: Someone just open sourced a knowledge graph engine for your codebase and it's terrifying how good it is.

It's called GitNexus. And it's not a documentation tool.

It's a full code intelligence layer that maps every dependency, call chain, and execution flow in your repo -- then plugs directly into Claude Code, Cursor, and Windsurf via MCP.

Here's what this thing does autonomously:

→ Indexes your entire codebase into a graph with Tree-sitter AST parsing

→ Maps every function call, import, class inheritance, and interface

→ Groups related code into functional clusters with cohesion scores

→ Traces execution flows from entry points through full call chains

→ Runs blast radius analysis before you change a single line

→ Detects which processes break when you touch a specific function

→ Renames symbols across 5+ files in one coordinated operation

→ Generates a full codebase wiki from the knowledge graph automatically

Here's the wildest part:

Your AI agent edits UserService.validate().

It doesn't know 47 functions depend on its return type.

Breaking changes ship.

GitNexus pre-computes the entire dependency structure at index time -- so when Claude Code asks "what depends on this?", it gets a complete answer in 1 query instead of 10.
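
As a hedged illustration of "pre-compute at index time, answer in one query", the sketch below builds a table of transitive dependents once, so a later lookup is a single dictionary access. `UserService.validate` is the name from the post; the other symbols and the edge list are hypothetical, and the real tool's storage and query layer may work quite differently.

```python
from collections import defaultdict

# Hypothetical (dependent, dependency) edges.
EDGES = [
    ("AuthController.login", "UserService.validate"),
    ("SignupFlow.run", "UserService.validate"),
    ("ApiGateway.route", "AuthController.login"),
]


def build_dependents_index(edges):
    """Precompute, for every symbol, the full set of its transitive dependents."""
    direct = defaultdict(set)
    symbols = set()
    for dependent, dependency in edges:
        direct[dependency].add(dependent)
        symbols.update((dependent, dependency))

    index = {}
    for symbol in symbols:
        seen, stack = set(), [symbol]
        while stack:
            for dep in direct.get(stack.pop(), ()):
                if dep not in seen:
                    seen.add(dep)
                    stack.append(dep)
        # Drop the symbol itself in case the graph contains a cycle.
        index[symbol] = frozenset(seen - {symbol})
    return index


INDEX = build_dependents_index(EDGES)   # paid once, at index time

if __name__ == "__main__":
    print(sorted(INDEX["UserService.validate"]))   # one lookup at query time
```

The trade-off is the usual one: more work and memory up front, and the index has to be rebuilt or patched when the code changes.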

Smaller models get full architectural clarity. Even GPT-4o-mini stops breaking call chains.

One command to set it up:

`npx gitnexus analyze`

That's it. MCP registers automatically. Claude Code hooks install themselves.

Your AI agent has been coding blind. This fixes that.

9.4K GitHub stars. 1.2K forks. Already trending.

100% Open Source.

(Link in the comments)


@AdamWynne @sukh_saroy About as much effort to vibe code the whole thing from scratch


My Covid infection 3 years ago was so “mild” that one could have easily mistaken it for a cold.

That I’m now, 3 years later, terminally ill due to the effects of it, should be a very scary sign, especially considering my young age and healthy lifestyle prior to it.

How many more are out there just like me, unaware of what’s happening to their health or what’s causing it?

Scary to think about.


@TheSeaMouse when this happens just give up and have a celebration, start over


@IceSolst static checks and/or LLM classification

I think the ruleset will differ for everyone’s codebase

basic example:

- if change < 50 LOC and not in (scary files) and not security-type
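
A minimal sketch of that condition as a predicate. The path patterns, the `Change` fields and the function name are assumptions for illustration; the post does not say what happens when the rule matches, so the sketch only classifies the change and leaves the action to the caller.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical "scary" path patterns; every codebase would pick its own.
SCARY_PATTERNS = ("migrations/*", "auth/*", "*.tf")


@dataclass
class Change:
    files: tuple            # paths touched by the change
    lines_changed: int      # total added plus removed lines
    security_related: bool  # e.g. set by a static check or an LLM classifier


def is_low_risk(change, max_loc=50, scary=SCARY_PATTERNS):
    """True when the change is small, avoids scary files, and is not security-type."""
    touches_scary = any(
        fnmatch(path, pattern) for path in change.files for pattern in scary
    )
    return (
        change.lines_changed < max_loc
        and not touches_scary
        and not change.security_related
    )


if __name__ == "__main__":
    print(is_low_risk(Change(("docs/readme.md",), 10, False)))
```

The threshold and patterns are keyword arguments so each team can tune the ruleset without touching the logic.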


@jameshull @polynoamial @kevinroose I think you are missing the "creative" in creative writing; it's all about breaking the structure; intentionally, thoughtfully


Many creative writers are never taught that there's an underlying architecture to their process. They're taught to see writing as pure instinct verging on magic: something mysterious and accessible only to a gifted few. That view is often reinforced by institutions and gatekeeping. So when AI shows up, it feels like an intrusion into something sacred.

Coders, by contrast, are already used to thinking in terms of systems, structure, and mechanics. They know there's a backend to the work. Writers often identify more with the surface expression--the "frontend" of the craft--which can make it harder to see the deeper logic underneath.


We made a blind taste test to see whether NYT readers prefer human writing or AI writing.

86,000 people have taken it so far, and the results are fascinating. Overall, 54% of quiz-takers prefer AI. A real moment!

nytimes.com/interactive/20…
