TheStuffOfStars


I use AI to do research (i.e., find things to read, explain parts of academic papers I find ambiguous or confusing), transcribe interviews, generate pushback on my column thesis, suggest trims when I'm over my word count, sharpen podcast interview questions, and perform a final fact check on columns and editorials. But mostly it compresses the tasks ancillary to the main job: reading, thinking, and writing.

I agree this is self-evident. It seems there’s a deeper disagreement here about what needs explaining. One side defines consciousness as qualia (subjective experience from the inside). The other defines consciousness as a kind of information processing. Which side you take frames the whole debate.

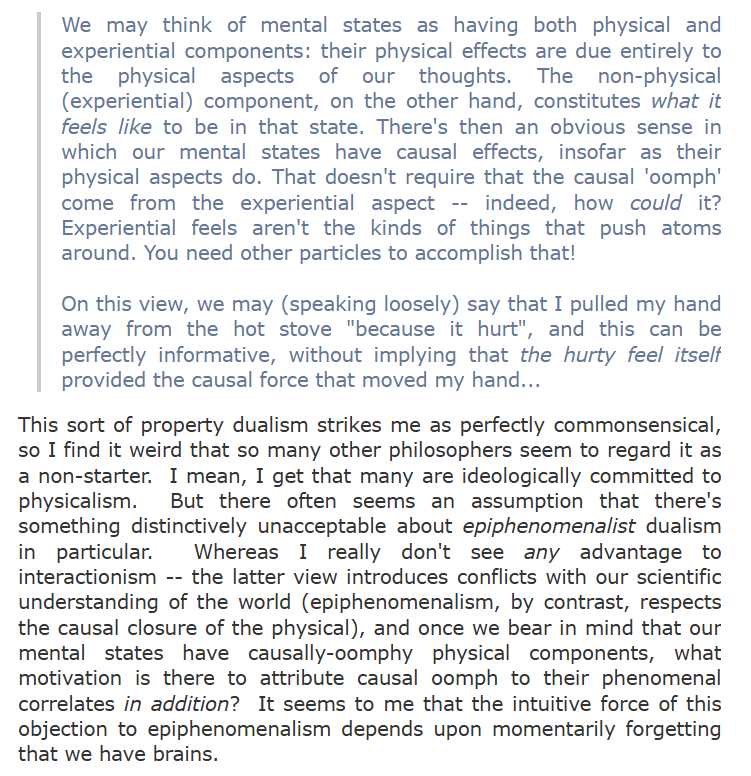

Epiphenomenalism seems like the very worst option in philosophy of mind, because it preserves the absolute worst of dualism and physicalism. Qualia aren't physical, yet we have no epistemic access to them at all. No debate about qualia has ever been caused by either participant having qualia, and yet the qualia are supposedly there, separate from the physical world. I meet a lot of people smarter than me who entertain epiphenomenalism, and I have yet to hear an argument for how I could even consider it in the first place.

As someone who has been experimenting with GPT-3 for over a year now, I'm astounded by some of the skepticism. It’s unbelievably powerful, and at the same time not at all unique, as subsequent developments with LLMs have demonstrated.

Will be accepting these at the exit

"dont eat animals cuz its bad for the environment" >proceeds to use ai