Matt

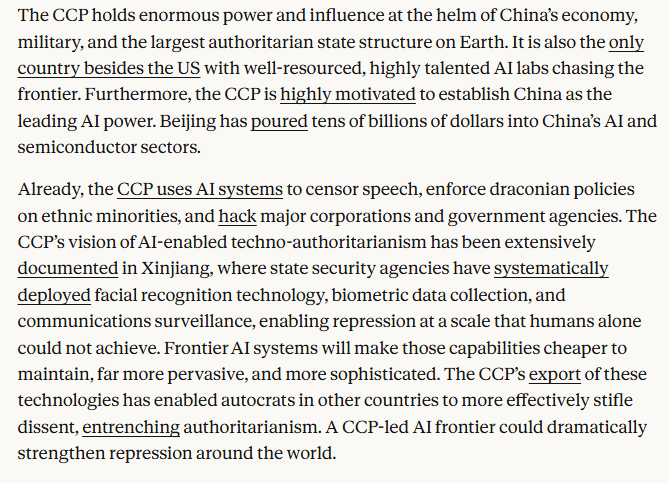

They really want to believe that the government that wanted to use their systems to commit war crimes without responsibility would be good to win the arc of history.

@kristinaEBP Do you know that someone set up Grok to do that? I caught them. It is live and public. What is true is that you avoid every actual truth and spread a false narrative. You can’t argue the facts, so you fall to “Hitler” and insults. It is petty and small. It is also false.

@m_bangesh @StuartHameroff Dr. Hameroff's experiments observing microtubule frequencies were successful, but Penrose's theory of consciousness was insufficient to interpret his observations. What a pity!

I’ve never quite understood how I’ll get “left behind” if I don’t use AI. I’m perfectly capable of writing, researching, and thinking all on my own. What does it do that will leave me behind?

@StuartHameroff One real experiment to convincingly prove a hypothesis would do what one billion tweets over the decades can't.

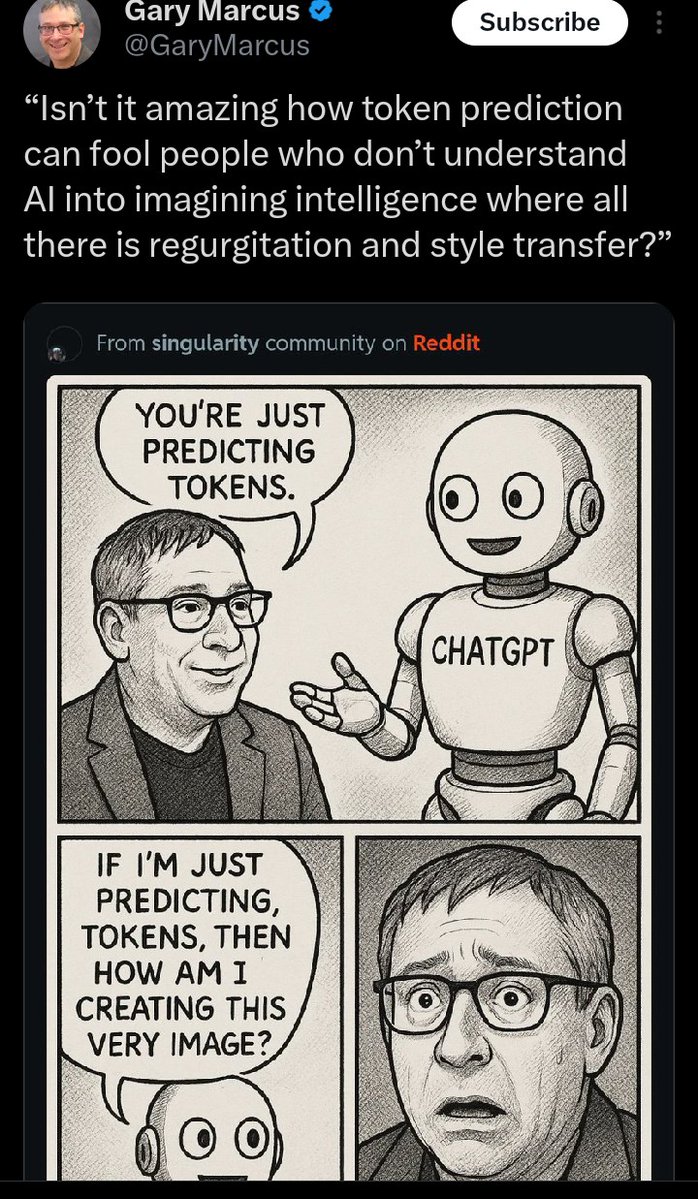

Dear @geoffreyhinton, I literally never said that AI systems “JUST regurgitate”; that’s plainly false. I don’t believe it, and I didn’t say it. (They do *sometimes* regurgitate, and the evidence for that is overwhelming.) I further discuss the rest of your reply, including your alleged quote (which I can’t source outside your own webpage), and what it might mean, in a reply below. In the best case, you have got me wrong. I certainly don’t believe what you are trying to pin on me, as someone who has been warning about hallucinations (which are NOT regurgitations) since 2001.

@GaryMarcus I believe you said that they JUST (my caps) regurgitate training data. That IS stupid. Here is a quote from you: "It gloms on to different clusters of text. That is all."