Max Sapo

@MaxHappyverse

Co-Founder & CEO @ Happyverse AI. Ex-Google TPU team & Etched. Stanford MBA/MS grad. Georgia Tech dropout. Dad.

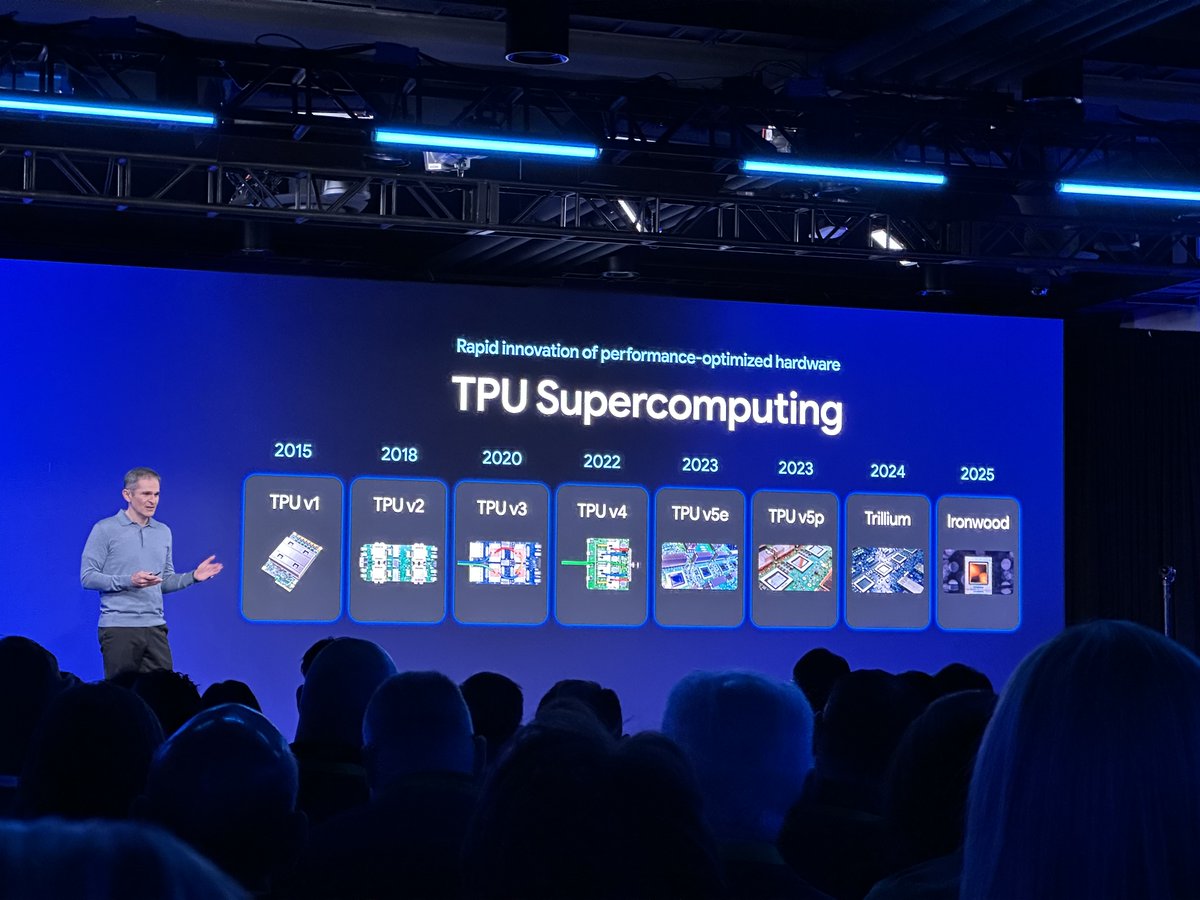

Amin Vahdat live at Transition-AI 2026 with @CatalystPod. Google's Chief Technologist for AI Infrastructure: the man in charge of Google's $175–185B 2026 CapEx and the one who's said compute capacity needs to 2x every 6 months!

Key takeaways:

Training → Inference cascade
- Frontier GW training clusters have a ~1–2 year useful life; then the capacity cycles to serving
- Inference doesn't need GW scale: <100 MW can do useful work on large models
- "Entering the age of inference" is now real as agents explode DC footprint
- Speed-of-light latency starts to matter as models get faster; geography becomes UX + reliability
- "a medium number of medium-sized data centers, augmented with a small number of large ones" (a few large training clusters; inference can be a mix of 10s to 100s of MW)

Reliability reframe
- 4-nines isn't intrinsic: "we should be thinking about lower reliability power delivery overall"
- Would you make this trade: 4-nines at half capacity, or 2-nines (3.65 days downtime/yr) at 2x capacity? Customers "very often" pick 2x

Behind the meter (BTM)
- Google actually prefers grid-connected capacity, so BTM is a bridge, not a destination
- BTM is about a different latency: time-to-delivery of capacity
- Bridge sources: turbines, gas, mobile generation. Permanent: solar, wind, nuclear
- Stranded BTM? "I'd love to have that problem." Energy is the limiter

Co-design = capability
- Google co-designs across Gemini ↔ software ↔ TPU ↔ rack ↔ DC ↔ power ↔ building
- A few percent at each interface compounds into real advantage

Bottlenecks
- Won't force-rank chips/power/labor/EPC: "10am it's labor, noon it's power, 2pm it's chips, every day"
- YoY efficiency is real: this year's capacity would've cost ~1.2x as much last year

Building fungibility is dead, purpose-build is back
- 25-yr buildings vs. 5–6 chip generations. Disk rack vs. GPU rack = ~100x watts/sq ft, and the gap is widening
- Old world: build for fungibility (compute wasn't the dominant cost). New world: "this is a GPU building, that's a TPU building"
- Density wasn't maxed before because flexibility was worth more. That's flipped

One of the most important pods of the year from an AI leader at one of the most important companies at the center of it all! Great job @shaylekann
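The 4-nines vs. 2-nines trade in the post is plain arithmetic. Here is a minimal sketch, assuming "availability" is the fraction of the year the capacity is online and that an outage takes *all* of it offline (a simplification; real outages are often partial). The function names are hypothetical, for illustration only, and capacity is expressed as a multiple of some baseline (0.5 for "half", 2.0 for "2x").

```python
def downtime_days_per_year(availability: float) -> float:
    """Expected downtime in days per year (365-day year) for an availability fraction."""
    return (1.0 - availability) * 365


def expected_capacity(availability: float, capacity_multiple: float) -> float:
    """Time-averaged usable capacity relative to a baseline of 1.0.

    Hypothetical model: outages are all-or-nothing, so usable capacity is
    simply availability * installed capacity.
    """
    return availability * capacity_multiple


# The two options from the post: (availability, capacity multiple of baseline)
options = {
    "4-nines at half capacity": (0.9999, 0.5),
    "2-nines at 2x capacity": (0.99, 2.0),
}

for name, (avail, cap) in options.items():
    print(
        f"{name}: downtime ~{downtime_days_per_year(avail):.2f} days/yr, "
        f"expected capacity {expected_capacity(avail, cap):.3f}x"
    )
```

Under these assumptions the 2-nines option delivers roughly four times the expected capacity (about 1.98x vs. 0.5x of baseline) in exchange for ~3.65 days of downtime a year, which is why the trade can be attractive for workloads that tolerate occasional outages.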

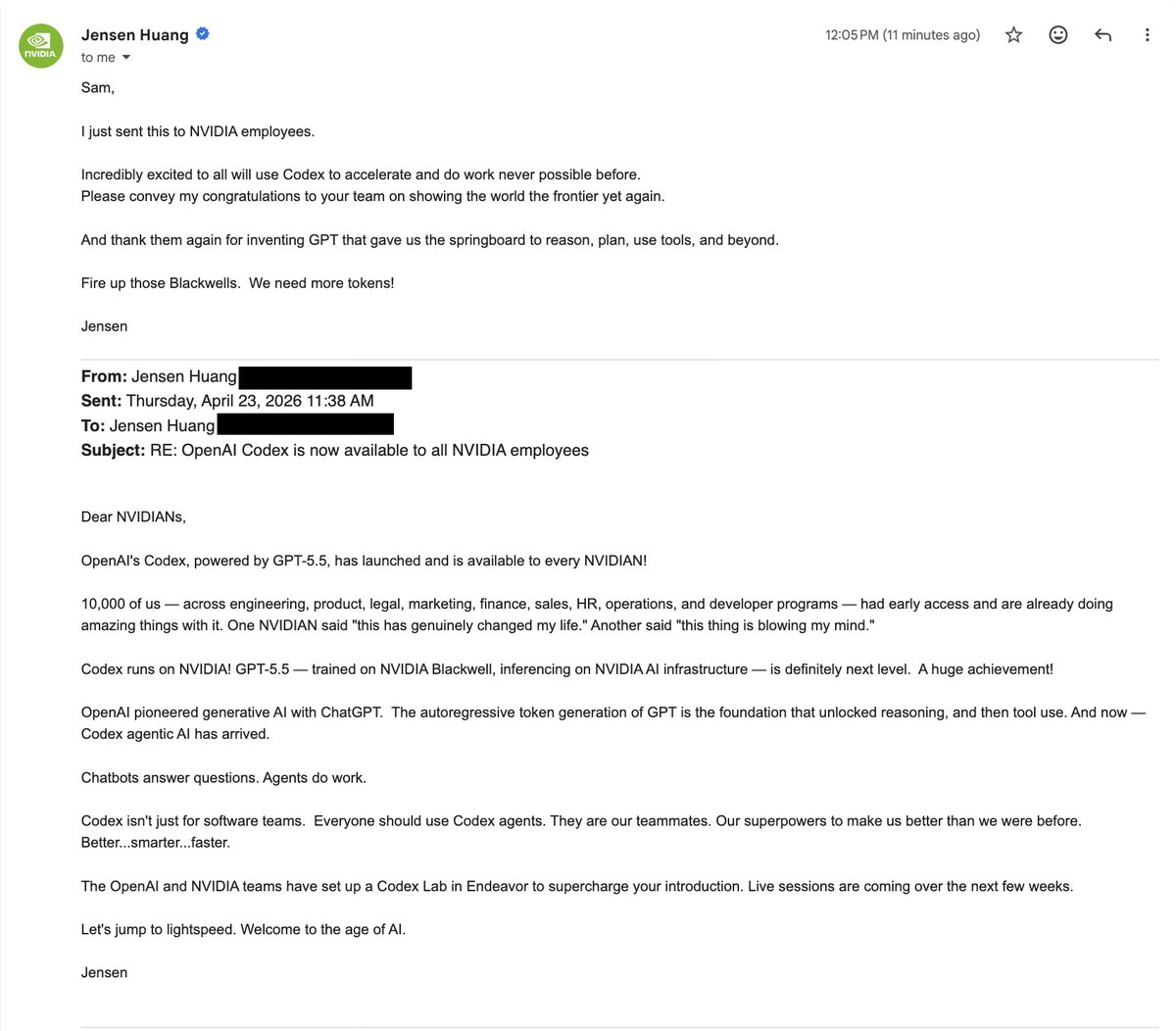

We tried a new thing with NVIDIA to roll out Codex across a whole company and it was awesome to see it work. Let us know if you'd like to do it at your company!

If you are at Google Cloud Next, come and join us at the Gemini Playspace to talk about Gemini models.