Mecka

106 posts

@MeckaAI

The Data Platform For Robotics Dba Mecka AI

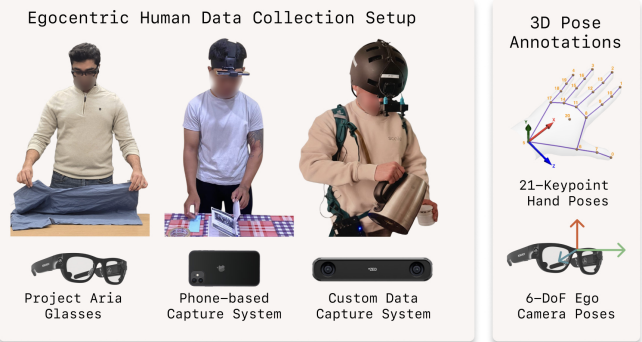

Introducing EgoVerse: an ecosystem for robot learning from egocentric human data. Built and tested by 4 research labs + 3 industry partners, EgoVerse enables both science and scaling: 1300+ hrs, 240 scenes, 2000+ tasks, and growing. Dataset design, findings, and ecosystem 🧵

Mecka is excited to power EgoVerse: a growing ecosystem for robot learning from egocentric human data. Proven across 4 leading research labs, EgoVerse data consistently boosts robot performance. We release the tools for anyone to collect data, train/run inference, and contribute! 🧵

excited to announce i've joined my dear friend @jasontheutopian to build @MeckaAI. robotics will be the next trillion dollar market and we're building the infrastructure to make it happen. more to come soon 👀