We have a new position paper on "inference time compute" and what we have been working on in the last few months! We present some theory on why it is necessary, how it works, and what it means for "super" intelligence.

nathan lile


@NathanThinks

ceo/cofounder @ https://t.co/bDd3J4Lmzf hiring in SF 🌁 scaling synthetic reasoning. recurrent rabbit hole victim. nothing great is easy.


Qwen+RL = dramatic, Aha! Llama+RL = quick plateau. Same size. Same RL. Why? Qwen naturally exhibits cognitive behaviors that Llama doesn't. Prime Llama with 4 synthetic reasoning patterns & it matched Qwen's self-improvement performance! We can engineer this into any model! 👇

LoRA makes fine-tuning more accessible, but it's unclear how it compares to full fine-tuning. We find that the performance often matches closely, more often than you might expect. In our latest Connectionism post, we share our experimental results and recommendations for LoRA. thinkingmachines.ai/blog/lora/

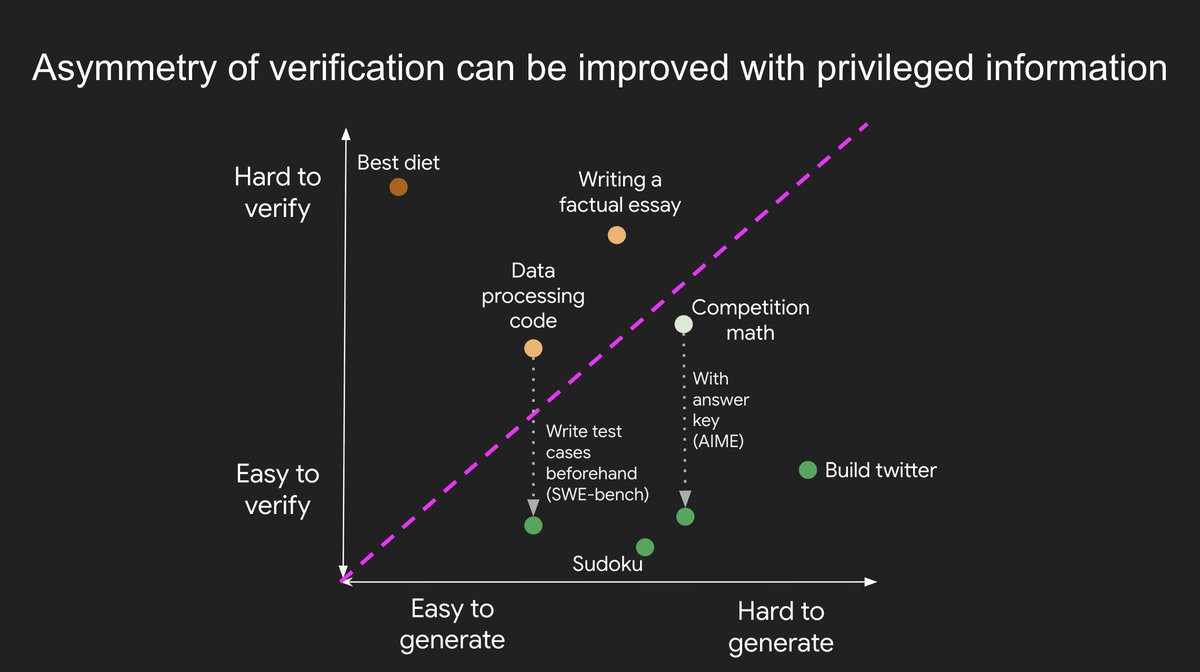

Scalable oversight is pretty much the last big research problem left. Once you get an unhackable reward function for anything then you can RL on everything.

Earlier this year we partnered with SynthLabs (synthlabs.ai), a post-training research lab, to generate a 351 billion token synthetic dataset 10x faster and 80% cheaper. Read more in our case study: sutro.sh/case-studies/s…

At the beginning of the study, developers forecasted that they would get sped up by 24%. After actually doing the work, they estimated that they had been sped up by 20%. But it turned out that they were actually slowed down by 19%.

this is the Kimi K2 base model's attempt to complete an unfinished version of my blogpost

blocked it because of this. No hate on the timeline please!

What if models could learn which problems _deserve_ deep thinking? No labels. Just let the model discover difficulty through its own performance during training. Instead of burning compute 🔥💸 on trivial problems, it allocates 5x more on problems that actually need it ↓

Our new method (ALP) monitors solve rates across RL rollouts and applies inverse difficulty penalties during RL training. Result? Models learn an implicit difficulty estimator—allocating 5x more tokens to hard vs easy problems, cutting overall usage by 50% 🧵👇1/10
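To make the idea in the post above concrete, here is a minimal sketch of a solve-rate-scaled length penalty. It is not the ALP implementation: the reward form (`base - beta * solve_rate * length_frac`), the names `Rollout` and `penalized_rewards`, and the parameters `beta` and `max_tokens` are all assumptions made for illustration. It only shows how a per-prompt solve rate measured across rollouts could make long answers costly on easy problems and nearly free on hard ones.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rollout:
    correct: bool    # whether this sampled answer was judged correct
    num_tokens: int  # length of the sampled response in tokens


def solve_rate(rollouts: List[Rollout]) -> float:
    """Fraction of rollouts for a single prompt that were correct."""
    if not rollouts:
        raise ValueError("need at least one rollout")
    return sum(r.correct for r in rollouts) / len(rollouts)


def penalized_rewards(
    rollouts: List[Rollout],
    beta: float = 0.5,       # hypothetical penalty strength
    max_tokens: int = 4096,  # hypothetical token budget used for normalization
) -> List[float]:
    """Correctness reward minus a length penalty scaled by the solve rate.

    A high solve rate (easy prompt) gives a strong penalty on length;
    a low solve rate (hard prompt) gives a weak one.
    """
    p = solve_rate(rollouts)
    rewards = []
    for r in rollouts:
        base = 1.0 if r.correct else 0.0
        length_frac = min(r.num_tokens, max_tokens) / max_tokens
        rewards.append(base - beta * p * length_frac)
    return rewards


# Easy prompt: every rollout is correct, so length is penalized heavily.
easy = [Rollout(True, 2048) for _ in range(4)]
# Hard prompt: one of four rollouts is correct, so length is penalized lightly.
hard = [Rollout(True, 2048)] + [Rollout(False, 4096) for _ in range(3)]

easy_rewards = penalized_rewards(easy)
hard_rewards = penalized_rewards(hard)
```

In this sketch a correct 2048-token answer earns less on the easy prompt than on the hard one, so a policy trained on such rewards would be pushed to shorten its answers mainly where it already succeeds reliably.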