Neo

@Ne0xf

I work 4 @Minima_Global - You won't find TA or FA here, just some memes and bullish tweets

4 months ago I wrote the first line of zkRune for a hackathon. The idea was simple: what if you could prove something about yourself without revealing the thing itself?

That hackathon ended. I kept building. What started as a demo turned into real infrastructure. Every week I pushed it further, from "cool concept" toward something you could actually ship in production.

The proof generation runs entirely in your browser. No server ever sees your data. That wasn't a shortcut. It was the whole point.

Along the way it grew into things I didn't plan on day one. A Telegram bot that gates a whale chat with ZK proofs instead of screenshots. An embeddable widget where one script tag gives any site private verification. A mobile app. A developer portal. A trust model that honestly tells you which circuits are production-grade and which aren't.

The moment that changed everything: deploying the Groth16 verifier on Solana mainnet. That's when it stopped being a project and started being infrastructure.

Then came on-chain balance attestation. Balance proof used to rely on user input. Now it pulls your real token balance from Solana RPC and signs it. Self-asserted became chain-verified.

296 commits later, here's what zkRune is today: 14 ZK circuits. SDK. CLI. Verify API. Embeddable widget. Mobile app. Telegram bot. Trusted setup ceremony completed. Merkle tree membership proofs. Developer docs with full trust model.

From hackathon to privacy infrastructure. Still building. zkrune.com
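For readers unfamiliar with the "Merkle tree membership proofs" mentioned in the post, here is a minimal sketch of the underlying idea in Python. It is not zkRune's code: the function names (`build_levels`, `prove`, `verify`) and the member list are hypothetical, SHA-256 is an assumed stand-in for whatever hash the real circuits use, and the leaf/node prefixes borrow the domain-separation idea from RFC 6962. This plain version reveals the leaf to the verifier; a ZK circuit would instead prove knowledge of a leaf and path without disclosing them.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 digest (assumed hash; real ZK circuits often use a different one)."""
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Build every level of the tree, from hashed leaves up to the root."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(b"\x00" + leaf) for leaf in leaves]  # 0x00 prefix marks a leaf
    levels = []
    while True:
        if len(level) > 1 and len(level) % 2 == 1:
            level = level + [level[-1]]  # pad odd levels by repeating the last node
        levels.append(level)
        if len(level) == 1:
            return levels
        # 0x01 prefix marks an interior node
        level = [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Collect (sibling_hash, sibling_is_left) pairs from leaf to root."""
    path = []
    for level in levels[:-1]:
        path.append((level[index ^ 1], index % 2 == 1))
        index //= 2
    return path


def verify(root: bytes, leaf: bytes, path: list[tuple[bytes, bool]]) -> bool:
    """Recompute the root from a leaf and its sibling path."""
    node = h(b"\x00" + leaf)
    for sibling, sibling_is_left in path:
        pair = sibling + node if sibling_is_left else node + sibling
        node = h(b"\x01" + pair)
    return node == root


# Hypothetical usage: prove that "carol" is in a three-member set.
members = [b"alice", b"bob", b"carol"]
levels = build_levels(members)
root = levels[-1][0]
proof = prove(levels, 2)
print(verify(root, b"carol", proof))
print(verify(root, b"mallory", proof))
```

The verifier only needs the root and a path whose length grows logarithmically with the set size, which is what makes this structure practical to check inside a proof system.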

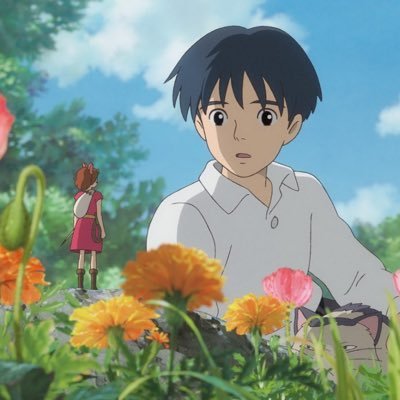

Our student engineers have carried out the world’s first demo of a ‘blockchain black box’ on a drone. It records flight data on a secure digital ledger so its actions can be verified and trusted. Read more: southampton.pulse.ly/xerj9wruyl @Minima_Global | @Siemens | @Arm

An important, and perennially underrated, aspect of "trustlessness", "passing the walkaway test" and "self-sovereignty" is protocol simplicity.

Even if a protocol is super decentralized with hundreds of thousands of nodes, and it has 49% byzantine fault tolerance, and nodes fully verify everything with quantum-safe peerdas and starks, if the protocol is an unwieldy mess of hundreds of thousands of lines of code and five forms of PhD-level cryptography, ultimately that protocol fails all three tests:

* It's not trustless because you have to trust a small class of high priests who tell you what properties the protocol has
* It doesn't pass the walkaway test because if existing client teams go away, it's extremely hard for new teams to get up to the same level of quality
* It's not self-sovereign because if even the most technical people can't inspect and understand the thing, it's not fully yours

It's also less secure, because each part of the protocol, especially if it can interact with other parts in complicated ways, carries a risk of the protocol breaking.

One of my fears with Ethereum protocol development is that we can be too eager to add new features to meet highly specific needs, even if those features bloat the protocol or add entire new types of interacting components or complicated cryptography as critical dependencies. This can be nice for short-term functionality gains, but it is highly destructive to preserving long-term self-sovereignty, and creating a hundred-year decentralized hyperstructure that transcends the rise and fall of empires and ideologies.

The core problem is that if protocol changes are judged from the perspective of "how big are they as changes to the existing protocol", then the desire to preserve backwards compatibility means that additions happen much more often than subtractions, and the protocol inevitably bloats over time.

To counteract this, the Ethereum development process needs an explicit "simplification" / "garbage collection" function.

"Simplification" has three metrics:

* Minimizing total lines of code in the protocol. An ideal protocol fits onto a single page - or at least a few pages
* Avoiding unnecessary dependencies on fundamentally complex technical components. For example, a protocol whose security solely depends on hashes (even better: on exactly one hash function) is better than one that depends on hashes and lattices. Throwing in isogenies is worst of all, because (sorry to the truly brilliant hardworking nerds who figured that stuff out) nobody understands isogenies.
* Adding more _invariants_: core properties that the protocol can rely on. For example, EIP-6780 (selfdestruct removal) added the property that at most N storage slots can be changed per slot, significantly simplifying client development, and EIP-7825 (per-tx gas cap) added a maximum on the cost of processing one transaction, which greatly helps ZK-EVMs and parallel execution.

Garbage collection can be piecemeal, or it can be large-scale. The piecemeal approach tries to take existing features, and streamline them so that they are simpler and make more sense. One example is the gas cost reforms in Glamsterdam, which make many gas costs that were previously arbitrary instead depend on a small number of parameters that are clearly tied to resource consumption.

One large-scale garbage collection was replacing PoW with PoS. Another is likely to happen as part of Lean consensus, opening the room to fix a large number of mistakes at the same time (youtube.com/watch?v=10Ym34…).

Another approach is "Rosetta-style backwards compatibility", where features that are complex but little-used remain usable but are "demoted" from being part of the mandatory protocol and instead become smart contract code, so new client developers do not need to bother with them.

Examples:

* After we upgrade to full native account abstraction, all old tx types can be retired, and EOAs can be converted into smart contract wallets whose code can process all of those transaction types
* We can replace existing precompiles (except those that are _really_ needed) with EVM or later RISC-V code
* We can eventually change the VM from EVM to RISC-V (or other simpler VM); EVM could be turned into a smart contract in the new VM.

Finally, we want to move away from client developers feeling the need to handle all older versions of the Ethereum protocol. That can be left to older client versions running in docker containers.

In the long term, I hope that the rate of change to Ethereum can be slower. I think for various reasons that ultimately that _must_ happen. These first fifteen years should in part be viewed as an adolescence stage where we explored a lot of ideas and saw what works and what is useful and what is not. We should strive to avoid the parts that are not useful being a permanent drag on the Ethereum protocol.

Basically, we want to improve Ethereum in a way that looks like this:

Sui Mainnet is currently experiencing a network stall, and the Sui Core team is actively working on a solution. Be aware that dApps such as Slush or SuiScan may not be available, and transactions may be slow or temporarily unable to process at this time. Updates will be shared as soon as they are available.