Noel Cabral

373 posts

@NoelCabralBlog

Business developer and blogger. Found my passion for writing about business, AI technology, and finance after a rewarding career in the U.S. Navy.

Sent this to the team today: everything great comes from being able to delay gratification for as long as possible, and it feels like we're collectively losing our ability to do that.

Tomorrow we will unveil the all-new vibe coding experience in @GoogleAIStudio. The team has spent 4 months rebuilding it all from scratch and smoothing out rough edges to help everyone bring their ideas to life. This is a big step forward, but just the start :)

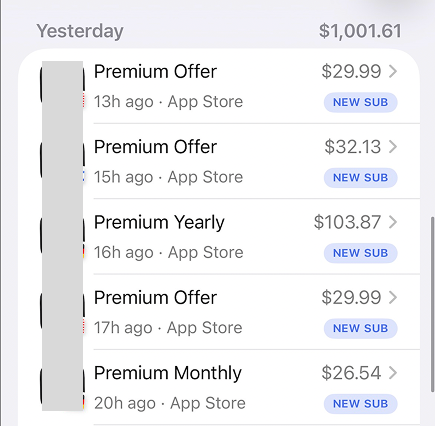

Day 1 of building my SaaS in public.

The idea: ThinkBoard, a dialed-down alternative to Poppy AI.

First, let me say this: I'm a Poppy user myself and I absolutely love it. I've been using it across multiple parts of my business for months. The tool is genuinely incredible and does exactly what it promises.

But here's the thing. Poppy is doing $6M ARR with a massive user base, yet they're leaving an entire market behind:

→ $399/year minimum
→ No free trial
→ No monthly plan

I spent hours reading reviews on Reddit, G2, and other review sites. Same complaint everywhere:

"Love the concept but hate the commitment"
"Want to try it for a month first"
"Not dropping $400 without testing it"
"Pricing killed this immediately for me"

These aren't people who don't want the product. They're people getting priced out before they even start.

So I'm building ThinkBoard for everyone who clicked on Poppy and immediately left when they saw the pricing. Same core value, but with:

→ Free trial (no credit card)
→ Monthly option
→ Affordable annual plan

This is an underserved segment with proven demand. The market already exists. I'm just building the version they're actually asking for.

Next step: finalizing the core features. I'm NOT building a clone, just a lean version that solves the main problem without all the enterprise bloat.

Documenting everything here. Follow along.

Software isn’t merely technical work anymore. It’s creative. Introducing Replit Agent 4. The first AI built for creative collaboration between humans and agents. Design on an infinite canvas, work with your team, run parallel agents, and ship working apps, sites, slides & more.