NuScienta

1.6K posts

@NuScienta

The #1 platform to learn AI skills for free. Make money. Land your dream job in 2026. You will regret not starting today. Click the link in bio to start now.

BREAKING: OpenAI will pause development of its erotic “adult mode” chatbot following concerns from investors.

Exclusive: OpenAI is backing a new AI startup that aims to build software allowing so-called AI “agents” to communicate and solve complex problems in industries such as finance and biotech on.wsj.com/4bTvwKd

OpenAI puts erotic chatbot plans on hold ‘indefinitely’ ft.trib.al/4Q2hLpT

Your work tools in Claude are now available on mobile. Explore Figma designs, create Canva slides, check Amplitude dashboards, all from your phone. Give it a try: claude.com/download

New in Claude Code: auto mode. Instead of approving every file write and bash command, or skipping permissions entirely, auto mode lets Claude make permission decisions on your behalf. Safeguards check each action before it runs.

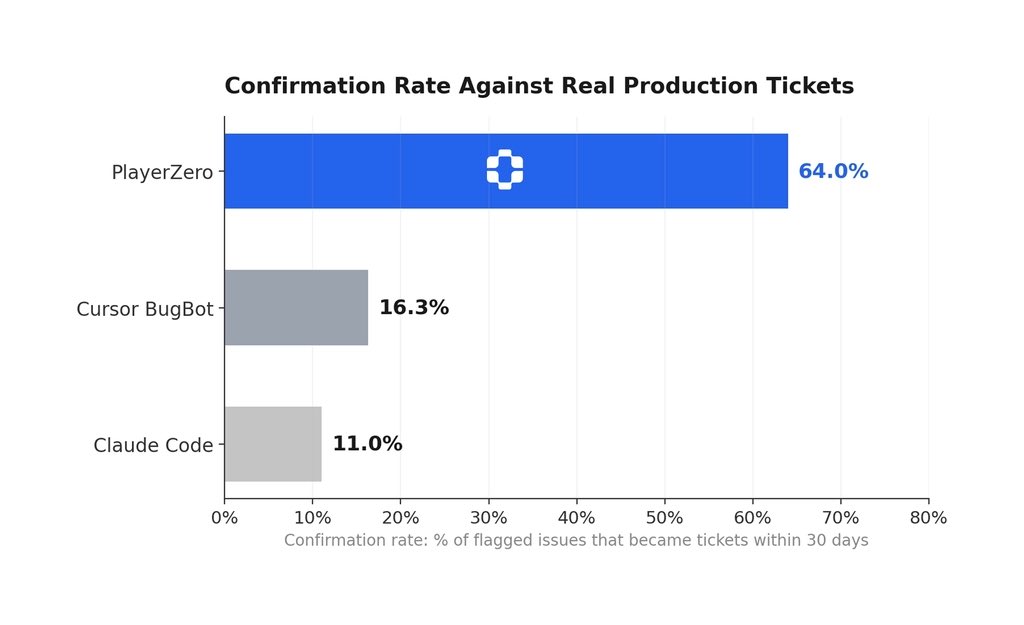

Introducing: PlayerZero

The world's first Engineering World Model that puts debugging, fixing, and testing your code on autopilot.

We've raised $20M from Foundation Capital, @matei_zaharia (Databricks), @pbailis (Workday), @rauchg (Vercel), @zoink (Figma), @drewhouston (Dropbox), and more.

PlayerZero frees up 30% of your engineering bandwidth by:
1. Finding, in minutes, the root cause of bugs and incidents that engineering teams take days to identify.
2. Predicting, in minutes, edge-case issues that a 300-person QA team would take weeks to find.

------

Here's why this matters:

No one in your org has a complete picture of how your production software actually behaves. Support sees tickets. SRE sees infra. Dev sees code. Each team builds its own fragmented view, and none of these systems talk to each other. When something breaks, everyone scrambles to stitch the picture together by hand.

PlayerZero connects all of it into a single context graph:
→ The Slack thread where your lead said "we went with X because Y fell apart in prod last time"
→ The PR review where an engineer explained the tradeoff
→ The lifetime history of your CI/CD pipeline, observability stack, incidents, and support tickets

So you can trace any problem to its root cause across every silo.

And it compounds. Every incident diagnosed teaches the model something new. The longer it runs, the deeper it understands which code paths are high-risk, which configurations are fragile, and which changes tend to break which customer flows.

So when you sit down to debug a live issue, you have your entire org's collective reasoning and production memory behind you, instantly.

------

Zuora, Georgia-Pacific, and Nylas have reduced resolution time by 90%, caught 95% of breaking changes, and freed an average of $30M in engineering bandwidth.

------

Our guarantee: If we can't increase your engineering bandwidth by at least 20% within one week, we'll donate $10,000 to an open-source project of your choice.
Book a demo - bit.ly/3NlLMeN