OpenReward

🔥🐴 Firehorse. Run any model with any harness on any @OpenReward environment. ⚖️ Evaluate the latest models on environment endpoints. 🗂️ Collect agentic data for midtraining and SFT from open models. 🧪 Early experimental library. More support soon. Link below.

🎲 Introducing KellyBench, a new long-horizon evaluation for frontier models. KellyBench evaluates models within a year-long sports betting market, a challenging and highly non-stationary environment. Every frontier model we test loses money. They struggle to design ML strategies, manage risk, and adapt as the world changes. Link and thread below.

Recently, I integrated @OpenReward into SkyRL (@NovaSkyAI), including an example demonstrating training with @modal. To verify the code, I ran several experiments—which proved to be a highly enriching experience! 😋 github.com/NovaSky-AI/Sky…

Introducing GLM-5.1: The Next Level of Open Source
- Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo.
- Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations.

Blog: z.ai/blog/glm-5.1 Weights: huggingface.co/zai-org/GLM-5.1 API: docs.z.ai/guides/llm/glm… Coding Plan: z.ai/subscribe Coming to chat.z.ai in the next few days.

🌍 Environments of the Week The theme this week...environments for science 👩‍🔬. First up, LLM-SR Bench by @ParshinShojaee et al is an environment for evaluating language model agents on scientific equation discovery tasks. openreward.ai/parshinsh/llms…

We've had a lot of fun building this benchmark (asking LLMs to run a startup), which gives the clearest signal on LLMs' "long-term coherence" ability. We observe that frontier models have significant variance on this benchmark, showing that long-term execution is still under-optimized. The benchmark is easily runnable on HF and OpenReward; links are below. The evals give these very interesting leaderboards for all models (p1) and open source models (p2). Major takeaways from analyzing their performance:
- Most LLMs have long-term commitment issues. To run a company, it is very beneficial to maintain a good relationship with target clients, since that means more rewards and less work. Most models never do this. Only a very few dedicate themselves to 1-2 clients and yield huge returns. This is alarming because committing to specific clients is kind of a "free ride", yet most models never think of it.
- Most LLMs also do not check their failure modes well enough. Some clients are designed to be bad, giving models extra work at no benefit. Models need to spot and blacklist them, and they have perfect access to this information after a few task failures (or even at their first interaction with the client). Again, only a very few models correctly notice the subtle abnormality and act preemptively.

In the near future, we want autonomous agents to handle intensive long-term management work, acting like product managers, tech leads, and even founders. Our benchmark shows the concrete axes of optimization we need to work on to get there. Evaluate your model today!

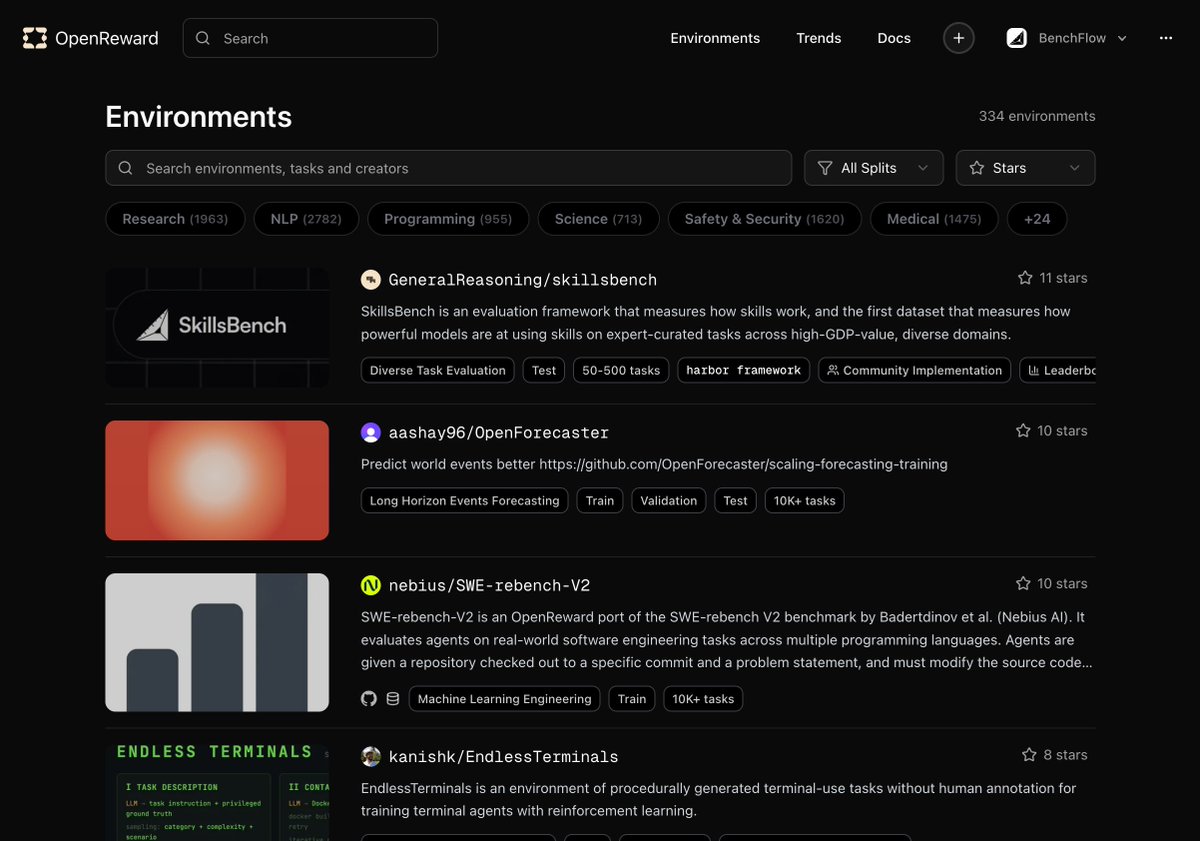

🌍 Environments of the Week It's been a week since we launched @OpenReward. Here are some of our favourite environments this week - some newly added, some heavily used, and some hidden gems. First, the most used environment of the week is EndlessTerminals by @gandhikanishk with 830k+ tool calls. openreward.ai/kanishk/Endles… 🧵

Played around with this. This was exactly what I was looking for! Tried a few things:
- Creating an env: pretty dope! Claude was able to port it end to end from GitHub with only minor issues. One-shotted the @ShashwatGoel7 OpenForecaster env here. A lot more people should contribute their own envs. I hope they launch monetisation here.
- Running a curator over env tasks during RL: when there are so many tasks, which one should you focus on? This is the auto-curriculum/meta-learning bit. I am still not able to beat random/pass@k, but I think the signals are there that over the long run this will help with diversity. This obviously has a power law: every run will have top envs dominating, but I feel those 20% random tasks will give a big boost to any model.
- Optimising the GEPA optimiser: GEPA is great but pretty slow. What if we could teach a model to do this better? This was on my list for so long; with OpenReward I was finally able to attempt it.
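The task-curator idea in the post above (mostly focus on the most useful tasks, but keep roughly 20% random) could be sketched as follows. This is only an illustration and not the poster's implementation. The class name `EpsilonGreedyCurator`, its methods, and the `p * (1 - p)` usefulness score are all assumptions made for this example, and nothing here calls the OpenReward API.

```python
import random


class EpsilonGreedyCurator:
    """Hypothetical curator: mostly top-scoring tasks, plus a random share."""

    def __init__(self, task_ids, random_fraction=0.2, seed=0):
        if not task_ids:
            raise ValueError("task_ids must not be empty")
        self.task_ids = list(task_ids)
        self.random_fraction = random_fraction  # the "20% random" share
        self.rng = random.Random(seed)
        self.attempts = {}   # task_id -> number of rollouts seen
        self.successes = {}  # task_id -> number of solved rollouts

    def update(self, task_id, solved):
        """Record the outcome of one rollout on a task."""
        self.attempts[task_id] = self.attempts.get(task_id, 0) + 1
        self.successes[task_id] = self.successes.get(task_id, 0) + int(solved)

    def score(self, task_id):
        """Assumed usefulness proxy: p * (1 - p), largest near a 50% pass rate.

        Unseen tasks get the maximum value (0.25) so they are tried early.
        """
        n = self.attempts.get(task_id, 0)
        if n == 0:
            return 0.25
        p = self.successes.get(task_id, 0) / n
        return p * (1.0 - p)

    def sample_batch(self, batch_size):
        """Return top-scoring tasks plus a random share of the remaining ones."""
        n_random = round(batch_size * self.random_fraction)
        n_top = batch_size - n_random
        # sorted() is stable, so ties keep their original task order.
        ranked = sorted(self.task_ids, key=self.score, reverse=True)
        top, rest = ranked[:n_top], ranked[n_top:]
        extra = self.rng.sample(rest, min(n_random, len(rest)))
        return top + extra
```

With ten tasks and a batch size of 5, this picks the four highest-scoring tasks and one task drawn at random from the rest. Whether such a curator beats uniform random sampling is exactly the open question the post raises.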

Introducing OpenReward. 🌍 330+ RL environments through one API ⚡ Autoscaled sandbox compute 🍒 4.5M+ unique RL tasks 🚂 Works like magic with Tinker, Miles, Slime Link and thread below.

🤝 OpenReward is interoperable with any training library. Here we use the SETA environment by @Eigent_AI. We use @tinkerapi for model compute and @OpenReward for environment compute. This allows you to run agentic RL training from a laptop. github.com/OpenRewardAI/o….

If you want to experience a warm fuzzy feeling, try out the Tinker example from the cookbook: github.com/OpenRewardAI/o… You just need Tinker and OpenReward API keys.