Oversold Stock Hunter

61.3K posts

@OversoldHunter

Jack of some trades, master of none. Realist. INTP-A. Inventor.

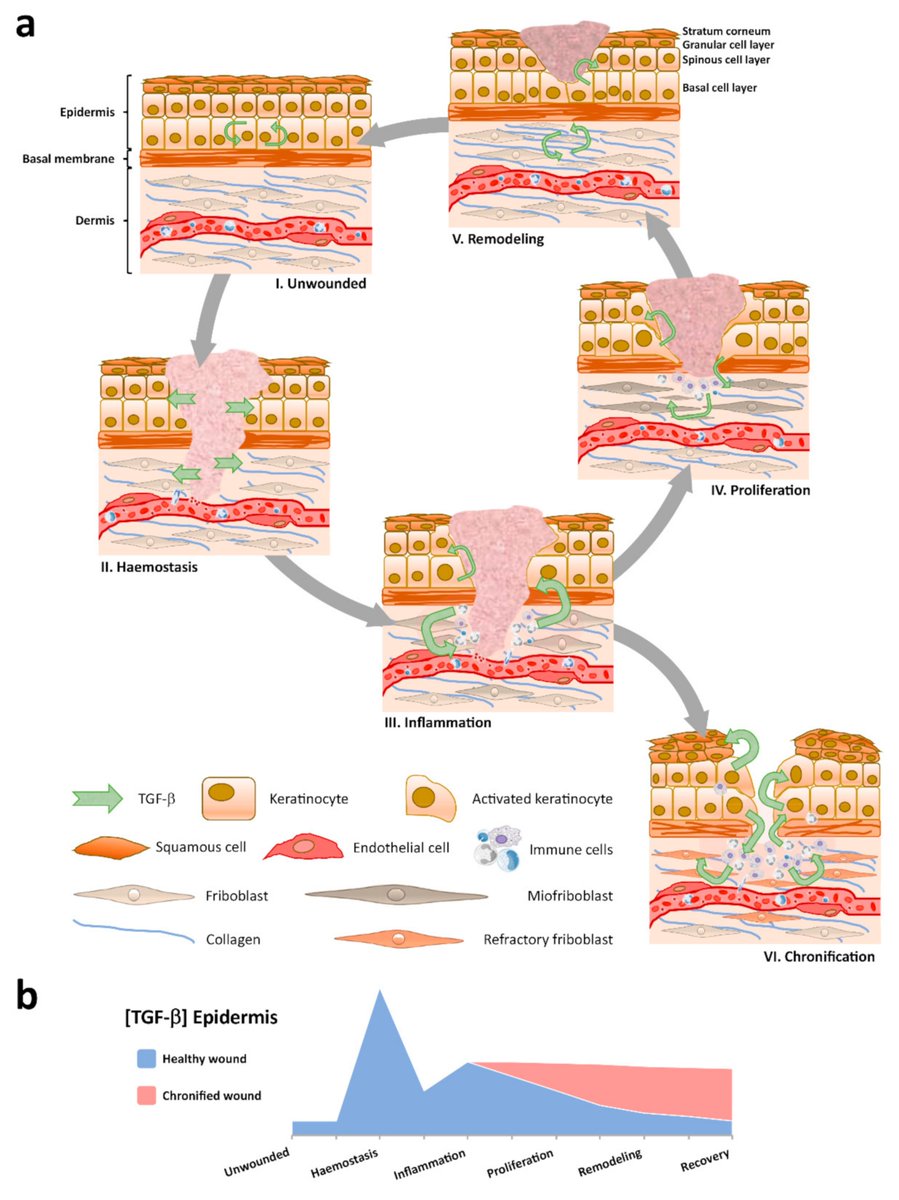

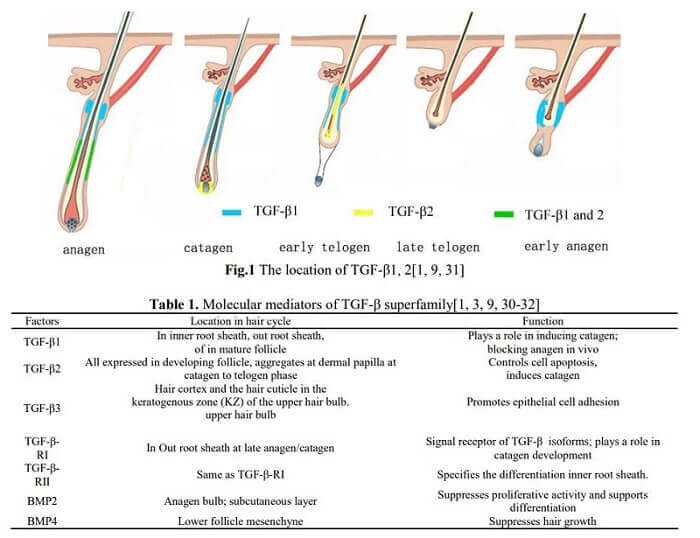

Oxylipins are oxidized metabolites of polyunsaturated fatty acids (PUFAs) and are associated with several pathological conditions. A high-fat diet based on high-PUFA soybean oil makes mice obese. Mice gain less weight on an isocaloric high-fat diet based on low-PUFA coconut oil. When researchers genetically alter mice to mess with the production of oxylipins from PUFAs, the mice gain less weight and are more metabolically healthy on a high-fat soybean oil diet.

New libidomaxxing method just dropped

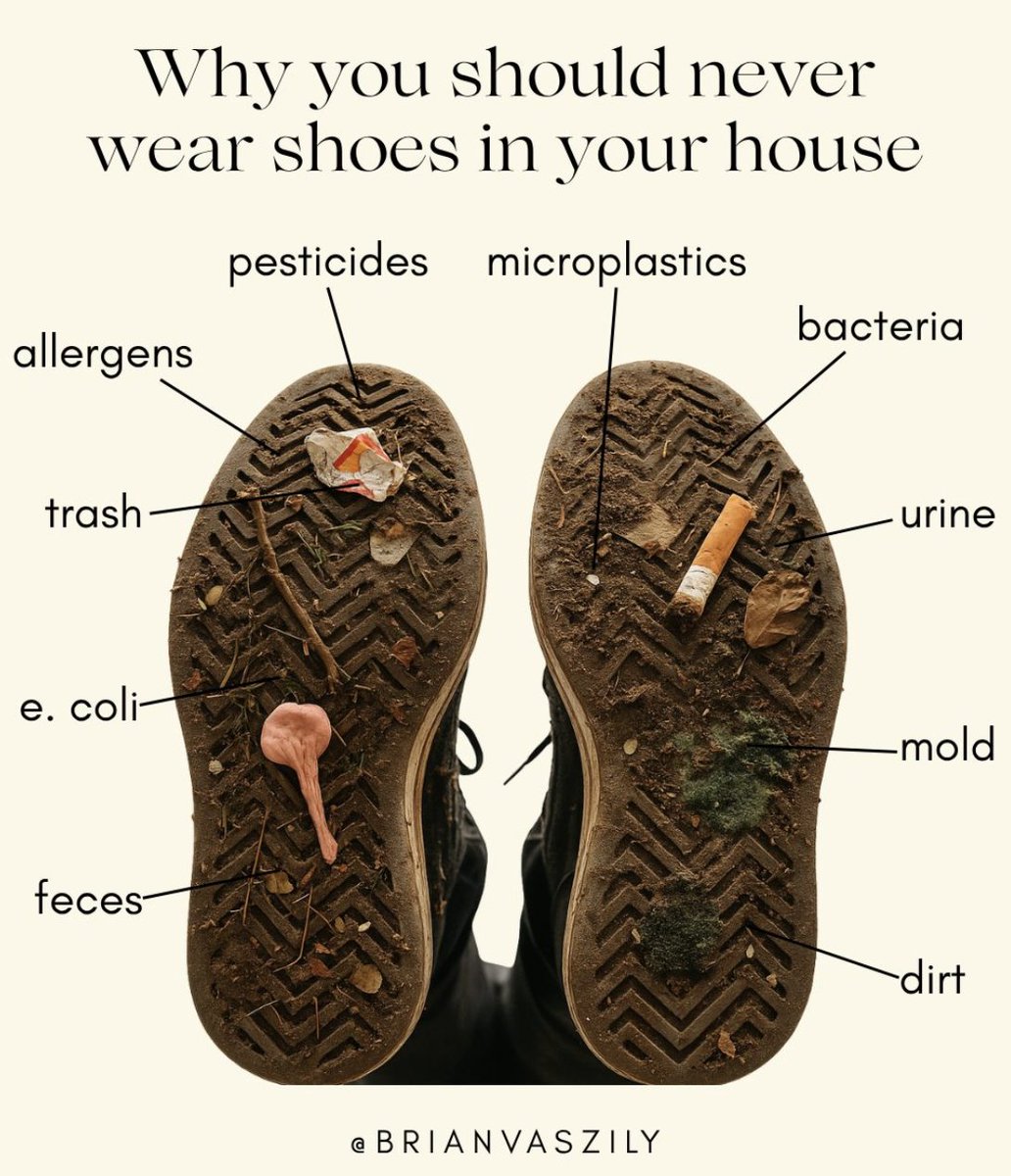

What is extremely unhygienic but everyone seems to do it anyway???

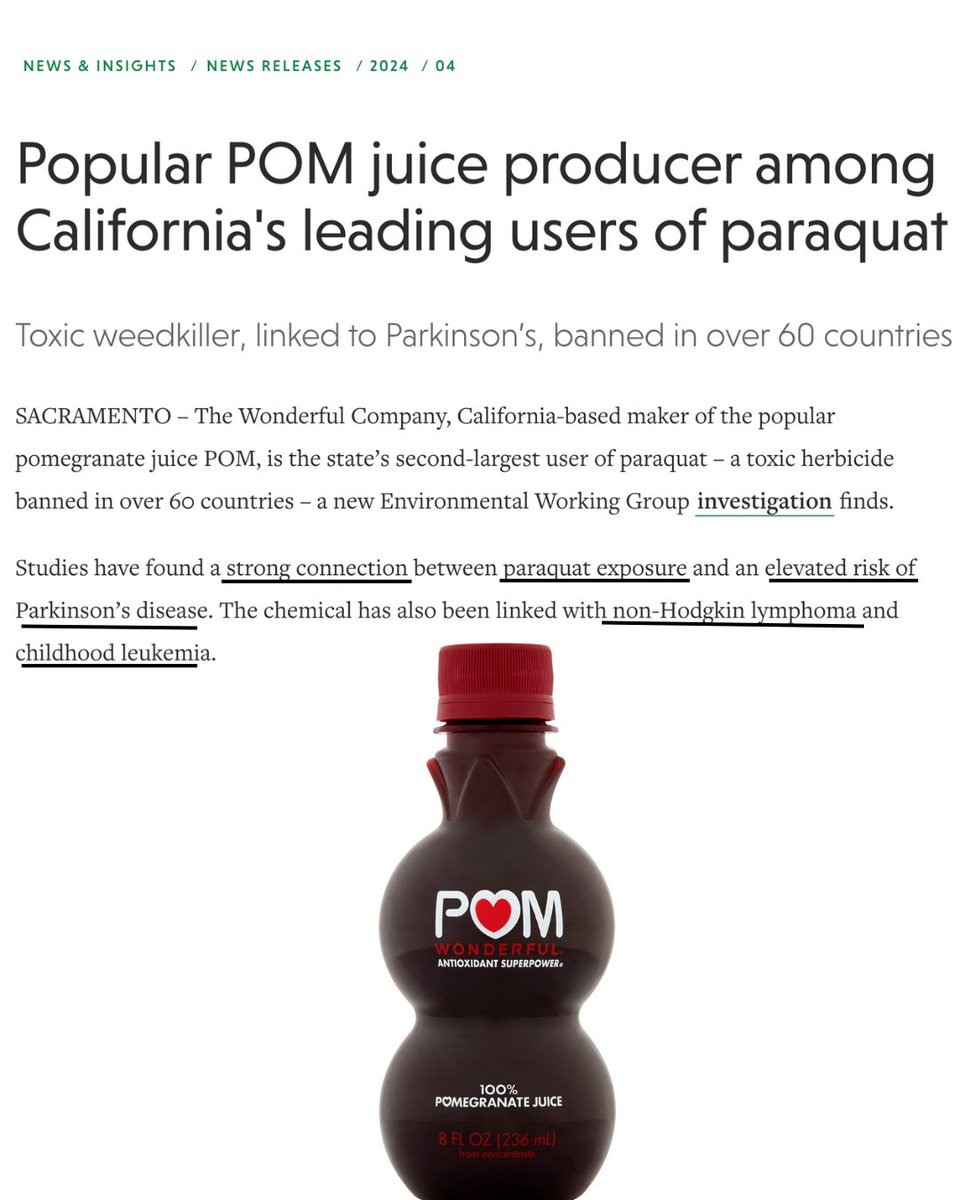

The company behind POM juice, a juice marketed as a superfood antioxidant-rich drink, was just named California’s 2nd-largest user of paraquat, one of the most toxic herbicides still allowed in the U.S. Over 56,000 pounds sprayed in a single year… on crops used to make this so-called health drink. Paraquat has been linked to Parkinson’s disease, cancer, and neurological damage. It drifts into nearby communities and lingers in soil for years. More than 60 countries have already banned it. So why is it still being used here? Help us continue the work to get these chemicals out of our food supply. [momsacrossamerica.com/monthly_donati…]

Glucose spike from oat milk vs whole milk latte👀

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.

Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.

🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.

🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.

🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗 Full report: github.com/MoonshotAI/Att…
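To make the contrast in the post above concrete, here is a rough sketch of "fixed, uniform accumulation" versus "input-dependent attention over preceding layers". The post gives no formulas, so everything here is an assumption for illustration: the function names, the scaled dot-product scoring, and the hand-written `query` vector (which would presumably be learned in a real model) are hypothetical and are not taken from the linked report.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def uniform_residual(layer_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(out[d] for out in layer_outputs) for d in range(dim)]


def attention_residual(query, layer_outputs):
    """Hypothetical depth-wise attention: mix earlier layer outputs with
    softmax weights from scaled dot-product scores against `query`.
    This is an illustrative assumption, not the report's actual method."""
    dim = len(query)
    scores = [
        sum(q * k for q, k in zip(query, out)) / math.sqrt(dim)
        for out in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * out[d] for w, out in zip(weights, layer_outputs))
        for d in range(dim)
    ]
    return mixed, weights


if __name__ == "__main__":
    # Toy outputs of three preceding layers (2-dimensional hidden state).
    outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    print(uniform_residual(outputs))
    print(attention_residual([2.0, 0.0], outputs))
```

In this toy version the uniform sum grows with the number of layers, while the attention mix is a convex combination of earlier outputs and so stays within their range. That is one way to read the post's "hidden-state growth" remark, though the actual mechanism may differ.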