PhileasFoggAI

721 posts

@PFoggai

Film, music, AI prod-Amazon/HULU/Apple/Netflix dist. by Vision Films CA. |MFiT/Apple Dig Master Cert.| Sony/Focusrite🏆| AiMusic Vid Awards 2025 ‘New Creator’🥈

London, England · Joined November 2024

920 Following · 252 Followers

A week ago, I knew nothing about AI video generation. Zero.

Then I joined the Runway community — and made my first AI-generated video ever.

Here's what hit me:

As a storyteller, I used to build entire worlds with just words. Readers would picture the sky, the light, the silence - all from text alone.

But prompting AI to generate visuals? That's a different craft. I had to learn camera angles. How to describe surreal scenes trapped inside my head so the AI could see what I see. Character design. Wardrobe. Props. Environment. Lighting. Mood.

Basically — every skill an entire film crew carries, compressed into one person and one prompt.

After years of searching, I think I've finally found my path.

Platform: @runwayml

Model: Seedance 2.0

Music: Lyria 3 Pro

#RunwayBigPitchContest

Thanks to @IXITimmyIXI and Runway community.

Happy generating!

English

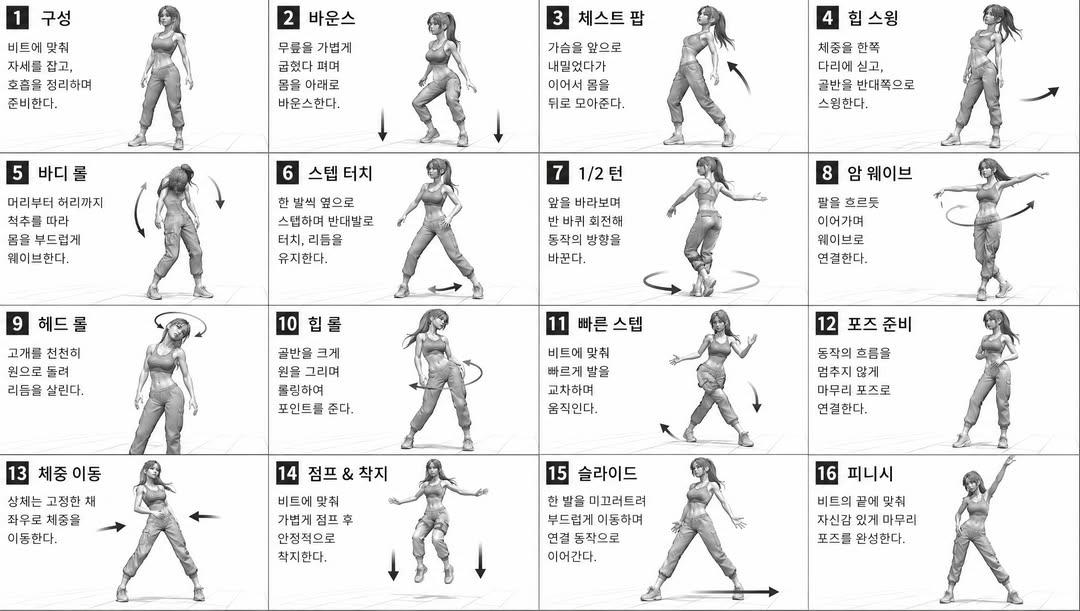

I used a movement sheet as a reference image to animate the dance using Seedance 2.0 + GPT Image 2.0

GPT Image 2.0 prompt:

[STYLE]

monochromatic grayscale illustration, 3D rendered character, clean instructional reference sheet,

white background, comic-style cell grid layout, technical diagram aesthetic

[LAYOUT]

4x4 grid layout, 16 panels total, each panel separated by thin black border lines,

numbered cells from 1 to 16, consistent panel size

[CHARACTER]

(enter character description)

e.g.: young female dancer, athletic build, ponytail hairstyle,

crop top and baggy pants, sneakers, same character in all panels

[PANEL STRUCTURE - per cell]

top-left: bold number badge + Korean title text

center: full-body character pose illustration

bottom-left: Korean description text (3-4 lines)

overlay: directional arrows indicating movement direction

[ARROWS / MOTION INDICATORS]

curved arrows, straight arrows, circular rotation indicators,

placed around the character to show movement flow and direction

[RENDERING STYLE]

high detail 3D sculpt style, soft studio lighting, subtle shadows,

no color, grayscale shading, clean linework, game concept art quality

[NEGATIVE]

no background scenery, no color tones, no extra characters,

no cluttered backgrounds
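Because the prompt above is a set of labeled sections, it can be kept as data and re-assembled for each new character. The following is a minimal Python sketch under that assumption. The names `SECTIONS` and `build_prompt` are hypothetical, the section text is copied from the post, and nothing here calls an image model; it only produces the prompt string.

```python
# Hypothetical helper for assembling a sectioned reference-sheet prompt.
# Section names and text come from the post; the function and variable
# names are illustrative assumptions, not part of any tool's API.

SECTIONS = {
    "STYLE": (
        "monochromatic grayscale illustration, 3D rendered character, "
        "clean instructional reference sheet, white background, "
        "comic-style cell grid layout, technical diagram aesthetic"
    ),
    "LAYOUT": (
        "4x4 grid layout, 16 panels total, each panel separated by thin "
        "black border lines, numbered cells from 1 to 16, consistent panel size"
    ),
    "CHARACTER": "",  # placeholder, filled in per character
    "PANEL STRUCTURE - per cell": (
        "top-left: bold number badge + Korean title text\n"
        "center: full-body character pose illustration\n"
        "bottom-left: Korean description text (3-4 lines)\n"
        "overlay: directional arrows indicating movement direction"
    ),
    "ARROWS / MOTION INDICATORS": (
        "curved arrows, straight arrows, circular rotation indicators, "
        "placed around the character to show movement flow and direction"
    ),
    "RENDERING STYLE": (
        "high detail 3D sculpt style, soft studio lighting, subtle shadows, "
        "no color, grayscale shading, clean linework, game concept art quality"
    ),
    "NEGATIVE": (
        "no background scenery, no color tones, no extra characters, "
        "no cluttered backgrounds"
    ),
}


def build_prompt(sections: dict[str, str], character: str) -> str:
    """Return the full prompt with the CHARACTER section filled in."""
    if not character.strip():
        raise ValueError("character description must not be empty")
    # Overwriting an existing key keeps its position, so CHARACTER stays
    # between LAYOUT and PANEL STRUCTURE as in the original prompt.
    filled = {**sections, "CHARACTER": character.strip()}
    return "\n\n".join(f"[{name}]\n{body}" for name, body in filled.items())
```

Calling `build_prompt(SECTIONS, "young female dancer, athletic build, ponytail hairstyle, crop top and baggy pants, sneakers, same character in all panels")` reproduces the example character from the post; swapping the description is the only change needed for a different dancer.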

English

@aimikoda @_VVSVS @midjourney @runwayml Overall it's great value, but I do wonder while waiting for a gen that gets cancelled 10 times in a row for supposedly "violating" the rules on a clean prompt with no NSFW 🙃

English

I ran out of fast hours in @midjourney, and trying to create a series pitch for the @runwayml contest with the unlimited feature means waiting almost an hour for 2 Seedance generations, which is painful. In the meantime I'm reviewing the edit and polishing, and polishing...

English

@HauntedAI @JSFILMZ0412 Haha thought I was being targeted for usage…good to hear 🤣

English

@JSFILMZ0412 The wait times are pretty bad now too. 30-40 mins per video.

English

@chrisfirst Yes, it sucks! I drink a shot and a beer to you, sir, for all you’ve done! 🫡 🥃 🍺

English

@PFoggai Thanks a lot! I put so much of my heart into growing this place. It's sad to see this end this way.

Their team never even tried to make these communities work.

English

I have read a few negative posts about the Runway Unlimited Plan, which is unfair, as #runway offers film-makers (and students) an unparalleled opportunity to make a film on a budget...exciting times!

💡Click-to-edit asset labels on Project Page

English

Happy Horse 1.0 is now live in EARLY ACCESS on Vadoo AI and the first wave is already in.

50 users have already been granted access.

We’re now opening it up to 100 more creators starting today 🐎✨

To get access:

⭐ Comment “happy”

⭐ Retweet this post

100 more creators will be selected in the next 24 hours 🎯

English

Happy to announce that I've received the 𝐆𝐫𝐚𝐧𝐝 𝐏𝐫𝐢𝐳𝐞 in @runwayml's Big Ad Contest. I'm always keeping an eye on AI competitions and AI festivals, but this one was special for me because Runway was the first video AI tool I ever tried. Since then they have become giants in the AI space, supporting artists and integrating many models into their suite. I would also encourage everyone to follow @c_valenzuelab on Twitter for some deeper thoughts about the future of AI.

The other reason is that I only realized the deadline was days away, which forced me to ditch any complicated ideas and keep it very simple. And as it is with moving images: sometimes less is more.

After seeing the top 25 finalists I also had to realize that the bar has been raised. Last year you could post a 15-second demo and hundreds of comments would flood in, but now - finally - we are back to the purpose of any media: to tell something words can't tell, to tell a story, and to have a message behind the cinema screen. I'll post the top 25 finalists in the comments so if you have time you can check out the artists and their work.

I'm also planning to help the local AI artists here in Vietnam somehow. As soon as I have a plan for the best way to do so, I'll post about it.

𝘐𝘮𝘢𝘨𝘦 𝘧𝘰𝘳 𝘵𝘩𝘦 𝘱𝘰𝘴𝘵 𝘸𝘢𝘴 𝘨𝘦𝘯𝘦𝘳𝘢𝘵𝘦𝘥 𝘪𝘯 𝘵𝘩𝘦 𝘙𝘶𝘯𝘸𝘢𝘺 𝘴𝘶𝘪𝘵𝘦, 𝘶𝘴𝘪𝘯𝘨 𝘉𝘍𝘓 𝘧𝘭𝘶𝘹2𝘔𝘢𝘹.

English

@vladimircherner Thanks for sharing 🙏 ...I also wonder if someone is using Fast or Pro

English

Seedance is now literally everywhere ⭐😎➡

This morning, we got access to face generation, and by the evening, the model was integrated wherever possible. No more messing around with VPNs or complicated registrations; the tool is now available on popular platforms. We checked out where and on what terms you can use it:

@higgsfield

They're asking for $234 for a monthly plan with 9,000 credits. This includes access to Seedance 2.0, which allows for several free 8-second generations. If you buy an annual subscription upfront, these 8-second generations become unlimited. However, there's a catch: you still have to verify photos, and then there is the speed issue—right now, all generations are getting stuck at the photo verification stage. A 15-second generation on this plan costs $2.34.

@freepik

They rolled out 48 camera presets and added a button to transfer motion to video. They don't require face verification; you just need to click once to confirm that it's supposedly you. It's the fastest among all the platforms. The cost in their standard monthly plan without discounts is $3.51. They might run a promo, though, so hold off for a bit. Either way, it's no longer the $6-7 it used to be.

@invideoOfficial

Under the hood, it's exactly the same, but it works faster than Higgsfield, without the extra hassle, and is frankly much more convenient when working with references. Somehow, video generation here is the cheapest at $1.67 for 15 seconds. This is the most affordable option.

Right now, Invideo and Freepik look like the most viable choices for everyday tasks, while Higgsfield is trying to lure clients to their plans with discounts but keeps freezing. As for tomorrow, we are all patiently waiting for the release of HappyHorse-1.0.

So, no need to rush!
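The price comparison in this post can be checked with a few lines of arithmetic. The Python sketch below uses only the figures quoted above; it assumes the Freepik price ($3.51) is also for a 15-second generation, which the post does not state explicitly, and the function names are hypothetical.

```python
# Prices quoted in the post, in USD per generation.
# Assumption: every figure refers to a 15-second clip. The post says so for
# Higgsfield and Invideo; for Freepik the clip length is not stated.
PRICE_PER_CLIP = {"Higgsfield": 2.34, "Freepik": 3.51, "Invideo": 1.67}
CLIP_SECONDS = 15


def cost_per_second(price_per_clip: float, clip_seconds: int = CLIP_SECONDS) -> float:
    """USD per generated second of video."""
    return price_per_clip / clip_seconds


def cheapest(prices: dict[str, float]) -> str:
    """Name of the platform with the lowest per-clip price."""
    return min(prices, key=prices.get)


def credits_per_clip(plan_price: float, plan_credits: int, clip_price: float) -> float:
    """Credits one clip consumes, inferred from the plan price and credit count."""
    price_per_credit = plan_price / plan_credits
    return clip_price / price_per_credit


if __name__ == "__main__":
    for name, price in sorted(PRICE_PER_CLIP.items(), key=lambda kv: kv[1]):
        print(f"{name}: ${price:.2f} per clip, ${cost_per_second(price):.3f} per second")
    # Higgsfield plan from the post: $234 for 9,000 credits, $2.34 per clip.
    print("Higgsfield credits per clip:", round(credits_per_clip(234, 9000, 2.34)))
    print("Cheapest:", cheapest(PRICE_PER_CLIP))
```

The credit calculation is an inference from the two Higgsfield numbers in the post, not a figure the platform publishes here.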

English

How to Generate a Cinematic Street Dance Breakdown with Seedance 2.0? (15s Full Motion Prompt)

Here’s a Seedance 2.0 cinematic dance sequence concept we built for a high-intensity street dance film style shot.

It’s designed as a 15-second continuous motion breakdown with precise camera movement, choreography direction, and cinematic realism in a controlled studio environment.

The idea focuses on:

explosive entry motion with strong spatial displacement

fast directional footwork and pivot transitions

floor work with power sweep dynamics

increasing motion density into pop-lock sequences

finishing with a clean aerial freeze for a cinematic end frame

Everything is structured like a real studio dance film shoot, with consistent lighting, minimal background, and camera language that evolves with the dancer’s energy (low-angle push-ins → orbit shots → tracking → pull-back → aerial lock).

The goal is to push maximum motion clarity + cinematic rhythm synchronization, so each 1–2 second segment feels like a deliberate camera-choreography interaction rather than just movement capture.

Prompt:

"SUBJECTS: A male street dance expert, white buzz cut, wearing small gold hoop earrings and a gold rope chain. Dressed in a dark brown hoodie, a pure white crew neck shirt underneath, dark brown loose pants, and white sneakers.

ENVIRONMENT: Pure seamless background, slightly cool gray tone, clean space with no distractions.

STYLE: cinematic realism, studio dance film, high contrast clean lighting

Timeline

0:00-0:02: Full body, 24mm, low-angle fast push-in. The dancer bursts directly into motion at high speed, right foot stomping the ground while the body performs a powerful rotation, left leg sweeping wide to create large spatial displacement, upper body hits and releases in sync

0:02-0:03: Full body, 28mm, tracking side move. He performs continuous large cross steps and sliding combinations, feet rapidly crossing while driving lateral movement, the jacket swings with strong inertia from the motion

0:03-0:05: Full body, 28mm, orbit. He transitions into continuous rotating footwork, rapidly switching pivot points with both feet to achieve multiple directional changes, forming an arcing trajectory in space

0:05-0:06: Low angle, 24mm, forward pressure. He suddenly drops low, one hand touching the ground for support, quickly transitioning into floor movement

0:06-0:07: Low near-ground angle, 24mm, tracking. Both legs sweep rapidly to complete a full power sweep rotation, the body forming a high-speed circular motion around the supporting arm, then using the momentum to spring back up

0:07-0:08: Full body, 28mm, tilt up. He explosively rises from the ground, landing on both feet and immediately executing a full-body wave, while continuing to move forward

0:08-0:10: Half body to full body, 35mm, forward tracking. He enters a high-speed pop locking sequence, upper body locking precisely on beats while the footwork continues to move and shift directions, increasing motion density

0:10-0:12: Full body, 28mm, pull back to open space. He transitions into larger groove and jumping step combinations, steps becoming wider while still precisely on beat, body remains fully extended

0:12-0:14: Low angle, 24mm, fast push-in. He suddenly accelerates, stomps the ground with his right foot to launch upward, performing a fast aerial rotation while fully extending his limbs

0:14-0:15: Full body fully in frame, 35mm, slight push-in lock. He enters a one-hand support freeze mid-air while maintaining motion inertia, body fully extended horizontally, legs stretched into clean lines, the entire figure fully visible in the frame"

If anyone here is experimenting with Seedance / Kling / Runway-style motion models, this structure works really well for:

clean subject tracking

stable identity across fast motion

high-energy breakdance sequences

cinematic short-form AI dance films

Would be interested to see how others adapt or enhance this kind of timeline-based choreography structure. Share your thoughts about Seedance 2.0 in the comments below!
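For anyone adapting the timeline structure, it may help to keep the segments as data and check that they cover the clip without gaps before pasting the prompt. Below is a minimal Python sketch of that idea. The `Segment` class, `build_timeline` function, and the exact line format (including the period after the camera move) are assumptions for illustration; Seedance itself only receives the resulting text.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One timeline entry: times in whole seconds, plus shot description."""
    start: int
    end: int
    framing: str   # e.g. "Full body"
    lens_mm: int   # e.g. 24
    camera: str    # e.g. "low-angle fast push-in"
    action: str    # what the dancer does in this segment


def _stamp(seconds: int) -> str:
    """Format seconds as M:SS, matching the 0:00-0:02 style in the prompt."""
    return f"{seconds // 60}:{seconds % 60:02d}"


def build_timeline(segments: list[Segment], total_seconds: int = 15) -> str:
    """Render segments as prompt lines, rejecting gaps, overlaps, or short totals."""
    expected_start = 0
    lines = ["Timeline"]
    for seg in segments:
        if seg.start != expected_start:
            raise ValueError(
                f"segment starts at {seg.start}s, expected {expected_start}s"
            )
        if seg.end <= seg.start:
            raise ValueError(f"segment at {seg.start}s has no duration")
        lines.append(
            f"{_stamp(seg.start)}-{_stamp(seg.end)}: "
            f"{seg.framing}, {seg.lens_mm}mm, {seg.camera}. {seg.action}"
        )
        expected_start = seg.end
    if expected_start != total_seconds:
        raise ValueError(
            f"timeline ends at {expected_start}s, expected {total_seconds}s"
        )
    return "\n".join(lines)
```

A segment such as `Segment(0, 2, "Full body", 24, "low-angle fast push-in", "The dancer bursts directly into motion at high speed")` renders as the first timeline line of the prompt; the validation simply catches a missing or overlapping second before a generation is spent on it.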

#Seedance #Seedance2 #AI #AIVIDEO #AIart #Prompt

English

@GrimfelOfficial Great work! 🤩 FYI - Tried to look at your site, but it's down

English

Announcing

Grimfel: Echoes Of Frost is releasing June 16th

A grimdark fantasy film (runtime 89 minutes)

I'm just one guy working on this. But here is a short snippet of that film.

There are also 4 other planned films for the Grimfel series.

Also releasing with:

Echoes Of Frost 200 page Novella

Echoes Of Frost companion comic (3 part comic series)

Echoes Of Frost D&D Campaign Book

Kickstarter backers will receive all the above early.

English

Seedance 2.0's OMNI feature is probably the most powerful feature in existence for AI filmmaking. If you're not using it, you're missing out on the final piece of the puzzle for consistency. OMNI lets you add characters, objects and places together to generate a complete scene.

You can build out an entire consistent sequence with OMNI and even use it to extend scenes, reference voices and use video as reference. I'm putting a tutorial together to showcase just how INSANE and powerful the OMNI feature is. Here is a short I created called "CHILD OF THE SOIL".

-----

An ancient artifact known as The Root was forged for one purpose, to restore barren land to life. But the civilization that created it mysteriously vanished before that purpose was ever fulfilled, not knowing that one day the same artifact would be used to restore their former home.

In a remote location of Madagascar, Nyoni finds it. Ancient scriptures lead her to The Root, a force older than memory, one that answers to the soil and to bloodline.

Nyoni carries the right blood.

Her ancestral lineage makes her the conduit. Through her, the artifact finally wakes. Together, they do what was always intended, transform a barren landscape into a thriving oasis, returning water, greenery, and sanctuary to every creature that called that place home.

A purpose, dormant for centuries. Fulfilled at last.

-----

#CapCutSeedance2 @capcutapp #DreaminaSeedance2 #DreaminaAI #DreaminaCPP #Seedance2 @dreamina_ai

English

Make a cinematic film at the push of a button using AI

Unfortunately, this is what a lot of people imagine, as if AI were something that does the impossible for you at the push of a button.

In reality, AI is an amazing tool that helps you improve your workflow, and combined with your expertise it will strengthen your work, make it easier, and save you time. But the important thing here is that you understand what you are doing and exactly what you are writing. It's not just "write a professional prompt and we're done," nor is it a case where everyone is going to build a film library on their own or program Google's website from scratch.

Put simply, the story starts with knowing the basics of prompting so that you can use AI properly, but the most important point, and one that perhaps not everyone talks about, is:

your deep understanding of the field you work in.

For example, filmmaking and advertising require an understanding of the fundamentals of the field and how its workflow runs. The same goes for programming: not everyone who has used Vibe Coding has become strong and knowledgeable. It requires understanding in several areas, among the most important of which is information security, so that you don't publish your site and then discover that the API is exposed in the site's main code.

So using AI doesn't mean you can do anything from scratch without a background.

True, you can use prompts that I or anyone else publishes, but do you understand the mechanism behind them? And who thought of them in the first place? Most people only think about how to get the result and apply it without looking at the context. There's nothing wrong with that, but it doesn't mean you've become an expert user in this field.

That's why I advise you to think harder about how to use AI properly, and to explore its depths in a smart way that serves your field and makes you stronger in it. Your expertise in your field will be 80% stronger if you know the essential 20% of AI.

The same goes for prompts: you need to give the AI all the information, let's say 80% of the right context, and let it develop the content by 20%, to ensure the best outputs with high accuracy and reliability, and without any "hallucination".

Tell me, when was the last time you heard the phrase "AI does it at the push of a button"?

Arabic