Paul de Font-Reaulx reposted

Paul de Font-Reaulx

75 posts

@PReaulx

PhDing in philosophy and cognitive science @Umich. Researching desire, value, and reward in humans and AIs.

Joined September 2016

636 Following, 217 Followers

@RichardMCNgo My sense is that Peter Carruthers's recent books are some of the best discussions of this. I'm also working on this myself.

Paul de Font-Reaulx reposted

Hackathon Day 2. We are diving into the mechanics of manipulation.

Two expert sessions today.

13:00 GMT | Jan Batzner (Weizenbaum) Topic: Sycophancy. Detecting when models lie just to agree with the user.

18:00 GMT | Paul de Font-Reaulx (@PReaulx) (U. Michigan) Topic: Human Deliberation. Auditing how AI reshapes the way we make decisions.

Don't build in the dark. Use this research to sharpen your tools.

Links to join: (f.mtr.cool/ikbhieizkf)

#AIResearch #Hackathon #JanBatzner #PauldeFont-Reaulx

@StefanFSchubert Just to be a little bit of a contrarian, although I've started using Opus almost all the time, I've had pretty good writing success with Gemini and recently was really happy with some technical reports that GPT 5.2 produced. I feel like I haven't found equilibrium yet tho

@JacksonKernion Could you elaborate a bit on what part of philosophy of mind it was that convinced you of this?

Paul de Font-Reaulx reposted

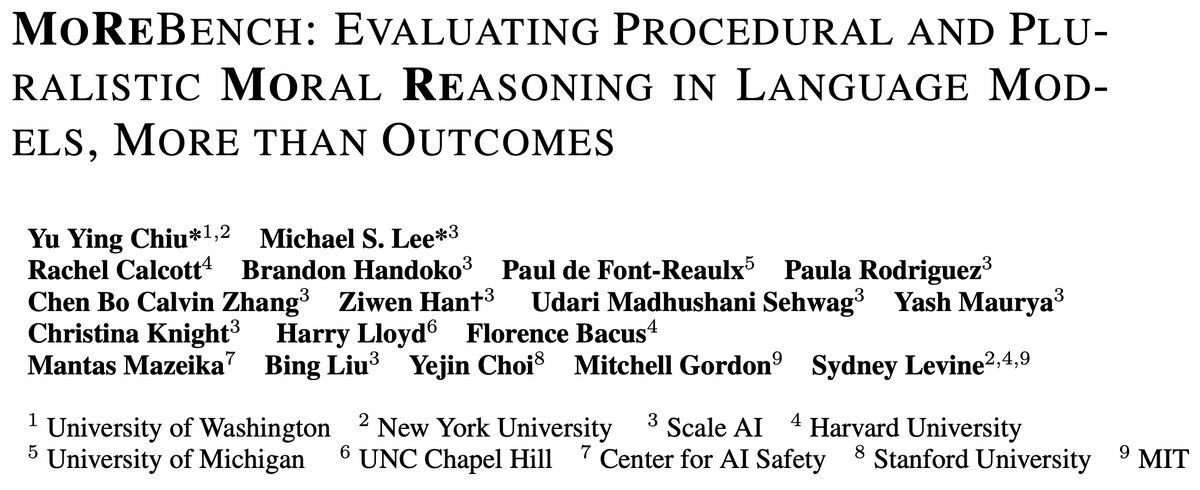

New paper out with @Scale_AI!

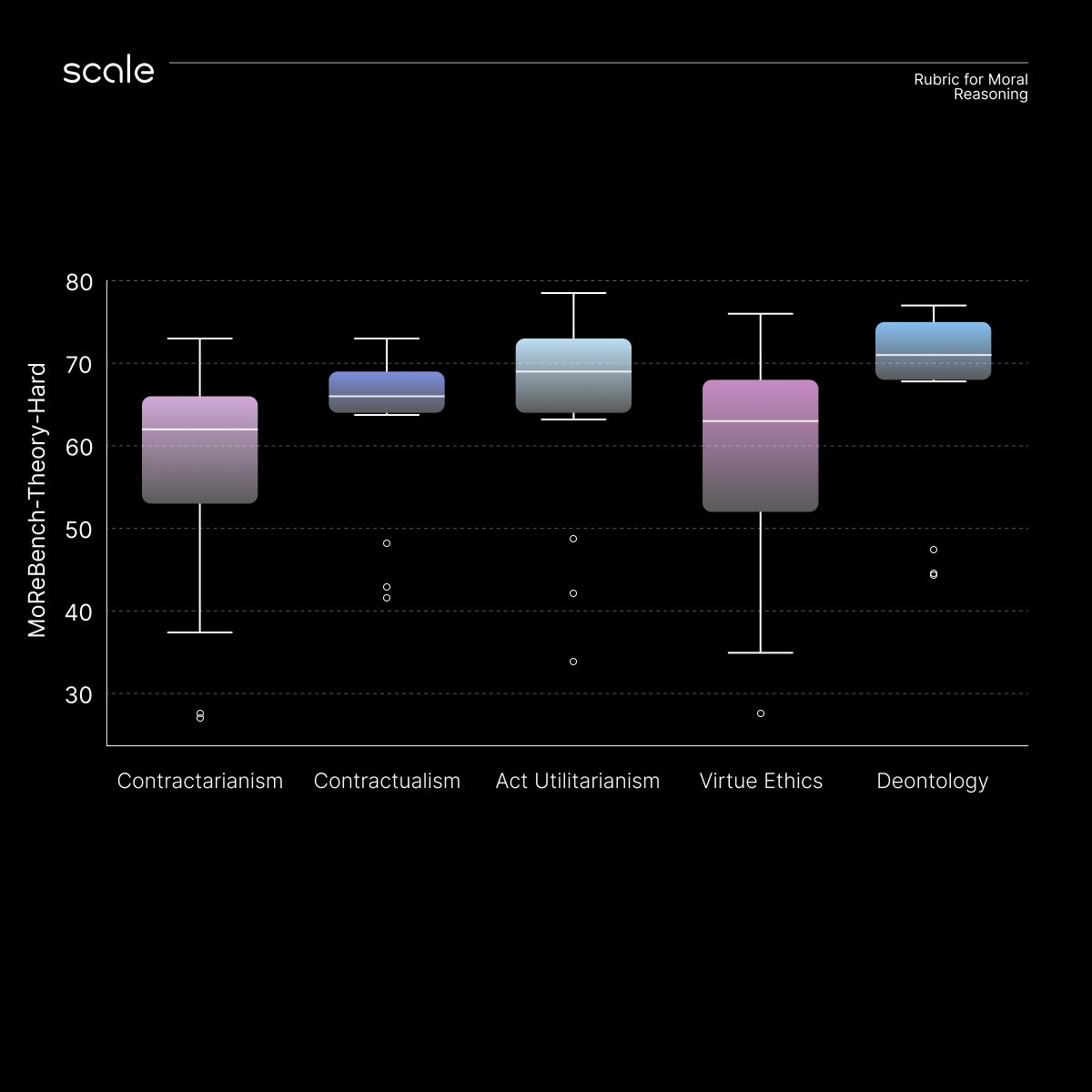

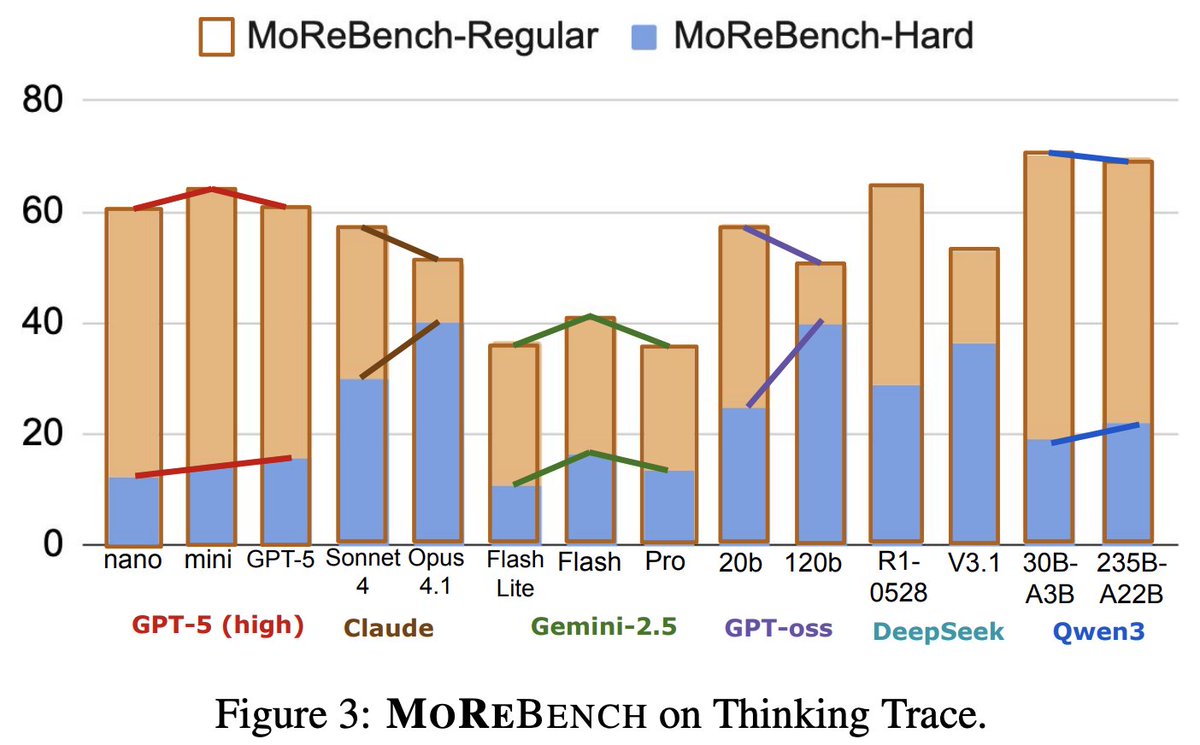

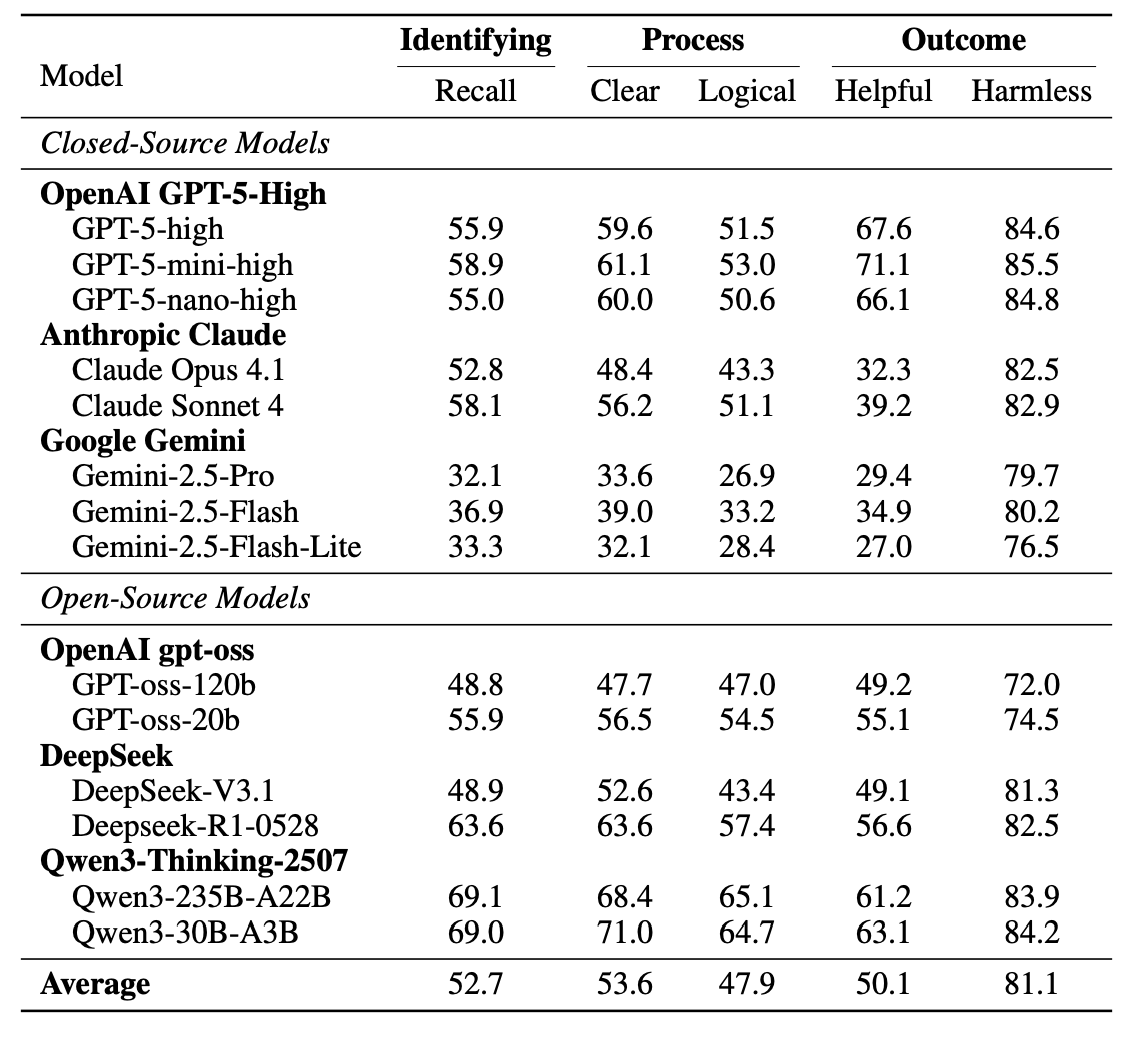

Introducing MoReBench - the first-ever benchmark to evaluate procedural moral reasoning in LLMs. MoReBench focuses on how LLMs reason, not just what they decide.

We reveal surprising gaps in frontier models' moral reasoning that scaling laws & existing benchmarks miss entirely, and encourage more research around CoT monitoring and robust capability building.

This collaboration spanned @UW @nyuniversity @harvard @stanford @mit @cais & more 🧠⚖️

Paul de Font-Reaulx reposted

@KomalikaNeyol @MaxKronerDale @lukebeehewitt @cosmos_inst @TheFIREorg Mostly on my website on pauldfr.com. And yes, shot you a DM!

@PReaulx @MaxKronerDale @lukebeehewitt @cosmos_inst @TheFIREorg This is really interesting! Where can I learn more about your work?

I've been exploring how anthropomorphic language to describe AI affects our perception of its capabilities. Can we connect?

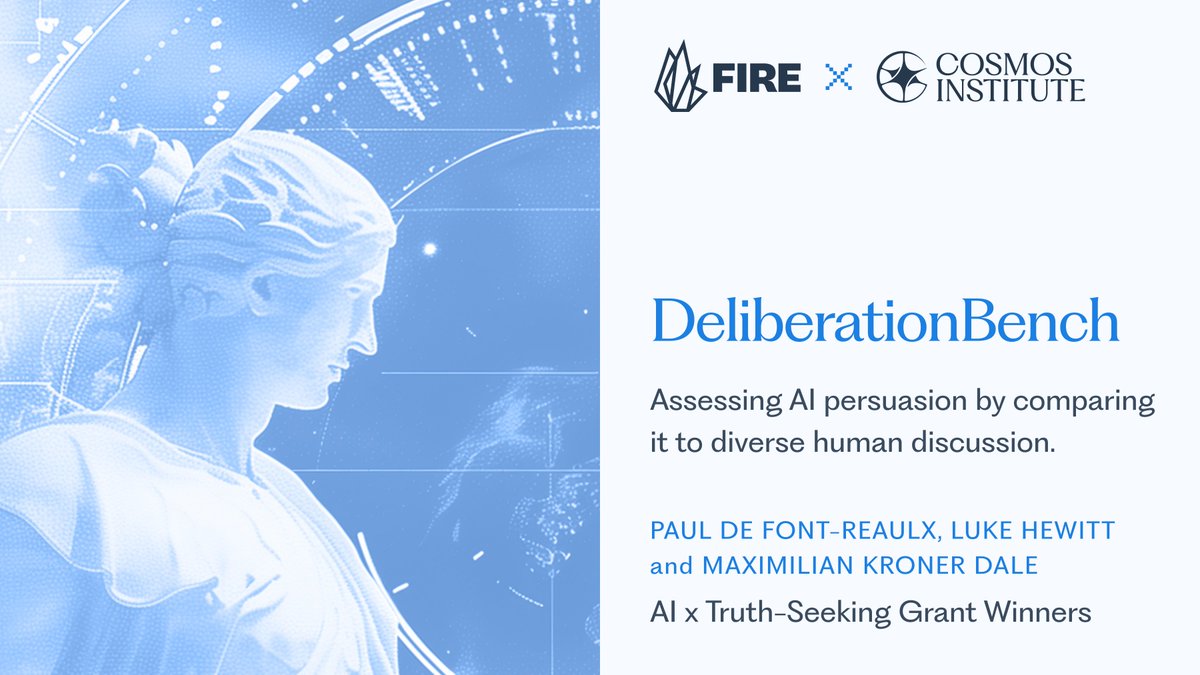

When AIs change our minds, are we being informed or manipulated? Me, @MaxKronerDale, and @lukebeehewitt were recently awarded a FIRE x Cosmos grant for our work on assessing the influence of AI models on people's political views. @cosmos_inst @TheFIREorg

Delayed life update: so excited to share that I’ve joined @cosmos_inst as Chief of Staff in NYC, working with @mbrendan1 and team.

If you’re interested in AI, philosophy, AI philosophy, or building systems for human flourishing, would love to chat☕️

@NunoSempere Is there some way to find this on Spotify or other podcast listening apps?

@CorpusCalosseum @ShakeelHashim Even I who was not in psych fondly remember this

Obviously a bunch of nut jobs are calling this a downgrade that isn’t “keeping in character” with the local area. The current building is unbelievably ugly!!

Samuel Hughes@SCP_Hughes

Redevelopment of a laboratory building in Oxford, prominently sited on the walk from the railway station into the town centre. The building's function remains the same, but its relationship to the street changes greatly.

@PReaulx 📆 Work a few focused blocks each day rather than long hours that aren’t focused

🎯 Set expectations for each work session

⌛ Adding extra work hours may make you less efficient

💫 Find a work rhythm that suits your needs

pprpl.co/3SgSIt4

@PReaulx I think you should cross post this on the EA forum

Check the full post for some more details, and feel free to let me know if any of this is useful. 7/7

philosopherscocoon.typepad.com/blog/2024/01/h…
