Patrick Bush

1.7K posts

Patrick Bush

@Patrick_Bush_VE

Crypto at VanEck, Mainstream antagonist, Diet Coke maximalist, history enjoyer, Consider the end Disclosure: https://t.co/jSTlkp5ILe

ATB Bank of Alberta has initiated coverage on Abaxx. We also appreciate the work that their analyst did on Abaxx’s ID++ and our emerging Tech platform(s) (which we believe can be more important than enterprise blockchain as a category…and yes “there can only be one”). We’re big fans of Alberta around this company, even working on some important Canadian commodity markets as I type. #29ers $ABXX #NowWeScale

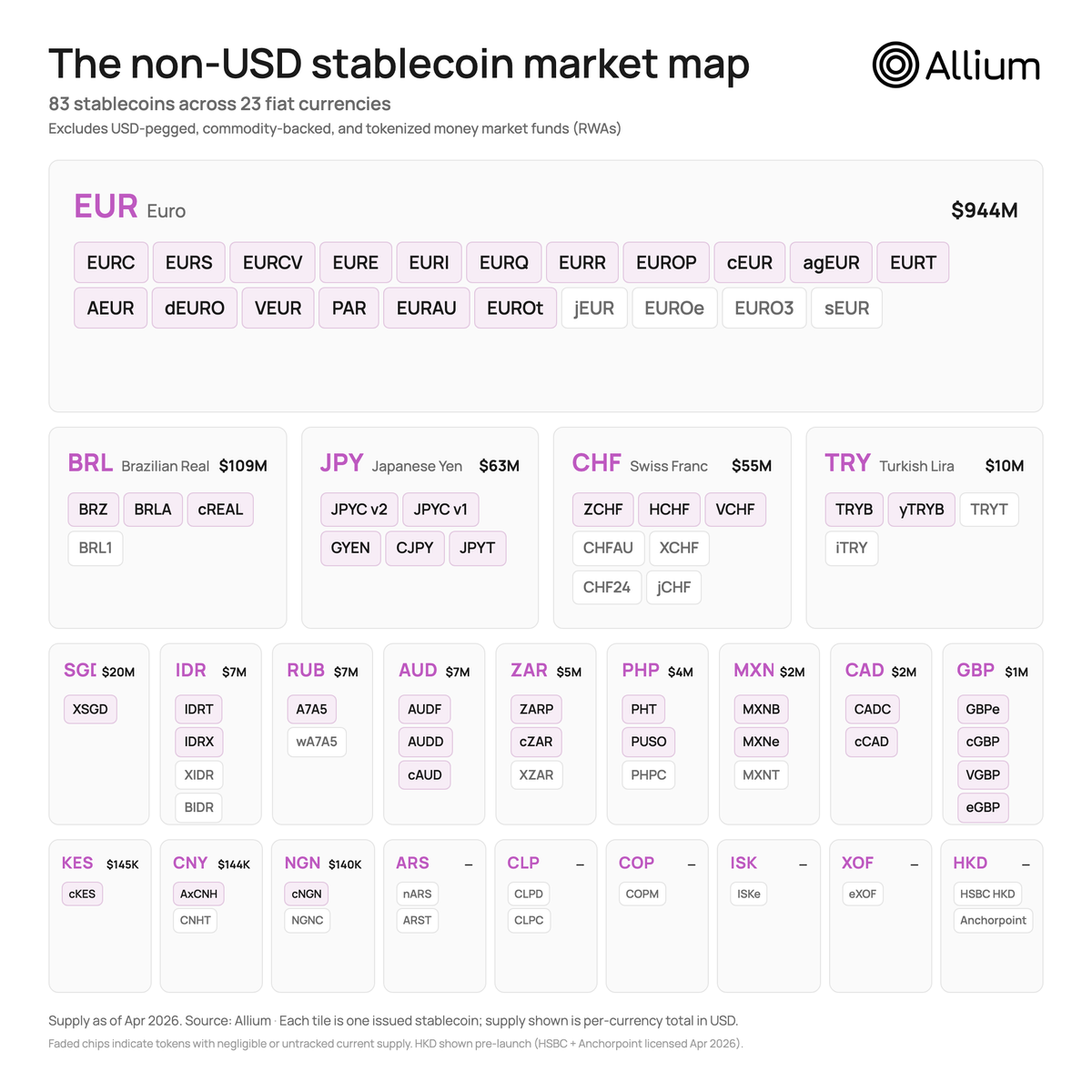

An estimated $60 billion was spent on remittance fees in 2025. This could be almost zero with stablecoins.

non-USD stablecoins up only on @base 20+ currencies supported with more every week

We are very close to the birth of the #SovereignComputer. 🆔++ We are currently in the transition stage of AI, quickly evolving from chatbots and monolithic applications adding an agentic help chat, to the early CLI agents executing the blurry line between deterministic software runtime and a random walk of compounding decision agency to reach an outcome. To run with the PC revolution analogy, I agree that we are in the “Apple II / Commodore PET” era where the ‘hobbyist’ harness engineers of today are showing a sneak peak of the inevitable, moving from personal computing to agentic sovereign computing. What we’re waiting for is our “VisiCalc” moment, ie the birth of the PC spreadsheet. Here’s my thesis: 🔹 The Personal Computer vision moved from debateable to inevitable with the birth of the spreadsheet “cell”. 🔹 The Sovereign Computer vision will move from debatable to inevitable with the birth of a robust Agent Identity application to the harness. What was so powerful about the spreadsheet? It was a sovereign interface of infinite paths. Before the cell, software was a series of rigid, temporal "if/then" corridors built by a programmer who had to anticipate your every move. The spreadsheet flipped the script. It provided a functional, two-dimensional grid where the user defined the relationship and the engine managed the plumbing. It was a "programmable canvas" that didn't require a computer science degree to master, it just required a mental model of the problem. In the Sovereign Computer era, the "Skill" is the new "Cell," but the Agent Identity layer is the grid that makes it functional. The spreadsheet succeeded because it gave the user "Computational Sovereignty", the power to build complex logic without a middleman. But the cell was a static unit, it was a container for data and math. The Agent Identity is the breakthrough primitive because it transforms a static skill into a personalized, executable extension of the self. When you anchor a Skill to a Sovereign Identity, the paradigm shifts: 🔹Identity as the Primary Key: In a spreadsheet, a cell knows its coordinates. In a Sovereign Computer, a Skill knows its owner. It doesn't need to ask for your name, your preferences, or your history, it inherits them from your Personal Knowledge Graph, and the private "data lake" (ID++ DWN) of your life. 🔹 From "Apps" to "Orchestration": We are moving from a world of "navigation" (opening apps, moving cursors, pulling drop down menus) to a world of "intent." You don’t "use" an application, you grant a Skill the right to represent your Identity. The "Robot Hand on the Doorknob" disappears because the door recognizes your cryptographic signature and opens itself. 🔹 The Sovereign Stack: If the spreadsheet allowed a finance pro to turn their mental model into a tool, the Sovereign Computer allows anyone to turn their Identity context into a swarm of delegated intelligences. The "VisiCalc moment" for this era is the realization that Identity is the ultimate Integration. We no longer need to wait for SaaS companies to build "bridges" between their walled garden silos. When you own your identity and your knowledge graph, you are the platform. The skills are simply the formulas you choose to run across the infinite grid of your own agency. The old computer organized your files. The Sovereign Computer organizes an infinitely scalable extension of your left brain.

New essay on the economics of structural change and the post-commodity future of work. 1. Almost any question about the impact of advanced AI on the economy needs to start at the same place: what is still scarce? Answer that, and the analysis becomes pretty straightforward. This essay explores what becomes scarce if AI really can replicate most of what humans do in production, and what this mean for the future of jobs. 2. My conjecture, working through the economics: labor reallocates across sectors, and the sector it reallocates to has properties that keep labor a meaningful share of the economy. Ultimately this is about the structure of demand itself. For this, we have to go back to Girard, Augustine and Rousseau: once people's base needs are met, their preferences shift to comparative motives (e.g., status, exclusivity, social desirability). This motive is inherently non-satiated. 4. The key paper is Comin, Lashkari, and Mestieri (Econometrica 2021). As people get richer, they don't buy proportionally more of everything. They shift spending toward sectors with higher income elasticity. They estimate income effects account for 75%+ of observed structural change. 5. The ironic consequence: the sector that gets automated becomes a smaller share of the economy, not a larger one. Agriculture got massively more productive and its share of employment collapsed. Manufacturing too. The "stagnant" sectors absorb the spending and the jobs. 6. So the question is: which sectors have high income elasticity in a post-AGI world? I argue it's what I call the relational sector. Categories where the human isn't just an input into production, it is part of the value. 7. Why does the relational sector have high income elasticity? Because human desire has a mimetic, relational dimension. We don't just want things for their intrinsic properties. We want what others want, and we want it more when others can't have it. Girard, Rousseau, Augustine, and Hobbes all saw this. 8. In work with Kristóf Madarász, we showed this experimentally: WTP roughly doubles when a random subset of others is excluded from the good. And in new work with Graelin Mandel, AI involvement kills the premium. Human-made art gains 44% from exclusivity; AI-made art only 21%. 9. This all comes together for the core argument. The sector that absorbs spending as AI makes commodity production cheap is one where human provenance is part of the value, and demand for it grows faster than income. Exactly the profile that keeps labor meaningful. 10. To be clear about the claim: I'm NOT saying aggregate labor share must rise. It may fall. The claim is about sectoral composition, i.e., where expenditure and employment go once commodities get cheap, and the fact that the sector that will absorb reallocated labor maps to a substantial component of human preferences and desire. 11. If you're interested in the formal model, a linked companion technical note works out all the economics. Read the essay here: aleximas.substack.com/p/what-will-be…

Another record day! Hour and a half to go and over 28,000 lots and $93k in rev. @abaxx_tech @abaxx_exchange @JoeRaia5 @RussRobSG @JoshCrumb

$FIGR as the seasonally strong months come up let me spell out what these numbers mean. so they did 1.2B in marketplace volume in march. if they just maintain that for the next 3 months that'll total 3.6B for q2. 3.6B Q2 / 2.9B Q1 = 24% QoQ growth. 24% QoQ growth assuming 0 monthly growth from march to june. since they reported ~160m revenue q4, i'm expecting a little greater than that for q1. which means base case revenue target for q2 should be in the 200m range. hope the market understands simple math. feel like my assumptions are very conservative.

@NYCMayor Steinway subway station renovation is 6 months delayed.

Today is a monumentous day for quantum computing and cryptography. Two breakthrough papers just landed (links in next tweet). Both papers improve Shor's algorithm, infamous for cracking RSA and elliptic curve cryptography. The two results compound, optimising separate layers of the quantum stack. The results are shocking. I expect a narrative shift and a further R&D boost toward post-quantum cryptography. The first paper is by Google Quantum AI. They tackle the (logical) Shor algorithm, tailoring it to crack Bitcoin and Ethereum signatures. The algorithm runs on ~1K logical qubits for the 256-bit elliptic curve secp256k1. Due to the low circuit depth, a fast superconducting computer would recover private keys in minutes. I'm grateful to have joined as a late paper co-author, in large part for the chance to interact with experts and the alpha gleaned from internal discussions. The second paper is by a stealthy startup called Oratomic, with ex-Google and prominent Caltech faculty. Their starting point is Google's improvements to the logical quantum circuit. They then apply improvements at the physical layer, with tricks specific to neutral atom quantum computers. The result estimates that 26,000 atomic qubits are sufficient to break 256-bit elliptic curve signatures. This would be roughly a 40x improvement in physical qubit count over previous state-of-the-art. On the flip side, a single Shor run would take ~10 days due to the relatively slow speed of neutral atoms. Below are my key takeaways. As a disclaimer, I am not a quantum expert. Time is needed for the results to be properly vetted. Based on my interactions with the team, I have faith the Google Quantum AI results are conservative. The Oratomic paper is much harder for me to assess, especially because of the use of more exotic qLDPC codes. I will take it with a grain of salt until the dust settles. → q-day: My confidence in q-day by 2032 has shot up significantly. IMO there's at least a 10% chance that by 2032 a quantum computer recovers a secp256k1 ECDSA private key from an exposed public key. While a cryptographically-relevant quantum computer (CRQC) before 2030 still feels unlikely, now is undoubtedly the time to start preparing. → censorship: The Google paper uses a zero-knowledge (ZK) proof to demonstrate the algorithm's existence without leaking actual optimisations. From now on, assume state-of-the-art algorithms will be censored. There may be self-censorship for moral or commercial reasons, or because of government pressure. A blackout in academic publications would be a tell-tale sign. → cracking time: A superconducting quantum computer, the type Google is building, could crack keys in minutes. This is because the optimised quantum circuit is just 100M Toffoli gates, which is surprisingly shallow. (Toffoli gates are hard because they require production of so-called "magic states".) Toffoli gates would consume ~10 microseconds on a superconducting platform, totalling ~1,000 sec of Shor runtime. → latency optimisations: Two latency optimisations bring key cracking time to single-digit minutes. The first parallelises computation across quantum devices. The second involves feeding the pubkey to the quantum computer mid-flight, after a generic setup phase. → fast- and slow-clock: At first approximation there are two families of quantum computers. The fast-clock flavour, which includes superconducting and photonic architectures, runs at roughly 100 kHz. The slow-clock flavour, which includes trapped ion and neutral atom architectures, runs roughly 1,000x slower (~100 Hz, or ~1 week to crack a single key). → qubit count: The size-optimised variant of the algorithm runs on 1,200 logical qubits. On a superconducting computer with surface code error correction that's roughly 500K physical qubits, a 400:1 physical-to-logical ratio. The surface code is conservative, assuming only four-way nearest-neighbour grid connectivity. It was demonstrated last year by Google on a real quantum computer. → future gains: Low-hanging fruit is still being picked, with at least one of the Google optimisations resulting from a surprisingly simple observation. Interestingly, AI was not (yet!) tasked to find optimisations. This was also the first time authors such as Craig Gidney attacked elliptic curves (as opposed to RSA). Shor logical qubit count could plausibly go under 1K soonish. → error correction: The physical-to-logical ratio for superconducting computers could go under 100:1. For superconducting computers that would be mean ~100K physical qubits for a CRQC, two orders of magnitude away from state of the art. Neutral atoms quantum computers are amenable to error correcting codes other than the surface code. While much slower to run, they can bring down the physical to logical qubit ratio closer to 10:1. → Bitcoin PoW: Commercially-viable Bitcoin PoW via Grover's algorithm is not happening any time soon. We're talking decades, possibly centuries away. This observation should help focus the discussion on ECDSA and Schnorr. (Side note: as unofficial Bitcoin security researcher, I still believe Bitcoin PoW is cooked due to the dwindling security budget.) → team quality: The folks at Google Quantum AI are the real deal. Craig Gidney (@CraigGidney) is arguably the world's top quantum circuit optimisooor. Just last year he squeezed 10x out of Shor for RSA, bringing the physical qubit count down from 10M to 1M. Special thanks to the Google team for patiently answering all my newb questions with detailed, fact-based answers. I was expecting some hype, but found none.