Subba Reddy

13K posts

@PostPCEra

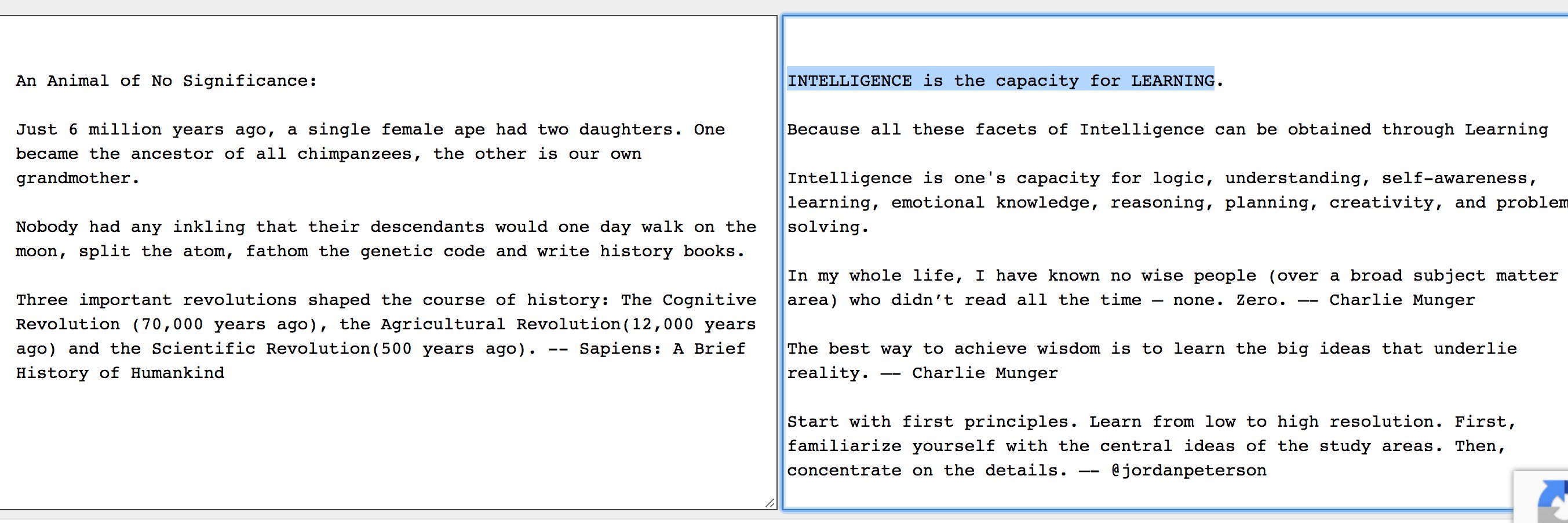

Everything around me was someone's lifework | Intelligence is the capacity for learning | Entrepreneur at heart (CS grad), broadly curious, I live & breathe EdTech

First 5-year SCA signed. New EUV layer roadmap. ✍️

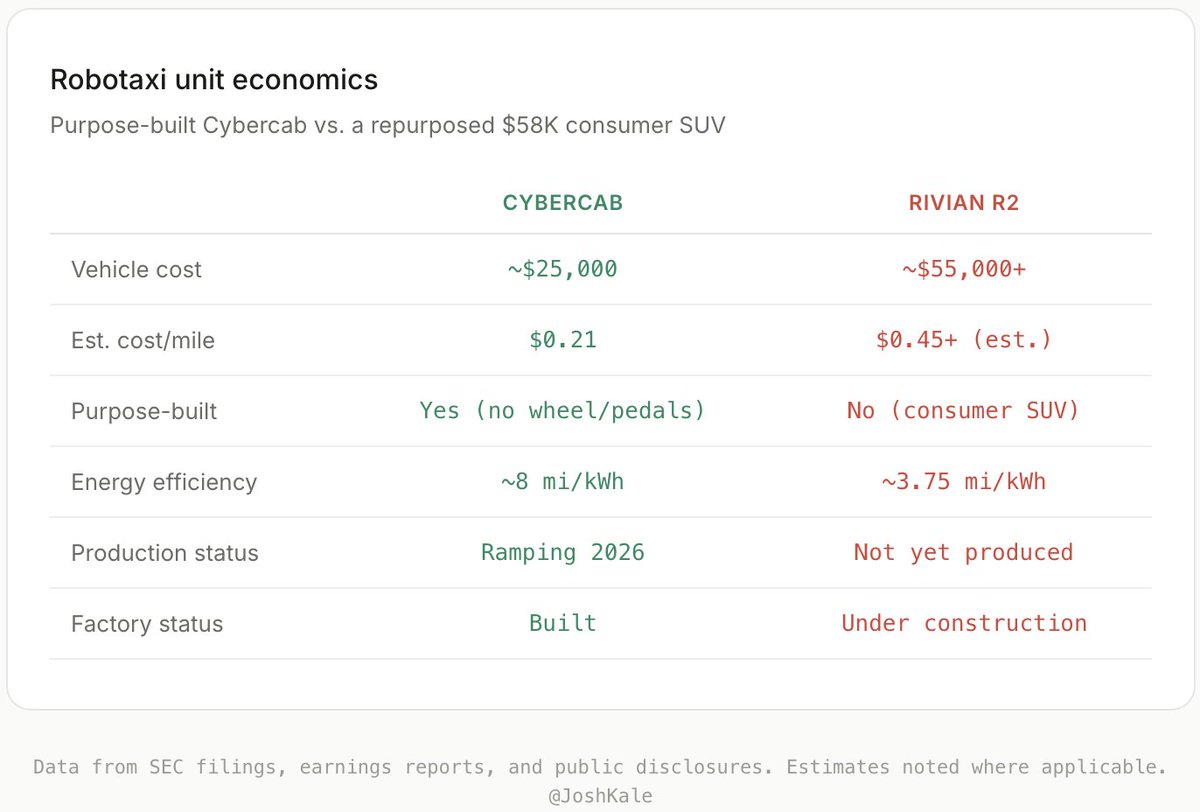

A fleet of R2 Robotaxis is coming exclusively to @Uber. ⚡🌿 Today, we announced a partnership to help both companies accelerate their autonomous vehicle plans across 25 cities in the US, Canada and Europe by the end of 2031. rivn.co/uber

@TechLayoffLover "We don't need talent acquisition. We need talent extraction." That one line explains everything CS grads are experiencing right now. They hired us to learn from us. Not to keep us. Figured this out the hard way. Now I'm building something for myself instead.

This AI cycle is fundamentally different from every prior memory supercycle in $MU history. Past supercycles were driven by unit volume growth, with more phones + servers buying largely commoditized DRAM, but this one is a capacity-constrained cycle where HBM sells at ~5x conventional DRAM ASPs. Hyperscalers are willing to pay whatever it takes because the real cost is leaving massive GPU clusters underutilized. That is how Micron ends up producing $16B of operating profit in a single quarter.