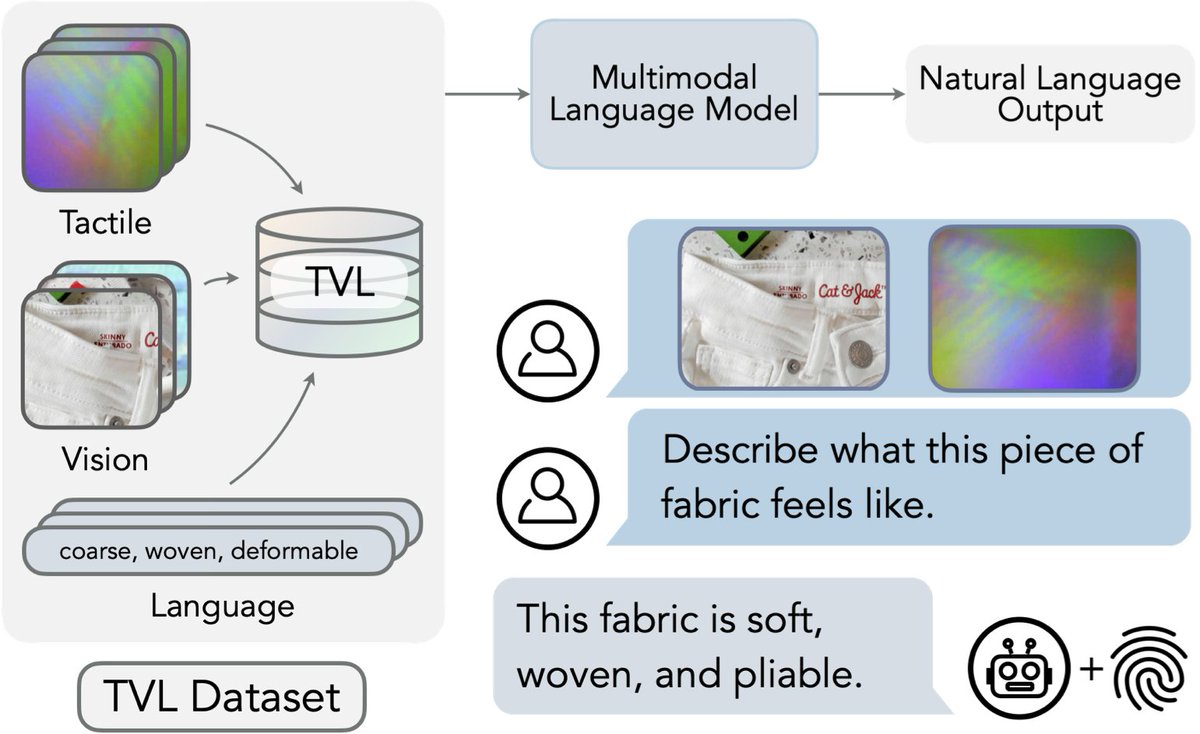

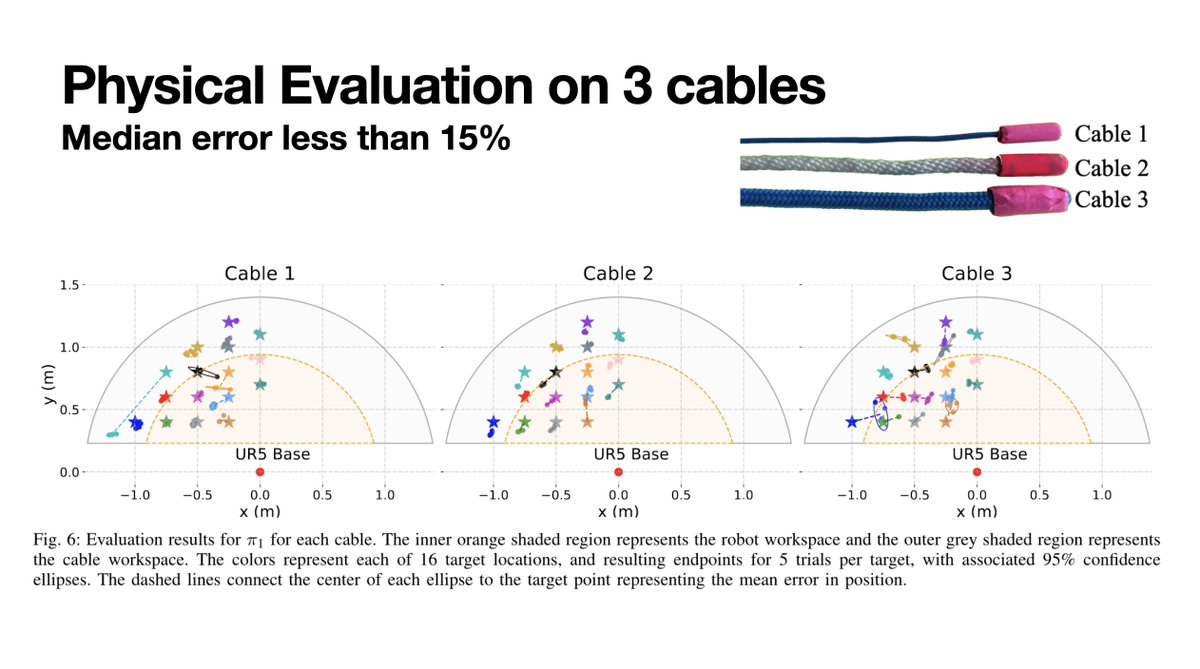

Robotics: coding agents’ next frontier. So how good are they? We introduce CaP-X: an open-source framework and benchmark for coding agents, where they write code for robot perception and control, execute it on sim and real robots, observe the outcomes, and iteratively improve code reliability. From @NVIDIA @Berkeley_AI @CMU_Robotics @StanfordAILab capgym.github.io 🧵