Max Fu

205 posts

@letian_fu

scaling robotics. Intern @NVIDIA. PhD student @UCBerkeley @berkeley_ai. Prev @Apple @autodesk

SONIC is now open-source! Generalist whole-body teleoperation for EVERYONE! Our team has long been building comprehensive pipelines for whole-body control, kinematic planner, and teleoperation, and they will all be shared. This will be a continuous update; inference code + model already there, training code and gr00t integration coming soon! Code: github.com/NVlabs/GR00T-W… Docs: nvlabs.github.io/GR00T-WholeBod… Site: nvlabs.github.io/GEAR-SONIC/

We’re rolling out an upgrade designed to help robots reason about the physical world. 🤖 Gemini Robotics-ER 1.6 has significantly better visual and spatial understanding in order to plan and complete more useful tasks. Here’s why this is important 🧵

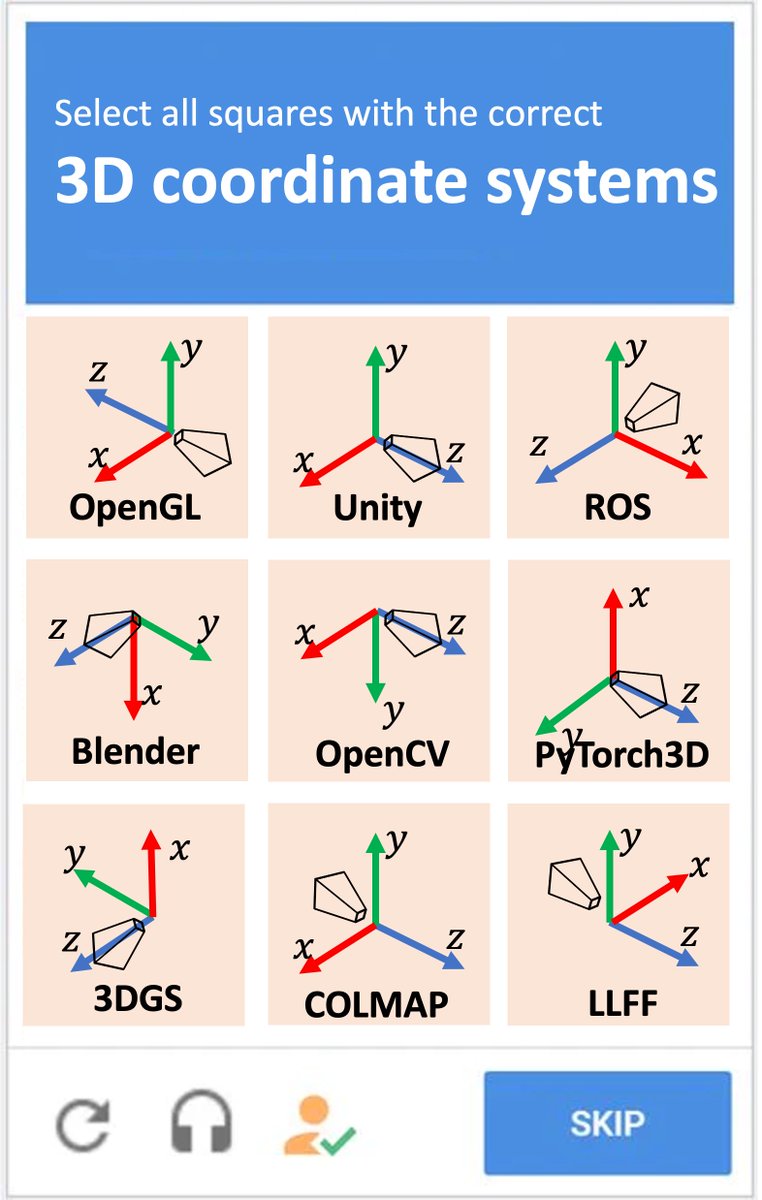

wait wait wait. So a new kind of 3D representation (gaussian splats) was invented and they decided to use Y-DOWN as the standard up-direction ?? In 2023 ?!?!!! Need to have some words w/ my old friends at INRIA...and I guess we need a new chart...

ThreadWeaver: Adaptive Threading for Efficient Parallel Reasoning in Language Models

Robotics: coding agents’ next frontier. So how good are they? We introduce CaP-X: an open-source framework and benchmark for coding agents, where they write code for robot perception and control, execute it on sim and real robots, observe the outcomes, and iteratively improve code reliability. From @NVIDIA @Berkeley_AI @CMU_Robotics @StanfordAILab capgym.github.io 🧵
