(2/7) 💵 With training costs exceeding $100M for GPT-4, efficient alternatives matter. We show that diffusion LMs unlock a new paradigm for compute-optimal language pre-training.

RedBedDread



Android has always been about choice. Today, we're sharing how we're evolving the ecosystem so you don't have to choose between an open platform and a secure one. We're introducing a new advanced flow for sideloading, where power users can go through a one-time flow to enable their devices to load software from unverified developers. We've designed this process to stop more vulnerable users from being coerced into doing this during a guided scam (guided scams are a major problem for people today). We also have new account types for students and hobbyists that address their feedback.

I got mad about people defending MCP so I made this video. The first minute is just me being very mad, but then I tried to contribute something of value after that. youtube.com/watch?v=m0VyZU…

It's fun shopping for new banks because it seems all banks have terrible ratings, and when you look at them, like 66% of them are like, "We were overdrawn by $10,000 and the bank closed our account and refused to work with us!!!!!!"

quick woman drawing tip: if she's lying on her back, her chest will flatten like pancakes and not look like this... unless she has like implants or something

The multi-vector era is here and there is no going back. Reason-ModernColBERT tops BrowseComp-Plus, the hardest agentic search benchmark available, by 7.59 points on accuracy. 🥇 on accuracy. 🥇 on recall. 🥇 on calibration. 📉 Fewest search calls. The models it outperforms? Up to 54× larger. Across reasoning-intensive retrieval (BRIGHT), code search (MTEB Code), and agentic Deep Research (BrowseComp-Plus), the pattern is the same: late interaction dominates, with a fraction of the parameters. 149M parameters. Open weights. Open code. Built with PyLate in a few hours. Full results, analysis and recipe on the LightOn blog: lighton.ai/lighton-blogs/…
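For readers unfamiliar with "late interaction": in ColBERT-style multi-vector retrieval, the query and each document are encoded as one embedding per token rather than a single vector, and a document's score is commonly computed with MaxSim. Each query token takes its best cosine match among the document's tokens, and those maxima are summed. The sketch below shows only that scoring rule on toy, hand-written 2-D embeddings. It is not Reason-ModernColBERT's or PyLate's code, and the function names (`normalize`, `maxsim_score`) are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def normalize(v: Vector) -> Vector:
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def dot(a: Vector, b: Vector) -> float:
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_tokens: List[Vector], doc_tokens: List[Vector]) -> float:
    """Late-interaction (MaxSim) score, assuming both token lists are non-empty.

    For every query token embedding, find its highest cosine similarity to any
    document token embedding, then sum those per-token maxima.
    """
    q = [normalize(v) for v in query_tokens]
    d = [normalize(v) for v in doc_tokens]
    return sum(max(dot(qv, dv) for dv in d) for qv in q)


# Toy example: a two-token query and two candidate documents (made-up vectors).
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0]]  # covers both query tokens
doc_b = [[1.0, 0.0], [1.0, 0.0]]  # covers only the first query token

ranked = sorted(
    [("doc_a", doc_a), ("doc_b", doc_b)],
    key=lambda item: maxsim_score(query, item[1]),
    reverse=True,
)
print([name for name, _ in ranked])
```

The design point the sketch illustrates is that document token embeddings can be computed and indexed ahead of time, while the cheap max-then-sum interaction happens only at query time.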

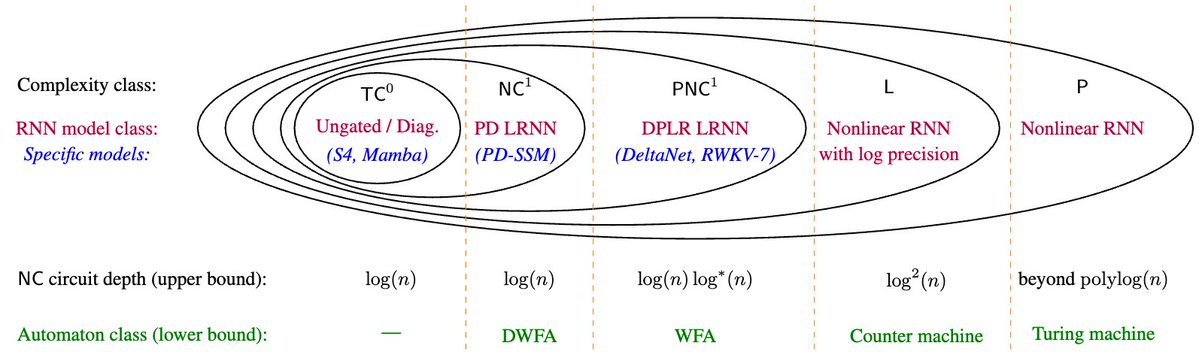

Introducing Olmo Hybrid, a 7B fully open model combining transformer and linear RNN layers. It decisively outperforms Olmo 3 7B across evals, w/ new theory & scaling experiments explaining why. 🧵