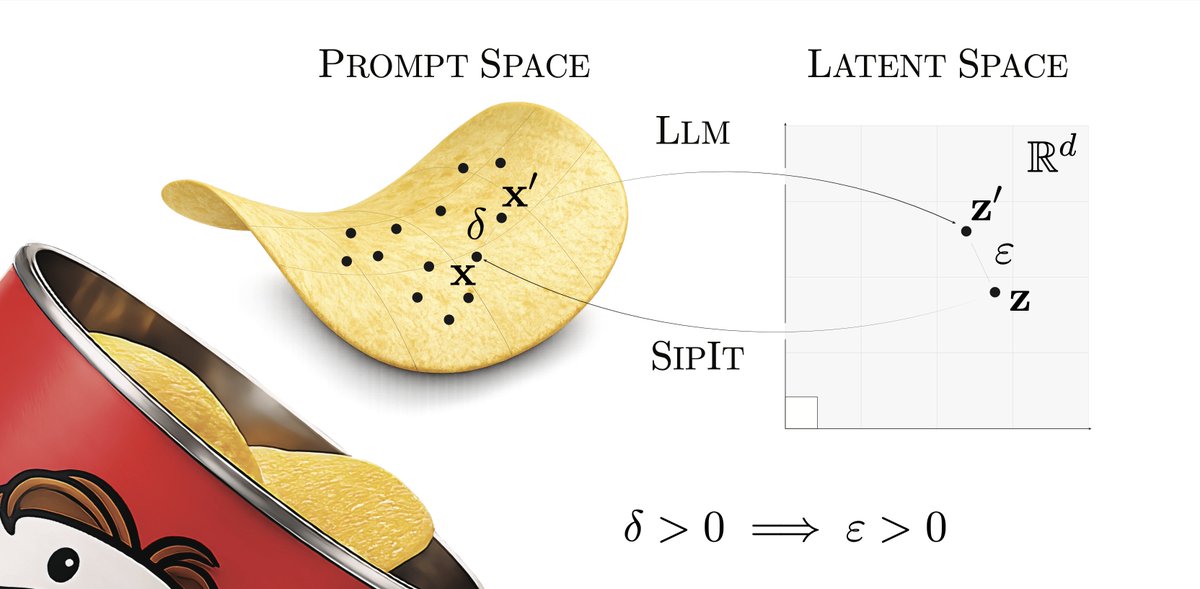

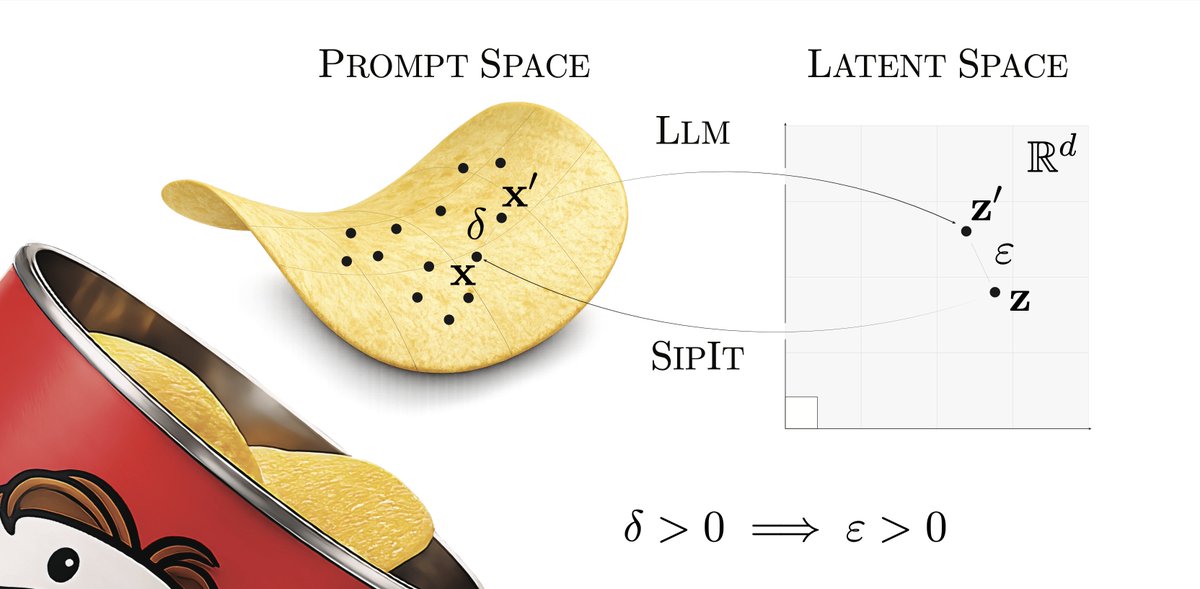

LLMs are injective and invertible. In our new paper, we show that different prompts always map to different embeddings, and this property can be used to recover input tokens from individual embeddings in latent space. (1/6)
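The inversion idea in the tweet can be illustrated with a toy sketch. It assumes a hypothetical tiny causal recurrence in place of a real transformer; the names `step`, `hidden_states`, and `invert` and the random weights are made up for illustration. For a fixed prefix state, each step is injective in the token, so the tokens can be recovered one position at a time by brute-force search over the vocabulary. This is a simplified stand-in, not the paper's algorithm.

```python
import math
import random

# Hypothetical toy "language model": a tiny causal recurrence, NOT a real
# transformer. Each position's hidden state depends only on the prefix.
VOCAB_SIZE = 50
DIM = 8

rng = random.Random(0)
# Random token embeddings. Distinct rows make each step injective for a
# fixed previous state (in exact arithmetic), since tanh is injective.
EMBED = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(VOCAB_SIZE)]
# Random recurrent weight matrix (DIM x DIM).
W = [[rng.gauss(0.0, 0.3) for _ in range(DIM)] for _ in range(DIM)]


def step(prev, token):
    """Next hidden state: tanh(W @ prev + EMBED[token]), elementwise."""
    return [
        math.tanh(sum(W[i][j] * prev[j] for j in range(DIM)) + EMBED[token][i])
        for i in range(DIM)
    ]


def hidden_states(tokens):
    """Forward pass: return one hidden state per input token."""
    h = [0.0] * DIM
    states = []
    for tok in tokens:
        h = step(h, tok)
        states.append(h)
    return states


def invert(states, tol=1e-9):
    """Recover tokens position by position: on the already-recovered prefix,
    try every vocabulary token and keep the one whose hidden state matches
    the observed state at that position."""
    h = [0.0] * DIM
    recovered = []
    for target in states:
        best_tok, best_err = None, float("inf")
        for tok in range(VOCAB_SIZE):
            cand = step(h, tok)
            err = max(abs(a - b) for a, b in zip(cand, target))
            if err < best_err:
                best_tok, best_err = tok, err
        if best_err > tol:
            raise ValueError("no vocabulary token reproduces this hidden state")
        recovered.append(best_tok)
        h = step(h, best_tok)
    return recovered


prompt = [3, 17, 42, 8, 8, 0]
print(invert(hidden_states(prompt)))  # → [3, 17, 42, 8, 8, 0]
```

Brute-force search costs one forward step per vocabulary entry per position. It only works here because the toy vocabulary is tiny and the recovered prefix reproduces the forward pass exactly.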

raulpuri.eth

486 posts

@TheRealRPuri

AI @ hrooeu sjmrtPectPs | past: OpenAI - ChatGPT Multimodal, Her, 4o, GPT4V, 4, 3.5, Codex | NVIDIA - megatron, language | 🐻


My raw thoughts on the job market -- both for those hiring and those searching -- at the cutting edge of AI. interconnects.ai/p/thoughts-on-…

To preserve or improve chain-of-thought (CoT) monitorability, we have to be able to measure it. I'm excited to announce our new research on this at OpenAI

Simple proposal: “Eataly but Chinese”

Westfield Mall has 1.5M square feet and is 93% vacant. It just sold for $134M! What would you do if you bought it and had free rein?

Machines that can predict what their sensors (touch, cameras, keyboard, temperature, microphones, gyros, …) will perceive are already aware and have subjective experience. It’s all a matter of degree now. More sensors, data, compute, tasks will lead without any doubt to the “I think therefore I am” moment for computers, and we’re not ready for it yet. arxiv.org/pdf/1804.06318 share.google/kxx6WyqHpwPmo6…