@GoJun315 You're welcome to try our open-source 100M TTS model. It also runs locally on a Mac and has strong Chinese support: github.com/OpenMOSS/MOSS-…

Yang Gao

104 posts

@YangGao07

Researcher @Open_MOSS | ex-Shanghai AI Lab | Dev @OpenMMLab #MMRazor | Member @intern_lm | Building open-source LLM & ML systems

DEEPSEEK-V4 IS RELEASED

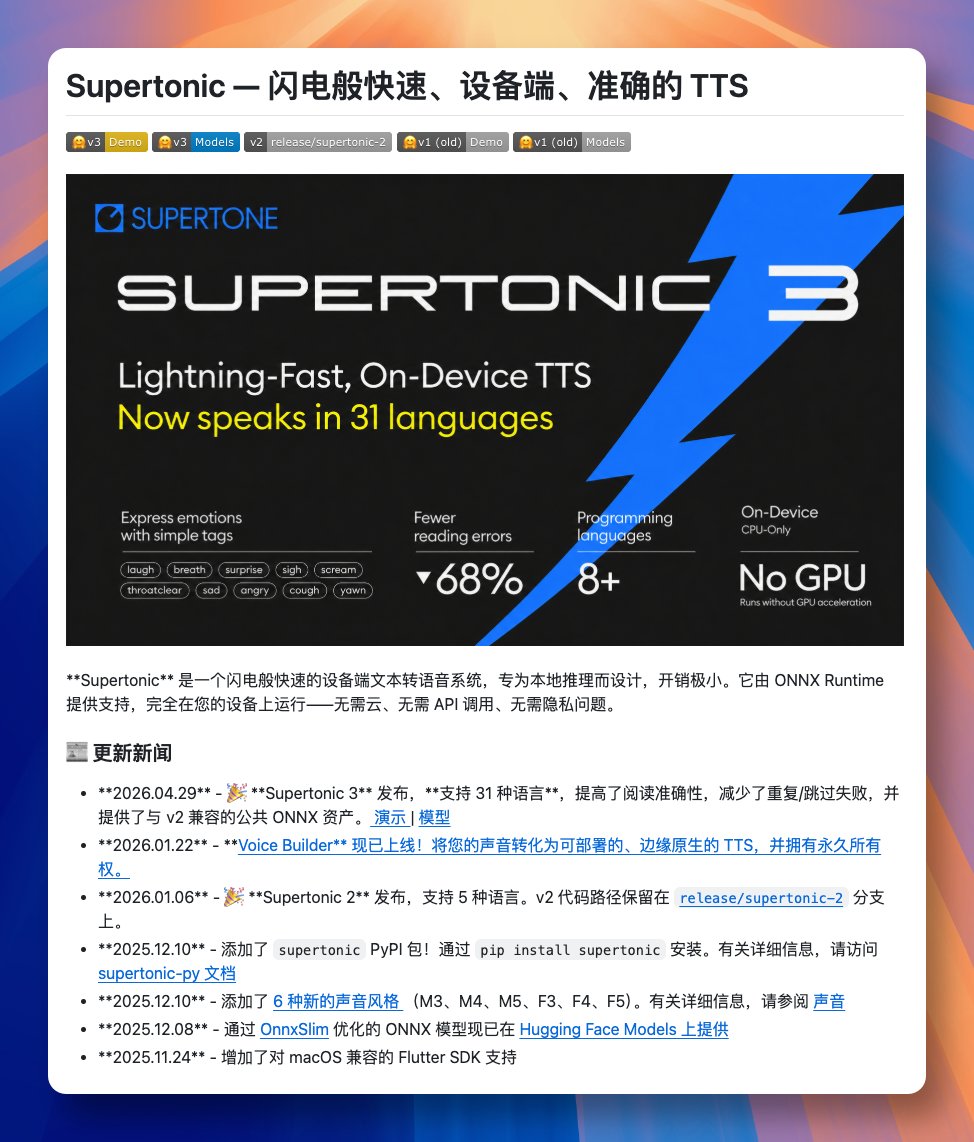

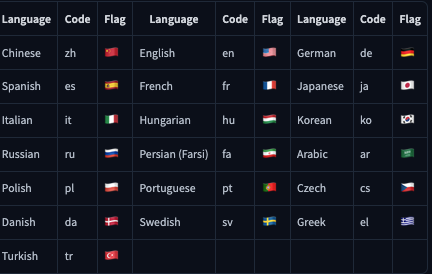

Say hello to MOSS-TTS-Nano 🚀 0.1B multilingual TTS from MOSI.AI and OpenMOSS. Designed for realtime speech generation without a GPU. Runs directly on CPU, keeping the deployment stack simple enough for local demos, web serving, and lightweight product integration. Part of the MOSS-TTS family alongside the 1.7B and 8B flagship models. 🤖 modelscope.cn/models/openmos… 🌍 modelscope.ai/models/openmos… 💻 github.com/OpenMOSS/MOSS-…

Participating in an offline meeting