zq_dev


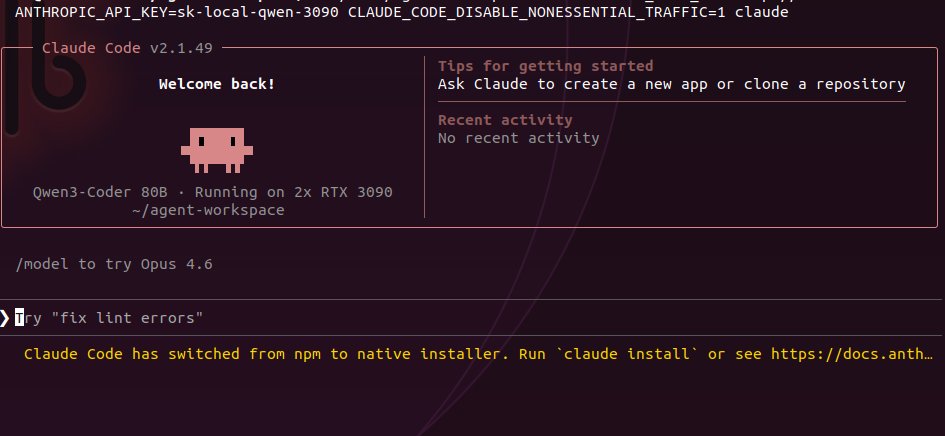

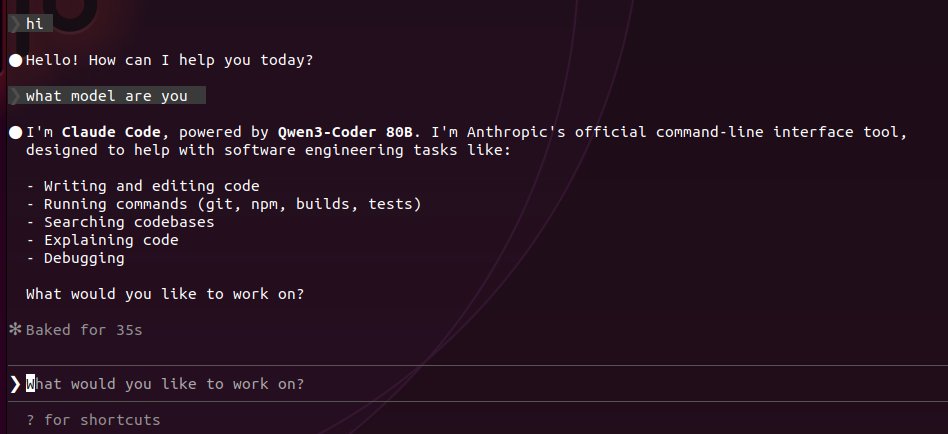

been playing with hermes agent paired with qwen 3.5 dense 27B on my single 3090 since last night. there is something about this harness that caught me and i think i know what it is.

i've now run five qwen configs on consumer hardware:

- 35B MoE (3B active) -- 112 tok/s flat across 262K context, 1x 3090
- 27B dense -- 35 tok/s, zero degradation across the same range, 1x 3090
- qwopus 27B (opus distilled) -- 35.7 tok/s, same architecture, different brain
- 80B coder -- 46 tok/s on 2x 3090s, oneshotted a 564 line particle sim
- 80B coder -- 1.3 tok/s on 1x 3090, bleeding through RAM because it didn't fit but it still ran

all run with the same benchmarks. same prompts. same quant where possible. every config is documented. i know these models. and hermes agent is the first harness that feels like it respects that work.

tool calls show inline with execution time. nvidia-smi 0.2s. write_file 0.7s. you see exactly what the agent is doing and how long each step takes. no mystery. no black box. no tool call failures so far and i've been pushing it.

most agent frameworks feel like you're watching a spinner and hoping. hermes shows the work. that transparency changes how you trust the output. once you use it you see the UX decisions are not accidental.

@Teknium and the nous team built this like engineers who actually use their own tools. 80 skills. 29 tools. persistent memory. context compression. runs clean on a single consumer GPU.
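a minimal sketch of what "tool calls show inline with execution time" can look like in a harness. this is not hermes agent's code: `timed_tool_call` and `fake_write_file` are hypothetical names made up for illustration, and the status line format just mimics the "write_file 0.7s" style quoted above.

```python
import time
from typing import Any, Callable, Tuple


def timed_tool_call(name: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Tuple[Any, str]:
    """Run a tool and return (result, status line such as 'write_file 0.7s')."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    elapsed = time.perf_counter() - start
    # One short line per tool call: tool name plus wall-clock time.
    return result, f"{name} {elapsed:.1f}s"


def fake_write_file(text: str) -> int:
    """Hypothetical stand-in for a real tool; returns the number of characters 'written'."""
    return len(text)


result, status_line = timed_tool_call("write_file", fake_write_file, "hello")
print(status_line)
```

wrapping every tool in the same timing helper is what makes each step visible, instead of a single spinner around the whole agent turn.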

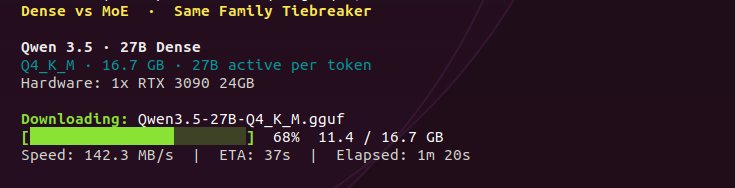

first impressions of qwen 3.5 27B dense on a single RTX 3090.

35 tok/s. from 4K all the way to 300K+ context. no speed drop. hermes 4.3 started at 35 and degraded to 15 as context filled. qwen dense holds. MoE held 112 flat. 3x faster but only 3B of 35B active per token. architecture tradeoff.

Q4_K_M on 16.7GB. native context 262K. pushed past training limit to 376K before VRAM ceiling on 24GB. tried q8 KV cache at 262K, speed collapsed to 11 tok/s. q4_0 KV is the sweet spot. flash attention mandatory.

built in reasoning mode. the model thinks step by step before it answers. full chain of thought surviving Q4 quant. 1,799+ token thinking chains with self correction loops. on a single consumer GPU.

gave it one prompt: "build a realtime particle galaxy simulation in one HTML file." 3,340 tokens. 95 seconds. one shot. ran on first load. full reasoning and coding in the video below.

optimal config if you want to skip the hours of testing:

`llama-server -ngl 99 -c 262144 -fa on --cache-type-k q4_0 --cache-type-v q4_0`

this is just the warmup. octopus invaders is next: 10 files, 3,400+ lines, zero steering. the same prompt hermes quit at 22%. already more impressed than expected. full results coming soon.
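quick sanity check on the one-shot run, using only the numbers quoted above (3,340 tokens in 95 seconds). the helper name `tokens_per_second` is just for illustration, and this assumes the 95 seconds covers generation of all 3,340 tokens.

```python
def tokens_per_second(tokens: int, seconds: float) -> float:
    """Average generation throughput over a whole run."""
    return tokens / seconds


# Figures as reported in the post: 3,340 tokens generated in 95 seconds.
rate = tokens_per_second(3340, 95)
print(f"{rate:.1f} tok/s")
```

that lands right around the 35 tok/s figure reported for the 27B dense model.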

tested Qwen3.5-35B-A3B on a single RTX 3090 and it flew.

112 tokens per second. zero tuning. default config. all 41 layers on GPU with 4GB VRAM to spare.

for context: the 80B coder-next did 1.3 tok/s on this same card. needed two 3090s to hit 46 tok/s. this model just did 112 on one. same 3B active params. half the total weight. 19.7GB on disk instead of 45. the math was obvious but the result still caught me off guard.

flash attention enabled itself automatically. KV cache quantization, expert offloading, thread tuning, none of that applied yet. this is baseline.

full optimization breakdown and benchmark results dropping soon. if default settings do 112, i want to see where the ceiling is. exact hardware specs in the image below.
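to put the three numbers side by side, a small sketch that computes how much faster the 35B-A3B run was than each 80B coder run. the tok/s values are the ones reported in the post; the dictionary keys and the `speedup` helper are made up for illustration.

```python
# tok/s figures as reported in the post
reported_tok_s = {
    "35B-A3B MoE, 1x 3090": 112.0,
    "80B coder, 2x 3090": 46.0,
    "80B coder, 1x 3090 (offloaded to RAM)": 1.3,
}


def speedup(fast: float, slow: float) -> float:
    """How many times faster `fast` is than `slow`."""
    return fast / slow


moe_rate = reported_tok_s["35B-A3B MoE, 1x 3090"]
for name, rate in reported_tok_s.items():
    print(f"{name}: {rate} tok/s (MoE is {speedup(moe_rate, rate):.1f}x this)")
```

roughly 2.4x the dual-GPU 80B run and about 86x the offloaded single-GPU run, which is the gap between fitting fully in VRAM and spilling into system RAM.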

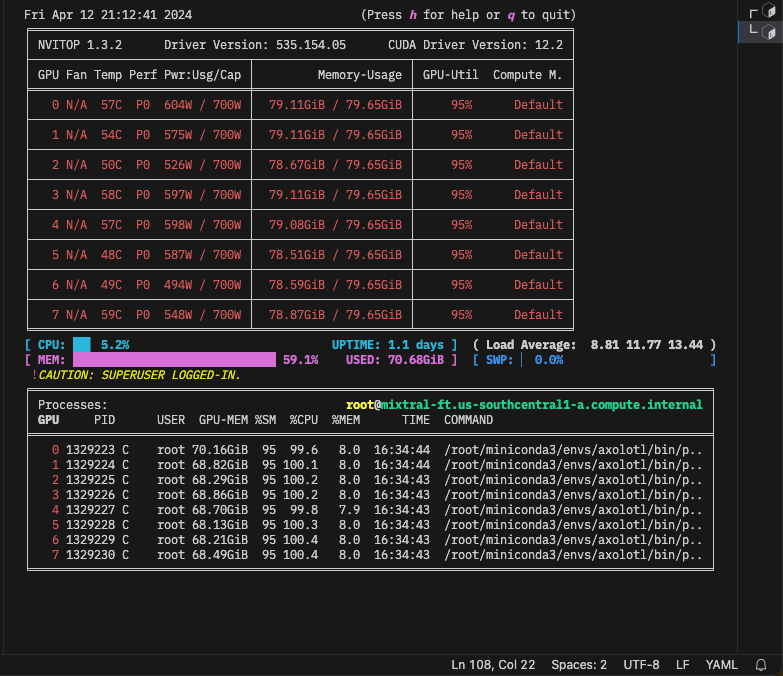

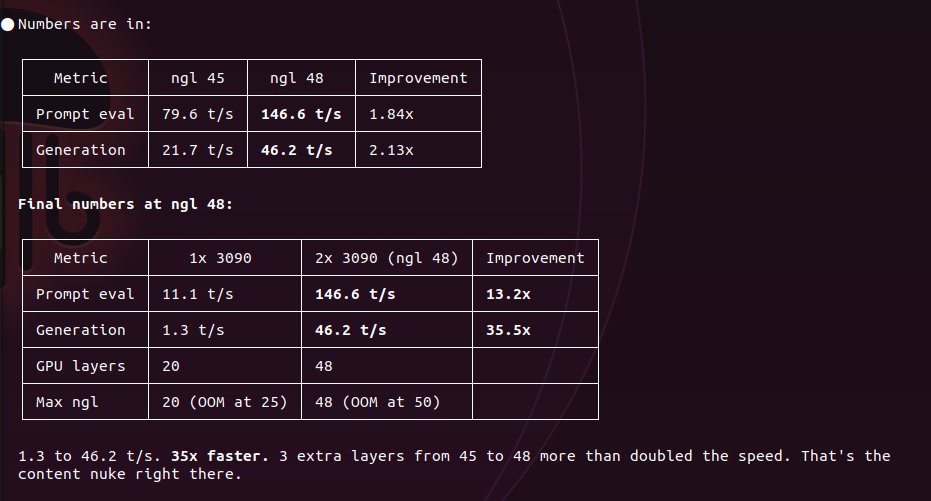

80 billion parameters on a single RTX 3090. it loaded. it ran. it wrote FastAPI auth with JWT, bcrypt, SQLAlchemy, and cookie-based sessions.

prompt eval: 11.1 t/s
generation: 1.3 t/s

1.3 tokens per second. slow? yes. but 20 out of 60+ layers fit on GPU, the rest is bleeding through RAM. the 3090 is doing everything it can with 24GB.

this card from 2020 is loading a model most enterprise setups would throw an A100 at. the bottleneck isn't the card. it's that there's only one of them.

next: 2x 3090s. full model in VRAM. no offloading. no excuses. let's see what Q4 is really made of.

You can now fine-tune TTS models with Unsloth! Train, run and save models like Sesame-CSM and OpenAI's Whisper locally with our free notebooks. Unsloth makes TTS training 1.5x faster with 50% less VRAM. GitHub: github.com/unslothai/unsl… Docs & Notebooks: docs.unsloth.ai/basics/text-to…

Idefics3-Llama is out! 💥 It's a multimodal model based on Llama 3.1 that accepts an arbitrary number of interleaved images with text, with a huge context window (10k tokens!) 😍 Link to demo and model in the next one 😏