Pinned Tweet

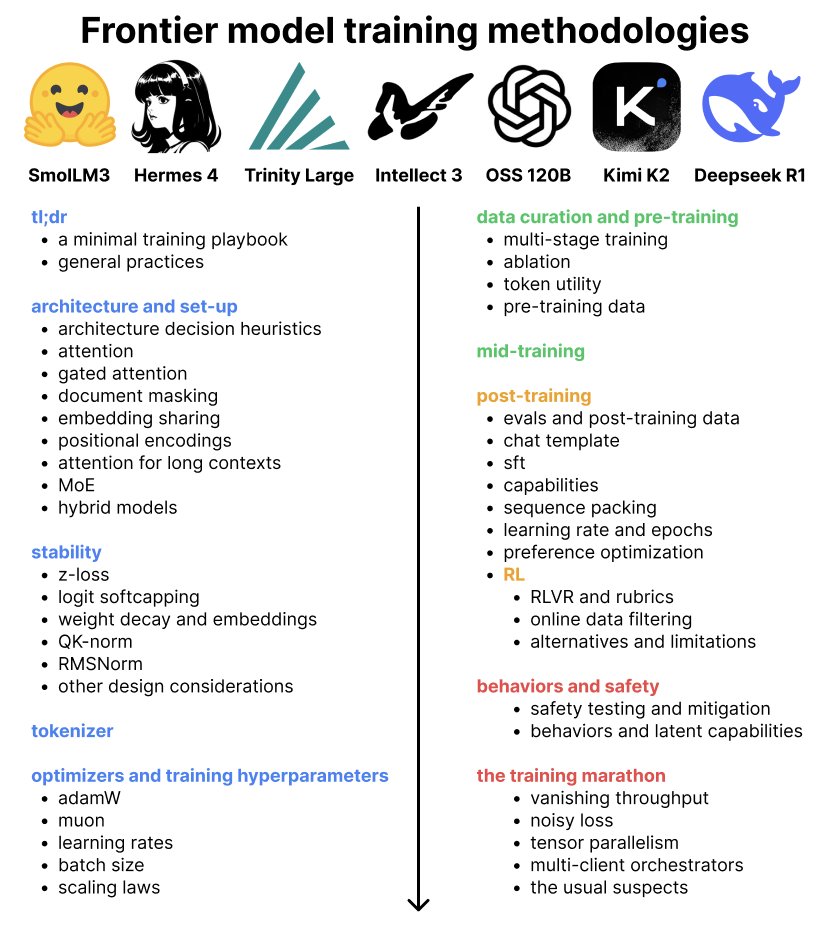

new blog! What methodologies do labs use to train frontier models?

The blog distills 7 open-weight model reports from frontier labs, covering architecture, stability, optimizers, data curation, pre/mid/post-training + RL, and behaviors/safety.

djdumpling.github.io/2026/01/31/fro…
