pranav

@_pranavnt

robot learning @uwcse • prev @morph_labs @atlasfellow

Think about the power Hegseth is asserting here. He is claiming that the DoD can force all contractors to stop doing business of any kind with arbitrary other companies. In other words, every operating system vendor, every manufacturer of hardware, every hyperscaler, every type of firm the DoD contracts with—all their services and products can be denied to any economic actor at will by the Secretary of War. This is obviously a psychotic power grab. It is almost surely illegal, but the message it sends is that the United States Government is a completely unreliable partner for any kind of business. The damage done to our business environment is profound. No amount of deregulatory vibes sent by this administration matters compared to this arson.

text is the universal interface

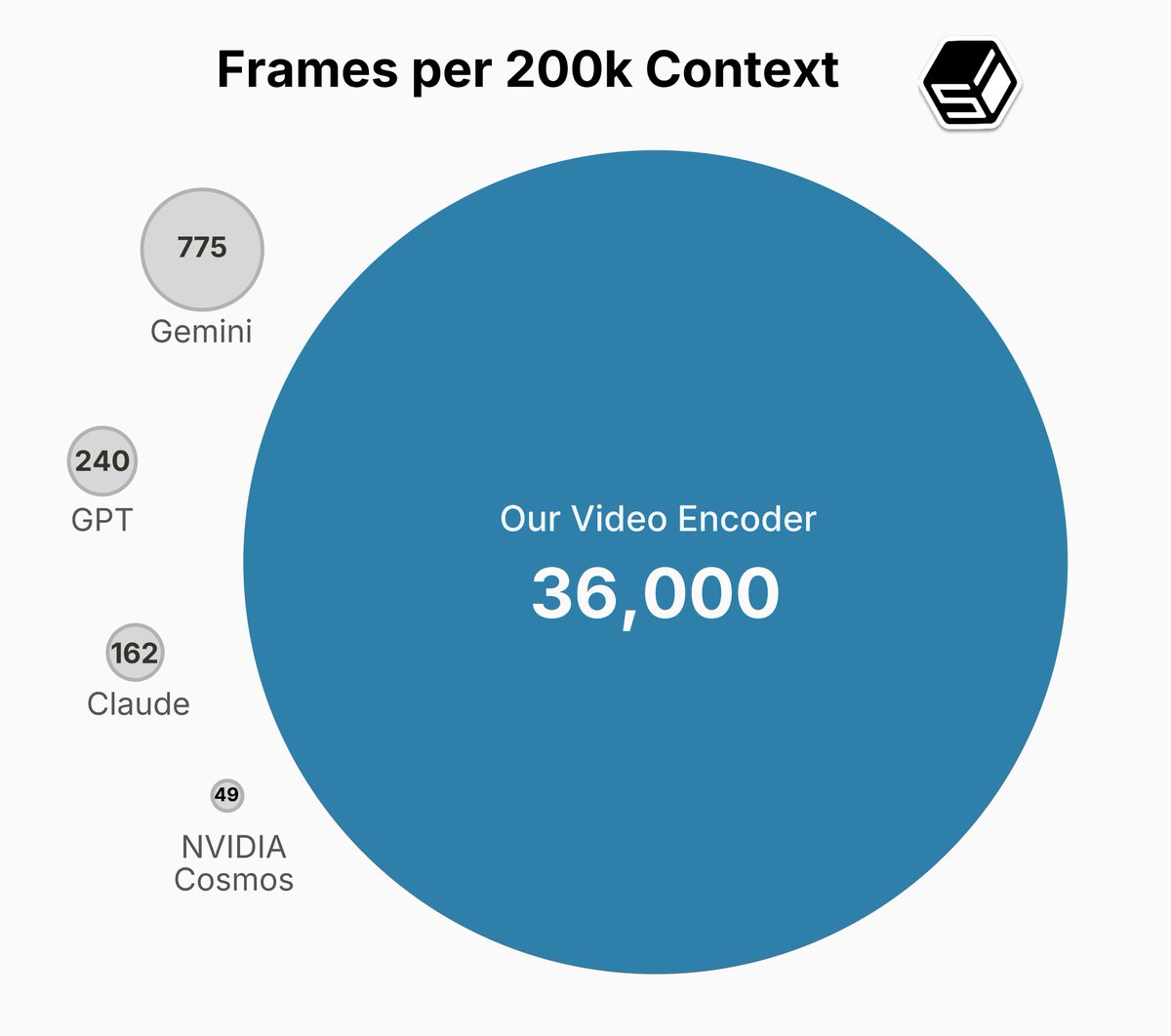

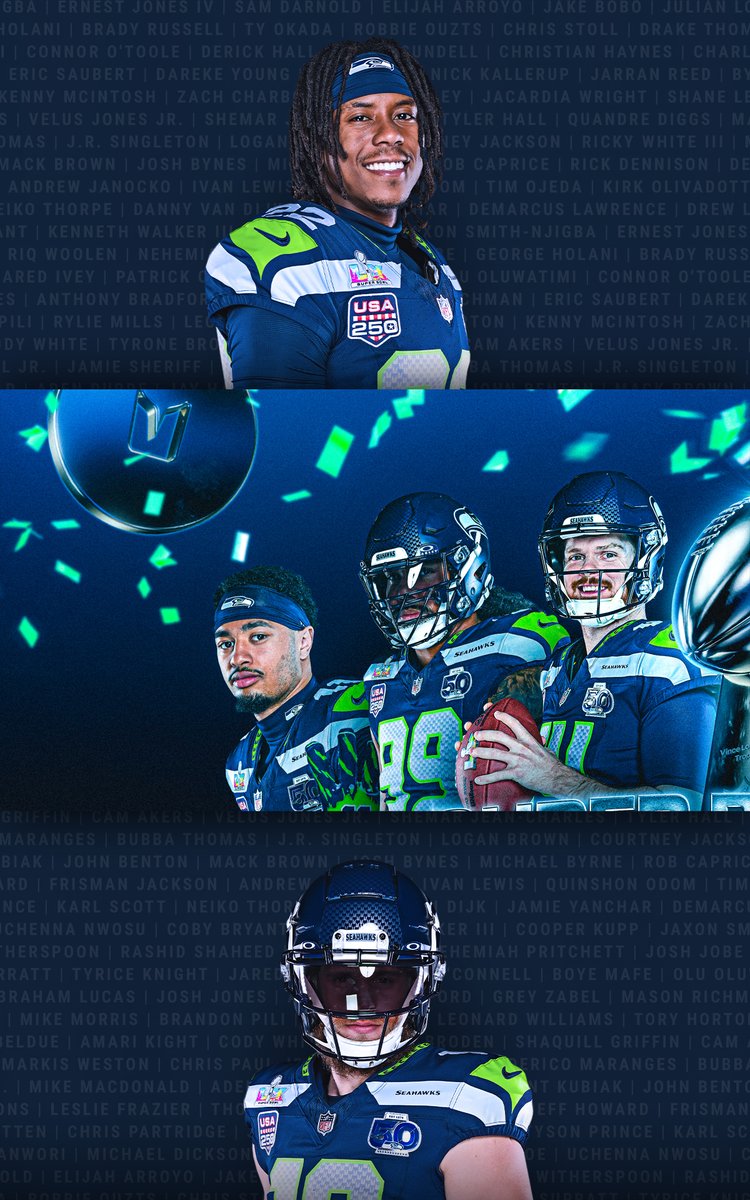

True computer use is fully general. FDM-1 uses arrow keys on a computer to steer a car in San Francisco with less than 1 hour of fine-tuning data. The action policy is critical: tuning FDM-1 to drive gets much higher accuracy than tuning just the video encoder on the same data.

Computer use models shouldn't learn from screenshots. We built a new foundation model that learns from video like humans do. FDM-1 can construct a gear in Blender, find software bugs, and even drive a real car through San Francisco using arrow keys.

Introducing EVMbench—a new benchmark that measures how well AI agents can detect, exploit, and patch high-severity smart contract vulnerabilities. openai.com/index/introduc…

A conventional narrative you might come across is that AI is too far along for a new, research-focused startup to outcompete and outexecute the incumbents of AI. This is exactly the sentiment I listened to often when OpenAI started ("how could the few of you possibly compete with Google?") and 1) it was very wrong, and then 2) it was very wrong again with a whole other round of startups who are now challenging OpenAI in turn, and imo it still continues to be wrong today. Scaling and locally improving what works will continue to create incredible advances, but with so much progress unlocked so quickly, with so much dust thrown up in the air in the process, and with still a large gap between frontier LLMs and the example proof of the magic of a mind running on 20 watts, the probability of research breakthroughs that yield closer to 10X improvements (instead of 10%) imo still feels very high - plenty high to continue to bet on and look for. The tricky part ofc is creating the conditions where such breakthroughs may be discovered. I think such an environment comes together rarely, but @bfspector & @amspector100 are brilliant, with (rare) full-stack understanding of LLMs top (math/algorithms) to bottom (megakernels/related), they have a great eye for talent and I think will be able to build something very special. Congrats on the launch and I look forward to what you come up with!

Announcing Flapping Airplanes! We’ve raised $180M from GV, Sequoia, and Index to assemble a new guard in AI: one that imagines a world where models can think at human level without ingesting half the internet.

Introducing Ai2 Open Coding Agents—starting with SERA, our first-ever coding models. Fast, accessible agents (8B–32B) that adapt to any repo, including private codebases. Train a powerful specialized agent for as little as ~$400, & it works with Claude Code out of the box. 🧵