devansh

1.4K posts

@devanshpandey

building aligned general learners. cofounder @si_pbc

Yep, Composer 2 started from an open-source base! We will do full pretraining in the future. Only ~1/4 of the compute spent on the final model came from the base, the rest is from our training. This is why evals are very different. And yes, we are following the license through our inference partner terms.

Open weights isn't open training. @AddieF38654 on our team wrote up her experience trying to post-train a 1T-parameter MoE model using the existing open-source infra. Let's find out how many monkey-patches it takes to post-train an open-weights model. A thread🧵

We’ve developed a memory system for our models that provides both short-term visual memory and long-term semantic memory. Our approach allows us to train robots to perform long and complex tasks, like cleaning up a kitchen or preparing a grilled cheese sandwich from scratch 👇

text is the universal interface

Computer use models shouldn't learn from screenshots. We built a new foundation model that learns from video like humans do. FDM-1 can construct a gear in Blender, find software bugs, and even drive a real car through San Francisco using arrow keys.

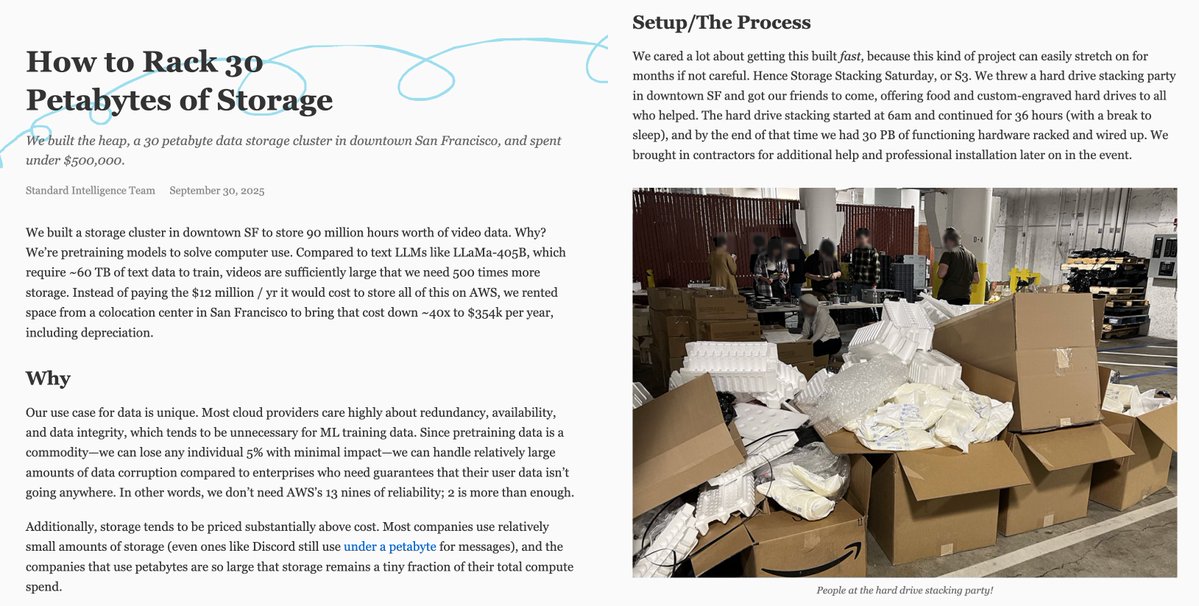

our S3 bill was way too expensive… so we built a 30 PB storage cluster in the heart of SF.